Try this SIMPLE trick when scraping product data

Summary

TLDRThis video script outlines a method for extracting product data from websites using the schema.org product schema. The tutorial demonstrates how to create a Python script with libraries like urllib3, selectox, and Rich to scrape structured data from script tags on various e-commerce sites. The script simplifies the process of pulling product information, potentially saving time and effort in data collection for analysis or database management.

Takeaways

- 🌐 Websites from different companies use the schema.org product schema to structure product data on their pages.

- 🔍 The script tag with 'application/ld+json' contains structured data that can be scraped from these websites.

- 📚 Schema.org provides documentation on how to organize data using their product schema, including common data points.

- 🤖 A single scraping script can be used to pull data from multiple sites that use the same schema format, simplifying the process.

- 🛠️ The script involves creating a Python environment, installing necessary packages, and using libraries like urllib3, selectox, and Rich.

- 🔧 A function called 'new_client' is used to create an HTTP client with a proxy and custom headers for making requests.

- 📈 The 'schema_get_html' function fetches HTML content from a given URL using the HTTP client, decoding the response data.

- 📝 The 'pass_html' function extracts script tags containing 'application/ld+json' from the HTML and converts the data into a dictionary.

- 🔎 The 'run' function orchestrates the process by creating a client, fetching HTML, and extracting schema data from the page.

- 📊 The resulting data can be used for various purposes, such as populating a database or creating a spreadsheet, leveraging structured data across multiple sites.

Q & A

What do the two websites mentioned in the script have in common?

-Both websites use the schema.org product schema to organize product data on their pages.

What is the purpose of the schema.org product schema?

-The schema.org product schema is used to organize product data in a structured way across different websites, making it easier to scrape and analyze the data.

Why is using a common schema like schema.org beneficial for data scraping?

-Using a common schema like schema.org allows a single scraping script to pull data from multiple websites, as the data is organized in the same way, saving time and effort.

What programming language is being used to create the scraping script in the video?

-The programming language used in the video is Python.

What libraries are being installed and used in the Python script?

-The libraries being installed and used are urllib3, selectolax, and Rich.

What is the purpose of creating a new client function in the script?

-The new client function creates an HTTP client that can be used to make requests to web servers, with specific headers and proxy settings.

How does the script identify and extract the product data from the HTML?

-The script identifies the product data by searching for script tags with type 'application/ld+json' and extracting the JSON-LD content.

What is JSON-LD and why is it used?

-JSON-LD (JavaScript Object Notation for Linked Data) is a standard for embedding linked data in web pages. It is used because it provides a structured and machine-readable way to represent data.

How does the script handle multiple URLs for scraping?

-The script uses a list of URLs and iterates through them, scraping data from each URL in turn.

What can be done with the data once it is scraped and stored in a dictionary?

-The scraped data can be further processed, such as being put into a spreadsheet using pandas, or certain parts of the information can be extracted and stored in a database.

Outlines

Esta sección está disponible solo para usuarios con suscripción. Por favor, mejora tu plan para acceder a esta parte.

Mejorar ahoraMindmap

Esta sección está disponible solo para usuarios con suscripción. Por favor, mejora tu plan para acceder a esta parte.

Mejorar ahoraKeywords

Esta sección está disponible solo para usuarios con suscripción. Por favor, mejora tu plan para acceder a esta parte.

Mejorar ahoraHighlights

Esta sección está disponible solo para usuarios con suscripción. Por favor, mejora tu plan para acceder a esta parte.

Mejorar ahoraTranscripts

Esta sección está disponible solo para usuarios con suscripción. Por favor, mejora tu plan para acceder a esta parte.

Mejorar ahoraVer Más Videos Relacionados

🛑 Stop Making WordPress Affiliate Websites (DO THIS INSTEAD)

Web scraper dasar (single page)

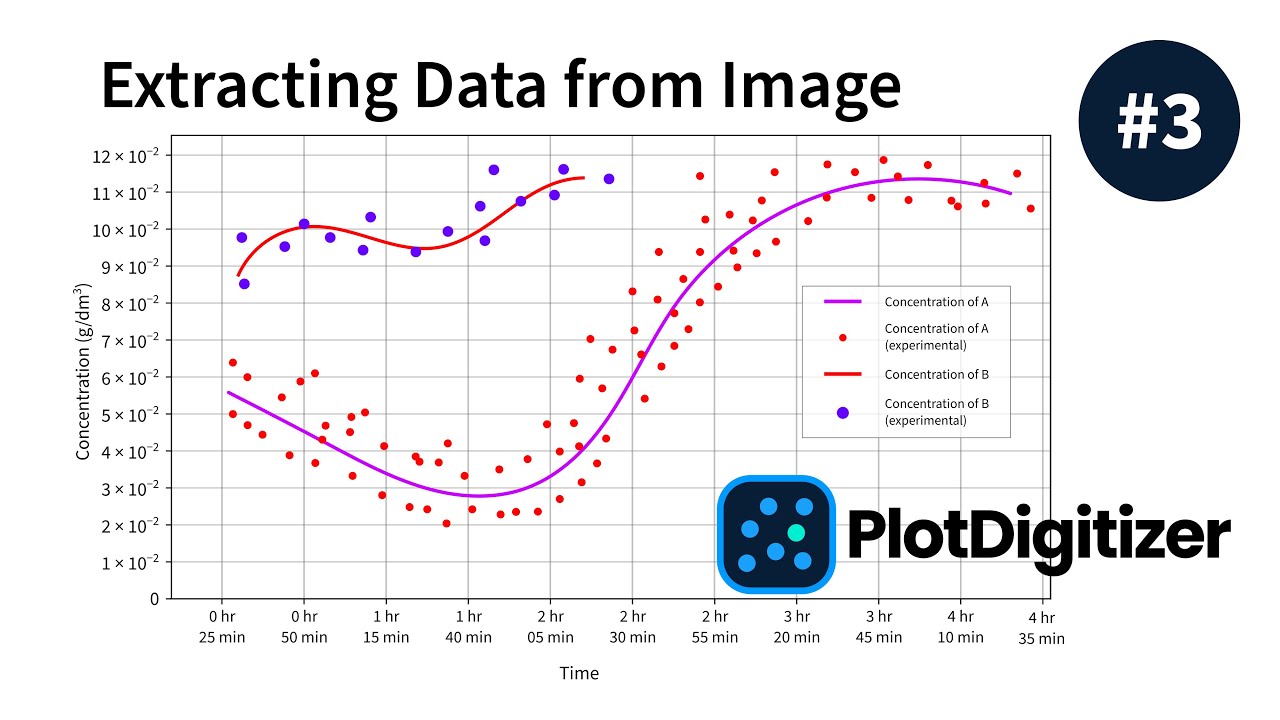

PlotDigitizer - How to Automatically Extract Data from Graph Image (#3)

Praktikum Kimia Kayu Materi 3 Alkohol Benzen

ChatGPT - OpenAI API w Excelu (za darmo)

Materi Fitokimia - Praktikum Ekstraksi Metode Maserasi

5.0 / 5 (0 votes)