Lecture 05: Basis and Dimension

Summary

TLDR: This lecture covers the fundamental concepts of basis and dimension in vector spaces. These concepts underpin machine learning techniques such as dimension reduction and dictionary learning. It explains the two criteria for a set of vectors to form a basis: the vectors must be linearly independent, and they must span the space. The lecture also distinguishes between finite- and infinite-dimensional spaces and gives examples of bases in several spaces. It defines the dimension of a space as the number of vectors in a basis. Finally, it highlights key results on basis and dimension, including when a set of vectors must be linearly dependent and how a linearly independent set can be extended to a basis.

Takeaways

- 📚 The lecture discusses the concepts of basis and dimension in vector spaces, which are fundamental to many machine learning algorithms.

- 🔍 A basis for a vector space is a set of linearly independent vectors that span the entire space. Every vector in the space can then be written as a linear combination of the basis vectors, and in exactly one way.

- 📏 Vector spaces are either finite-dimensional or infinite-dimensional, depending on whether they have a basis with finitely many vectors.

- 🧩 The dimension of a vector space is the number of vectors in its basis, which is a key characteristic of the space.

- 📉 In machine learning, basis and dimension concepts are crucial for algorithms such as dimension reduction and dictionary learning.

- 🌐 The standard bases for R2, R3, and RN consist of the vectors e1, ..., eN, where ei has a 1 in position i and 0 in every other position. For example, the standard basis for R2 is {(1, 0), (0, 1)}.

- 🔢 The dimensions of these spaces follow from counting basis vectors. R2 has dimension 2, RN has dimension N, and the space of all 2x2 real matrices has dimension 4 (one basis matrix per entry).

- 🔑 If a set contains more vectors than the dimension of a finite-dimensional vector space, it is linearly dependent. A basis is therefore a largest possible linearly independent set.

- 📈 Any linearly independent subset of a finite-dimensional vector space can be extended to a basis of that space, which leaves flexibility in choosing a basis.

- 📊 For finite-dimensional vector spaces, all bases contain the same number of vectors, which equals the space's dimension.

- 📘 The lecture provides examples of finding the basis and dimension of subspaces in R3 and R4, illustrating the process of determining these properties.
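The lecture's own R3 and R4 examples are not reproduced in this summary. As a hedged illustration of the same process, the sketch below uses NumPy and a hypothetical subspace S = {(x, y, z) in R3 : x + y + z = 0}. A basis of S is a basis of the null space of the coefficient matrix, and the dimension is 3 minus the number of independent equations. The helper `null_space_basis` is our own naming, not something from the lecture.

```python
import numpy as np

def null_space_basis(A, tol=1e-10):
    """Orthonormal basis (as columns) of the null space of A, computed with the SVD."""
    A = np.atleast_2d(np.asarray(A, dtype=float))
    _, s, vt = np.linalg.svd(A)          # full_matrices=True by default, so vt is square
    rank = int(np.sum(s > tol))
    return vt[rank:].T                   # rows of vt past the rank span the null space

# Hypothetical example: S = {(x, y, z) in R^3 : x + y + z = 0}
A = np.array([[1.0, 1.0, 1.0]])
N = null_space_basis(A)
print("dim S =", N.shape[1])             # 3 unknowns - 1 independent equation = 2

# A hand-picked basis of the same S: both vectors satisfy x + y + z = 0
B = np.array([[-1.0, 1.0, 0.0],
              [-1.0, 0.0, 1.0]]).T
print(np.allclose(A @ B, 0), np.linalg.matrix_rank(B))   # True 2
```

The SVD basis and the hand-picked basis contain different vectors, but both have two elements, as expected when every basis of a finite-dimensional space has the same size.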

Q & A

What is the main topic of the lecture?

-The main topic of the lecture is the concept of basis and dimension in vector spaces, which are important in the context of machine learning algorithms.

Why are basis and dimension important in machine learning?

-Basis and dimension matter in machine learning because input data is often represented as a linear combination of a small set of vectors. This idea is central to algorithms such as dimension reduction and dictionary learning.
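To make the dimension-reduction idea concrete, here is a minimal NumPy sketch under stated assumptions. The data is synthetic and hypothetical: 100 points in R3 that all lie in a 2-dimensional subspace. The right singular vectors of the data matrix give a basis of that subspace, so each point can be stored as 2 coefficients instead of 3 and rebuilt as a linear combination of the basis. This is a plain SVD of the uncentered data, not a full PCA pipeline, which would center the data first.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical data: 100 points in R^3 that all lie in the plane spanned by u1 and u2
u1 = np.array([1.0, 0.0, 1.0])
u2 = np.array([0.0, 1.0, 1.0])
coeffs = rng.normal(size=(100, 2))
X = coeffs @ np.vstack([u1, u2])          # shape (100, 3)

# Right singular vectors with nonzero singular values form an orthonormal basis of the data's span
_, s, vt = np.linalg.svd(X, full_matrices=False)
k = int(np.sum(s > 1e-10 * s[0]))         # numerical rank = dimension of the span
basis = vt[:k]                            # shape (k, 3); rows are the basis vectors

Z = X @ basis.T                           # k coordinates per point instead of 3
X_rec = Z @ basis                         # rebuild each point as a combination of the basis
print(k, np.allclose(X, X_rec))           # 2 True
```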

What is the definition of a basis in vector spaces?

-A basis for a vector space V is a set of linearly independent vectors in V that spans the vector space V. This means every vector in the space can be written as a linear combination of the basis vectors.
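For V = Rn, both conditions can be checked numerically. The sketch below is a hedged illustration, and the helper names `is_basis` and `coordinates` are hypothetical. A set of exactly n vectors in Rn whose matrix has rank n is linearly independent, and it then automatically spans Rn. The unique coefficients of a vector in that basis come from solving a linear system.

```python
import numpy as np

def is_basis(vectors):
    """True if the given vectors form a basis of R^n, where n is their common length."""
    M = np.column_stack(vectors).astype(float)
    n, k = M.shape
    # A basis of R^n needs exactly n vectors; n independent vectors automatically span R^n
    return k == n and np.linalg.matrix_rank(M) == n

def coordinates(vectors, v):
    """Coefficients c with v = c[0]*vectors[0] + ... + c[n-1]*vectors[n-1]."""
    M = np.column_stack(vectors).astype(float)
    return np.linalg.solve(M, np.asarray(v, dtype=float))

B = [np.array([1, 1]), np.array([1, -1])]
print(is_basis(B))               # True
print(coordinates(B, [3, 1]))    # [2. 1.] since (3, 1) = 2*(1, 1) + 1*(1, -1)
```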

What are the two main properties a set of vectors must have to be considered a basis?

-A set of vectors must be linearly independent and must span the entire vector space to be considered a basis.

What is the difference between finite and infinite dimensional vector spaces?

-A finite-dimensional vector space has a basis containing finitely many vectors. An infinite-dimensional vector space has no finite basis, because no finite set of vectors spans it.

Can you provide an example of a basis for R2 over the real numbers?

-An example of a basis for R2 over the real numbers is the set of vectors {(1, 0), (0, 1)}.
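The same pattern gives the standard basis of RN. The short NumPy sketch below uses N = 3 and an arbitrarily chosen vector v, both picked only for illustration. It checks that v equals v1 e1 + v2 e2 + v3 e3, so the coordinates of a vector in the standard basis are just its entries.

```python
import numpy as np

n = 3
E = np.eye(n)                     # row i is the standard basis vector e_{i+1}
v = np.array([4.0, -2.0, 7.0])    # arbitrary example vector

# Any v in R^n is the linear combination v_1 e_1 + ... + v_n e_n of the standard basis
combo = sum(v[i] * E[i] for i in range(n))
print(np.array_equal(combo, v))   # True
```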

What is the dimension of a vector space?

-The dimension of a vector space is the number of elements in a basis of the vector space.

What is the relationship between the dimension of a vector space and the number of vectors in its basis?

-The dimension of a vector space is equal to the number of vectors in any of its bases.
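For example, the space of 2x2 real matrices has dimension 4. The NumPy sketch below is an illustration rather than the lecture's own derivation. It builds the four matrices with a single 1 entry, maps each one to a vector in R4 by flattening (a linear isomorphism, so rank is preserved), and confirms that the four vectors are independent and span every 2x2 matrix.

```python
import numpy as np

# The four "matrix units" E_ij: a 1 in position (i, j) and 0 elsewhere
units = []
for i in range(2):
    for j in range(2):
        E = np.zeros((2, 2))
        E[i, j] = 1.0
        units.append(E)

# Flatten each 2x2 matrix into a vector in R^4 and check linear independence
M = np.column_stack([E.ravel() for E in units])
print(len(units), np.linalg.matrix_rank(M))   # 4 4

# Any 2x2 matrix A equals a*E11 + b*E12 + c*E21 + d*E22, where (a, b, c, d) are its entries
A = np.array([[1.0, 2.0], [3.0, 4.0]])
rebuilt = sum(A[i, j] * units[2 * i + j] for i in range(2) for j in range(2))
print(np.array_equal(rebuilt, A))             # True
```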

How can you determine if a set of vectors is linearly dependent?

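-A set of vectors v1, ..., vk is linearly dependent if some linear combination c1v1 + ... + ckvk equals the zero vector, with not all of the ci equal to zero. A quick sufficient test: in a vector space of dimension n, any set of more than n vectors is linearly dependent.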
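Here is a small NumPy check of both ideas, using three hypothetical vectors in R2. Because 3 exceeds dim R2 = 2, the rank test must report dependence. A nontrivial combination that gives zero can then be read off the null space, which here is the last right singular vector.

```python
import numpy as np

# Three vectors in R^2 as columns: more vectors than dim R^2 = 2, so they must be dependent
V = np.column_stack([[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]])   # shape (2, 3)
print(np.linalg.matrix_rank(V) < V.shape[1])                # True: rank 2 < 3 vectors

# A nonzero c with V @ c = 0 spans the null space (last right singular vector, since rank is 2)
_, s, vt = np.linalg.svd(V)
c = vt[-1]
print(np.allclose(V @ c, 0))                                # True
# c is proportional to (1, 1, -1), i.e. v1 + v2 - v3 = 0
```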

Can a linearly independent set of vectors be extended to form a basis of a vector space?

-Yes. If a linearly independent set does not span the whole (finite-dimensional) vector space, repeatedly add a vector that is not in the span of the current set. Each addition keeps the set linearly independent, and the process ends with a basis.
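One way to carry out this extension in Rn is a greedy pass over the standard basis vectors. The sketch below is hypothetical (`extend_to_basis` is our own name) and assumes the input vectors are already linearly independent. A candidate is kept only when it raises the rank, meaning it is not in the current span. Because e1, ..., en span Rn, the final set spans Rn.

```python
import numpy as np

def extend_to_basis(vectors, n, tol=1e-10):
    """Greedily extend linearly independent vectors in R^n to a basis of R^n.

    Assumes `vectors` is already linearly independent. Candidates are the standard
    basis vectors e_1..e_n; one is kept only if it is not in the span of the current set.
    """
    basis = [np.asarray(v, dtype=float) for v in vectors]
    for e in np.eye(n):
        if len(basis) == n:
            break
        # The rank rises exactly when e is outside the span of the current set
        if np.linalg.matrix_rank(np.column_stack(basis + [e]), tol=tol) > len(basis):
            basis.append(e)
    return basis

B = extend_to_basis([[1, 1, 0]], 3)
print(np.column_stack(B))
# Keeps (1, 1, 0), adds e1 and e3; e2 is skipped because e2 = (1, 1, 0) - e1
```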

How is the dimension of the intersection of two subspaces related to the dimensions of the subspaces themselves?

-For finite-dimensional subspaces S1 and S2, the dimensions satisfy dim(S1) + dim(S2) = dim(S1 + S2) + dim(S1 ∩ S2). Here S1 + S2 is the set of all sums of a vector from S1 and a vector from S2.
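The formula can be checked numerically on a concrete pair of subspaces. The NumPy sketch below uses hypothetical subspaces of R3: S1 is the xy-plane and S2 is the yz-plane, so their intersection is the y-axis. Here dim(S1 + S2) is the rank of all basis columns taken together. The intersection is computed directly from solutions of A x = B y, found in the null space of [A, -B].

```python
import numpy as np

def dim_span(M, tol=1e-10):
    """Dimension of the column space of M."""
    return int(np.linalg.matrix_rank(M, tol=tol))

# Hypothetical subspaces of R^3, given by basis columns:
# S1 = xy-plane (z = 0), S2 = yz-plane (x = 0); their intersection is the y-axis
A = np.array([[1.0, 0.0], [0.0, 1.0], [0.0, 0.0]])   # basis of S1: e1, e2
B = np.array([[0.0, 0.0], [1.0, 0.0], [0.0, 1.0]])   # basis of S2: e2, e3

dim_sum = dim_span(np.hstack([A, B]))   # S1 + S2 is spanned by all the columns together

# S1 ∩ S2 consists of the vectors A x = B y, i.e. (x, y) in the null space of [A, -B]
_, s, vt = np.linalg.svd(np.hstack([A, -B]))
null = vt[int(np.sum(s > 1e-10)):].T    # null-space basis; each column stacks (x, y)
inter = A @ null[:A.shape[1]]           # map each x back to the vector A x in R^3
dim_inter = dim_span(inter)

print(dim_span(A) + dim_span(B), dim_sum + dim_inter)   # 4 4
```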

Outlines

Esta sección está disponible solo para usuarios con suscripción. Por favor, mejora tu plan para acceder a esta parte.

Mejorar ahoraMindmap

Esta sección está disponible solo para usuarios con suscripción. Por favor, mejora tu plan para acceder a esta parte.

Mejorar ahoraKeywords

Esta sección está disponible solo para usuarios con suscripción. Por favor, mejora tu plan para acceder a esta parte.

Mejorar ahoraHighlights

Esta sección está disponible solo para usuarios con suscripción. Por favor, mejora tu plan para acceder a esta parte.

Mejorar ahoraTranscripts

Esta sección está disponible solo para usuarios con suscripción. Por favor, mejora tu plan para acceder a esta parte.

Mejorar ahoraVer Más Videos Relacionados

Basis dan Dimensi - Aljabar Linear

ALE Ruang Vektor 06a

Ruang Baris, Ruang Kolom dan Rank dari Sebuah Matriks (Bagian Pertama)

Types of Machine Learning for Beginners | Types of Machine learning in Hindi | Types of ML in Depth

Deep Learning: In a Nutshell

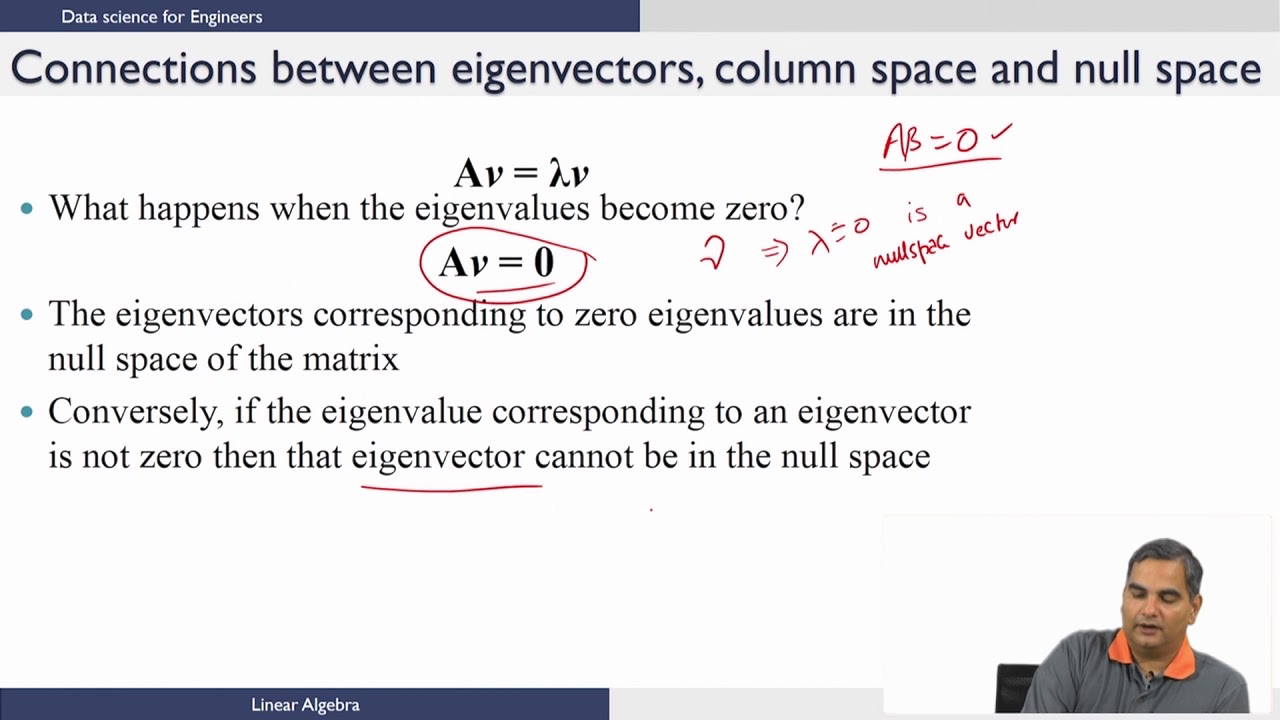

Linear Algebra - Distance,Hyperplanes and Halfspaces,Eigenvalues,Eigenvectors ( Continued 3 )

5.0 / 5 (0 votes)