Pre-Built Evaluators | LangSmith Evaluations - Part 5

Summary

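TL;DR: This video walks through creating a dataset and evaluating a model against it. The dataset holds question-answer pairs, and a built-in LangChain evaluator uses them to grade the model's accuracy. The focus is the CoT QA evaluator, which applies Chain of Thought reasoning to judge whether the model's generated answer agrees with the reference answer. The process gives a clear view of both dataset quality and model performance, and the next video explores it in more depth.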

Takeaways

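- 📚 Recaps why datasets matter and what makes them interesting.

- 🛠️ Shows how to create a dataset, starting from manually curated question-answer pairs.

- 🔍 Covers how to evaluate against a dataset: an evaluator compares the model's output with the reference answer for each input and computes a score.

- 📈 The space of evaluators is broad and includes both custom and built-in evaluators.

- 🏆 Highlights the CoT (Chain of Thought) evaluator, which reasons step by step when grading an answer.

- 🔧 The dataset is named "dbrx" and was created with the SDK.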

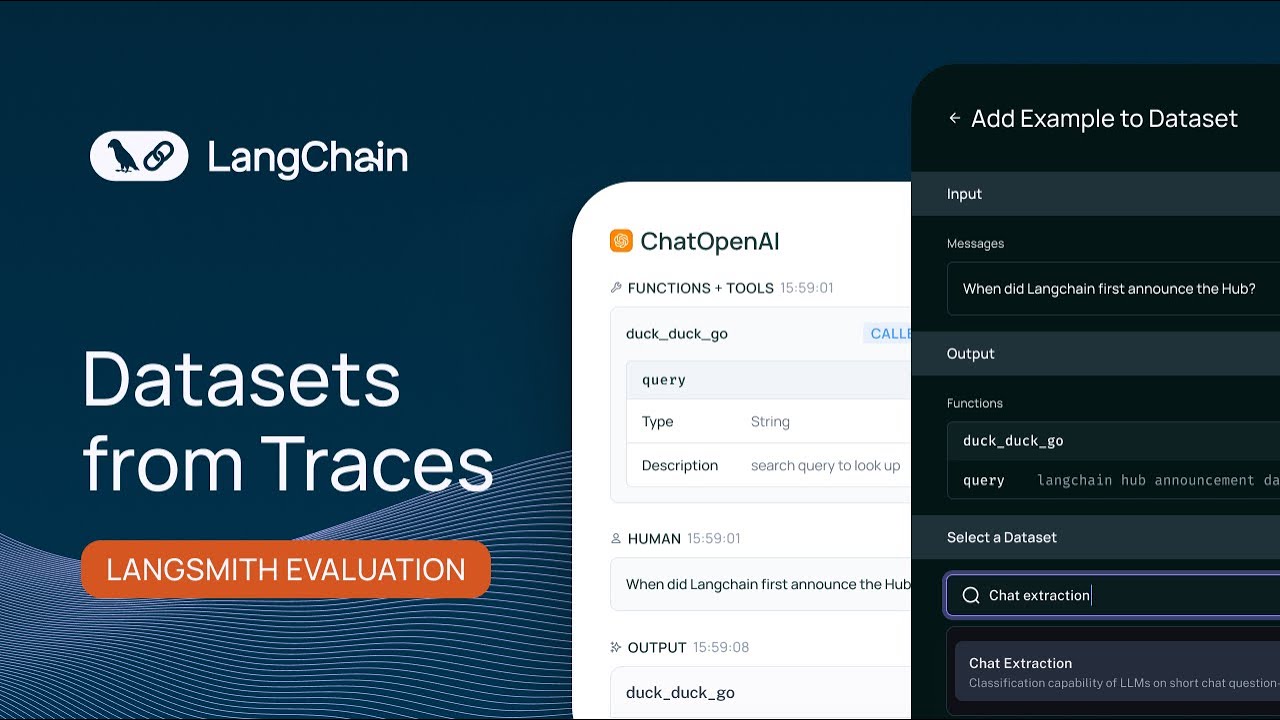

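- 🔎 Also suggests building datasets from user logs, so that the dataset comes from real user data.

- 📊 Evaluation results are shown alongside metrics such as latency (P50 and P99).

- 👀 Each test result can be inspected in detail: the input question, the reference answer, the model's output, and the evaluator's score.

- 🛠️ Explains how to configure and use the evaluator, with an example setup that uses OpenAI.

- 📝 The script covers the whole flow: defining the dataset, choosing an evaluator, running the test, and analyzing the results.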

Q & A

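Does the series explain why evals are important and interesting?

-The first video explains why evals are important and what makes them interesting.

What are the main LangSmith primitives used to create a dataset?

-The second video explains the main LangSmith primitives used to create datasets.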

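Does the video explain how to create a dataset?

-Yes. It shows how to create a dataset from manually curated question-answer pairs.

How was the dbrx dataset created?

-The dbrx dataset was created by manually writing a set of question-answer pairs based on a specific blog post and adding them to the dataset.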
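As a rough illustration of that step, the sketch below turns hand-written pairs into parallel input and output records and, optionally, uploads them with the LangSmith SDK. The placeholder pairs and the `question` / `answer` field names are assumptions made for this example, not the contents of the actual dbrx dataset, and `upload` needs the `langsmith` package plus an API key.

```python
from typing import Dict, List, Tuple

# Hypothetical placeholders: the real dataset used hand-written
# questions and answers about a blog post.
QA_PAIRS: List[Tuple[str, str]] = [
    ("<question 1 about the blog post>", "<reference answer 1>"),
    ("<question 2 about the blog post>", "<reference answer 2>"),
]


def to_examples(
    pairs: List[Tuple[str, str]],
) -> Tuple[List[Dict[str, str]], List[Dict[str, str]]]:
    """Split (question, answer) pairs into parallel input and output records."""
    inputs = [{"question": question} for question, _ in pairs]
    outputs = [{"answer": answer} for _, answer in pairs]
    return inputs, outputs


def upload(dataset_name: str, pairs: List[Tuple[str, str]]):
    """Create the dataset in LangSmith and add one example per pair."""
    # Imported lazily so to_examples() can be tried without the SDK installed.
    from langsmith import Client

    client = Client()  # reads the API key from the environment
    dataset = client.create_dataset(dataset_name=dataset_name)
    inputs, outputs = to_examples(pairs)
    client.create_examples(inputs=inputs, outputs=outputs, dataset_id=dataset.id)
    return dataset


inputs, outputs = to_examples(QA_PAIRS)
```

Calling `upload("dbrx", QA_PAIRS)` would then create the dataset under the name mentioned in the video, assuming credentials are configured.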

Does it explain how to create a dataset from user logs?

-Yes. It describes taking real user questions from the logs and turning them into a dataset with ground-truth answers.
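One way to picture the log-to-dataset step is the minimal sketch below. It assumes the logs are available as a plain list of question strings and that a reviewer supplies the ground-truth answers afterwards; the `Example` class and both helper functions are hypothetical names for illustration, not part of any SDK.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Example:
    question: str
    answer: Optional[str] = None  # ground truth, filled in later by a reviewer


def examples_from_logs(logged_questions: List[str]) -> List[Example]:
    """Drop empty and repeated questions, keeping first-seen order."""
    seen = set()
    examples = []
    for raw in logged_questions:
        question = raw.strip()
        key = question.lower()  # treat case and whitespace variants as duplicates
        if question and key not in seen:
            seen.add(key)
            examples.append(Example(question=question))
    return examples


def add_ground_truth(example: Example, answer: str) -> Example:
    """Return a copy of the example with its reference answer attached."""
    return Example(question=example.question, answer=answer)
```

Deduplicating before labeling keeps the manual work focused on distinct questions; whether that is appropriate depends on how the logs are collected.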

Once a dataset exists, how is the LLM evaluated?

-The dataset's reference answers and the LLM's outputs are passed to an evaluator, which returns an assessment and a score.
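The flow described here can be sketched in plain Python, independent of any SDK. The example below assumes each dataset record has `question` and `answer` fields and swaps in a simple exact-match evaluator in place of the LLM-based one from the video; `run_eval`, `exact_match`, and `mean_score` are illustrative names.

```python
from typing import Callable, Dict, List


def exact_match(prediction: str, reference: str) -> float:
    """Score 1.0 when the answers match after trimming and lowercasing."""
    return 1.0 if prediction.strip().lower() == reference.strip().lower() else 0.0


def run_eval(
    examples: List[Dict[str, str]],
    model: Callable[[str], str],
    evaluator: Callable[[str, str], float],
) -> List[Dict[str, object]]:
    """Run the model on every question and score it against the reference."""
    results = []
    for example in examples:
        prediction = model(example["question"])
        score = evaluator(prediction, example["answer"])
        results.append(
            {
                "question": example["question"],
                "prediction": prediction,
                "reference": example["answer"],
                "score": score,
            }
        )
    return results


def mean_score(results: List[Dict[str, object]]) -> float:
    """Average the per-example scores; an empty run scores 0.0."""
    if not results:
        return 0.0
    return sum(float(r["score"]) for r in results) / len(results)
```

Because the evaluator is just a function of prediction and reference, an LLM-based grader can be dropped in without changing the loop.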

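How broad is the world of evaluators?

-It spans custom evaluators, built-in evaluators, and approaches for cases with or without labels.

What characterizes the CoT QA evaluator?

-The CoT QA evaluator uses Chain of Thought reasoning to compare the LLM's generated answer with the ground-truth answer and judge whether they match.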
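To make the idea concrete, here is a minimal sketch of a Chain of Thought grader. The prompt wording and the `GRADE:` verdict format are assumptions for illustration and are not the built-in evaluator's actual prompt; `llm` is any callable that maps a prompt string to a response string, so a real model client or a stub can be passed in.

```python
import re
from typing import Callable, Optional

# Hypothetical grading prompt: asks for reasoning first, verdict last.
GRADER_TEMPLATE = (
    "You are grading a student answer against a reference answer.\n"
    "Question: {question}\n"
    "Reference answer: {reference}\n"
    "Student answer: {prediction}\n"
    "Explain your reasoning step by step, then finish with a line of the form\n"
    "GRADE: CORRECT or GRADE: INCORRECT"
)


def build_prompt(question: str, reference: str, prediction: str) -> str:
    """Fill the grading template with one example."""
    return GRADER_TEMPLATE.format(
        question=question, reference=reference, prediction=prediction
    )


def parse_grade(response: str) -> Optional[int]:
    """Return 1 for CORRECT, 0 for INCORRECT, None if no verdict is found."""
    verdicts = re.findall(
        r"GRADE:\s*(CORRECT|INCORRECT)", response, flags=re.IGNORECASE
    )
    if not verdicts:
        return None
    # Use the last verdict so reasoning that mentions "GRADE:" earlier is ignored.
    return 1 if verdicts[-1].upper() == "CORRECT" else 0


def cot_grade(
    question: str, reference: str, prediction: str, llm: Callable[[str], str]
) -> Optional[int]:
    """Ask the grader model for step-by-step reasoning and extract its verdict."""
    return parse_grade(llm(build_prompt(question, reference, prediction)))
```

Asking for the reasoning before the verdict is the design choice that makes this a Chain of Thought grader: the explanation is available for inspection next to the score.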

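Does the video show how to actually evaluate an LLM with the CoT QA evaluator?

-Yes. It runs the CoT QA evaluator over the dataset's question-answer pairs and walks through the process and results in detail.

Does it explain how to check evaluation results?

-Yes. It shows how to review the dataset's test results along with metrics such as latency and error rate.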
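For readers unfamiliar with these metrics, the sketch below computes P50 and P99 latency and an error rate from a list of run records. It uses the nearest-rank percentile definition and assumes each record has `latency_s` and `error` fields; both are choices made for this example, and the LangSmith UI may compute its figures differently.

```python
import math
from typing import Dict, List


def percentile(values: List[float], pct: float) -> float:
    """Nearest-rank percentile: the smallest value covering pct% of the data."""
    if not values:
        raise ValueError("percentile() needs at least one value")
    ordered = sorted(values)
    rank = math.ceil(pct / 100 * len(ordered))
    return ordered[max(rank, 1) - 1]


def summarize(runs: List[Dict[str, object]]) -> Dict[str, float]:
    """Aggregate latency percentiles and the share of runs that errored."""
    latencies = [float(run["latency_s"]) for run in runs]
    errors = sum(1 for run in runs if run["error"])
    return {
        "p50": percentile(latencies, 50),
        "p99": percentile(latencies, 99),
        "error_rate": errors / len(runs),
    }
```

P50 describes the typical run, while P99 surfaces the slowest tail, which is why the two are usually read together.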

Does it explain how to dig deeper into the evaluation results?

-Yes. Clicking an individual test result opens its details, which show how the evaluator behaved.

Related Videos

Custom Evaluators | LangSmith Evaluations - Part 6

Why Evals Matter | LangSmith Evaluations - Part 1

Manually Curated Datasets | LangSmith Evaluations - Part 3

Eval Comparisons | LangSmith Evaluations - Part 7

Datasets From Traces | LangSmith Evaluations - Part 4

Evaluation Primitives | LangSmith Evaluations - Part 2
