FSE100 - Analysis

Summary

TLDR: This lecture covers engineering analysis, which it defines as a set of techniques for evaluating and understanding how a system behaves. It distinguishes model verification, which ensures that a model meets the engineers' specifications, from model validation, which confirms that it aligns with customer expectations. The talk also surveys analysis tools, including simulation, retrospective studies, and statistical methods, and emphasizes their role in iterative engineering design, where analysis is used to refine models until they meet requirements.

Takeaways

- 🔍 Analysis in engineering design involves breaking down an object or system to understand its basic building blocks and their relationships.

- 📏 Model verification ensures that the model behaves as the engineers expect, focusing on its internal mechanisms and specifications.

- 🌐 Model validation checks whether the model meets the customer's expectations and behaves as the real system is supposed to, considering its external inputs and outputs.

- 🔧 The iterative aspect of analysis is crucial for identifying and fixing issues in models, leading to improved and more accurate representations of the system.

- 🛠️ Various tools and techniques are available for analysis, including simulation, retrospective studies, statistical methods, and mathematical principles like calculus and linear algebra.

- 💡 The goal of analysis is to gain insights into the system's behavior, evaluate the model's quality, and ensure it meets both internal and external expectations.

- 🔄 The iterative process of engineering design involves cycling between modeling and analysis to produce a valid and verified solution that meets all requirements.

- 🧑‍💻 In software engineering, prototyping is a common technique for creating a simplified version of the product for customer feedback and internal evaluation.

- 🔬 Statistical techniques are particularly useful for handling variability, a common challenge in engineering that can cause issues if not properly managed.

- 🔗 There's a close relationship between modeling techniques and analysis, with the latter often informing and improving the former throughout the engineering process.

Q & A

What is the primary focus of the lecture?

-The lecture primarily focuses on analysis from the perspective of engineering design: how engineering defines analysis, how model verification differs from model validation, and which tools are available for analysis.

How does engineering define analysis?

-In engineering, analysis is defined as a set of techniques, or a collection of tools, used to evaluate a model or system. The resulting understanding of how the model or system behaves lets engineers interact better with its environment and make informed changes to the model.

What is the difference between model verification and model validation?

-Model verification is the process of ensuring that the model behaves as expected according to the internal specifications set by the engineers, while model validation is about ensuring that the model conforms to what the system is supposed to be doing and meets the customer's expectations.
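
To make the distinction concrete, here is a minimal Python sketch built on a hypothetical stopping-distance model (reaction distance plus braking distance). The hand-calculated reference case, the monotonicity check, and the 60 m customer requirement are illustrative assumptions, not material from the lecture. Verification compares the code with the engineers' own specification; validation compares the modeled system with what the customer needs.

```python
"""Verification vs. validation on a hypothetical stopping-distance model."""


def stopping_distance(speed_mps: float, reaction_s: float = 1.0,
                      decel_mps2: float = 7.0) -> float:
    """Reaction distance plus braking distance: v * t_r + v**2 / (2 * a)."""
    return speed_mps * reaction_s + speed_mps ** 2 / (2 * decel_mps2)


def verify() -> bool:
    """Verification: does the model match the engineers' internal spec?"""
    # Hypothetical hand-calculated case: 14 m/s -> 14 + 196 / 14 = 28 m.
    hand_calc_ok = abs(stopping_distance(14.0) - 28.0) < 1e-9
    # Hypothetical internal property: distance must grow with speed.
    distances = [stopping_distance(v) for v in (5.0, 10.0, 15.0, 20.0)]
    monotonic_ok = all(a < b for a, b in zip(distances, distances[1:]))
    return hand_calc_ok and monotonic_ok


def validate(speed_mps: float = 25.0, max_distance_m: float = 60.0) -> bool:
    """Validation: does the modeled system meet the customer's requirement?"""
    # Hypothetical requirement: stop within 60 m from 25 m/s.
    return stopping_distance(speed_mps) <= max_distance_m


print("verified:", verify())     # True: the code matches its own spec
print("validated:", validate())  # False: about 69.6 m exceeds the 60 m limit
```

Here the model passes verification but fails validation, which is the situation where the design loop sends the team back to modeling.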

Why is it important to perform both model verification and model validation?

-Both model verification and model validation are important because they ensure that the model not only meets the internal specifications (verification) but also aligns with the external expectations and requirements of the customers (validation).

What is the iterative aspect of analysis within engineering design?

-The iterative aspect of analysis within engineering design refers to the continuous process of improving the model by identifying issues through verification and validation, making improvements, and then re-analyzing the updated model to ensure it is valid and verified.
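
The loop described above can be sketched in Python. In this hypothetical example, the "model" is a one-parameter linear model y = k * x, "analysis" is the mean squared error against reference data, and "refinement" is one gradient-descent step on k; the loop repeats until the error falls below a tolerance. The reference data and every name here are illustrative assumptions.

```python
"""Model -> analyze -> refine -> re-analyze, as a hypothetical calibration loop."""


def model(k: float, x: float) -> float:
    """One-parameter linear model (hypothetical)."""
    return k * x


def analyze(k: float, xs: list[float], ys: list[float]) -> float:
    """Analysis step: mean squared error against reference data."""
    return sum((model(k, x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def refine(k: float, xs: list[float], ys: list[float], lr: float = 0.05) -> float:
    """Refinement step: one gradient-descent update of k."""
    grad = 2 * sum(x * (model(k, x) - y) for x, y in zip(xs, ys)) / len(xs)
    return k - lr * grad


def iterate(xs, ys, k=0.0, tol=1e-6, max_iter=1000):
    """Repeat analysis and refinement until the error meets the tolerance."""
    for i in range(max_iter):
        err = analyze(k, xs, ys)
        if err < tol:
            return k, err, i
        k = refine(k, xs, ys)
    return k, analyze(k, xs, ys), max_iter


# Hypothetical reference data that the model should reproduce (y = 2x).
XS = [1.0, 2.0, 3.0, 4.0]
YS = [2.0, 4.0, 6.0, 8.0]
k, err, iterations = iterate(XS, YS)
print(f"k={k:.4f} after {iterations} iterations (MSE={err:.2e})")
```

Each pass through the loop is one cycle of analysis followed by refinement, and the loop only exits once the re-analyzed model meets the tolerance.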

What are some common tools used for analysis in engineering?

-Common tools for analysis in engineering include simulation, retrospective studies, statistical techniques, basic mathematical principles like calculus and linear algebra, and software prototyping.

How does simulation contribute to the analysis process?

-Simulation contributes to the analysis process by letting engineers build a simulated environment in which to test 'what if' scenarios and evaluate whether the model performs as the engineers expect and as the customers expect.
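
As a hedged illustration of 'what if' testing, the Python sketch below simulates a hypothetical water tank with Euler integration of dh/dt = (q_in - c * h) / A and replays it under three inflow scenarios to see which ones overflow. The tank parameters, units, capacity, and scenarios are assumptions chosen for the example.

```python
"""'What if' scenarios on a hypothetical tank simulation (Euler integration)."""


def simulate_tank(inflow: float, outflow_coeff: float = 0.5, area: float = 2.0,
                  capacity: float = 2.5, dt: float = 0.1,
                  t_end: float = 60.0) -> tuple[float, bool]:
    """Integrate dh/dt = (inflow - outflow_coeff * h) / area from an empty tank.

    Units (assumed): inflow in m^3/s, outflow_coeff in m^2/s, area in m^2,
    level h and capacity in m. Returns (peak level, whether it overflowed).
    """
    h = 0.0
    peak = 0.0
    for _ in range(int(round(t_end / dt))):
        h += dt * (inflow - outflow_coeff * h) / area  # explicit Euler step
        peak = max(peak, h)
        if h > capacity:
            return peak, True  # stop the run as soon as the tank overflows
    return peak, False


# Each scenario asks: "what if the inflow were q?"
for q in (0.5, 1.0, 1.5):
    peak, overflow = simulate_tank(q)
    print(f"inflow={q} m^3/s: peak level={peak:.2f} m, overflow={overflow}")
```

In this model the level settles toward q_in / c, so the 1.5 m^3/s scenario (steady level 3 m) overflows the 2.5 m tank while the other two do not.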

What is the purpose of using retrospective studies in analysis?

-The purpose of using retrospective studies in analysis is to collect past data, apply it to the current model, and evaluate how well the model performs relative to historical outcomes, with the goal of improving the system.
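
A retrospective study can be sketched as replaying historical inputs through the current model and scoring the predictions against what actually happened. In the minimal Python example below, the historical records, the load model, and the choice of metrics (mean absolute error and mean signed error, or bias) are all hypothetical.

```python
"""Retrospective study: score a model against (hypothetical) historical records."""
from statistics import mean

# Hypothetical past records: (daily visitors, observed server load in percent).
HISTORY = [(1000, 22.0), (2000, 41.0), (3000, 63.0), (4000, 80.0)]


def predicted_load(visitors: int) -> float:
    """Current model under review (hypothetical): 2 % load per 100 visitors."""
    return 0.02 * visitors


def retrospective(history, model) -> dict[str, float]:
    """Apply the model to past inputs and compare with past outcomes."""
    errors = [model(x) - observed for x, observed in history]
    return {
        "mean_abs_error": mean([abs(e) for e in errors]),
        "bias": mean(errors),  # negative means the model under-predicts
    }


print(retrospective(HISTORY, predicted_load))
```

With these illustrative records the bias comes out at -1.5 percentage points, the kind of systematic offset a retrospective study is meant to surface before the model is improved.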

Why are statistical techniques important in engineering analysis?

-Statistical techniques are important in engineering analysis because they help identify, classify, and control variability, which can cause problems in engineering. By understanding and controlling variability, engineers can improve their models.
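
One common way to quantify variability is the process capability index Cpk = min(USL - mean, mean - LSL) / (3 * sigma), which compares the spread of measured parts with the tolerance band. The lecture does not name this index; it is offered here as one standard statistical technique, and the shaft-diameter measurements and the 10.00 ± 0.05 mm tolerance below are hypothetical.

```python
"""Quantifying variability with the process capability index Cpk."""
from statistics import mean, stdev


def process_capability(samples, lower_spec: float, upper_spec: float):
    """Return (mean, sample standard deviation, Cpk) for the samples."""
    mu = mean(samples)
    sigma = stdev(samples)  # sample (n - 1) standard deviation
    cpk = min(upper_spec - mu, mu - lower_spec) / (3 * sigma)
    return mu, sigma, cpk


# Hypothetical shaft diameters in mm; the spec is 10.00 +/- 0.05 mm.
DIAMETERS = [10.02, 9.98, 10.01, 9.99, 10.00, 10.03, 9.97, 10.00]
mu, sigma, cpk = process_capability(DIAMETERS, lower_spec=9.95, upper_spec=10.05)
print(f"mean={mu:.3f} mm, sigma={sigma:.3f} mm, Cpk={cpk:.2f}")
```

A Cpk below 1 means the nearest specification limit lies less than three standard deviations from the mean, so with this illustrative data the variability would need to be reduced.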

How do basic mathematical principles aid in engineering analysis?

-Basic mathematical principles such as calculus, differential equations, linear algebra, and real analysis aid in engineering analysis by providing the necessary tools to find optimal points, solve systems of equations, and perform other mathematical operations that are crucial for model evaluation and improvement.
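
The two uses named above, finding an optimal point and solving a system of equations, can each be shown in a few lines of Python. The open-top-box problem (cut x-by-x squares from the corners of a sheet of side L, so V(x) = x(L - 2x)^2 and dV/dx = (L - 2x)(L - 6x)) and the 2 x 2 system are textbook examples chosen for illustration, not taken from the lecture; the solver is plain Gaussian elimination with partial pivoting.

```python
"""Calculus (an optimal point) and linear algebra (a linear system) in miniature."""


def box_volume(x: float, side: float) -> float:
    """Volume of an open box folded after cutting x-by-x squares from each corner."""
    return x * (side - 2 * x) ** 2


def optimal_cut(side: float) -> float:
    """dV/dx = (side - 2x)(side - 6x) = 0; x = side / 6 is the interior maximum."""
    return side / 6


def solve_linear(a: list[list[float]], b: list[float]) -> list[float]:
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [bi] for row, bi in zip(a, b)]  # augmented matrix (copy)
    for col in range(n):
        # Swap in the row with the largest pivot for numerical stability.
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("matrix is singular")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):  # eliminate entries below the pivot
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):  # back substitution
        s = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - s) / m[r][r]
    return x


side = 30.0  # assumed sheet side in cm
x_best = optimal_cut(side)
# Cross-check the calculus result with a coarse grid search over 0 < x < side / 2.
grid = [i * side / 2000 for i in range(1, 1000)]
x_grid = max(grid, key=lambda x: box_volume(x, side))
print(f"cut={x_best} cm, volume={box_volume(x_best, side)} cm^3, grid={x_grid:.3f}")

# 2x + y = 5 and x + 3y = 10
print(solve_linear([[2.0, 1.0], [1.0, 3.0]], [5.0, 10.0]))  # [1.0, 3.0]
```

The grid search is only a sanity check on the derivative; in practice the analytic optimum and a library solver would usually be preferred.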

What is the role of software prototyping in the analysis process?

-Software prototyping plays a role in the analysis process by allowing engineers to create a simplified version of the product that can be tested and evaluated by customers. This helps in gaining insights into whether the prototype meets expectations and functions as intended, guiding further improvements.

Why is the iterative nature of engineering important for analysis?

-The iterative nature of engineering is important for analysis because it allows for continuous improvement of the model through cycles of modeling, analysis, identification of issues, and refinement. This iterative process drives the final solution toward being free of known defects, meeting customer requirements, and being both valid and verified.

Outlines

Cette section est réservée aux utilisateurs payants. Améliorez votre compte pour accéder à cette section.

Améliorer maintenantMindmap

Cette section est réservée aux utilisateurs payants. Améliorez votre compte pour accéder à cette section.

Améliorer maintenantKeywords

Cette section est réservée aux utilisateurs payants. Améliorez votre compte pour accéder à cette section.

Améliorer maintenantHighlights

Cette section est réservée aux utilisateurs payants. Améliorez votre compte pour accéder à cette section.

Améliorer maintenantTranscripts

Cette section est réservée aux utilisateurs payants. Améliorez votre compte pour accéder à cette section.

Améliorer maintenantVoir Plus de Vidéos Connexes

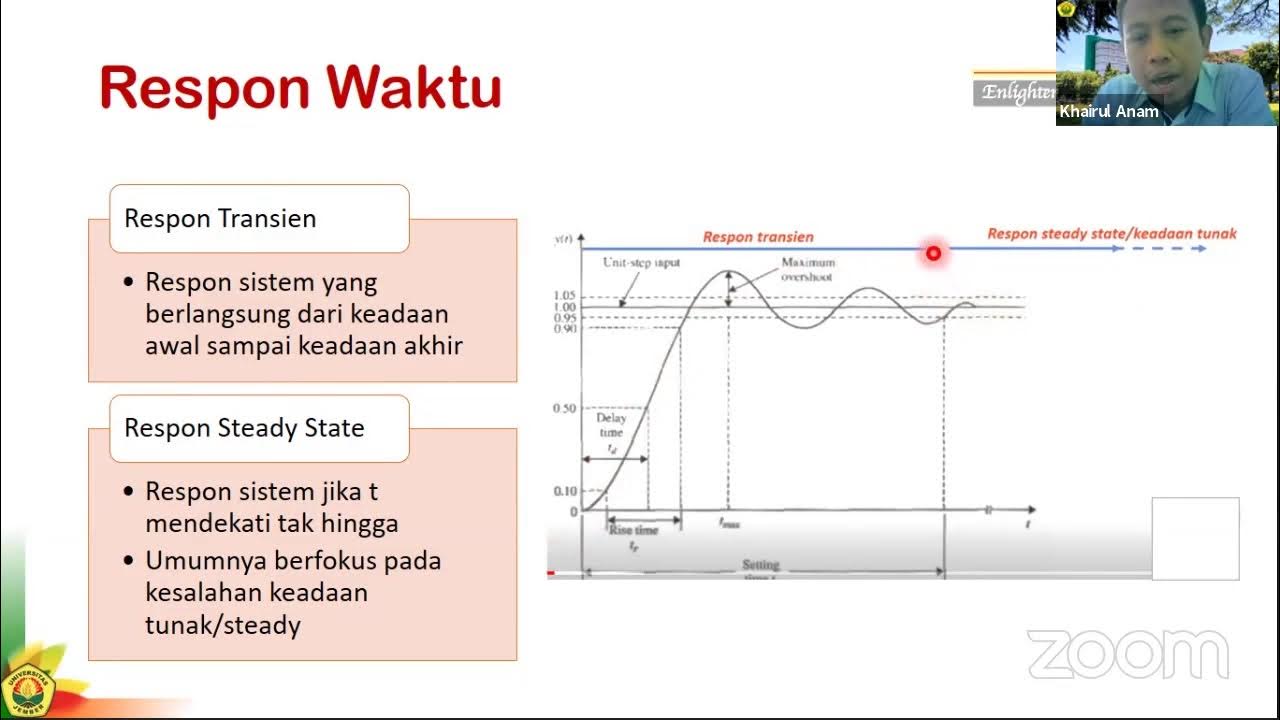

Sistem Kontrol #3a: Analisis Repon Sistem - Pendahuluan

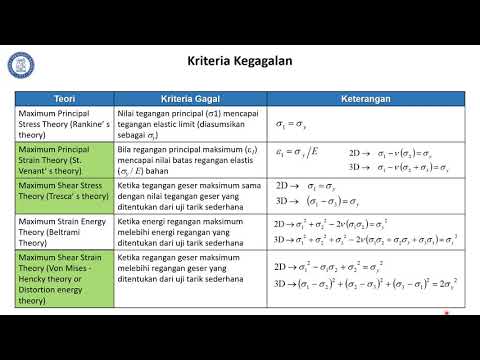

Analisis Kegagalan Logam: Modul 1 Segmen 2 (Kriteria Kegagalan)

Analisis Kekurangan Sistem: Sasaran, Batasan, dan Masalah Sistem

10 ESTABLISHING REQUIREMENTS

13 PSK Kontroller Pid Tuning Gain 1

Topik 01 Sistem Hukum Indonesia | Pengertian Sistem dan Hukum

5.0 / 5 (0 votes)