Stanford CS25: V1 I Transformers United: DL Models that have revolutionized NLP, CV, RL

Summary

TLDR: This video introduces the CS 25 course 'Transformers United,' taught at Stanford in 2021, covering the groundbreaking Transformer deep learning models. The instructors, Advay, Chetanya, and Div, explain the context and timeline leading to the development of Transformers, their self-attention mechanism, and their applications beyond natural language processing. They delve into the advantages, such as efficient handling of long sequences and parallelization, as well as drawbacks like computational complexity. The video also explores notable Transformer-based models like GPT and BERT, highlighting their novel approaches and widespread impact across various fields.

Takeaways

- 👉 Transformers are a type of deep learning model that revolutionized fields like natural language processing, computer vision, and reinforcement learning.

- 🌟 The key idea behind Transformers is the self-attention mechanism, which the 2017 paper "Attention Is All You Need" by Vaswani et al. made the core of the architecture.

- 🧠 Transformers can effectively encode long sequences and context, overcoming limitations of previous models like RNNs and LSTMs.

- 🔄 Transformers use an encoder-decoder architecture with self-attention layers, feed-forward layers, and residual connections.

- 📐 Multi-head self-attention allows Transformers to learn multiple representations of the input data.

- 🚀 Major advantages of Transformers include constant path length between positions and parallelization for faster training.

- ⚠️ A key disadvantage is that self-attention takes time quadratic in the sequence length, which has led to efforts like Big Bird and Reformer to make it more efficient.

- 🌐 GPT (Generative Pre-trained Transformer) and BERT (Bidirectional Encoder Representations from Transformers) are two influential Transformer-based models.

- 🎯 GPT excels at language modeling and in-context learning, while BERT uses masked language modeling and next sentence prediction for pretraining.

- 🌉 Transformers have enabled various applications beyond NLP, such as protein folding, reinforcement learning, and image generation.

Q & A

What is the focus of the CS 25 class at Stanford?

-The CS 25 class at Stanford focuses on Transformers, a particular type of deep learning model that has revolutionized multiple fields like natural language processing, computer vision, and reinforcement learning.

Who are the instructors for the CS 25 class?

-The instructors for the CS 25 class are Advay, a software engineer at Applied Intuition, Chetanya, an ML engineer at Moveworks, and Div, a PhD student at Stanford.

What are the three main goals of the CS 25 class?

-The three main goals of the CS 25 class are: 1) to understand how Transformers work, 2) to learn how Transformers are being applied beyond natural language processing, and 3) to spark new ideas and research directions.

What was the key idea behind Transformers, and when was it introduced?

-The key idea behind Transformers is the self-attention mechanism, which became the core of the architecture in 2017 with the paper "Attention Is All You Need" by Vaswani et al.
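
As a rough illustration of the attention mechanism named above, the sketch below computes scaled dot-product self-attention over plain Python lists. The function names `softmax` and `self_attention` are illustrative, not taken from the lecture. The queries, keys, and values are passed in directly here; in a real Transformer they would come from learned linear projections of the input, and a tensor library would replace the loops.

```python
import math

def softmax(xs):
    """Turn a list of scores into weights that sum to 1."""
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(queries, keys, values):
    """Scaled dot-product attention over lists of vectors."""
    d_k = len(keys[0])
    outputs = []
    for q in queries:
        # Score this query against every key, scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
                  for k in keys]
        weights = softmax(scores)
        # The output is the attention-weighted average of the value vectors.
        out = [sum(w * v[j] for w, v in zip(weights, values))
               for j in range(len(values[0]))]
        outputs.append(out)
    return outputs
```

Each output vector is a weighted average of all value vectors, so every position can draw on every other position in a single step.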

What are the advantages of Transformers over older models like RNNs and LSTMs?

-The main advantages of Transformers are: 1) There is a constant path length between any two positions in a sequence, which makes long-range dependencies easier to learn than in RNNs and LSTMs, and 2) They lend themselves well to parallelization, making training faster.

What is the main disadvantage of Transformers?

-The main disadvantage of Transformers is that self-attention takes time quadratic in the sequence length n, O(n^2), which does not scale well for very long sequences.
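
To make the quadratic cost concrete: self-attention compares every position with every other, so a sequence of length n yields n × n scores per attention head. The helper names below are hypothetical, and the default of 4 bytes per score is an assumption (32-bit floats).

```python
def attention_score_count(seq_len):
    """Number of query-key scores one attention head computes."""
    return seq_len * seq_len

def attention_matrix_bytes(seq_len, bytes_per_score=4):
    """Memory for one head's score matrix (float32 assumed by default)."""
    return attention_score_count(seq_len) * bytes_per_score

# Doubling the sequence length quadruples both quantities.
for n in (1_000, 2_000, 4_000):
    print(n, attention_score_count(n), attention_matrix_bytes(n))
```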

What is the purpose of the Multi-head Self-Attention mechanism in Transformers?

-The Multi-head Self-Attention mechanism in Transformers enables the model to learn multiple representations of the input data, with each head potentially learning different semantics or aspects of the input.
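
The sketch below shows only the bookkeeping behind multiple heads, under the assumption that the model dimension is split evenly across heads: each vector is cut into per-head slices, and the per-head results are concatenated back. The names `split_heads` and `merge_heads` are illustrative. In a real model each head would also apply its own learned projections and run attention on its slice before the merge.

```python
def split_heads(x, num_heads):
    """Split each d_model-sized vector into num_heads equal slices.

    x is a list of vectors (one per position); the result is indexed
    as heads[h][position] -> slice of size d_model // num_heads.
    """
    d_model = len(x[0])
    if d_model % num_heads != 0:
        raise ValueError("d_model must be divisible by num_heads")
    d_head = d_model // num_heads
    return [[vec[h * d_head:(h + 1) * d_head] for vec in x]
            for h in range(num_heads)]

def merge_heads(heads):
    """Concatenate per-head slices back into one vector per position."""
    seq_len = len(heads[0])
    return [[value for head in heads for value in head[t]]
            for t in range(seq_len)]
```

Because each head sees a different slice (and, in practice, different projections), the heads are free to attend to different aspects of the input.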

What is the purpose of the positional embedding layer in Transformers?

-The positional embedding layer in Transformers introduces the notion of ordering, which is essential for understanding language since most languages are read in a particular order (e.g., left to right).
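
The summary does not say which positional scheme the lecture covers. Assuming the fixed sinusoidal encoding from the Vaswani et al. paper (learned embeddings are another common option), a minimal sketch looks like this; the function name `sinusoidal_positions` is illustrative.

```python
import math

def sinusoidal_positions(seq_len, d_model):
    """Return a seq_len x d_model table of sinusoidal position encodings."""
    table = []
    for pos in range(seq_len):
        row = []
        for i in range(d_model):
            # Dimensions 2k and 2k+1 share one frequency: 1 / 10000^(2k / d_model).
            angle = pos / (10000 ** ((i - i % 2) / d_model))
            # Even dimensions use sine, odd dimensions use cosine.
            row.append(math.sin(angle) if i % 2 == 0 else math.cos(angle))
        table.append(row)
    return table
```

Each row is added to the token embedding at that position, so two identical tokens at different positions end up with different input vectors.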

What is the purpose of the masking component in the decoder part of Transformers?

-The masking component in the decoder part of Transformers prevents the decoder from attending to future positions. Seeing those positions during training would leak the very tokens the decoder is supposed to predict.
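
One common way to implement this masking, sketched here under the assumption of a square score matrix (row i is the query position, column j the key position), is to set every score for a future position to negative infinity before the softmax, so those positions receive zero weight. The function name `causal_attention_weights` is illustrative.

```python
import math

def causal_attention_weights(scores):
    """Apply a causal mask and softmax to an n x n score matrix."""
    weights = []
    for i, row in enumerate(scores):
        # Keep scores for positions j <= i; block the future with -inf.
        masked = [s if j <= i else -math.inf for j, s in enumerate(row)]
        m = max(masked)  # finite, because position i itself is never masked
        exps = [math.exp(s - m) for s in masked]  # exp(-inf) evaluates to 0.0
        total = sum(exps)
        weights.append([e / total for e in exps])
    return weights
```

With all-zero scores, the first position attends only to itself, the second splits its weight over the first two positions, and so on.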

What are some examples of applications or models that have been built using Transformers?

-Some examples of applications or models that have been built using Transformers include GPT-3 (for language generation and in-context learning), BERT (for bidirectional language representation and various NLP tasks), and AlphaFold (for protein folding).

Outlines

Esta sección está disponible solo para usuarios con suscripción. Por favor, mejora tu plan para acceder a esta parte.

Mejorar ahoraMindmap

Esta sección está disponible solo para usuarios con suscripción. Por favor, mejora tu plan para acceder a esta parte.

Mejorar ahoraKeywords

Esta sección está disponible solo para usuarios con suscripción. Por favor, mejora tu plan para acceder a esta parte.

Mejorar ahoraHighlights

Esta sección está disponible solo para usuarios con suscripción. Por favor, mejora tu plan para acceder a esta parte.

Mejorar ahoraTranscripts

Esta sección está disponible solo para usuarios con suscripción. Por favor, mejora tu plan para acceder a esta parte.

Mejorar ahoraVer Más Videos Relacionados

What are Transformers (Machine Learning Model)?

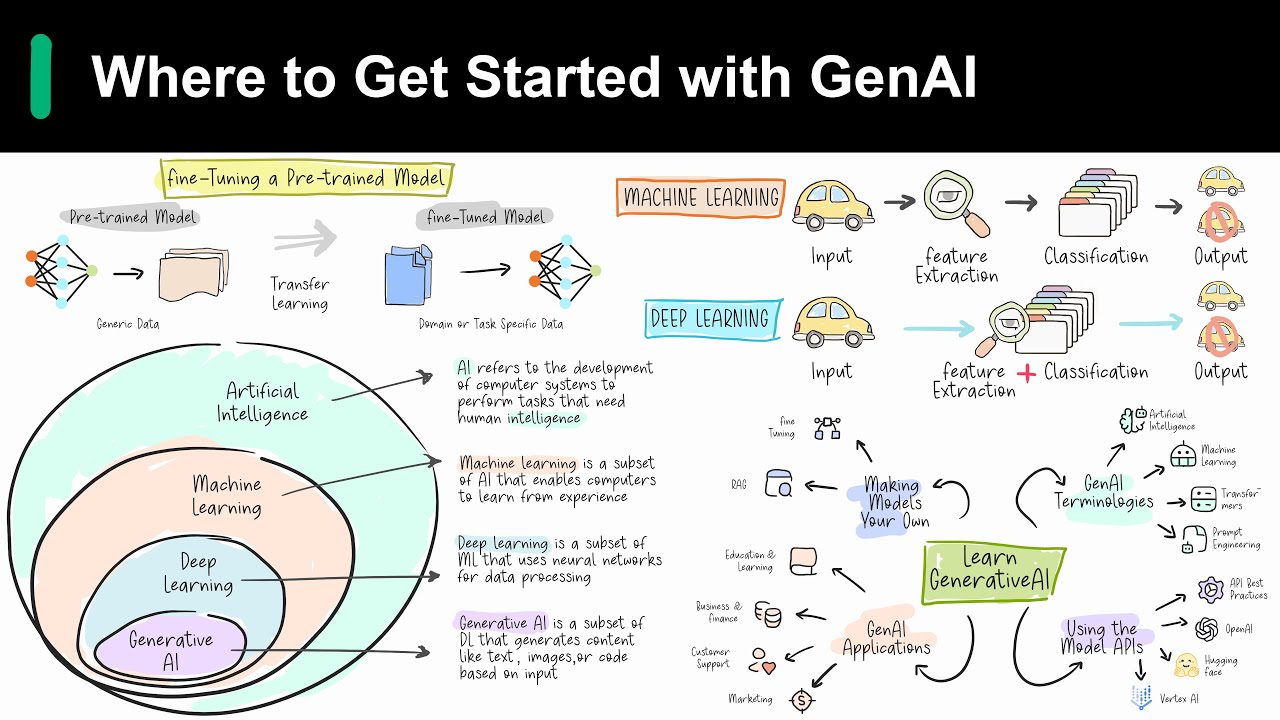

Introduction to Generative AI

Introduction to Generative AI

IPA Kelas 9 Semester 2 : Kemagnetan (part 5 : Transformator)

Machine Learning Course curriculum | Machine Learning - Roadmap

Introduction to Generative AI and LLMs [Pt 1] | Generative AI for Beginners

5.0 / 5 (0 votes)