Local OpenClaw & Ollama in 27 minutes

Summary

TLDR: This video walks through setting up OpenClaw locally, step by step, from a beginner configuration to more advanced ones. It highlights the benefits of running OpenClaw locally: avoiding high cloud API costs, keeping data private, and staying available when cloud services go down. It covers selecting the right LLM, setting up networking, and spreading the workload across multiple devices such as a Jetson Nano and a gaming laptop. The video ultimately shows that local AI offers both flexibility and control but requires significant setup and technical knowledge.

Takeaways

- 😀 Running OpenClaw locally provides privacy, as all data stays within your network and isn't sent to external servers.

- 💸 Running OpenClaw against cloud APIs consumes a lot of tokens, which can make it expensive.

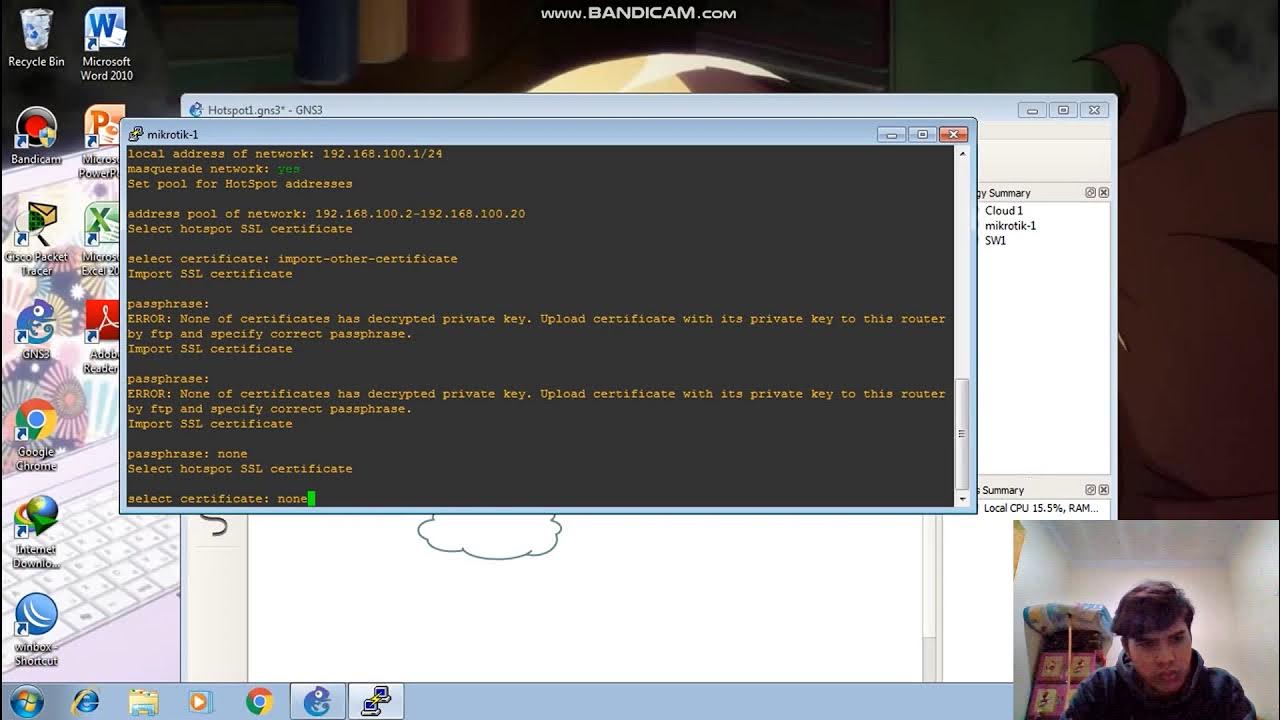

- 🔧 Setting up local AI models for OpenClaw can be complex and requires an understanding of networking and hardware configuration.

- 💡 A local setup gives you full control over your AI models, but you also become the system administrator, managing issues and updates yourself.

- 🔍 You can choose between three types of OpenClaw setup: fully local, fully cloud-based, or a hybrid that combines both.

- 💻 A powerful computer such as a Mac Mini might be the best choice for running OpenClaw, but cheaper hardware such as an old gaming laptop or a Raspberry Pi also works.

- 🧑‍💻 Setting up a local OpenClaw system involves installing the Ollama software, downloading AI models, and configuring the system for the best performance.

- 🛠️ OpenClaw can run on a single machine, but more advanced setups split the work across devices, such as a Jetson Nano for OpenClaw and a gaming laptop for AI model processing.

- ⚡ Choosing the right LLM is key to performance. Balance speed against quality; a model like Qwen 3.5 9B is a good option for home setups.

- 🌐 Tailscale can be used for secure remote access to your local OpenClaw setup, so you can use the system from outside your home network.

- 🛑 Setting a static IP address is essential so that your devices can reliably find and talk to each other when OpenClaw runs over a network.
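To make the static-IP point concrete, here is a minimal sketch of how a second machine might find the model server at a fixed LAN address. It assumes Ollama's default port (11434) and its `/api/tags` endpoint for listing installed models; the address `192.168.1.50` in the comment is a made-up example, not a value from the video.

```python
import json
import urllib.request

OLLAMA_PORT = 11434  # Ollama's default port


def ollama_base_url(host: str, port: int = OLLAMA_PORT) -> str:
    """Build the base URL for an Ollama server at a fixed address."""
    return f"http://{host}:{port}"


def model_names(tags_response: dict) -> list:
    """Extract model names from a parsed /api/tags response."""
    return [m["name"] for m in tags_response.get("models", [])]


def list_models(host: str, timeout: float = 5.0) -> list:
    """Ask the server which models it has; raises if the host is unreachable."""
    url = f"{ollama_base_url(host)}/api/tags"
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        return model_names(json.load(resp))


# Example call (needs a reachable server at that address):
# list_models("192.168.1.50")
```

If the server's address changes after a router reboot, the URL above silently stops working, which is why a static address matters. Note that Ollama listens only on `127.0.0.1` by default; serving other machines typically means setting the `OLLAMA_HOST` environment variable on the server.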

Q & A

What is the main advantage of running OpenClaw locally?

-Running OpenClaw locally provides several benefits, including avoiding the high cost of tokens, maintaining privacy by keeping data within your own network, and ensuring continuous operation even if the cloud or servers go down.

What challenges did the speaker face while setting up OpenClaw locally?

-The speaker faced difficulties with networking configurations, testing different local models, and managing hardware requirements. Additionally, they had to split the system across two machines for better performance, as some hardware couldn't handle the full load.

Why did the speaker choose to run OpenClaw on a Jetson Nano and an old gaming laptop?

-The speaker chose to run OpenClaw on a Jetson Nano for 24/7 operation due to its low power usage, while the old gaming laptop was used to host the AI model, providing better performance compared to their MacBook.

What is the role of a local AI model in this setup?

-The local AI model processes requests made by OpenClaw, providing responses directly from within the home network without needing to rely on cloud-based models or servers.

How does the speaker recommend picking the right AI model for the system?

-The speaker suggests using LM Studio to test different models, paying attention to both the model's size (which affects performance) and compatibility with agent use. They recommend finding a balance between speed and model quality.
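One way to put a number on the "speed" half of that trade-off is tokens per second. The sketch below is an assumption-laden illustration rather than the speaker's method: it uses the `eval_count` and `eval_duration` fields (duration in nanoseconds) that Ollama's generate endpoint returns in its final response. LM Studio shows a similar figure in its own interface.

```python
def tokens_per_second(response: dict) -> float:
    """Compute generation speed from an Ollama generate response.

    eval_count is the number of generated tokens;
    eval_duration is the generation time in nanoseconds.
    """
    duration_ns = response["eval_duration"]
    if duration_ns <= 0:
        raise ValueError("eval_duration must be positive")
    return response["eval_count"] * 1_000_000_000 / duration_ns


def fastest(results: dict) -> str:
    """Return the model name with the highest tokens/second.

    `results` maps a model name to its parsed response dict.
    """
    return max(results, key=lambda name: tokens_per_second(results[name]))
```

Speed alone does not settle the choice: a small model can win on tokens per second and still handle agent-style tasks poorly, so a comparison like this is only one input.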

What is the difference between a fully cloud, fully local, and hybrid setup?

-A fully cloud setup hosts OpenClaw and the AI model on external servers, a fully local setup runs both OpenClaw and the AI model within the home network, and a hybrid setup runs OpenClaw locally but uses a cloud-based model for AI processing.
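The three setups differ only in where two pieces run, which the small sketch below spells out. The names `Setup` and `classify_setup` are illustrative, and the `OTHER` case (OpenClaw in the cloud with a local model) is not one the video describes; it is included only so the function covers every combination.

```python
from enum import Enum


class Setup(Enum):
    FULLY_LOCAL = "fully local"
    FULLY_CLOUD = "fully cloud"
    HYBRID = "hybrid"
    OTHER = "other"  # not described in the video


def classify_setup(openclaw_is_local: bool, model_is_local: bool) -> Setup:
    """Name the setup based on where OpenClaw and the AI model run."""
    if openclaw_is_local and model_is_local:
        return Setup.FULLY_LOCAL
    if not openclaw_is_local and not model_is_local:
        return Setup.FULLY_CLOUD
    if openclaw_is_local and not model_is_local:
        return Setup.HYBRID
    return Setup.OTHER
```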

What model does the speaker recommend for local use, and why?

-The speaker recommends using Qwen 3.5 9B for local setups due to its balance between speed and quality. They find it to be the best option for their hardware, though they acknowledge that new models need to be tested as they are released.

How can the speaker add a new AI model to an existing OpenClaw installation?

-The speaker demonstrates two methods: one is using a coding tool like Claude or OpenAI Codex to update the config file automatically, and the other involves manually editing the config file to include the new model.

What are some hardware and network requirements for running Open Claw locally?

-A fast computer is required to ensure good performance. The system needs to be able to handle the AI models' size and processing demands. Networking configurations are also crucial to ensure all devices can communicate and serve the AI model effectively.
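A rough way to judge whether a machine can "handle the AI models' size" is to estimate the memory the weights need. The sketch below uses a common rule of thumb: parameter count times bits per weight, divided by 8 to get bytes. The 4-bit default and the 1.2 overhead factor (for context cache and runtime buffers) are assumptions for illustration, not figures from the video.

```python
def estimated_model_gb(params_billions: float,
                       bits_per_weight: int = 4,
                       overhead: float = 1.2) -> float:
    """Estimate memory needed to run a model, in gigabytes.

    params_billions * bits_per_weight / 8 gives the weight size in GB;
    `overhead` is an assumed multiplier for cache and buffers.
    """
    return params_billions * bits_per_weight / 8 * overhead


def fits(params_billions: float, available_gb: float, **kwargs) -> bool:
    """True if the estimate fits in the available memory."""
    return estimated_model_gb(params_billions, **kwargs) <= available_gb
```

Under these assumptions, a 9-billion-parameter model at 4 bits per weight comes to about 4.5 GB of weights, or roughly 5.4 GB with overhead, so it would plausibly fit on an 8 GB GPU but not a 4 GB one.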

What is the purpose of using Tailscale in this setup, and how does it improve the system?

-Tailscale is used to secure remote access to the local AI server, allowing the speaker to connect to their server from outside their home network. It provides a more secure and efficient way to manage the system remotely.
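When debugging a setup like this, it helps to know which kind of address a client is using to reach the server. The sketch below relies on the fact that Tailscale assigns IPv4 addresses from the `100.64.0.0/10` range; the function name and labels are illustrative.

```python
import ipaddress

# Tailscale hands out IPv4 addresses from the shared (CGNAT) range.
TAILSCALE_RANGE = ipaddress.ip_network("100.64.0.0/10")


def classify_address(addr: str) -> str:
    """Label an IP address as loopback, tailscale, lan, or public."""
    ip = ipaddress.ip_address(addr)
    if ip.is_loopback:
        return "loopback"
    if ip in TAILSCALE_RANGE:
        return "tailscale"
    if ip.is_private:
        return "lan"
    return "public"
```

An address labeled `lan` only works inside the home network, while a `tailscale` address keeps working from anywhere the device is signed in to the same tailnet.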
