Enhance RAG Chatbot Performance By Refining A Reranking Model

Summary

TLDR: This video script outlines a workflow that uses Labelbox to improve the retrieval step of a custom chatbot. It demonstrates how a re-ranker model can improve document retrieval when human-in-the-loop review is used to evaluate context. The process involves embedding documents into a vector space, fine-tuning the re-ranker with human annotations, and testing its performance. The script highlights the importance of human expertise in refining AI responses and presents the technique as a way to improve chatbot accuracy.

Takeaways

- 📚 The script demonstrates a workflow for improving a retrieval process using the Labelbox platform.

- 🤖 A custom chatbot is utilized to interact with an internal corpus of documents through a retrieval method to find relevant information chunks.

- 🔍 The process involves using a re-ranker model to enhance retrieval performance by re-ranking the initial search results, which may not always be contextually relevant.

- 🧑‍🔧 A human-in-the-loop step is introduced to evaluate text chunks against search queries, using human judgment to refine the re-ranker model.

- 📈 The script shows a baseline retrieval experiment that uses a Sentence Transformer model to embed documents as vectors.

- 📑 An example of embedding the extensive NFL rulebook into a vector index for efficient retrieval is provided.

- 📝 The script details how to create a training dataset for fine-tuning the re-ranker model by extracting the top chunks from the initial retrieval results for human review.

- 🔄 The use of Labelbox SDK to format and submit text queries and response chunks to the Labelbox platform for human review is demonstrated.

- 📊 The process of generating pre-labels using a model like OpenAI's GPT-4 to help annotators label data efficiently is explained.

- 📝 Annotation projects are set up in Labelbox to allow human annotators to review and adjust pre-labels, improving the relevance of retrieved chunks.

- 🔧 After human review, the annotations are exported in JSON Lines format to fine-tune the re-ranker model, incorporating human expertise into the model's learning process.

- 📈 The fine-tuned re-ranker model is then used to re-rank query-response pairs, aiming to improve the context provided to the language model for generating responses.

Q & A

What is the primary goal of using Labelbox in the described workflow?

-The primary goal of using Labelbox in the workflow is to improve the retrieval process by fine-tuning a re-ranker model with human-in-the-loop to enhance the performance of retrieving relevant information from an internal corpus of documents.

What is a 'chunk' in the context of this script?

-In the context of this script, a 'chunk' refers to a segment of text retrieved from the internal corpus of documents, which is used as context for the language model to generate a response.
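
As a rough illustration of what a chunk is, the sketch below splits text into overlapping word windows. The summary does not say how the corpus was chunked, so the function name `chunk_text`, the window size, and the overlap are all assumptions made for this example.

```python
def chunk_text(text: str, max_words: int = 50, overlap: int = 10) -> list[str]:
    """Split text into windows of at most max_words words.

    Consecutive windows share `overlap` words so that a sentence cut at a
    boundary still appears whole in at least one chunk (an assumed design
    choice, not something the source specifies).
    """
    if max_words <= 0 or not 0 <= overlap < max_words:
        raise ValueError("need max_words > 0 and 0 <= overlap < max_words")
    words = text.split()
    step = max_words - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + max_words]))
        if start + max_words >= len(words):
            break  # the last window already reached the end of the text
    return chunks
```

Each returned string would then be embedded and indexed on its own, so retrieval returns passages rather than whole documents.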

Why is a re-ranker model used in the retrieval process?

-A re-ranker model is used to improve retrieval performance by re-evaluating the initial top results and potentially promoting more contextually relevant information that might be ranked lower by the initial retrieval algorithm.
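
The second-stage idea can be sketched as follows. This is a minimal illustration, not the model from the video: `toy_relevance` is a hypothetical word-overlap scorer standing in for a trained re-ranker, and the candidate sentences are made-up sample strings rather than rulebook text.

```python
from typing import Callable


def rerank(query: str, candidates: list[str],
           score_fn: Callable[[str, str], float], top_n: int = 2) -> list[str]:
    """Re-score first-stage candidates against the query and keep the best top_n."""
    scored = [(score_fn(query, chunk), chunk) for chunk in candidates]
    # sorted() is stable, so ties keep their first-stage order.
    scored = sorted(scored, key=lambda pair: pair[0], reverse=True)
    return [chunk for _, chunk in scored[:top_n]]


def toy_relevance(query: str, chunk: str) -> float:
    """Hypothetical stand-in scorer: fraction of query words found in the chunk."""
    query_words = set(query.lower().split())
    chunk_words = set(chunk.lower().split())
    return len(query_words & chunk_words) / max(len(query_words), 1)


# Candidates in the order an initial retriever might have returned them.
candidates = [
    "The league office publishes the schedule each spring.",
    "A touchdown is worth six points.",
    "After a touchdown the team may attempt a try for extra points.",
]
top = rerank("how many points is a touchdown worth", candidates, toy_relevance)
```

Here the chunk that was second in the initial list moves to the top after re-scoring, which is the kind of promotion the answer above describes.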

What role does the human-in-the-loop step play in the fine-tuning process of the re-ranker model?

-In the human-in-the-loop step, expert annotators evaluate the relevance of each text chunk to the search query. Their feedback is used to fine-tune the re-ranker model so that its rankings align with human expertise.

How does the script suggest improving the baseline retrieval performance?

-The script suggests improving baseline retrieval performance by using a re-ranker model that has been fine-tuned with human feedback, which helps in retrieving more contextually relevant information.

What is the significance of the NFL rules PDF in the provided example?

-The NFL rules PDF serves as an extensive document corpus for the example, which referees or a chatbot would need to be familiar with to answer queries related to NFL rules effectively.

What is the purpose of pre-processing in the context of this script?

-Pre-processing in this context involves passing the documents through a Sentence Transformer model to embed them as vectors, which is a foundational step for both experiments and for the retrieval process.
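
The embed-then-search step can be sketched with the standard library alone. This is an illustration under stated assumptions: `embed` uses plain word counts as a toy stand-in for a transformer encoder, and `build_index` and `search` are hypothetical helper names, not part of Labelbox or any embedding library.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts (stand-in for a learned encoder)."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def build_index(chunks: list[str]) -> list[tuple[str, Counter]]:
    """Embed every chunk once, up front, so queries only embed the query."""
    return [(chunk, embed(chunk)) for chunk in chunks]


def search(index: list[tuple[str, Counter]], query: str, k: int = 3) -> list[str]:
    """Return the k chunks whose vectors are most similar to the query vector."""
    q = embed(query)
    ranked = sorted(index, key=lambda item: cosine(q, item[1]), reverse=True)
    return [chunk for chunk, _ in ranked[:k]]
```

In a real pipeline the encoder would produce dense vectors and the list would be replaced by a vector index, but the flow is the same: embed the corpus once, embed each query, and rank by similarity.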

How does the script handle the limitation of token length in LLMs?

-The script addresses the token length limitation by passing only the top two documents to the LLM as context, which keeps the input size manageable while still providing relevant information.
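
A minimal sketch of that truncation step is shown below. The prompt wording and the function name `build_prompt` are assumptions for illustration; the source only states that the top two documents are used as context.

```python
def build_prompt(query: str, ranked_chunks: list[str], top_n: int = 2) -> str:
    """Build an LLM prompt from only the top_n highest-ranked chunks."""
    # Chunks ranked below top_n are dropped to keep the prompt short.
    context = "\n\n".join(ranked_chunks[:top_n])
    return (
        "Answer the question using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {query}\nAnswer:"
    )
```

Because only two chunks reach the LLM, the order produced by the retriever (or re-ranker) decides what the model sees at all, which is why ranking quality matters so much in this setup.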

What is the process of exporting annotations and ground truth data from Labelbox?

-The process involves using the export section of the labeling project in Labelbox, where code is provided to export all ground truth data. This data is then converted to the JSON Lines format used for fine-tuning the re-ranker model.

How does the fine-tuning process of the re-ranker model work?

-The fine-tuning process involves feeding the model labeled data in JSON Lines format, containing positive and negative examples for each query. The model learns to score the relevance of responses, with the goal of ranking contextually relevant information higher.
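
One way such a training file could be assembled is sketched below. The input shape (one dict per reviewed query-chunk pair with a boolean `relevant` flag) and the output field names `query`, `pos`, and `neg` are assumptions for this example; the source only says the file holds positive and negative examples, and the exact schema depends on the re-ranker being fine-tuned.

```python
import json


def to_jsonl(annotations: list[dict]) -> str:
    """Group reviewed (query, chunk, relevant) rows into one JSON object per query."""
    grouped: dict[str, dict] = {}
    for row in annotations:
        record = grouped.setdefault(
            row["query"], {"query": row["query"], "pos": [], "neg": []}
        )
        # Chunks judged relevant become positives; the rest become negatives.
        record["pos" if row["relevant"] else "neg"].append(row["chunk"])
    # JSON Lines: one JSON object per line, no enclosing array.
    return "\n".join(json.dumps(record) for record in grouped.values())


sample = [
    {"query": "q1", "chunk": "relevant text", "relevant": True},
    {"query": "q1", "chunk": "off-topic text", "relevant": False},
]
jsonl_text = to_jsonl(sample)
```

Writing `jsonl_text` to a file gives one training record per line, so the file can be streamed during fine-tuning without loading it all at once.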

What is the final step in the workflow after fine-tuning the re-ranker model?

-The final step is to replicate the experiment using the same LLM and embedding models but now with the fine-tuned re-ranker model to rank all query-response pairs, aiming to provide improved context for generating responses.

Outlines

This section is available to paid users only. Please upgrade to access this part.

Upgrade NowMindmap

This section is available to paid users only. Please upgrade to access this part.

Upgrade NowKeywords

This section is available to paid users only. Please upgrade to access this part.

Upgrade NowHighlights

This section is available to paid users only. Please upgrade to access this part.

Upgrade NowTranscripts

This section is available to paid users only. Please upgrade to access this part.

Upgrade NowBrowse More Related Video

Microsoft Power Platform Fundamentals in 15 Minutes

How to train your AI chatbot with Zapier | Thinkstack AI Tutorial

How to Build Custom AI Chatbots 🔥(No Code)

How to Connect GPT Assistants With Zapier & Notion Database

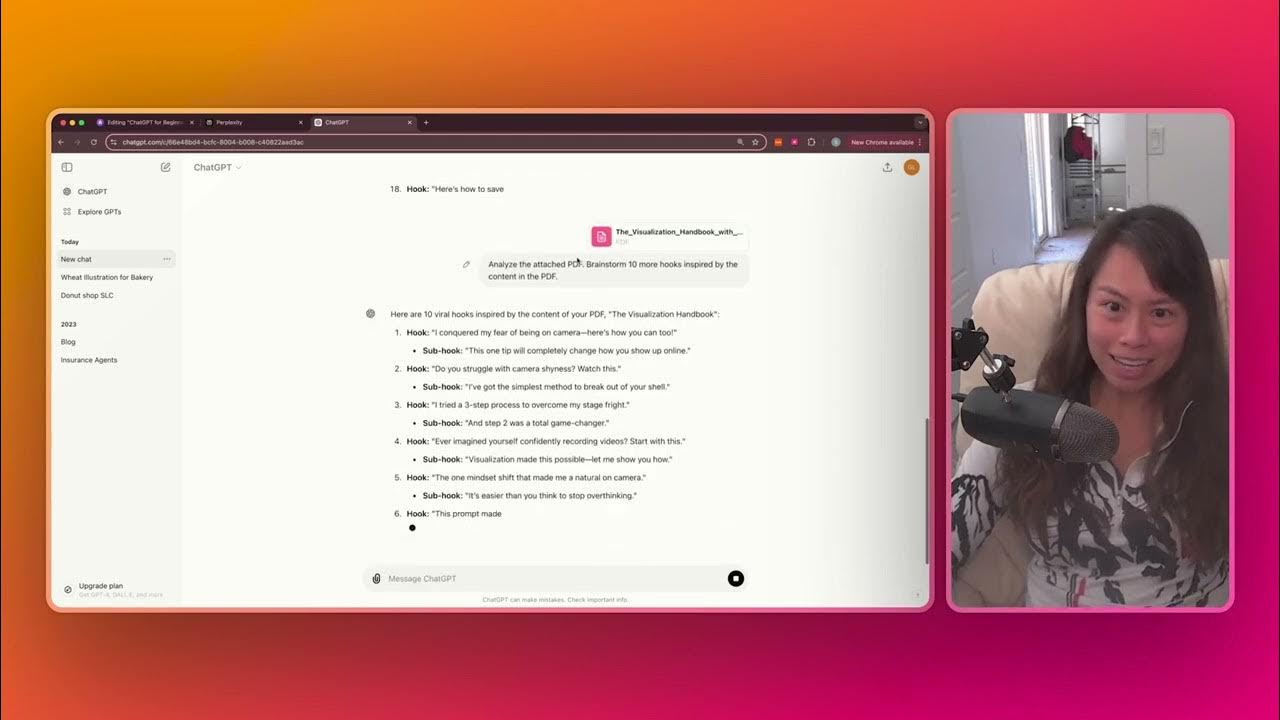

Attach Files and Long Prompts in ChatGPT | Sabrina Ramonov 🍄

This 100% uncensored AI model will answer anything, just watch

5.0 / 5 (0 votes)