Is Dify the easiest way to build AI Applications?

Summary

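TLDR: This video introduces Dify, a tool for turning a new AI app idea into a working app. It explains how to easily build an app that pulls information from a web search or other tools, passes it to a model, and returns an answer. With Dify, you connect components in a graphical interface, answer a few setup questions, and deploy with the click of a button. The video also walks through installing Ollama, running Dify with Docker, configuring a model provider and tools, and creating the app. Beginners can get started with it, and experienced developers can use it to prototype ideas, which makes it a very appealing tool.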

Takeaways

- 🤖 The video explains the process of turning a new AI app idea into reality.

- 🔍 The app retrieves information from a web search or other tools and passes it to a model to generate an answer.

- 🚀 Dify simplifies infrastructure setup and lets you connect components in a graphical interface.

- 💻 Dify can also be self-hosted; the setup shown in the video requires Docker and Ollama.

- 📝 Setting up Dify takes a few steps, such as configuring the port and adding a model provider.

- 🔗 Ollama is used as the model provider, and specific models are added and configured.

- 🔎 The app also needs a web search tool configured; SearXNG is given as an example.

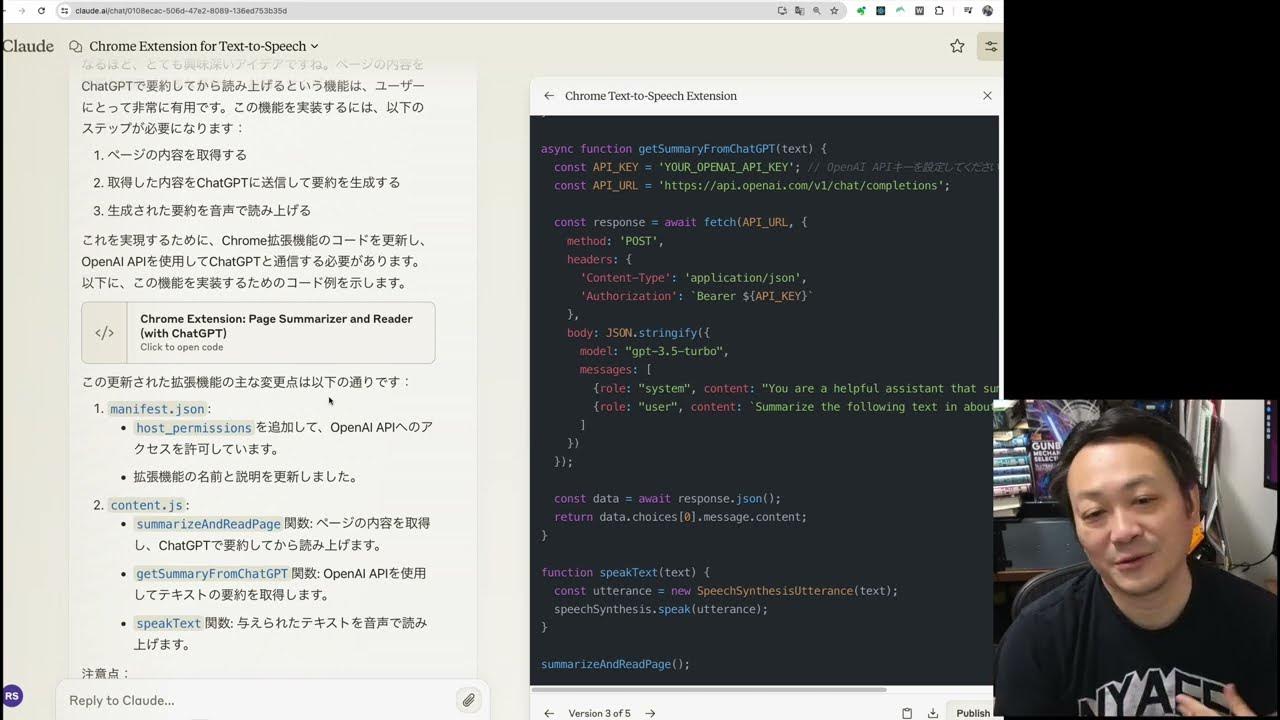

- 🛠️ Creating an app in Dify involves defining input fields and building a workflow.

- 📊 The monitoring feature lets you inspect how the app's runs went.

- 🔄 An app's workflow can be published and reused, and applied to a variety of scenarios.

- 🛑 Dify has some drawbacks, but it is very easy for beginners to use and well suited to prototyping.
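Publishing a workflow makes it reusable from other programs over HTTP. Dify's API documentation describes a workflow-run endpoint (`POST /workflows/run` with `inputs`, `response_mode` and `user` in the body); the sketch below assumes a self-hosted instance whose API base is `http://localhost/v1`, a placeholder app API key, and an input field named `query`, all of which are illustrative. Check the API page shown inside your own Dify instance before relying on the exact field names.

```python
import json
import urllib.request
from typing import Any, Dict


def build_workflow_request(
    api_base: str, api_key: str, inputs: Dict[str, Any], user: str
) -> urllib.request.Request:
    """Prepare (but do not send) a POST to a published workflow's run endpoint."""
    payload = {
        "inputs": inputs,             # values for the workflow's input fields
        "response_mode": "blocking",  # wait for the full result instead of streaming
        "user": user,                 # identifies the end user to Dify
    }
    return urllib.request.Request(
        f"{api_base.rstrip('/')}/workflows/run",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def run_published_workflow(request: urllib.request.Request) -> Dict[str, Any]:
    """Send the request to a running Dify instance and decode the JSON reply."""
    with urllib.request.urlopen(request, timeout=120) as resp:
        return json.loads(resp.read())


if __name__ == "__main__":
    # Placeholder key and input name (hypothetical); replace with your app's values,
    # then pass the request to run_published_workflow() against a live instance.
    req = build_workflow_request(
        "http://localhost/v1", "app-xxxxxxxx", {"query": "What is Dify?"}, "demo-user"
    )
    print(req.get_method(), req.full_url)
```

Building the request separately from sending it keeps the payload easy to inspect and test without a running server.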

Q & A

How do you turn a new AI app idea into reality?

- The video proposes building an AI app that retrieves information from a web search or other tools and passes it to a model to produce an answer.
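The retrieve-then-answer pattern described here can be sketched in a few lines. The search and model functions below are hypothetical stubs standing in for a web-search tool and an LLM; in Dify these would be nodes wired together in the editor rather than hand-written code, and the prompt wording is only an example.

```python
from typing import Callable, List

# Hypothetical stand-ins: search returns text snippets, model maps prompt -> answer.
SearchFn = Callable[[str], List[str]]
ModelFn = Callable[[str], str]


def build_prompt(question: str, snippets: List[str]) -> str:
    """Number the retrieved snippets and place them before the question."""
    sources = "\n".join(f"[{i}] {text}" for i, text in enumerate(snippets, start=1))
    return (
        "Answer the question using only the sources below.\n\n"
        f"Sources:\n{sources}\n\n"
        f"Question: {question}\nAnswer:"
    )


def answer(question: str, search: SearchFn, model: ModelFn, max_snippets: int = 3) -> str:
    """Retrieve context, build the prompt, and let the model produce the answer."""
    snippets = search(question)[:max_snippets]
    return model(build_prompt(question, snippets))


def stub_search(query: str) -> List[str]:
    # Fixed results in place of a real web search.
    return [
        "Dify is an open-source platform for building LLM apps.",
        "Dify can be self-hosted with Docker Compose.",
    ]


def stub_model(prompt: str) -> str:
    # A real model would generate text; this just reports what it received.
    return f"(prompt of {len(prompt)} characters)"


if __name__ == "__main__":
    print(answer("What is Dify?", stub_search, stub_model))
```

Capping the number of snippets keeps the prompt within the model's context size, which matters once a real search tool returns many results.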

How long does it take to build the app's infrastructure?

- With Dify, you connect components in a graphical interface and can deploy the app in a few clicks.

What is Dify?

- Dify is a tool for building AI apps quickly; you create and deploy apps through a graphical interface.

What do you need before using Dify?

- To self-host Dify, you need an environment with Docker installed and a clone of the Dify GitHub repository.
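These prerequisites correspond to the Docker Compose quick start in the Dify repository: clone `langgenius/dify`, copy `docker/.env.example` to `docker/.env`, and run `docker compose up -d`. The sketch below scripts those steps, assuming a Unix-like system with `git`, `cp` and Docker Compose v2 on the PATH; it only prints the commands unless `dry_run=False` is passed. In recent versions the exposed web port appears to be set by `EXPOSE_NGINX_PORT` in `.env`, so check your copy of `.env.example` if you need a different port.

```python
import shutil
import subprocess
from pathlib import Path
from typing import List, Tuple

REPO_URL = "https://github.com/langgenius/dify.git"


def missing_tools(tools: Tuple[str, ...] = ("git", "docker")) -> List[str]:
    """Return the required command-line tools that are not on PATH."""
    return [tool for tool in tools if shutil.which(tool) is None]


def quickstart_plan(checkout: Path) -> List[Tuple[List[str], Path]]:
    """List (command, working directory) pairs for a Docker Compose deployment."""
    docker_dir = checkout / "docker"
    return [
        (["git", "clone", REPO_URL, str(checkout)], checkout.parent),
        # .env holds the default settings, including the exposed port.
        (["cp", ".env.example", ".env"], docker_dir),
        (["docker", "compose", "up", "-d"], docker_dir),
    ]


def run_plan(plan: List[Tuple[List[str], Path]], dry_run: bool = True) -> None:
    """Print each command; execute it only when dry_run is False."""
    for command, cwd in plan:
        print(f"[{cwd}] $ {' '.join(command)}")
        if not dry_run:
            subprocess.run(command, cwd=cwd, check=True)


if __name__ == "__main__":
    missing = missing_tools()
    if missing:
        print("Install these first:", ", ".join(missing))
    run_plan(quickstart_plan(Path.cwd() / "dify"), dry_run=True)
```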

What is the first step when creating an app in Dify?

- The first step is setting up the admin account. After that, you create the app and configure the tools and model providers it needs.

What is Ollama?

- Ollama is a tool for running open models locally; used with Dify as a model provider, it lets your apps call those models.

How do you set up Ollama as the model provider when creating an app in Dify?

- Add a model provider on Dify's settings page, then enter the Ollama details: the base URL, the model name, and the context size.
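One detail that often trips people up is the base URL. Ollama listens on port 11434 by default, but when Dify runs in Docker, `localhost` refers to the container, not your machine, so the host is usually addressed as `host.docker.internal` (on Linux this name may need extra Docker configuration, and Ollama may need to listen on all interfaces). The sketch below builds that URL and reads the pulled model names from Ollama's `/api/tags` endpoint; the `provider_form` dictionary and its `llama3` / 4096 values are hypothetical examples of what you type into Dify's form, not a Dify configuration format.

```python
import json
import urllib.request
from typing import Any, Dict, List

OLLAMA_PORT = 11434  # Ollama's default port


def ollama_base_url(dify_in_docker: bool, port: int = OLLAMA_PORT) -> str:
    """Base URL Dify should use to reach an Ollama server on the host machine.

    Inside a container, "localhost" is the container itself, so the host
    is addressed as host.docker.internal instead.
    """
    host = "host.docker.internal" if dify_in_docker else "localhost"
    return f"http://{host}:{port}"


def parse_model_names(tags_json: str) -> List[str]:
    """Extract model names from the JSON returned by Ollama's /api/tags."""
    return [model["name"] for model in json.loads(tags_json).get("models", [])]


def list_local_models(base_url: str = f"http://localhost:{OLLAMA_PORT}") -> List[str]:
    """Ask a running Ollama server which models it has pulled."""
    with urllib.request.urlopen(f"{base_url}/api/tags", timeout=5) as resp:
        return parse_model_names(resp.read().decode("utf-8"))


# Hypothetical values for the fields on Dify's "add model" form.
provider_form: Dict[str, Any] = {
    "model_name": "llama3",  # should match a name reported by list_local_models()
    "base_url": ollama_base_url(dify_in_docker=True),
    "context_size": 4096,
}

if __name__ == "__main__":
    print(provider_form)
```

Running `list_local_models()` from the host before filling in the form is a quick way to copy the exact model name Ollama expects.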

What tools are used when creating an app in Dify?

- Dify provides tools for running web searches and making other API requests.
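A web-search tool such as SearXNG, a self-hostable metasearch engine that Dify's tool list includes, is configured by giving Dify the instance's base URL. The sketch below shows the kind of request involved, assuming an instance at the hypothetical address `http://localhost:8080` with the JSON output format enabled in its `settings.yml`; it builds a `/search?q=...&format=json` URL and trims the response to the fields a prompt needs.

```python
import json
from typing import Dict, List
from urllib.parse import urlencode


def searxng_query_url(base_url: str, query: str) -> str:
    """Build a SearXNG search URL that asks for JSON instead of HTML."""
    return f"{base_url.rstrip('/')}/search?" + urlencode({"q": query, "format": "json"})


def top_results(response_json: str, limit: int = 3) -> List[Dict[str, str]]:
    """Keep only title, url and content from the first few results."""
    results = json.loads(response_json).get("results", [])
    return [
        {key: str(item.get(key, "")) for key in ("title", "url", "content")}
        for item in results[:limit]
    ]


if __name__ == "__main__":
    print(searxng_query_url("http://localhost:8080", "what is dify"))
```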

How do you actually run an app built with Dify?

- Run Dify in Docker containers, then open the configured port in a browser to work with the app.
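On a fresh install, the first page to open is the admin-account setup; Dify's README points self-hosters to `http://localhost/install`. The helper below builds that URL for a non-default port and polls it until the containers answer, which can take a while on first start. The host, port and timing values are illustrative assumptions.

```python
import time
import urllib.request


def dify_url(host: str = "localhost", port: int = 80, path: str = "/install") -> str:
    """URL of a self-hosted Dify page; port 80 is left implicit."""
    netloc = host if port == 80 else f"{host}:{port}"
    return f"http://{netloc}{path}"


def wait_until_up(url: str, attempts: int = 30, delay: float = 2.0) -> bool:
    """Poll the URL until it returns a success status or attempts run out."""
    for attempt in range(attempts):
        try:
            with urllib.request.urlopen(url, timeout=5):
                return True
        except OSError:  # URLError, HTTPError and timeouts all derive from OSError
            if attempt < attempts - 1:
                time.sleep(delay)
    return False


if __name__ == "__main__":
    print("Open", dify_url(), "once wait_until_up() returns True")
```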

What is a workflow in Dify's app-creation process?

- A workflow defines the steps and logic of a Dify app by combining various tools and models.
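To make the idea of a workflow concrete, here is a toy model in Python: the start node supplies the input fields, and each later node reads earlier variables and adds its own outputs. This illustrates the concept only and is not how Dify executes workflows; Dify's editor supports branching graphs while this sketch is a straight chain, and the node names, stub functions and `node.key` variable naming are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

Variables = Dict[str, str]


@dataclass
class Node:
    """One step in a linear workflow: reads variables, returns new outputs."""
    name: str
    run: Callable[[Variables], Variables]


def run_workflow(nodes: List[Node], inputs: Variables) -> Variables:
    """Run nodes in order, exposing each node's outputs as '<node>.<key>'."""
    variables = dict(inputs)  # values from the start node's input fields
    for node in nodes:
        outputs = node.run(variables)
        variables.update({f"{node.name}.{key}": value for key, value in outputs.items()})
    return variables


def fake_search(v: Variables) -> Variables:
    # Stand-in for a web-search tool node.
    return {"text": f"snippet about {v['query']}"}


def fake_llm(v: Variables) -> Variables:
    # Stand-in for an LLM node whose prompt references the search output.
    return {"text": f"answer based on: {v['search.text']}"}


if __name__ == "__main__":
    result = run_workflow([Node("search", fake_search), Node("llm", fake_llm)], {"query": "Dify"})
    print(result["llm.text"])
```

Namespacing outputs by node name lets a later node pick exactly which earlier result it needs, which is the same job the variable picker does in a visual workflow editor.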

What problems with building apps in Dify does the video point out?

- It notes that navigation could be improved, that UI customization is limited, and that the app feels heavy.

What are the benefits of developing AI apps with Dify?

- You can build prototypes quickly and avoid deployment complexity, and the interface is easy for beginners to use.
