24 Auto Loader in Databricks | AutoLoader Schema Evolution Modes | File Detection Mode in AutoLoader

Summary

TLDRThis video provides an in-depth guide on using AutoLoaders to manage incremental data processing in cloud storage environments. It covers file detection modes, schema evolution strategies (including handling new columns), and checkpointing for ensuring exactly-once processing. The session demonstrates the configuration of AutoLoader with options like directory listing and file notification, as well as how to handle schema changes using various modes such as adding new columns, rescuing data, or failing on new columns. Viewers are introduced to using AutoLoader with Delta Lake tables for efficient data ingestion and processing.

Takeaways

- 😀 Autoloaders in Databricks are used to incrementally and efficiently ingest new files from cloud storage (AWS, GCP, Azure) or DBFS into a Data Lakehouse.

- 😀 Autoloaders support both batch and streaming modes, providing flexibility depending on the data processing requirements.

- 😀 There are two file detection modes: Directory Listing (default) which polls cloud storage, and File Notification which uses cloud notification and queue services.

- 😀 Checkpoint directories are essential for autoloaders to manage exactly-once processing and track which files have already been ingested.

- 😀 The 'cloudFiles' structured streaming source is used to read files with autoloaders, supporting nested folder structures and multiple file formats like CSV.

- 😀 Schema evolution is handled with four modes: addNewColumns (default), rescue, none, and failOnNewColumns, each controlling how new columns in incoming files are processed.

- 😀 The rescue mode stores schema changes in a hidden column (_rescued_data) to prevent stream failures while still capturing new data.

- 😀 The none mode ignores any schema changes, dropping new columns and not storing any rescued data.

- 😀 Autoloaders use schema hints to define specific column data types and maintain accurate data typing for fields such as integer or double.

- 😀 File notification mode requires elevated cloud privileges and allows for real-time detection of new files without polling.

- 😀 Autoloaders integrate seamlessly with Delta tables, allowing incremental data ingestion, schema evolution, and exactly-once processing to create a reliable and scalable ETL workflow.

- 😀 RocksDB is used under the hood to manage checkpoints and ensure scalability, capable of handling millions of files efficiently.

Q & A

What is Autoloader in Databricks?

-Autoloader is a utility in Databricks that helps in efficiently ingesting data from cloud storage into a Data Lakehouse. It supports both streaming and batch modes to process new incoming files incrementally.

What are the different file detection modes available in Autoloader?

-Autoloader provides two primary file detection modes: 'Directory Listing' (default) and 'File Notification'. Directory Listing polls cloud storage by listing directories, while File Notification uses a notification service to detect new files.

How does Autoloader handle schema evolution?

-Autoloader has several options to manage schema evolution. It can automatically add new columns, rescue new data into a special column, or even fail if new columns are introduced. The behavior can be controlled using the 'addNewColumns', 'rescueColumn', and 'failOnNewColumn' options.

What happens when schema changes occur with Autoloader in the 'addNewColumns' mode?

-In the 'addNewColumns' mode, Autoloader automatically adds new columns to the schema without any issues. The incoming data with new columns is processed without failure, and the new columns are appended to the DataFrame.

How does the 'rescueColumn' option work in Autoloader?

-When the 'rescueColumn' option is specified, Autoloader saves any new or modified columns that it cannot automatically handle into a special 'rescue_column'. This ensures that no data is lost, even when the schema evolves unexpectedly.

What is the 'none' schema evolution mode, and when should it be used?

-In the 'none' schema evolution mode, any schema changes, such as new columns, are completely ignored. No data is rescued or added. This mode should be used when the user does not want to handle schema changes or rescue columns.

What happens in the 'failOnNewColumn' mode in Autoloader?

-In the 'failOnNewColumn' mode, if a new column is detected in incoming files, the streaming process will fail. This mode is useful when you want to strictly control schema changes and ensure that new columns are manually handled.

What is the role of the RocksDB in the Autoloader schema management?

-RocksDB is used by Autoloader to manage state and checkpoints for exactly-once processing. It enables scalability and ensures reliable file processing by managing the schema and file metadata for efficient incremental processing.

How can Autoloader be configured to use file notifications instead of directory listing?

-To configure Autoloader to use file notifications, you need to add the option 'CloudFiles.useNotifications' and set it to 'true'. This will switch the file detection mode to notifications. However, this requires elevated privileges to set up the notification service in the cloud account.

What other options are available with Autoloader beyond schema evolution and file detection?

-Autoloader provides various additional options such as support for different file formats, backfill options, and handling structured streaming sources. It also supports managing incremental loading of data using Delta Live Tables (DLTs) and other advanced configurations for specific use cases.

Outlines

This section is available to paid users only. Please upgrade to access this part.

Upgrade NowMindmap

This section is available to paid users only. Please upgrade to access this part.

Upgrade NowKeywords

This section is available to paid users only. Please upgrade to access this part.

Upgrade NowHighlights

This section is available to paid users only. Please upgrade to access this part.

Upgrade NowTranscripts

This section is available to paid users only. Please upgrade to access this part.

Upgrade NowBrowse More Related Video

Snowflake Overview - Architecture, Features & Key Concepts

Computer Concepts - Module 3: Computer Hardware Part 1B (4K)

Snowflake Storage Layer frequently asked Interview Questions #snowflake #micropartition #database

Data Warehouse vs Data Lake vs Data Lakehouse

CIC Cockpit - Integrations Page

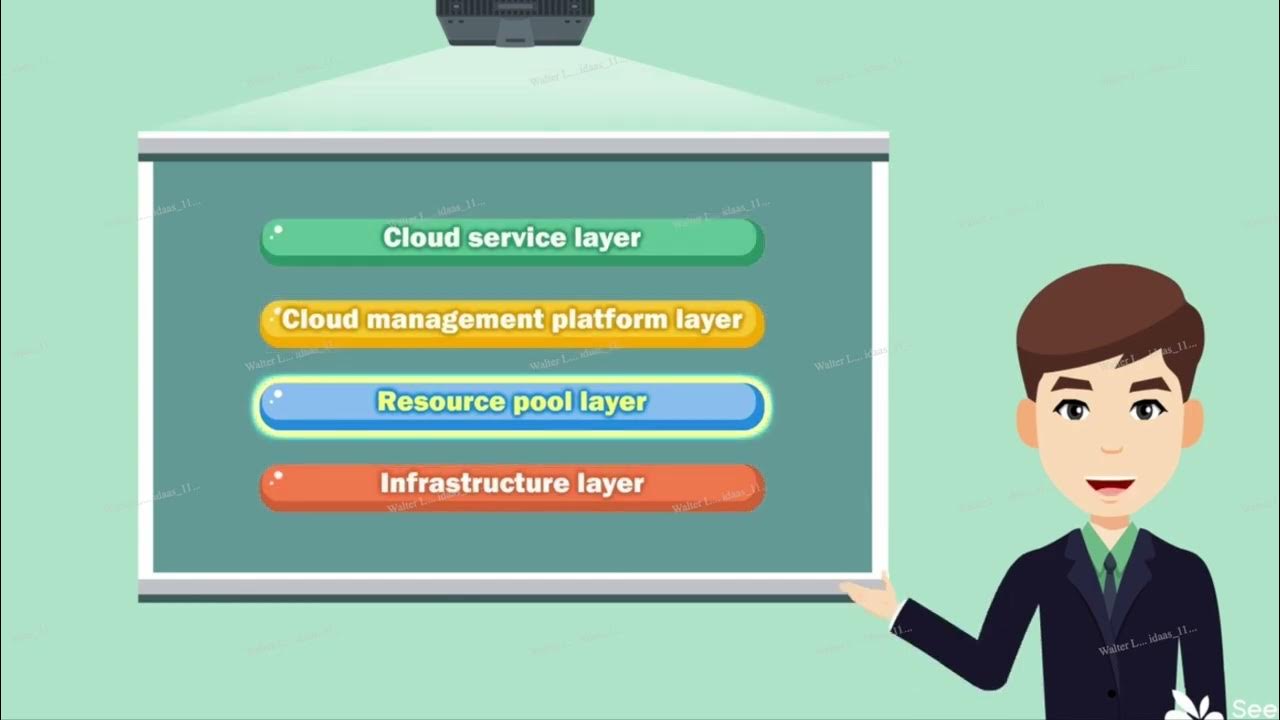

Módulo 7 - Cloud Architecture

5.0 / 5 (0 votes)