LLMs are not superintelligent | Yann LeCun and Lex Fridman

Summary

TLDR: The transcript discusses the limitations of large language models (LLMs) in achieving superhuman intelligence. It highlights that while LLMs can process vast amounts of text, they lack the ability to understand the physical world, possess persistent memory, reason, and plan effectively. The speaker argues that intelligence requires grounding in reality and that most knowledge is acquired through sensory input and interaction with the world, not just language. They also touch on the challenges of creating AI systems that can build a comprehensive world model and the current methods being explored to improve AI's understanding and interaction with the physical environment.

Takeaways

- 🤖 Large language models (LLMs) like GPT-4 and Llama 2/3 are not sufficient for achieving superhuman intelligence due to their limitations in understanding, memory, reasoning, and planning.

- 🧠 Human and animal intelligence involves understanding the physical world, persistent memory, reasoning, and planning, which current LLMs lack.

- 📚 LLMs are trained on vast amounts of text data, but this is still less than the sensory data a four-year-old processes, highlighting the importance of non-linguistic learning.

- 📈 Language is a compressed form of information, but it is an approximate representation of our mental models and percepts, suggesting that more than language is needed for true intelligence.

- 🚀 There is a debate among philosophers and cognitive scientists about whether intelligence needs to be grounded in reality, with the speaker advocating for a connection to physical or simulated reality.

- 🤔 The complexity of the world is difficult to represent, and current LLMs are not trained to handle the intricacies of intuitive physics or common-sense reasoning about the physical space.

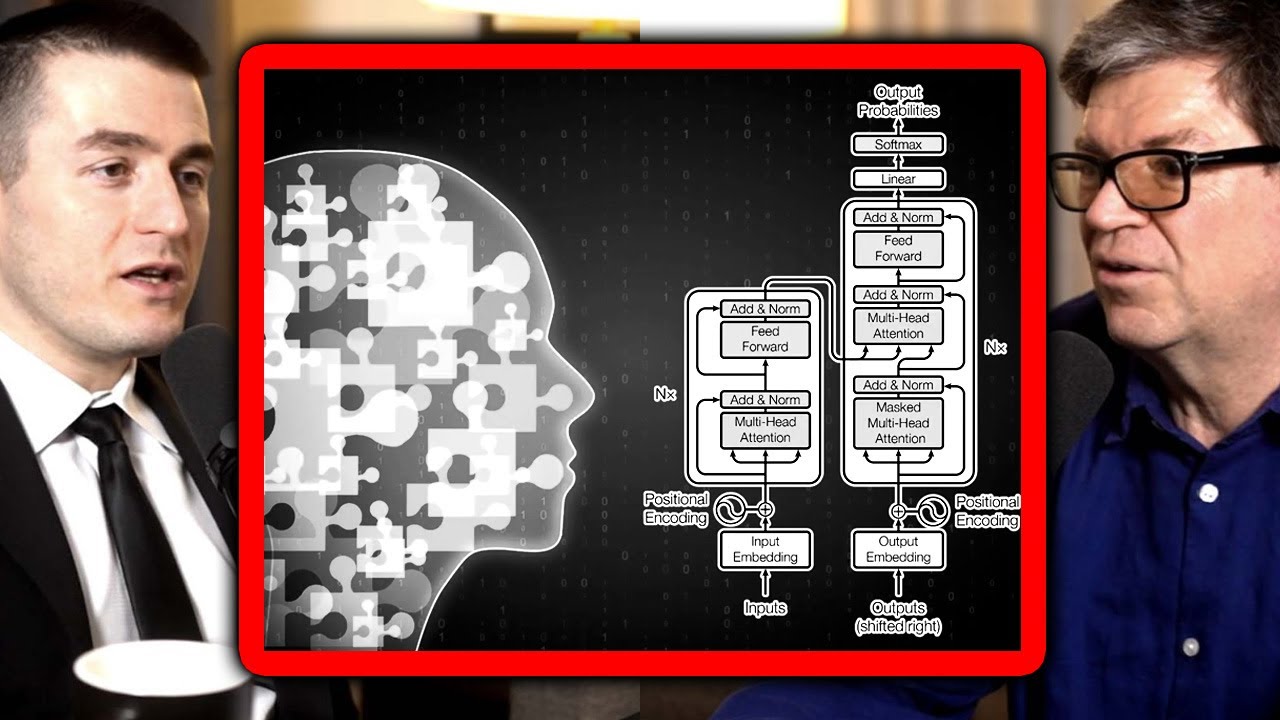

- 🛠️ LLMs are trained using an autoregressive prediction method, which is different from human thought processes that are not strictly tied to language.

- 🌐 Building a complete world model requires more than just predicting words; it involves observing and understanding the world's evolution and predicting the consequences of actions.

- 🔍 Current methods for training systems to learn representations of images by reconstruction from corrupted versions have largely failed, indicating a need for alternative approaches.

- 🔗 Joint embedding predictive architecture (JEPA) is a promising alternative to traditional reconstruction-based training. Instead of reconstructing the input itself, it trains a predictor to predict the representation of the full input from the representation of a corrupted one.

Q & A

What are the key characteristics of intelligent behavior mentioned in the transcript?

- The key characteristics of intelligent behavior mentioned are the capacity to understand the world, the ability to remember and retrieve things (persistent memory), the ability to reason, and the ability to plan.

Why are Large Language Models (LLMs) considered insufficient for achieving superhuman intelligence?

- LLMs are considered insufficient because they either lack the essential characteristics of intelligence (understanding the physical world, persistent memory, reasoning, and planning) or can exhibit them only in a primitive way.

How does the amount of data a four-year-old processes visually compare to the data used to train LLMs?

- A four-year-old has processed approximately 10^15 bytes of visual data. That is significantly more than the roughly 2 * 10^13 bytes of text that LLMs are trained on, an amount that would take a person about 170,000 years to read.
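
The comparison above can be checked with a few lines of arithmetic. This is a minimal sketch that uses only the three figures quoted in the answer; the reading schedule of 8 hours per day is an assumption added here for illustration, and the function names are hypothetical.

```python
# Figures quoted in the answer above.
VISUAL_BYTES_BY_AGE_FOUR = 10**15    # visual data processed by a four-year-old
LLM_TRAINING_TEXT_BYTES = 2 * 10**13  # text used to train an LLM
YEARS_TO_READ_TEXT = 170_000          # time a person would need to read that text

# Assumption (not stated in the summary): the reader reads 8 hours per day.
READING_SECONDS_PER_YEAR = 365 * 8 * 3600


def visual_to_text_ratio():
    """How many times more visual data than training text."""
    return VISUAL_BYTES_BY_AGE_FOUR / LLM_TRAINING_TEXT_BYTES


def implied_reading_rate_bytes_per_second():
    """Reading speed implied by the 170,000-year figure under the assumed schedule."""
    return LLM_TRAINING_TEXT_BYTES / (YEARS_TO_READ_TEXT * READING_SECONDS_PER_YEAR)


if __name__ == "__main__":
    print(f"visual / text ratio: {visual_to_text_ratio():.0f}x")
    print(f"implied reading rate: {implied_reading_rate_bytes_per_second():.1f} bytes/s")
```

Under these figures the child's visual input is about 50 times larger than the training text, even though the text alone would take far longer than a human lifetime to read.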

What is the argument against the idea that language alone contains enough wisdom and knowledge to construct a world model?

- The argument is that language is a compressed and approximate representation of our percepts and mental models. It lacks the richness of the environment, and most of our knowledge comes from observation and interaction with the real world, not just language.

What is the debate among philosophers and cognitive scientists regarding the grounding of intelligence?

- The debate is whether intelligence needs to be grounded in reality. Some argue that intelligence cannot appear without some grounding, whether physical or simulated, while others disagree.

Why are tasks like driving a car or clearing a dishwasher more challenging for AI compared to passing a bar exam?

- These tasks are more challenging because they require intuitive physics and common-sense reasoning about the physical world, which LLMs currently lack. LLMs are trained on text and do not understand intuitive physics as well as humans do.

How do LLMs generate text?

- LLMs generate text through an autoregressive prediction process where they predict the next word based on the previous words in a text, using a probability distribution over possible words.
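
The loop described above can be sketched with a toy model. This is only an illustration: the probability table `NEXT_WORD_PROBS` is invented for the example, and it conditions on the single previous word, whereas a real LLM conditions on all previous tokens using a neural network.

```python
import random

# Hypothetical toy "model": a probability distribution over the next word,
# conditioned only on the previous word. "<s>" and "</s>" mark start and end.
NEXT_WORD_PROBS = {
    "<s>": {"the": 1.0},
    "the": {"cat": 0.6, "dog": 0.4},
    "cat": {"sat": 0.7, "ran": 0.3},
    "dog": {"sat": 0.2, "ran": 0.8},
    "sat": {"</s>": 1.0},
    "ran": {"</s>": 1.0},
}


def sample_next_word(previous_word, rng):
    """Draw one word from the distribution that follows previous_word."""
    distribution = NEXT_WORD_PROBS[previous_word]
    words = list(distribution)
    weights = [distribution[word] for word in words]
    return rng.choices(words, weights=weights, k=1)[0]


def generate(rng, max_words=10):
    """Autoregressive generation: each sampled word is fed back as context."""
    words = ["<s>"]
    while len(words) <= max_words:
        next_word = sample_next_word(words[-1], rng)
        if next_word == "</s>":
            break
        words.append(next_word)
    return words[1:]  # drop the start marker


if __name__ == "__main__":
    print(" ".join(generate(random.Random(0))))
```

Each word is chosen only after the previous ones are fixed, so there is no separate step in which the whole sentence is planned before it is emitted.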

What is the difference between the autoregressive prediction of LLMs and human speech planning?

- Human speech planning involves thinking about what to say independent of the language used, while LLMs generate text one word at a time based on the previous words without an overarching plan.

What is the fundamental limitation of generative models in video prediction?

- The fundamental limitation is that the world is incredibly complex and rich in information compared to text. Video is high-dimensional and continuous, making it difficult to represent distributions over all possible frames in a video.

What is the concept of joint embedding and how does it differ from traditional image reconstruction methods?

- Joint embedding involves encoding both the full and corrupted versions of an image and training a predictor to predict the representation of the full image from the corrupted one. This differs from traditional methods that focus on reconstructing a good image from a corrupted version, which has proven to be ineffective in learning good generic features of images.
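
The difference between the two training targets can be sketched as two loss functions. This is a minimal illustration under stated assumptions, not an implementation of any published system: the linear `encode` function, the masking scheme in `corrupt`, and the identity predictor in the usage example are all hypothetical stand-ins, and no training step is shown.

```python
def encode(x, weights):
    """Hypothetical linear encoder: maps an input vector to a shorter representation."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in weights]


def corrupt(x, masked_indices):
    """Corrupt an input by zeroing out the given positions."""
    return [0.0 if i in masked_indices else xi for i, xi in enumerate(x)]


def mse(a, b):
    """Mean squared error between two equal-length vectors."""
    return sum((ai - bi) ** 2 for ai, bi in zip(a, b)) / len(a)


def reconstruction_loss(full, reconstructed):
    """Reconstruction-based target: error is measured in input (pixel) space."""
    return mse(reconstructed, full)


def joint_embedding_loss(full, corrupted, encoder_weights, predictor):
    """Joint-embedding target: error is measured in representation space.

    Both versions are encoded, and the predictor maps the corrupted
    representation to a guess at the full representation.
    """
    target = encode(full, encoder_weights)
    predicted = predictor(encode(corrupted, encoder_weights))
    return mse(predicted, target)


if __name__ == "__main__":
    full = [1.0, 2.0, 3.0, 4.0]
    corrupted = corrupt(full, {2})
    encoder_weights = [[1, 0, 1, 0], [0, 1, 0, 1]]
    identity_predictor = lambda z: z  # placeholder for a trained predictor
    print(reconstruction_loss(full, corrupted))
    print(joint_embedding_loss(full, corrupted, encoder_weights, identity_predictor))
```

In the reconstruction case the system is scored on every input value, while in the joint-embedding case it only has to predict what the encoder keeps about the full input.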
