LLM Chronicles #3.1: Loss Function and Gradient Descent (ReUpload)

Summary

TLDR: This episode delves into training a Multi-Layer Perceptron (MLP), focusing on how its parameters are adjusted to optimize network performance. It explains the importance of preparing training data, including collecting samples and converting labels for classification tasks using one-hot encoding. The episode also covers data normalization to ensure balanced feature importance and the division of data into training, validation, and testing sets. It introduces loss functions for regression and classification, emphasizing their role in guiding network training. The episode concludes with an overview of gradient descent, illustrating how it is used to minimize loss by adjusting weights and biases, and the concept of a computation graph for calculating gradients.

Takeaways

- 🧠 MLPs (Multi-Layer Perceptrons) can, in principle, approximate almost any function given enough hidden units, but the exact function behind real-world tasks like image and speech recognition is usually unknown.

- 📚 Training an MLP involves tweaking weights and biases to perform a desired task, starting with collecting samples for which the function's values are known.

- 🏷 For regression tasks, the target outputs are the expected numerical values, while for classification tasks, labels need to be one-hot encoded into a numeric format.

- 📉 Normalization is an important step in preparing data sets to ensure that no single feature dominates the learning process due to its scale.

- 🔄 Data sets are partitioned into training, validation, and testing subsets, each serving a specific purpose in model development and evaluation.

- 📊 The training set is used to adjust the neural network's weights and biases, the validation set helps tune hyperparameters and detect overfitting, and the testing set evaluates model performance on unseen data.

- ⚖️ Loss functions quantify the divergence between the network's output and the target output, guiding how the network is trained; the choice of loss function depends on the nature of the problem.

- 📉 Mean Absolute Error (L1 loss) and Mean Squared Error (L2 loss) are common loss functions for regression, while Cross-Entropy Loss is used for classification tasks.

- 🔍 Training a network is about minimizing the loss function by adjusting weights and biases, which is a complex task due to the non-linearity of both the network and its loss.

- 🏔 Gradient descent is an optimization approach used to minimize the loss by adjusting weights in the direction opposite to the gradient, which points towards the greatest increase in loss.

- 🛠️ A computation graph is built to track operations during the forward pass, allowing the application of calculus to compute the derivatives of the loss with respect to each parameter during the backward pass.

Q & A

What is the primary goal of training a Multi-Layer Perceptron (MLP)?

-The primary goal of training an MLP is to tweak the parameters, specifically the weights and biases, so that the network can perform the desired task effectively.

Why is it challenging to define the function for real-world tasks like image and speech recognition?

-It is challenging because there is no known explicit formula that maps raw inputs, such as the pixels of an image or the samples of an audio clip, to the correct output, and the space of possible inputs is far too vast to describe by hand.

What is the first step in training a neural network?

-The first step is to collect a set of samples for which the values of the function are known, which involves pairing each data point with its corresponding target label or output.

How are target outputs handled in regression tasks?

-In regression tasks, the target outputs are the expected numerical values themselves, such as the actual prices of houses in a dataset.

What is one-hot encoding and why is it used in classification tasks?

-One-hot encoding converts each categorical label into a binary vector with one position per category: the position of the sample's category is set to 1 and all other positions are set to 0. It is used because neural networks operate on numbers, not textual labels.
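As a minimal sketch in Python (not taken from the episode), one-hot encoding can be written as follows. The helper name `one_hot_encode` and the sample labels are hypothetical, and ordering the categories alphabetically is only one possible convention.

```python
def one_hot_encode(labels):
    """Map each label to a vector with a 1 at its category index and 0 elsewhere.

    Hypothetical helper for illustration; categories are ordered alphabetically.
    """
    categories = sorted(set(labels))  # fixed, reproducible category order
    index = {cat: i for i, cat in enumerate(categories)}
    vectors = []
    for label in labels:
        vec = [0] * len(categories)
        vec[index[label]] = 1
        vectors.append(vec)
    return categories, vectors


categories, encoded = one_hot_encode(["cat", "dog", "bird", "dog"])
print(categories)  # ['bird', 'cat', 'dog']
print(encoded)     # [[0, 1, 0], [0, 0, 1], [1, 0, 0], [0, 0, 1]]
```

Each output vector has exactly one 1, so the network can be trained to produce a matching vector of per-class scores.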

Why is normalization an important step in preparing datasets for neural networks?

-Normalization is important because it standardizes the range and scale of data points, ensuring that no particular feature dominates the learning process solely due to its larger magnitude.
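A minimal sketch of one common normalization scheme, z-score standardization, follows. It uses only Python's standard library. The episode may use a different scheme (min-max scaling is also common), and the helper names and house data are hypothetical. The sketch also assumes a widely used convention: the mean and standard deviation are computed on the training set only and then reused for validation and test data.

```python
import statistics


def fit_standardizer(rows):
    """Compute per-feature mean and standard deviation from the training rows."""
    columns = list(zip(*rows))
    means = [statistics.fmean(col) for col in columns]
    # "or 1.0" avoids dividing by zero when a feature is constant
    stds = [statistics.pstdev(col) or 1.0 for col in columns]
    return means, stds


def standardize(rows, means, stds):
    """Rescale each feature to zero mean and unit variance using the given statistics."""
    return [[(x - m) / s for x, m, s in zip(row, means, stds)] for row in rows]


# Hypothetical houses: (area in square metres, number of rooms)
train = [[50.0, 2.0], [80.0, 3.0], [110.0, 4.0]]
means, stds = fit_standardizer(train)
scaled = standardize(train, means, stds)
print(scaled)
```

After scaling, both columns map to roughly -1.22, 0 and 1.22. The area feature therefore no longer outweighs the room count just because its raw numbers are larger.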

What are the three distinct subsets that a dataset is typically partitioned into during model development and evaluation?

-The three subsets are the training set, the validation set, and the testing set. They are used, respectively, to learn the weights and biases, to tune hyperparameters, and to provide an unbiased evaluation of the model's performance.
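The split itself can be sketched in a few lines of Python. The helper name is hypothetical, and the 70/15/15 ratio and fixed shuffle seed are illustrative choices, not values taken from the episode.

```python
import random


def train_val_test_split(samples, val_frac=0.15, test_frac=0.15, seed=0):
    """Shuffle samples, then cut them into training, validation and test subsets.

    Hypothetical helper; the default fractions are illustrative only.
    """
    shuffled = list(samples)
    random.Random(seed).shuffle(shuffled)  # shuffle a copy so the input order is preserved
    n = len(shuffled)
    n_test = round(n * test_frac)
    n_val = round(n * val_frac)
    test = shuffled[:n_test]
    val = shuffled[n_test:n_test + n_val]
    train = shuffled[n_test + n_val:]
    return train, val, test


train, val, test = train_val_test_split(range(100))
print(len(train), len(val), len(test))  # 70 15 15
```

Shuffling before cutting helps ensure that each subset is representative of the whole dataset, for example when the original file is sorted by label.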

How does a loss function help in training a neural network?

-A loss function quantifies the divergence between the network's output and the target output, acting as a guide for how well the network is performing and what adjustments need to be made.
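The loss functions named in this episode can be sketched in plain Python as below. These are the standard textbook definitions, not the episode's code. The cross-entropy version assumes that the network already outputs a probability vector (for example after a softmax) and that the target is one-hot encoded.

```python
import math


def mean_absolute_error(outputs, targets):
    """L1 loss: average absolute difference between outputs and targets."""
    return sum(abs(o - t) for o, t in zip(outputs, targets)) / len(targets)


def mean_squared_error(outputs, targets):
    """L2 loss: average squared difference; penalises large errors more heavily."""
    return sum((o - t) ** 2 for o, t in zip(outputs, targets)) / len(targets)


def cross_entropy(probabilities, one_hot_target):
    """Cross-entropy for one sample: minus the log-probability given to the true class."""
    eps = 1e-12  # guards against log(0)
    return -sum(t * math.log(p + eps) for p, t in zip(probabilities, one_hot_target))


# Regression: hypothetical predicted vs. actual house prices (in thousands)
print(mean_absolute_error([210.0, 305.0], [200.0, 300.0]))  # 7.5
print(mean_squared_error([210.0, 305.0], [200.0, 300.0]))   # 62.5

# Classification: a confident correct prediction costs less than an unsure one
print(cross_entropy([0.9, 0.05, 0.05], [1, 0, 0]))  # about 0.105
print(cross_entropy([0.4, 0.3, 0.3], [1, 0, 0]))    # about 0.916
```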

What is gradient descent and why is it used in training neural networks?

-Gradient descent is an optimization approach that adjusts the network's parameters in small steps, each in the direction opposite to the gradient of the loss function, so that the loss decreases. It is used because a non-linear network has no closed-form formula for the best parameters, while the gradient can be computed efficiently even for networks with many parameters.
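In concrete terms, each step replaces every parameter theta with theta - lr * dL/dtheta, where lr is the learning rate. The sketch below applies this rule to a hypothetical one-feature linear model with MSE loss. This model was chosen because its gradients fit in two lines. An MLP uses the same update, with the gradients obtained from the backward pass instead. The learning rate and number of steps are illustrative.

```python
def gradient_descent_linear(xs, ys, lr=0.05, steps=2000):
    """Fit y ~ w*x + b by repeatedly stepping against the gradient of the MSE loss."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(steps):
        # Gradients of L = (1/n) * sum((w*x + b - y)^2) with respect to w and b
        errors = [w * x + b - y for x, y in zip(xs, ys)]
        grad_w = 2.0 / n * sum(e * x for e, x in zip(errors, xs))
        grad_b = 2.0 / n * sum(errors)
        # Move opposite to the gradient, scaled by the learning rate
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


# Hypothetical data generated from y = 3x + 1
xs = [0.0, 1.0, 2.0, 3.0]
ys = [1.0, 4.0, 7.0, 10.0]
w, b = gradient_descent_linear(xs, ys)
print(round(w, 3), round(b, 3))  # 3.0 1.0
```

If the learning rate is too large, the steps overshoot and the loss can diverge. If it is too small, convergence becomes very slow.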

How is the gradient computed in the context of gradient descent?

-The gradient is computed by building a computation graph that tracks all operations in the forward pass, and then applying the calculus chain rule during the backward pass to find the derivatives of the loss with respect to each parameter.
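A computation graph can be illustrated with a tiny scalar autograd sketch, in the spirit of small educational autograd engines. This is not the episode's code, and the `Value` class is hypothetical. During the forward pass, each operation records its inputs together with a local derivative rule. `backward()` then visits the nodes in reverse topological order and accumulates gradients using the chain rule.

```python
import math


class Value:
    """A scalar node in a computation graph (hypothetical minimal autograd)."""

    def __init__(self, data, parents=()):
        self.data = data
        self.grad = 0.0                # dLoss/dself, filled in by backward()
        self.parents = parents         # nodes this value was computed from
        self._backward = lambda: None  # local chain-rule step, set by each operation

    def __add__(self, other):
        out = Value(self.data + other.data, (self, other))

        def _backward():
            # d(a + b)/da = 1 and d(a + b)/db = 1
            self.grad += out.grad
            other.grad += out.grad

        out._backward = _backward
        return out

    def __mul__(self, other):
        out = Value(self.data * other.data, (self, other))

        def _backward():
            # d(a * b)/da = b and d(a * b)/db = a
            self.grad += other.data * out.grad
            other.grad += self.data * out.grad

        out._backward = _backward
        return out

    def tanh(self):
        t = math.tanh(self.data)
        out = Value(t, (self,))

        def _backward():
            # d tanh(x)/dx = 1 - tanh(x)^2
            self.grad += (1 - t * t) * out.grad

        out._backward = _backward
        return out

    def backward(self):
        """Backward pass: apply the chain rule from this node back to every input."""
        order, seen = [], set()

        def visit(node):
            if node not in seen:
                seen.add(node)
                for parent in node.parents:
                    visit(parent)
                order.append(node)

        visit(self)
        self.grad = 1.0  # dLoss/dLoss
        for node in reversed(order):
            node._backward()


# Forward pass for one neuron y = tanh(w*x + b), squared error against a target of 0.5
x, w, b = Value(2.0), Value(0.3), Value(-0.1)
y = (w * x + b).tanh()
diff = y + Value(-0.5)
loss = diff * diff
loss.backward()
print(w.grad, b.grad)  # roughly -0.1192 -0.0596
```

Gradients are accumulated with `+=` because a node can feed several later operations. Here, `diff` is used twice in `diff * diff`.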

What is the purpose of the backward pass in the context of training a neural network?

-The backward pass applies the chain rule of calculus to compute the derivatives of the loss with respect to each parameter. These derivatives are then used to update the network's weights and biases in a way that reduces the loss.
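Putting the pieces together, the following plain-Python sketch trains a tiny MLP with a hand-written backward pass and full-batch gradient descent. The network has one input, one tanh hidden layer and one linear output. The architecture, learning rate, epoch count and target function y = x^2 are all hypothetical choices for illustration, not the episode's setup.

```python
import math
import random


def train_tiny_mlp(xs, ys, hidden=3, lr=0.1, epochs=500, seed=0):
    """Train a 1-input, 1-output MLP (one tanh hidden layer) with manual backprop.

    Hypothetical setup for illustration; returns the loss recorded at each epoch.
    """
    rng = random.Random(seed)
    w1 = [rng.uniform(-1, 1) for _ in range(hidden)]  # input -> hidden weights
    b1 = [0.0] * hidden                                # hidden biases
    w2 = [rng.uniform(-1, 1) for _ in range(hidden)]  # hidden -> output weights
    b2 = 0.0                                           # output bias
    n = len(xs)
    history = []
    for _ in range(epochs):
        gw1, gb1, gw2, gb2 = [0.0] * hidden, [0.0] * hidden, [0.0] * hidden, 0.0
        loss = 0.0
        for x, y in zip(xs, ys):
            # Forward pass
            h = [math.tanh(w1[j] * x + b1[j]) for j in range(hidden)]
            y_hat = sum(w2[j] * h[j] for j in range(hidden)) + b2
            loss += (y_hat - y) ** 2 / n  # MSE loss
            # Backward pass: chain rule from the loss back to each parameter
            d_yhat = 2.0 * (y_hat - y) / n
            gb2 += d_yhat
            for j in range(hidden):
                gw2[j] += d_yhat * h[j]
                d_pre = d_yhat * w2[j] * (1 - h[j] ** 2)  # back through tanh
                gw1[j] += d_pre * x
                gb1[j] += d_pre
        history.append(loss)
        # Gradient-descent update of every weight and bias
        for j in range(hidden):
            w1[j] -= lr * gw1[j]
            b1[j] -= lr * gb1[j]
            w2[j] -= lr * gw2[j]
        b2 -= lr * gb2
    return history


xs = [-1.0, -0.5, 0.0, 0.5, 1.0]
ys = [x * x for x in xs]  # hypothetical target function y = x^2
history = train_tiny_mlp(xs, ys)
print(history[0], history[-1])  # loss before vs. after training
```

Frameworks build the computation graph automatically and derive these gradient expressions for you. Writing them out by hand mainly shows what the backward pass computes.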

Mejorar ahoraVer Más Videos Relacionados

Solved Example Multi-Layer Perceptron Learning | Back Propagation Solved Example by Mahesh Huddar

Feedforward and Feedback Artificial Neural Networks

Building makemore Part 5: Building a WaveNet

Training Neural Networks on GPU vs CPU | Performance Test

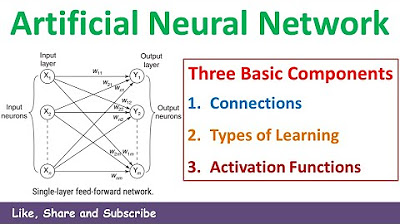

2. Three Basic Components or Entities of Artificial Neural Network Introduction | Soft Computing

Feedforward Neural Networks and Backpropagation - Part 1

5.0 / 5 (0 votes)