Eval Comparisons | LangSmith Evaluations - Part 7

Summary

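TL;DR: This video explains why evals are important and interesting, and recaps the topics covered so far in the series: LangSmith primitives, manually curated datasets, building datasets from user logs, evaluating against a dataset, using the built-in LangChain evaluators, and building custom evaluators. Focusing on a practical use case, it compares Mistral 7B running locally with GPT-3.5 Turbo on both quality and performance. It also touches on the usefulness of A/B testing and the flexibility of LangChain.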

Takeaways

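- 📚 This is the seventh video in the series; it opens by recalling why evals are important and interesting.

- 🔍 Earlier videos covered LangSmith primitives and manually curated datasets.

- 💻 This installment focuses on a practical use case and need: comparing how Mistral 7B performs when running locally.

- 📈 It shows how to use a built-in LangChain evaluator to evaluate against a dataset.

- 🔧 It also covers how to build a custom evaluator, and runs an A/B test on a real dataset.

- 🖥️ The video compares the performance of Mistral 7B running locally with that of OpenAI.

- 📊 The A/B test shows OpenAI ahead on both score and latency, and the video also analyzes the quality of the Mistral 7B answers in detail.

- 🔎 It walks through comparing individual answers side by side to check which one is correct.

- 🛠️ It revisits the ways to create a dataset, including building one from user logs and manual curation.

- 📝 Evaluators and A/B testing are explained in detail, showing the flexibility to compare a variety of parameters.

- 🚀 This video closes out the introductory part of the series: it recaps the introductory concepts and indicates that later videos will go deeper.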

Q & A

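Why are evals important?

- Evals are essential for measuring how well a language model performs. With an appropriate evaluation method, you can accurately assess how the model behaves in real-world use.

What are LangSmith primitives?

- LangSmith primitives are the basic tools and features used when developing and evaluating language models. They include creating datasets, defining the task, and choosing an evaluation method.

How do you create a manually curated dataset?

- To create a manually curated dataset, you draw on expert knowledge and experience to select data relevant to a specific task and organize it into a suitable format. The process includes collecting, categorizing, and annotating the data.

How do you create a dataset from user logs?

- To create a dataset from user logs, you record how the application is actually used and extract the users' questions and requests. From these you create question-and-answer pairs and build a dataset that can be used to train or evaluate a model.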
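
The log-to-dataset step described above can be sketched in plain Python. This is a minimal illustration, not the LangSmith API: the log record shape (`question` and `answer` keys), the `build_examples` helper, and the `inputs`/`outputs` layout of each example are all assumptions made for this example.

```python
from typing import Iterable


def build_examples(logs: Iterable[dict]) -> list[dict]:
    """Turn raw log records into deduplicated question/answer examples."""
    seen = set()
    examples = []
    for record in logs:
        # Hypothetical record shape: {"question": ..., "answer": ...}
        question = (record.get("question") or "").strip()
        answer = (record.get("answer") or "").strip()
        # Skip records that cannot form a complete pair.
        if not question or not answer:
            continue
        # Deduplicate on a case-insensitive form of the question.
        key = question.lower()
        if key in seen:
            continue
        seen.add(key)
        examples.append(
            {"inputs": {"question": question}, "outputs": {"answer": answer}}
        )
    return examples


# Made-up log records, for illustration only.
logs = [
    {"question": "What is an eval?", "answer": "A test of model output quality."},
    {"question": "what is an eval?", "answer": "Duplicate question."},
    {"question": "  ", "answer": "No question recorded."},
    {"question": "What is a dataset?", "answer": None},
]
examples = build_examples(logs)
```

In practice the filtered pairs would still need a manual review pass before being used as reference answers, since logged answers are not guaranteed to be correct.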

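What are the evaluators mentioned as the way to evaluate against a dataset?

- Evaluators are tools that score a model's outputs against a dataset. Different kinds of evaluators let you quantify things such as the rate of correct answers or the quality of the model's responses.

What does a LangChain evaluator do?

- A LangChain evaluator is a tool for automatically grading a language model's responses. Given pairs of questions and reference answers, it judges how accurate the model's response is.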
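
To make the idea of grading a response against a reference answer concrete, here is a minimal sketch of an evaluator as a plain function. It uses normalized exact match as a stand-in; it is not the built-in LangChain evaluator, and the function names and the `key`/`score` result shape are assumptions for illustration.

```python
import string


def normalize(text: str) -> str:
    """Lowercase, drop ASCII punctuation, and collapse whitespace."""
    table = str.maketrans("", "", string.punctuation)
    return " ".join(text.lower().translate(table).split())


def exact_match_evaluator(prediction: str, reference: str) -> dict:
    """Score 1 if the normalized prediction equals the reference, else 0."""
    score = int(normalize(prediction) == normalize(reference))
    return {"key": "exact_match", "score": score}


result = exact_match_evaluator("  Paris. ", "paris")
print(result)
```

Exact match is only suitable for short, closed-form answers; free-form answers usually need a more tolerant grader, which is the kind of judgment a model-based evaluator is meant to provide.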

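What is A/B testing?

- A/B testing is a method for comparing different versions of a product or service. Here it is used to compare different language models, prompts, and so on, and to assess which produces better results.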
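
An A/B comparison of this kind can be sketched as running two candidates over the same dataset and recording a score and a latency for each. The sketch below is an assumption-laden illustration: `model_a` and `model_b` are hypothetical stand-in functions rather than real model calls, the dataset is made up, and the scoring is a simple case-insensitive match.

```python
import time
from statistics import mean
from typing import Callable


def run_experiment(model: Callable[[str], str], dataset: list[dict]) -> dict:
    """Run one candidate over every example and aggregate score and latency."""
    scores, latencies = [], []
    for example in dataset:
        start = time.perf_counter()
        prediction = model(example["question"])
        latencies.append(time.perf_counter() - start)
        # Simple stand-in grader: case-insensitive match with the reference.
        correct = prediction.strip().lower() == example["answer"].strip().lower()
        scores.append(int(correct))
    return {"mean_score": mean(scores), "mean_latency_s": mean(latencies)}


# Made-up dataset shared by both candidates.
dataset = [
    {"question": "2 + 2", "answer": "4"},
    {"question": "capital of France", "answer": "Paris"},
]


def model_a(question: str) -> str:
    """Hypothetical candidate A: answers both questions."""
    return {"2 + 2": "4", "capital of France": "Paris"}.get(question, "")


def model_b(question: str) -> str:
    """Hypothetical candidate B: answers only one question."""
    return {"2 + 2": "4"}.get(question, "")


results = {
    name: run_experiment(fn, dataset)
    for name, fn in [("A", model_a), ("B", model_b)]
}
for name, summary in results.items():
    print(name, summary)
```

Holding the dataset and the grader fixed is the design point: only the candidate changes between runs, so differences in score and latency can be attributed to it.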

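What does running Mistral 7B locally involve?

- Running Mistral 7B locally requires suitable hardware and software setup. In this case the model runs on an M2 Mac with 32 GB of RAM; the OpenAI API is used only for the GPT-3.5 Turbo side of the comparison.

How did Mistral 7B compare with GPT-3.5 Turbo?

- In the comparison, GPT-3.5 Turbo, accessed through the OpenAI API, came out ahead on both score and latency, but Mistral 7B still produced answers of adequate quality while running locally.

What are the benefits of A/B testing?

- The benefit of A/B testing is that it lets you directly compare the effect of different models or prompts. That makes the best configuration and the direction for improvement clear, which in turn improves the quality of the product or service.

What is the purpose of this video?

- The purpose of this video is to explain how language models are evaluated and why A/B testing matters, and to make these concepts easier to understand through a practical example.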

Related Videos

Custom Evaluators | LangSmith Evaluations - Part 6

Evaluation Primitives | LangSmith Evaluations - Part 2

Manually Curated Datasets | LangSmith Evaluations - Part 3

Pre-Built Evaluators | LangSmith Evaluations - Part 5

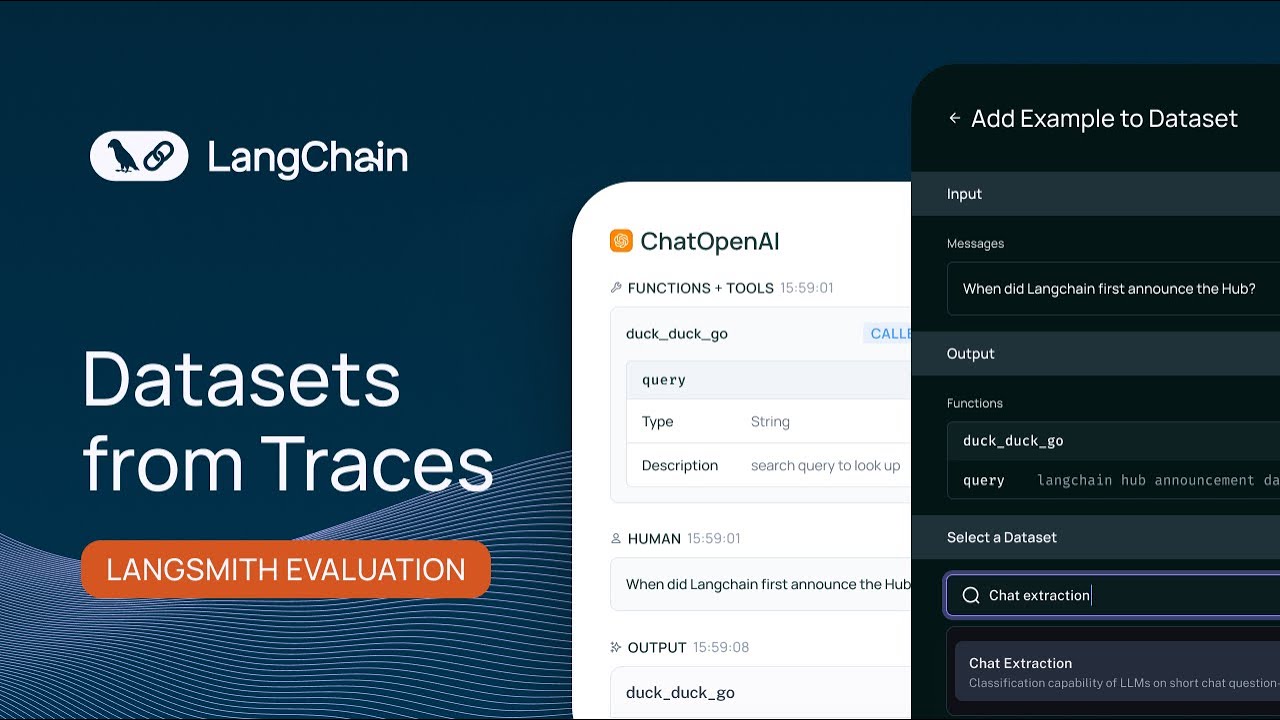

Datasets From Traces | LangSmith Evaluations - Part 4

Why Evals Matter | LangSmith Evaluations - Part 1
