#JensenHuang makes a surprise appearance at #Supermicro #CharlesLiang's #COMPUTEX keynote | Full highlights

Summary

TL;DR: In this keynote, Nvidia CEO Jensen Huang discusses the transformative impact of AI and accelerated computing on data centers. Highlighting the convergence of efficiency and performance, he introduces the concept of 'Green Computing' and the emergence of generative AI. Huang emphasizes the need to modernize data centers to harness these technologies, which are projected to grow into a $3 trillion industry by 2030. He also unveils new products, including advanced liquid cooling systems, designed to optimize energy consumption and computational throughput and so drive revenue in what he calls 'AI factories.' The talk underscores the importance of safety, technology, and policy advancements in AI.

Takeaways

- 🧠 AI is revolutionizing computing with the advent of accelerated computing and Green Computing, focusing on energy efficiency.

- 📈 The demand for AI is soaring, with data centers needing to be modernized to handle the transition to generative AI, which is expected to impact every data center globally.

- 💡 Green Computing is not just an environmentally friendly approach but also a cost-efficient one, aiming to reduce waste and energy consumption in data centers.

- 🚀 Nvidia is at the forefront of this change, with a significant number of new products and technologies aimed at accelerating data centers.

- 💧 Nvidia is also innovating in cooling technologies, such as direct liquid cooling (DLC), to lower power consumption and enable more AI chips to be manufactured.

- 🔢 The scale of operations is massive, with Nvidia shipping thousands of units per month and aiming to increase this number significantly in the near future.

- 🔑 The importance of software cannot be overstated, as it plays a crucial role in the performance and efficiency of high-performance computing systems.

- 🌐 Networking is evolving into a computing fabric, facilitating distributed computing and the efficient distribution of workloads across networks.

- 🔄 Checkpoint restart is a vital feature for high uptime and utilization in AI systems, and new technologies like Grace CPU are designed with this in mind.

- 🛡️ Safety is a paramount concern in AI, with the need for guardrails, monitoring systems, and good practices to ensure the responsible advancement of AI technology.

- 🌟 The future of AI is bright, with the potential for significant revenue generation through the creation and utilization of intelligent tokens across various industries.

Q & A

What is the significance of the term 'Green Computing' as mentioned in the transcript?

- In the transcript, 'Green Computing' refers to energy-efficient computing: making data centers more efficient and reducing wasted energy and cost. The speaker presents it as a key focus for Nvidia and for the future of computing.

What is the current state of CPU scaling according to the transcript?

- The transcript says that CPU scaling has slowed for many years, leaving an enormous amount of wasted energy and cost trapped inside data centers.

What is the role of accelerated computing in the context of data centers?

- Accelerated computing makes data centers more efficient. It releases the trapped waste so that the energy can be put to new uses, such as accelerating every application and every data center.

What does the term 'generative AI' refer to in the transcript?

- In the transcript, generative AI refers to AI that generates new content such as text, images, and video. The speaker describes it as a significant shift in computing that will affect every data center globally.

How does the speaker describe the impact of generative AI on data centers?

- The speaker suggests that the transition to generative AI will affect every data center in the world, requiring the modernization of the trillion dollars' worth of data centers already established.

What is the significance of the number '1,000 R' mentioned in the transcript?

- The number '1,000 R' refers to shipping 1,000 units of a certain product per month, part of Nvidia's efforts to lower power consumption and enable the manufacturing of more AI chips.

How does direct liquid cooling (DLC) contribute to AI chip manufacturing according to the transcript?

- Direct liquid cooling (DLC) is shipping in production to lower power consumption, which allows more AI chips to be manufactured and improves the efficiency and performance of data centers.

What is the goal for the year mentioned by the speaker in relation to shipping?

- The goal for the year, as stated in the transcript, is to ship more than 10,000 units, a significant increase in production and shipping targets.

What does the speaker mean by 'AI factories'?

- The term 'AI factories' refers to data centers that directly generate revenue by using AI technologies to create value through processes like token generation.

How does the speaker view the future of computing throughput and its relation to revenue?

- The speaker views computing throughput as directly tied to revenue generation. The faster tokens (which embed intelligence) are generated, the higher the throughput and utilization, and consequently the revenue.
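The link between throughput and revenue described above can be sketched as simple arithmetic. This is an illustrative model only, not a formula from the talk: the function name, the pricing model (a flat price per million tokens), and every number below are hypothetical assumptions.

```python
def token_revenue_per_hour(tokens_per_second: float,
                           utilization: float,
                           price_per_million_tokens: float) -> float:
    """Estimate hourly revenue for a system that sells generated tokens.

    All inputs are hypothetical; the talk gives no concrete figures.
    """
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    # Tokens actually produced in one hour, scaled by how busy the system is.
    tokens_per_hour = tokens_per_second * utilization * 3600
    # Revenue under an assumed flat price per million tokens.
    return tokens_per_hour / 1_000_000 * price_per_million_tokens


# Hypothetical comparison: doubling throughput at the same utilization and price.
baseline = token_revenue_per_hour(10_000, 0.5, 2.0)
faster = token_revenue_per_hour(20_000, 0.5, 2.0)
print(baseline, faster)
```

Under these assumptions revenue scales linearly with both throughput and utilization, which is the relationship the answer describes.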

What is the importance of software compatibility in high-performance computing as discussed in the transcript?

- Software compatibility is crucial in high-performance computing because it lets systems integrate seamlessly and ensures that all components work together, which is vital for performance and efficiency in data centers.

What are the three important software stacks mentioned in the transcript?

- The three important software stacks mentioned in the transcript are CUDA, known for parallel computing; a networking stack for creating a computing fabric; and DOCA, used for distributing workloads efficiently across the network.

How does the speaker emphasize the importance of safety in AI?

- The speaker compares AI safety to autopilot in airplanes: just as many technologies and practices were needed to keep autopilot safe, AI will need similar measures, including guardrails, monitoring systems, and good policies.

Outlines

هذا القسم متوفر فقط للمشتركين. يرجى الترقية للوصول إلى هذه الميزة.

قم بالترقية الآنMindmap

هذا القسم متوفر فقط للمشتركين. يرجى الترقية للوصول إلى هذه الميزة.

قم بالترقية الآنKeywords

هذا القسم متوفر فقط للمشتركين. يرجى الترقية للوصول إلى هذه الميزة.

قم بالترقية الآنHighlights

هذا القسم متوفر فقط للمشتركين. يرجى الترقية للوصول إلى هذه الميزة.

قم بالترقية الآنTranscripts

هذا القسم متوفر فقط للمشتركين. يرجى الترقية للوصول إلى هذه الميزة.

قم بالترقية الآنتصفح المزيد من مقاطع الفيديو ذات الصلة

NVIDIA's CEO Just Confirmed: AI is 100X Bigger Than We Thought ($100T)

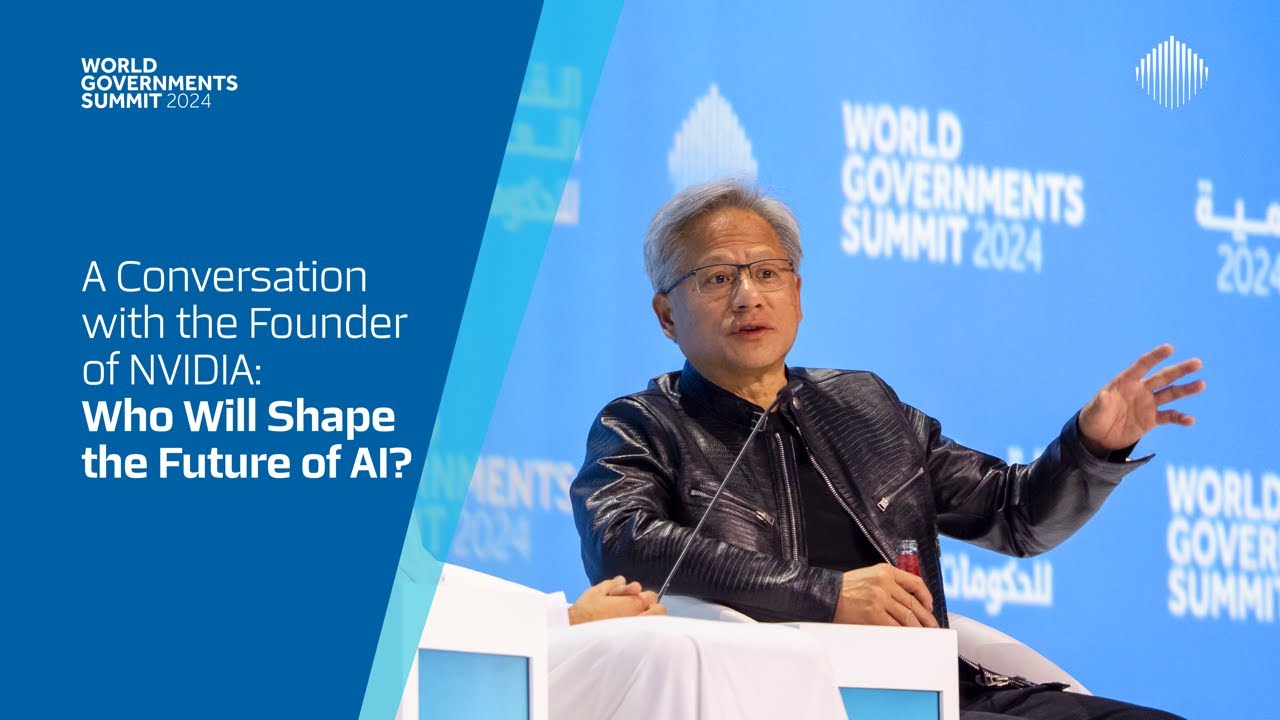

A Conversation with the Jensen Huang of Nvidia: Who Will Shape the Future of AI? (Full Interview)

A Conversation with the Founder of NVIDIA: Who Will Shape the Future of AI?

I Was The FIRST To Game On The RTX 5090 - NVIDIA 50 Series Announcement

NVIDIA CEO Jensen Huang Reveals Keys to AI, Leadership

Jensen Huang of Nvidia on the Future of A.I. | DealBook Summit 2023

5.0 / 5 (0 votes)