The Remarkable Story Behind The Most Important Algorithm Of All Time

Summary

TLDR: The script explores the significance of the Fast Fourier Transform (FFT), a pivotal signal-processing algorithm used in technologies ranging from WiFi to nuclear test detection. It traces the history of nuclear arms control efforts and how the FFT could have influenced them. The video also covers the discovery and applications of the FFT across many fields, emphasizing its importance in modern society and the potential impact of career choices on global issues.

Takeaways

- 🌟 The Fast Fourier Transform (FFT) is a crucial algorithm used in various applications including signal processing, radar, sonar, 5G, and WiFi.

- 🔍 The FFT's significance extends to arms control: it was instrumental in detecting covert nuclear weapons tests and could potentially have helped curb the nuclear arms race.

- 💡 The algorithm was rediscovered by scientists working to detect nuclear tests; had it been discovered earlier, it could have changed the course of nuclear disarmament.

- 🕊️ After World War II, the U.S. proposed the Baruch Plan for international control of nuclear materials, but the Soviets rejected it, and the nuclear arms race followed.

- ⏳ The Partial Test Ban Treaty of 1963 banned nuclear tests in the atmosphere, underwater, and space, but not underground, due to difficulties in verifying compliance.

- 🌐 The challenge in detecting underground nuclear tests lies in distinguishing between seismic activity from natural earthquakes and man-made explosions.

- 📊 A Fourier transform decomposes a signal into its constituent frequencies, aiding in the analysis of seismometer signals to identify nuclear tests.

- 🔢 The Fast Fourier Transform reduces the cost of transforming N samples from about N² operations to about N log N, making it feasible to process large datasets quickly.

- 📚 Carl Friedrich Gauss discovered a form of the FFT in 1805, but it was not published in a widely accessible form, so its impact was delayed until the 20th century.

- 🛰️ The FFT is fundamental to modern signal processing, including image and audio compression, and mathematician Gilbert Strang has called it the most important numerical algorithm of our lifetime.

- 🚀 The video also promotes the nonprofit 80,000 Hours, which helps individuals find careers that can make a significant positive impact on pressing global issues.
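
The bullets above on decomposing a signal into its frequencies and on the N² versus N log N cost can be made concrete in a few lines. This is a sketch under stated assumptions: plain Python with only the standard library, an invented 64-sample test signal, and a hypothetical `dft` helper written straight from the textbook definition, so it is the slow direct method rather than an FFT.

```python
import cmath
import math


def dft(x):
    """Direct DFT from the definition: X[k] = sum over n of x[n] * exp(-2*pi*i*k*n/N).

    The two nested loops over N samples make the cost grow as N**2.
    """
    n_samples = len(x)
    return [
        sum(x[n] * cmath.exp(-2j * math.pi * k * n / n_samples) for n in range(n_samples))
        for k in range(n_samples)
    ]


# Synthetic test signal (an assumption): 5 cycles of a sine plus a weaker
# 12-cycle sine, sampled 64 times.
N = 64
signal = [
    math.sin(2 * math.pi * 5 * n / N) + 0.5 * math.sin(2 * math.pi * 12 * n / N)
    for n in range(N)
]

spectrum = dft(signal)
# For a real-valued signal, bin N - k mirrors bin k, so only the first half is inspected.
magnitudes = [abs(c) for c in spectrum[: N // 2]]
peaks = sorted(sorted(range(N // 2), key=lambda k: magnitudes[k], reverse=True)[:2])
print(peaks)  # -> [5, 12]

# Rough operation counts: direct DFT (N**2) versus FFT (N * log2 N).
for size in (1024, 2**20):
    print(size, size**2, round(size * math.log2(size)))
```

The two largest peaks land at bins 5 and 12, the two frequencies mixed into the signal. For N = 2^20 (about a million samples) the direct method needs on the order of 1.1 × 10^12 operations versus about 2.1 × 10^7 for an FFT, a gap of roughly 50,000×.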

Q & A

What is the Fast Fourier Transform (FFT)?

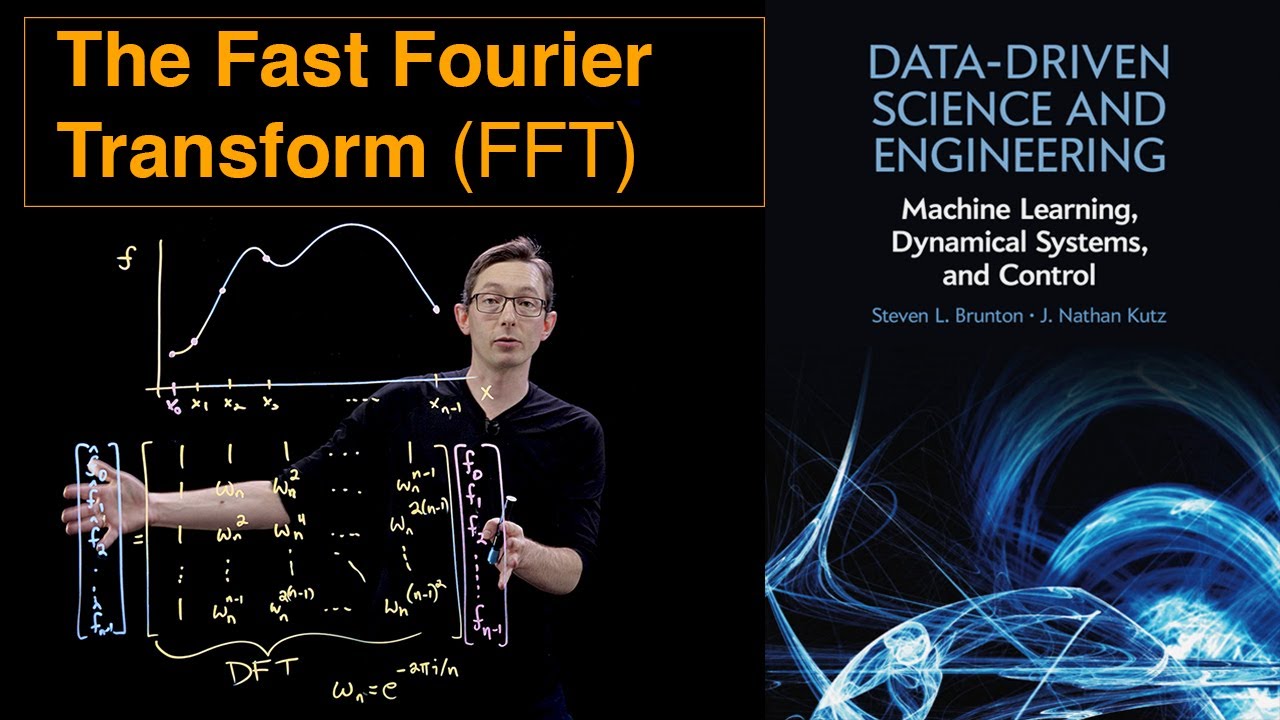

-The Fast Fourier Transform (FFT) is an algorithm that efficiently computes the Discrete Fourier Transform (DFT) of a sequence and its inverse. It breaks down a signal into its constituent frequencies, simplifying the analysis and processing of various signals, and is widely used in signal processing, including audio, image, and data compression.
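
The answer above describes the FFT as an efficient way to compute the DFT and its inverse. Below is a minimal sketch of the classic radix-2 Cooley-Tukey recursion, assuming the input length is a power of two (general-purpose libraries also handle other lengths). The names `fft` and `ifft` are illustrative, not any particular library's API; the inverse reuses the forward transform through the conjugation identity.

```python
import cmath


def fft(x):
    """Recursive radix-2 Cooley-Tukey FFT; assumes len(x) is a power of two."""
    n = len(x)
    if n == 0 or n & (n - 1):
        raise ValueError("input length must be a power of two")
    if n == 1:
        return [complex(x[0])]
    even = fft(x[0::2])  # DFT of the even-indexed samples
    odd = fft(x[1::2])   # DFT of the odd-indexed samples
    out = [0j] * n
    for k in range(n // 2):
        # Combine the two half-size DFTs with the twiddle factor exp(-2*pi*i*k/n).
        t = cmath.exp(-2j * cmath.pi * k / n) * odd[k]
        out[k] = even[k] + t
        out[k + n // 2] = even[k] - t
    return out


def ifft(spectrum):
    """Inverse FFT via the identity ifft(X) = conj(fft(conj(X))) / N."""
    n = len(spectrum)
    return [v.conjugate() / n for v in fft([complex(c).conjugate() for c in spectrum])]


samples = [1.0, 2.0, 0.0, -1.0, 3.0, 0.5, -2.0, 1.5]
spectrum = fft(samples)
recovered = ifft(spectrum)
print(abs(spectrum[0]))  # bin 0 is the sum of the samples: 5.0
print(max(abs(r - s) for r, s in zip(recovered, samples)))  # near zero (round-off only)
```

Each level of the recursion does O(N) work combining half-size results, and repeatedly halving the length gives log2 N levels, which is where the N log N cost comes from.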

Why is the FFT considered so important?

-The FFT is considered important because it is foundational in many areas of technology and science, including signal processing, radar, sonar, 5G, WiFi, and even in the detection of nuclear tests. Its efficiency in handling large amounts of data has made it a critical tool for modern computing and communication systems.

What was the Baruch plan and why was it significant?

-The Baruch Plan was a proposal the United States made after World War II: it would decommission all of its nuclear weapons if other nations pledged never to build them, with nuclear materials placed under international control. It aimed to prevent the proliferation of nuclear weapons and was significant because it showed U.S. willingness to engage in disarmament, although the Soviets ultimately rejected it.

How did the nuclear arms race begin?

-The nuclear arms race began after the Soviet Union rejected the Baruch Plan, which the Soviets saw as an attempt to preserve American dominance in nuclear weapons. Both superpowers then developed and tested new nuclear weapons, escalating the race.

What was the purpose of the 1958 Geneva Conference on the Discontinuance of Nuclear Weapon Tests?

-The purpose of the 1958 Geneva Conference was to negotiate a comprehensive test ban that would stop every state from testing nuclear weapons, as a way to curb the spread of nuclear weapons and the risks that come with it.

Why was it difficult to detect underground nuclear tests?

-Underground nuclear tests are difficult to detect because their radiation is mostly contained within the earth, and the seismic waves they produce resemble those of natural earthquakes. In addition, the Soviets refused on-site inspections, making it hard to verify compliance with a test ban.

What role did the FFT play in the detection of covert nuclear tests?

-The FFT played a crucial role in analyzing seismometer signals to distinguish between natural earthquakes and underground nuclear tests. By decomposing the signals into their frequency components, scientists could identify the unique signatures of nuclear explosions.
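
The summary does not say which frequency features were actually used to tell explosions from earthquakes, so the sketch below makes no claim about real seismic discriminants. It is a hypothetical toy showing only the general idea: once two traces are in the frequency domain, a difference in where their energy sits becomes a single number that can be compared. The traces, the `high_band_fraction` helper, and the cutoff are all invented for illustration, and a slow direct DFT stands in for an FFT because only the coefficients matter here.

```python
import cmath
import math


def dft(x):
    """Direct DFT from the definition (O(N**2)); adequate for a short toy trace."""
    n = len(x)
    return [sum(x[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n)) for k in range(n)]


def high_band_fraction(trace, cutoff_bin):
    """Hypothetical helper: share of spectral energy in bins >= cutoff_bin.

    Only bins 1 .. N/2 - 1 are used: bin 0 is the constant offset, and for a
    real-valued trace the upper half of the spectrum mirrors the lower half.
    """
    spectrum = dft(trace)
    power = {k: abs(spectrum[k]) ** 2 for k in range(1, len(trace) // 2)}
    total = sum(power.values())
    return sum(p for k, p in power.items() if k >= cutoff_bin) / total


# Two synthetic 64-sample traces (pure assumptions, not real seismograms):
# one dominated by a slow 3-cycle oscillation, one by a fast 20-cycle one.
N = 64
slow = [math.sin(2 * math.pi * 3 * t / N) + 0.2 * math.sin(2 * math.pi * 20 * t / N) for t in range(N)]
fast = [0.2 * math.sin(2 * math.pi * 3 * t / N) + math.sin(2 * math.pi * 20 * t / N) for t in range(N)]

CUTOFF = 10  # arbitrary illustrative boundary between "low" and "high" bins
print(round(high_band_fraction(slow, CUTOFF), 3))  # about 0.038
print(round(high_band_fraction(fast, CUTOFF), 3))  # about 0.962
```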

Who discovered the Fast Fourier Transform and when was it rediscovered?

-A form of the Fast Fourier Transform was first discovered by Carl Friedrich Gauss in 1805, but it went largely unnoticed because it was published only after his death, in nonstandard notation. It was rediscovered in the 20th century by James Cooley and John Tukey, whose 1965 publication brought the algorithm into widespread use.

How did the FFT contribute to the field of image compression?

-The FFT contributes to image compression by transforming images into the frequency domain. In most natural images, much of the information sits in the low frequencies, and many high-frequency components are close to zero. Discarding these small high-frequency components and inverting the transform yields a compressed version of the image with little visible loss of quality.
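
The compression idea in the answer above can be tried on a single row of pixels. This is a hypothetical toy rather than how real codecs work (JPEG, for example, applies the closely related discrete cosine transform to 8×8 pixel blocks). The synthetic row, the `CUTOFF` of 8, and the `dft` helper are assumptions; a slow direct DFT stands in for an FFT because both produce the same coefficients.

```python
import cmath
import math


def dft(x, inverse=False):
    """Direct DFT, or inverse DFT when inverse=True; O(N**2), fine for a toy."""
    n = len(x)
    sign = 1 if inverse else -1
    out = [
        sum(x[t] * cmath.exp(sign * 2j * math.pi * k * t / n) for t in range(n))
        for k in range(n)
    ]
    return [v / n for v in out] if inverse else out


# Hypothetical smooth 1-D "image row": gentle cosine shading plus a soft bright blob.
N = 64
row = [
    0.5 + 0.3 * math.cos(2 * math.pi * n / N) + math.exp(-(((n - 32) / 4) ** 2))
    for n in range(N)
]

coeffs = dft(row)

# Low-pass "compression": bins k and N - k are the positive and negative versions
# of the same frequency, so keep every bin with min(k, N - k) <= CUTOFF, zero the rest.
CUTOFF = 8
kept = [c if min(k, N - k) <= CUTOFF else 0j for k, c in enumerate(coeffs)]
n_kept = sum(1 for k in range(N) if min(k, N - k) <= CUTOFF)

restored = [v.real for v in dft(kept, inverse=True)]
max_error = max(abs(a - b) for a, b in zip(row, restored))
print(f"kept {n_kept} of {N} coefficients, max pixel error {max_error:.4f}")
```

Here 17 of the 64 coefficients survive, and the reconstruction stays close to the original because the row is smooth; a row with sharp edges would lose more detail at the same cutoff.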

What is the significance of the 80,000 Hours organization mentioned in the script?

-80,000 Hours is a nonprofit organization that helps people find fulfilling careers that make a positive impact on the world. They provide resources such as research-backed guides, a job board, and a podcast to assist individuals in making career choices that align with their desire to do good.

