Building a Data Warehouse using Matillion ETL and Snowflake | Tutorial for beginners | Part 1

Summary

TLDR: This video provides a detailed walkthrough of setting up a data pipeline in Matillion ETL. It covers creating a project group, connecting to AWS, configuring an RDS database, and integrating Snowflake for data staging. The presenter walks through connecting to the various systems, setting up orchestration jobs, and resolving errors related to schema and database configuration. The video also introduces importing jobs and working with JSON files in Matillion, and includes a promotional segment about an exclusive Snowflake training program aimed at advancing users' careers in cloud data solutions.

Takeaways

- 😀 Project setup begins in Matillion ETL by creating a new project group with a unique project name and excluding sample data.

- 😀 AWS connection is configured by specifying the EC2 instance environment, connecting it to Snowflake using the account URL, username, and password.

- 😀 The process includes configuring RDS queries by selecting the appropriate database type (MySQL) and providing connection details (endpoint, username, password, etc.).

- 😀 Data is extracted from an RDS database, specifically targeting the 'airports' data, using a defined SQL query, and is staged in an S3 bucket.

- 😀 After configuring the AWS connection and data extraction parameters, a target table is defined in Snowflake to store the data.

- 😀 A schema must be defined in the environment setup to prevent errors during execution (e.g., 'public' schema in Snowflake).

- 😀 The Matillion orchestration job is run against the appropriate environment (e.g., AWS v2) so that the correct warehouse, database, and schema names are used.

- 😀 After completing the setup, the orchestration job runs successfully, loading 372 records into the 'training_airports' table in Snowflake.

- 😀 The setup process in Matillion ETL is intuitive, guiding the user through necessary configurations for a smooth data transfer workflow.

- 😀 Future stages involve importing Matillion jobs from JSON files to create and manage additional tables in Snowflake, providing an efficient way to scale data pipelines.

- 😀 The video also promotes an exclusive Snowflake Mastery Program, designed for those looking to master Snowflake and learn practical data solutions in the cloud.

Q & A

What is the first step in setting up a project in Matillion ETL?

- The first step is to create a project group and give it a project name. In this case, the project name is 'demo' and the group is 'mine default'. Then, uncheck the option to include sample data if it's not needed.

How do you connect Matillion to AWS for this demo?

- You need to create an AWS connection by entering the necessary details, such as the connection name (e.g., 'aws') and the environment name. Matillion will use this connection to interact with AWS services like S3.

How do you connect Matillion to a Snowflake account?

- To connect Matillion to Snowflake, you need to provide the Snowflake account, your username, and your password. The account value is the part of your Snowflake instance URL that precedes 'snowflakecomputing.com'.
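The URL rule described in this answer can be sketched in a few lines of Python. This is only an illustration: the function name `account_from_url` and the sample URL are hypothetical and do not come from the video.

```python
from urllib.parse import urlparse

SNOWFLAKE_DOMAIN = ".snowflakecomputing.com"


def account_from_url(url: str) -> str:
    """Return the part of the host name that precedes snowflakecomputing.com."""
    # Accept input with or without a scheme such as https://
    host = urlparse(url if "://" in url else f"https://{url}").hostname or ""
    if not host.endswith(SNOWFLAKE_DOMAIN):
        raise ValueError(f"not a Snowflake URL: {url!r}")
    return host[: -len(SNOWFLAKE_DOMAIN)]


# Hypothetical instance URL, used only for illustration.
print(account_from_url("https://xy12345.us-east-1.snowflakecomputing.com/console"))
```

The value printed is what would go into the account field when connecting the environment to Snowflake.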

What is the purpose of the 'Orchestration Job' in Matillion?

- An Orchestration Job in Matillion defines the flow of tasks for the ETL process. In this case, the orchestration job named 'data warehouse orchestration' pulls data from an RDS database and moves it to Snowflake via S3.
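As a rough mental model of that flow, the three stages can be written as plain Python functions called in order. Everything here is a stand-in: the function names and the sample rows are made up, and nothing talks to RDS, S3, or Snowflake. Matillion builds this flow graphically rather than in code.

```python
from typing import Dict, List

Rows = List[Dict[str, str]]


def extract_from_rds() -> Rows:
    # Stand-in for the RDS query step; these rows are invented for the sketch.
    return [{"code": "AAA"}, {"code": "BBB"}]


def stage_in_s3(rows: Rows) -> Rows:
    # Stand-in for writing the extracted rows to the S3 staging area.
    return list(rows)


def load_into_snowflake(rows: Rows) -> int:
    # Stand-in for the load step; returns the number of rows "loaded".
    return len(rows)


def run_orchestration() -> int:
    """Run the three stages in order: RDS -> S3 -> Snowflake."""
    rows = extract_from_rds()
    staged = stage_in_s3(rows)
    return load_into_snowflake(staged)


print(run_orchestration())
```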

How do you configure the RDS Query component in Matillion?

- You configure the RDS Query component by selecting the appropriate database type (e.g., MySQL), entering the RDS endpoint, and providing the database name, username, and password. Then you add the SQL query that selects data from the source database.
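The settings listed in this answer can be pictured as a small configuration object. The sketch below is an assumption-laden illustration: the class name `RdsQueryConfig`, its field names, the endpoint, the database name, and the query text are all hypothetical, and the component's real property names may differ. The URL follows the standard MySQL JDBC form `jdbc:mysql://host:port/database`.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RdsQueryConfig:
    """Hypothetical container for the values entered in the RDS Query component."""

    database_type: str
    endpoint: str
    database: str
    username: str
    sql_query: str
    port: int = 3306  # default MySQL port

    def jdbc_url(self) -> str:
        # Standard MySQL-style JDBC URL built from the fields above.
        return f"jdbc:{self.database_type}://{self.endpoint}:{self.port}/{self.database}"


# All values below are placeholders. The password is deliberately left out
# of the sketch; in practice it should come from a secret store.
config = RdsQueryConfig(
    database_type="mysql",
    endpoint="example-db.abc123.us-east-1.rds.amazonaws.com",
    database="demo_source",
    username="demo_user",
    sql_query="SELECT * FROM airports",
)
print(config.jdbc_url())
```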

What is the role of the S3 staging area in this process?

- The S3 staging area temporarily stores the data between RDS and Snowflake. You create a bucket and folder in AWS S3 to hold the data before it is loaded into Snowflake.
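To make the staging step concrete, the sketch below builds the S3 location for a bucket and folder and a Snowflake `COPY INTO` statement that reads from it. The bucket and folder names are hypothetical, the CSV file format is an assumption, and credentials or a storage integration are omitted. The statement Matillion actually issues is not shown in the source and may look different.

```python
def staging_uri(bucket: str, folder: str) -> str:
    """Build the s3:// location of the staging folder."""
    return f"s3://{bucket}/{folder.strip('/')}/"


def copy_statement(table: str, uri: str) -> str:
    """Build a COPY INTO statement that loads staged files into a table.

    Assumes CSV files; authentication options are intentionally left out.
    """
    return f"COPY INTO {table} FROM '{uri}' FILE_FORMAT = (TYPE = CSV)"


# Hypothetical bucket and folder; the table name is the one used in the video.
uri = staging_uri("demo-staging-bucket", "/matillion/")
print(uri)
print(copy_statement("training_airports", uri))
```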

What error occurred when trying to run the job initially, and how was it resolved?

- The error 'SQL compilation error object does not exist' occurred because no schema was specified in the environment setup. The solution was to define a default schema in the environment settings and rerun the job.

Why is defining a schema important when working with Snowflake in Matillion?

- Defining a schema tells Matillion where to place the data within Snowflake. If no schema is specified, the target table cannot be resolved, which causes errors like the one encountered here.
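One way to see why the schema matters: a Snowflake table is fully identified as `database.schema.table`, so a missing schema leaves the name incomplete. The helper below is a hypothetical sketch of that check, not Matillion's own logic. The database name `demo_db` is made up, while `public` and `training_airports` come from the video.

```python
from typing import Optional


def qualified_name(table: str, database: Optional[str], schema: Optional[str]) -> str:
    """Return database.schema.table, or fail loudly if a part is missing."""
    missing = [
        label
        for label, value in (("database", database), ("schema", schema))
        if not value
    ]
    if missing:
        raise ValueError(f"cannot resolve {table!r}: missing {', '.join(missing)}")
    return f"{database}.{schema}.{table}"


print(qualified_name("training_airports", "demo_db", "public"))

try:
    # Mirrors the first failed run: no default schema in the environment.
    qualified_name("training_airports", "demo_db", None)
except ValueError as error:
    print(error)
```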

What is the significance of creating a new environment in Matillion?

- Creating a new environment lets you specify the correct database, schema, and warehouse for the ETL process. This ensures that Matillion knows exactly where to execute the job and load the data.
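An environment can be thought of as a named bundle of warehouse, database, and schema. The sketch below models that bundle and turns it into the Snowflake `USE` statements that set the same session context. The class `Environment` and the warehouse and database names are hypothetical. Only the environment name 'AWS v2' and the 'public' schema appear in the video.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Environment:
    """Hypothetical model of a Matillion environment's Snowflake settings."""

    name: str
    warehouse: str
    database: str
    schema: str

    def context_statements(self) -> List[str]:
        # Snowflake statements that select the same warehouse, database, and schema.
        return [
            f"USE WAREHOUSE {self.warehouse}",
            f"USE DATABASE {self.database}",
            f"USE SCHEMA {self.database}.{self.schema}",
        ]


# Warehouse and database names are placeholders for the sketch.
env = Environment(name="AWS v2", warehouse="demo_wh", database="demo_db", schema="public")
for statement in env.context_statements():
    print(statement)
```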

What additional feature does the 'Master and Snowflake' program offer?

- The 'Master and Snowflake' program offers in-depth video content, practical demos, hands-on templates, expert advice, and group coaching calls. It is designed to help users master Snowflake and apply real-world solutions to common data challenges.

Outlines

This section is available to paid users only. Please upgrade to access this part.

Upgrade NowMindmap

This section is available to paid users only. Please upgrade to access this part.

Upgrade NowKeywords

This section is available to paid users only. Please upgrade to access this part.

Upgrade NowHighlights

This section is available to paid users only. Please upgrade to access this part.

Upgrade NowTranscripts

This section is available to paid users only. Please upgrade to access this part.

Upgrade NowBrowse More Related Video

How to build and automate your Python ETL pipeline with Airflow | Data pipeline | Python

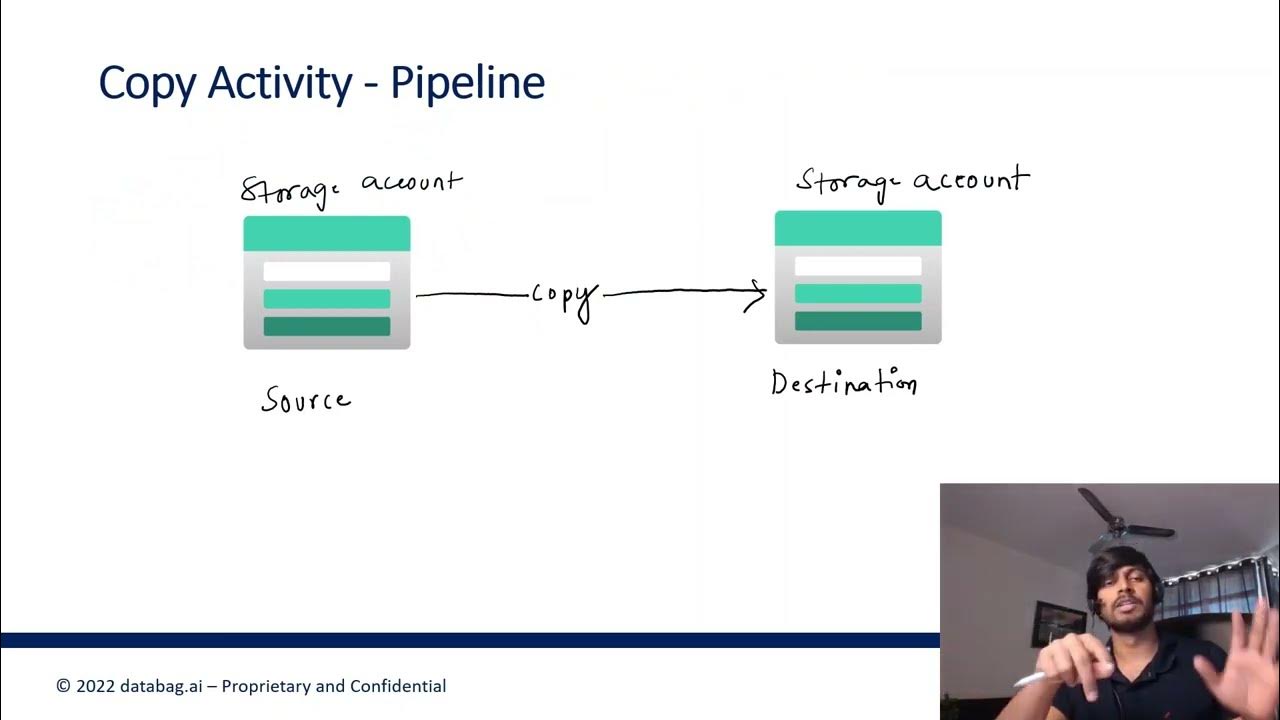

Azure Data Factory Part 3 - Creating first ADF Pipeline

26 DLT aka Delta Live Tables | DLT Part 1 | Streaming Tables & Materialized Views in DLT pipeline

#1 Unlock The Secrets Of Data Analysis: A Comprehensive Tutorial On The Data Analysis Lifecycle

Part 8 - Data Loading (Azure Synapse Analytics) | End to End Azure Data Engineering Project

5-a. Control and Interstage Registers Example 1

5.0 / 5 (0 votes)