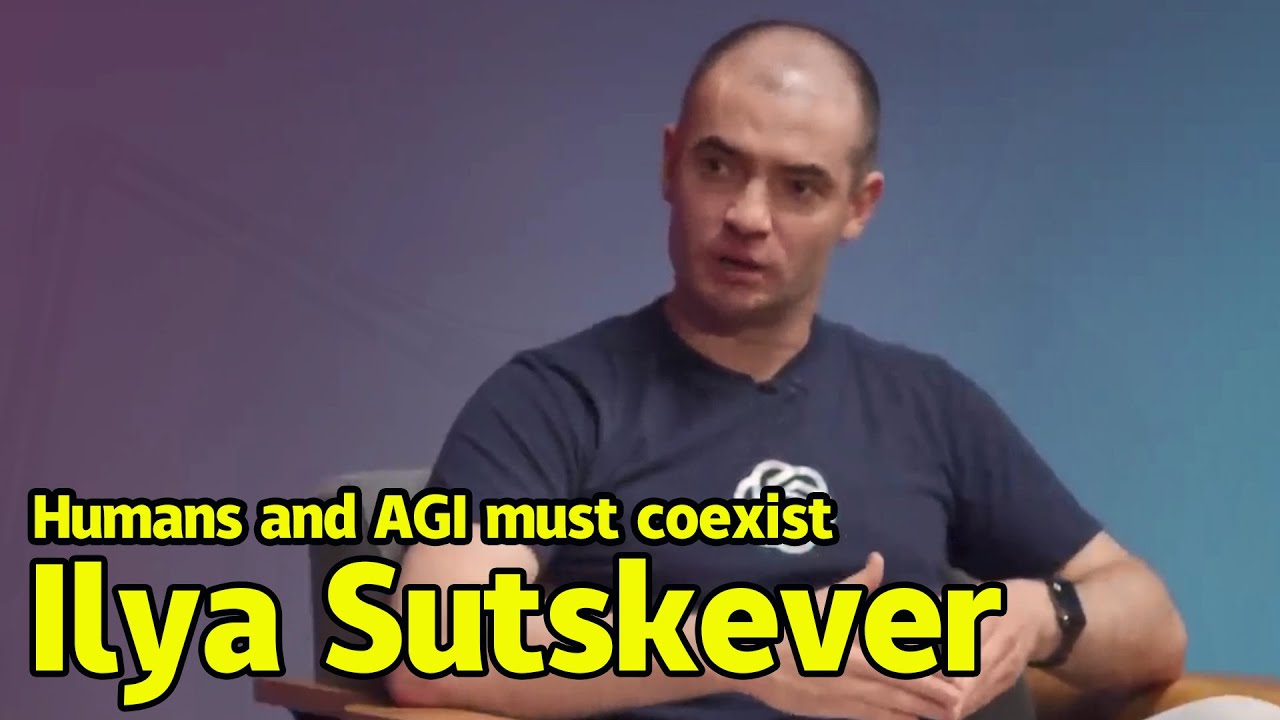

Ilya Sutskever | The birth of AGI will subvert everything | AI can help humans but also cause trouble

Summary

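TLDR: In this conversation, a representative of OpenAI discusses the definition of artificial general intelligence (AGI) and its potential capabilities. The discussion covers the strengths and weaknesses of current techniques such as the Transformer and the LSTM, and stresses the importance of scaling models. Safety is another central topic, particularly the potential risks, and possible solutions, once AI becomes extremely powerful. The speaker also shares expectations for future AI technology and offers practical advice to entrepreneurs building on large language models, including focusing on unique data and on where the technology is heading. Throughout, the discussion reflects on the future potential and challenges of AI.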

Takeaways

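- 🧠 AGI is defined as a computer system that can automate the vast majority of intellectual labor; it can be thought of as a coworker with human-level intelligence.

- 📈 Transformer models are already very powerful, but more efficient or faster models may still emerge.

- 🔍 Transformers may not be the final solution, but they are good enough, and their performance keeps improving with scale.

- 🤖 Compared with Transformers, LSTMs could still go a long way if suitably modified and trained, though probably not as far as Transformers.

- 📊 Scaling laws show a strong relationship between the amount of data fed into a neural network and simple performance metrics, but that relationship does not always hold for more complex tasks.

- 🚀 The growth in neural network capabilities, especially in programming, has been a surprising development: models went from barely being able to program to generating code efficiently.

- 🔐 AI safety is critical, especially once AI becomes extremely powerful: it must be aligned with human values to avoid potential risks.

- 🌐 International organizations can play an important role in setting AI standards and regulations, especially for superintelligent technology.

- ⏳ For products built on large language models, it is important to consider where the technology is heading over the next few years and to plan accordingly.

- 🛠️ Unique datasets can give a product a competitive advantage; it also pays to consider how to use both the current and the potential capabilities of the models.

- 🔮 Anticipating improvements in model reliability and performance helps entrepreneurs and developers plan their product roadmaps.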

Q & A

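What is AGI, and how does it differ from an ordinary computer system?

- AGI, or artificial general intelligence, is a computer system that can automate the vast majority of intellectual labor. Unlike an ordinary computer system, an AGI is considered to have a level of intelligence comparable to a human's: it can work like a human coworker and give sensible responses to a wide range of problems.

What role does the Transformer play in achieving AGI?

- The Transformer is one of the key technologies on the current path to AGI. It uses an attention mechanism to process sequence data effectively and has already shown strong capabilities in many domains. The Transformer may not be the only route to AGI, but it is one of the most effective architectures known today.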
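To make the attention mechanism mentioned above concrete, here is a minimal sketch of standard scaled dot-product attention in plain Python. It is an illustration of the general technique only, not code from the conversation; the function names `softmax` and `attention` and the list-of-lists representation are assumptions chosen for readability.

```python
import math

def softmax(scores):
    """Turn raw scores into weights that are positive and sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention over lists of vectors.

    Each query is compared with every key; the resulting weights
    decide how much of each value vector goes into the output.
    """
    d_k = len(keys[0])
    outputs = []
    for q in queries:
        # Similarity of this query to each key, scaled by sqrt(d_k).
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
            for k in keys
        ]
        weights = softmax(scores)
        # Weighted average of the value vectors.
        out = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(out)
    return outputs

# Illustrative (made-up) inputs: two identical keys, so the query
# attends to both positions equally.
example = attention(
    queries=[[1.0, 0.0]],
    keys=[[1.0, 0.0], [1.0, 0.0]],
    values=[[0.0, 2.0], [4.0, 6.0]],
)
```

Because every position in the sequence is compared with every other position in one step, information does not have to pass through a chain of recurrent states as it does in an LSTM.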

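Why is the question of whether the Transformer is good enough not a binary one?

- How good the Transformer is lies on a continuous spectrum rather than being an absolute yes or no. As models get larger they perform better, although the gains may gradually slow. So while there is room for improvement, existing Transformer models are already powerful enough.

How do LSTMs and Transformers compare on the path to AGI?

- The LSTM is a type of recurrent neural network. With suitable modifications and scaling it could, in theory, achieve results similar to the Transformer's. But because far less work has gone into training and optimizing LSTMs, Transformers usually perform better in practice.

How should we understand scaling laws?

- Scaling laws describe the relationship between a model's scale and its performance. The relationship is strong for some simple metrics, but for more complex questions, such as predicting a model's emergent capabilities, it becomes hard to predict.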
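The relationship between scale and a simple metric is often modeled as a power law, which appears as a straight line on a log-log plot. The sketch below fits such a line by least squares. It is only an illustration under stated assumptions: the function names are hypothetical, and the data points are synthetic, generated from a made-up power law rather than taken from any real model.

```python
import math

def fit_power_law(sizes, losses):
    """Fit loss ≈ a * size**(-alpha) by linear regression in log-log space."""
    xs = [math.log(s) for s in sizes]
    ys = [math.log(l) for l in losses]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    slope = (
        sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
        / sum((x - mean_x) ** 2 for x in xs)
    )
    intercept = mean_y - slope * mean_x
    # log(loss) = log(a) - alpha * log(size)
    return math.exp(intercept), -slope

def predict_loss(a, alpha, size):
    """Extrapolate the fitted power law to a new scale."""
    return a * size ** (-alpha)

# Synthetic data (an assumption for illustration, not measurements):
# losses generated exactly from loss = 10 * size**(-0.1).
sizes = [1e6, 1e7, 1e8, 1e9]
losses = [10.0 * s ** -0.1 for s in sizes]

a, alpha = fit_power_law(sizes, losses)
extrapolated = predict_loss(a, alpha, 1e10)
```

A fit like this extrapolates a smooth metric such as loss. It says nothing about when a specific capability, such as writing working code, will appear, which is the harder prediction problem the answer above points to.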

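Which emergent capabilities have surprised you during the development of AGI?

- Although the human brain can perform many complex tasks, it is still surprising that neural networks can do them too. Code generation stands out in particular: it went from nonexistent to rapidly exceeding what the computer science field had expected.

Why does AI safety matter, and how is it related to AI capability?

- AI safety is directly tied to AI capability. As AI becomes more powerful, its potential risks grow accordingly. Ensuring that AI is safe, especially once it reaches the level of superintelligence, is key to preventing its power from being misused.

What is superintelligence?

- Superintelligence refers to AI that far exceeds human-level intelligence. Such a system could solve unimaginably complex problems, but it could also pose enormous risks if it is not managed properly.

How should we think about and respond to the challenge of natural selection in AI development?

- Natural selection applies not only to living things but also to ideas and organizations. Even if we successfully handle the safety and ethical problems of superintelligence, we still have to consider the long-term evolution of technology and society and how they adapt to a changing environment.

What practical advice do you have for entrepreneurs using large language models?

- Entrepreneurs should focus on two things: making use of unique data, and considering the long-term direction of the technology and planning their products accordingly. This helps them stay competitive as AI technology keeps advancing.

How should we view the unreliability of current AI technology, and what does it mean for future product development?

- The unreliability of current AI technology is a reminder that product development has to take the maturity and reliability of the technology into account. Entrepreneurs need to stay alert to progress in AI and be ready to adapt quickly once the technology matures.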
