How I Would Learn Data Science in 2022

Summary

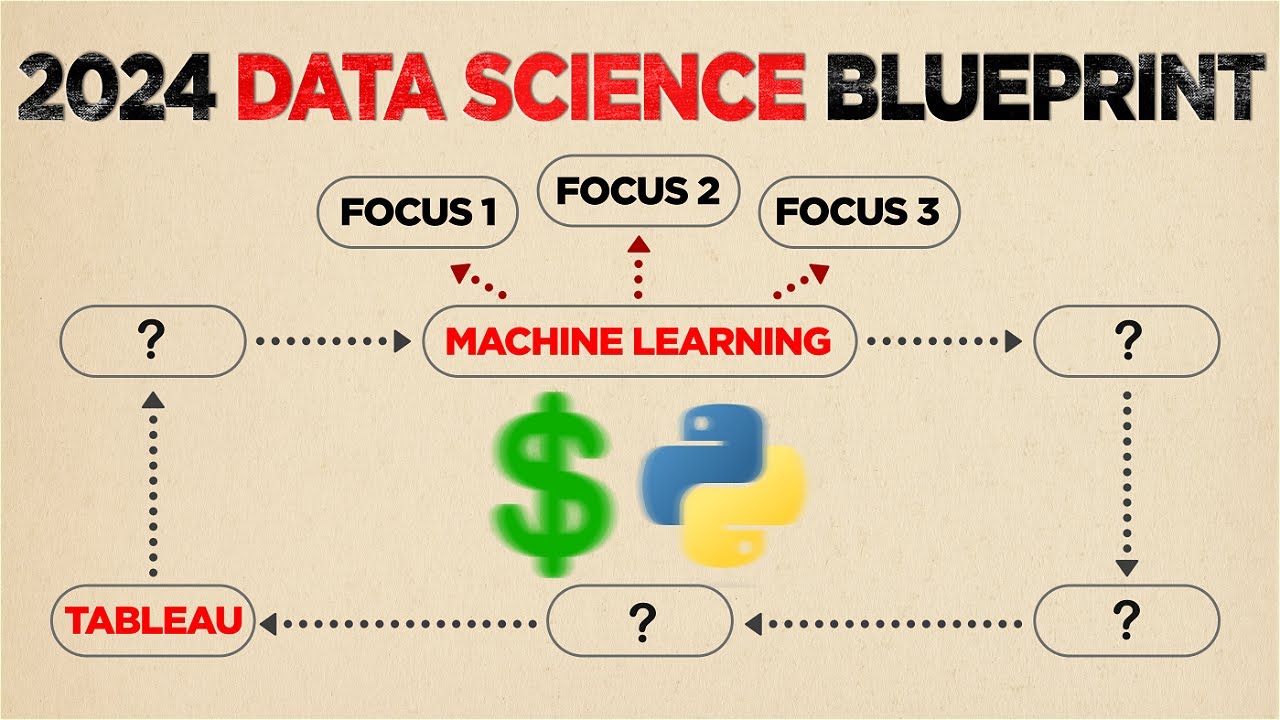

TLDR: The video offers a practical guide to learning data science in 2022, built on a breadth-first approach centered on project-based learning. It covers the essential topics: coding, statistics, data visualization, exploratory data analysis (EDA), machine learning, data scraping, APIs, databases, and deployment. The speaker recommends starting with Python for its simplicity and rich data science libraries, and learning SQL for working with databases. The video also stresses the importance of domain knowledge and communication skills, and suggests interactive platforms such as freeCodeCamp and resources such as Kaggle for hands-on practice. Finally, it notes that as repetitive tasks become automated, understanding algorithms and how to apply them in a specific context matters more.

Takeaways

- 📈 **Practical Guide Focus**: The video emphasizes a practical approach to learning data science, focusing on effective learning methods and persistence.

- 🔍 **Interdisciplinary Nature**: Data science involves coding, math, statistics, and business acumen, necessitating a breadth-first learning approach.

- 🛠️ **Project-Based Learning**: A project-based learning approach is recommended for its effectiveness in encoding information deeply and retaining knowledge.

- 🐍 **Python for Coding**: Python is suggested as the starting language for coding due to its simplicity, great documentation, and data science libraries.

- 📊 **Statistics Fundamentals**: Basic statistical knowledge is crucial, including mean, median, mode, standard deviation, and distributions.

- 📈 **Data Visualization**: Learning a visualization library like seaborn is important for graphically representing data insights.

- 🔬 **Exploratory Data Analysis (EDA)**: EDA is introduced as a method to explore and familiarize oneself with data sets, looking for trends and patterns.

- 📚 **Learning Timeline**: The video provides a suggested timeline for learning each topic, emphasizing the importance of starting with the basics and progressing to projects.

- 🤖 **Machine Learning Algorithms**: Understanding common machine learning algorithms is key, with an intuitive grasp being more important initially than deep mathematical understanding.

- 🌐 **Data Scraping and APIs**: As one progresses, learning to scrape data and work with APIs becomes essential for obtaining and manipulating data sets.

- 💡 **Domain Knowledge**: With automation on the rise, domain knowledge and the ability to communicate the impact of data science work become increasingly important.
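
As a minimal sketch of the statistics basics named in the takeaways above (mean, median, mode, standard deviation), the following uses only Python's standard `statistics` module. The `page_views` numbers are made up for illustration and do not come from the video.

```python
import statistics

# Hypothetical sample: daily page views for a small site (made-up numbers).
page_views = [120, 135, 120, 160, 150, 120, 410]

summary = {
    "mean": statistics.mean(page_views),      # arithmetic average
    "median": statistics.median(page_views),  # middle value after sorting
    "mode": statistics.mode(page_views),      # most frequent value
    "stdev": statistics.stdev(page_views),    # sample standard deviation
}

for name, value in summary.items():
    print(f"{name}: {value:.1f}")
```

Here the single large value (410) pulls the mean well above the median, which is the kind of pattern a first pass of exploratory data analysis is meant to surface.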

Q & A

What is the main focus of the video regarding learning data science?

- The main focus of the video is to provide a practical guide on how to effectively learn data science, emphasizing a breadth-first approach centered around project-based learning.

Why is project-based learning recommended for learning data science?

- Project-based learning is recommended because it lets learners apply theoretical knowledge in practice, which encodes the information more deeply and improves retention.

What is the recommended first step in learning data science according to the video?

- The recommended first step is learning to code, specifically starting with Python, as it is a general-purpose language with great libraries for data science.

Why is a breadth-first approach preferred over a depth-first approach when learning data science?

- A breadth-first approach is preferred because it helps learners avoid getting overwhelmed by the depth of each subject, allows them to start implementing what they learn sooner, and keeps the learning process engaging.

What are some of the key topics to cover when learning data science?

- Key topics include programming, statistics, data visualization, exploratory data analysis (EDA), machine learning, data scraping, APIs, databases, and deployment, as well as specific niches like NLP and computer vision.

What is the significance of understanding the theory behind machine learning algorithms?

- Understanding the theory behind machine learning algorithms is important for applying them effectively to specific use cases and ensuring they function properly in a given context.

Why is domain knowledge considered crucial for a data scientist?

- Domain knowledge is crucial because it helps a data scientist understand the business context, communicate the value of their work, and ensure that their analyses and models have real impact and are actually used by the organization.

What is the recommended timeline for learning the basics of coding in the context of data science?

- The recommended timeline for learning the basics of coding is one to two weeks at four hours per day.

How does the video suggest approaching the learning of statistics for data science?

- The video suggests brushing up on statistics at roughly the high school to first-year university level: mean, median, mode, standard deviation, distributions, the central limit theorem, and confidence intervals.
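
To make two of these topics concrete, here is a minimal sketch of a confidence interval for a mean. It assumes the normal approximation that the central limit theorem justifies for reasonably large samples. The function name `mean_confidence_interval` and the simulated data are illustrative assumptions, not something taken from the video.

```python
import random
import statistics
from statistics import NormalDist


def mean_confidence_interval(sample, confidence=0.95):
    """Normal-approximation confidence interval for the mean of `sample`."""
    n = len(sample)
    sample_mean = statistics.mean(sample)
    # Standard error of the mean: sample standard deviation over sqrt(n).
    standard_error = statistics.stdev(sample) / n ** 0.5
    # Two-sided critical value, about 1.96 for 95% confidence.
    z = NormalDist().inv_cdf(0.5 + confidence / 2)
    return sample_mean - z * standard_error, sample_mean + z * standard_error


# Simulated, clearly non-normal data: exponential with a true mean of 10.
rng = random.Random(0)
sample = [rng.expovariate(1 / 10) for _ in range(500)]

low, high = mean_confidence_interval(sample)
print(f"sample mean: {statistics.mean(sample):.2f}")
print(f"95% CI: ({low:.2f}, {high:.2f})")
```

The data are skewed, but the interval is still built with a normal critical value. This works because the sample mean is approximately normal for large samples even when the underlying data are not.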

What is the role of accountability in the learning process as discussed in the video?

- Accountability is built into the learning process to reduce the chance of giving up, especially for learners who do not have strong willpower and tend to quit easily.

How does the video suggest one should engage with existing projects to enhance their learning?

- The video suggests taking someone else's project and working through it, understanding each line of code and the rationale behind it, rather than just copying code, to gain a practical understanding of how to approach a project.
