AWS re:Invent 2023 - Use RAG to improve responses in generative AI applications (AIM336)

Summary

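TLDR: This presentation explains how to use Retrieval Augmented Generation (RAG) to improve responses in generative AI applications. It covers why customization matters, the available customization approaches, concrete RAG use cases, and how Amazon Bedrock simplifies the whole pipeline, from the data ingestion workflow to the query workflow. It also shows how to build with Knowledge Bases for Amazon Bedrock using open-source generative AI frameworks such as LangChain.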

Takeaways

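- 📚 Customizing a foundation model lets you adapt it to domain-specific language and unique tasks, and improves its integration with your company's external data.

- 🔍 Retrieval Augmented Generation (RAG) is a technique that draws on external knowledge sources to improve the quality and accuracy of responses.

- 🌐 Knowledge Bases for Amazon Bedrock makes RAG applications simpler to build and easier to manage.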

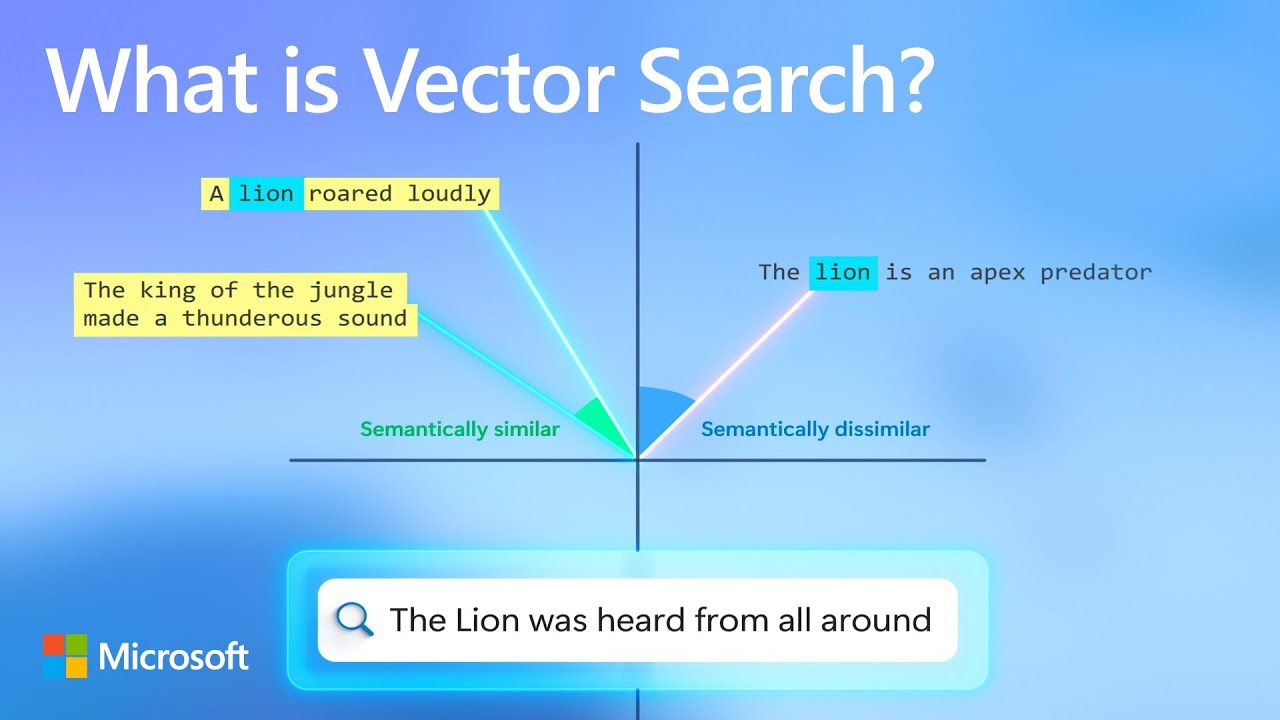

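- 📈 Optimizing the data ingestion workflow and converting text into numerical representations enables semantic search that preserves meaning.

- 🔧 The built-in Titan text embeddings model creates numerical representations of text so that highly relevant documents can be retrieved.

- 🛠️ Knowledge Bases for Amazon Bedrock automates data ingestion, storage, retrieval, and generation, delivering fully managed RAG.

- 📊 Combining knowledge bases with agents integrates static data with dynamic data and APIs, making it possible to automate advanced tasks.

- 📈 RAG use cases include improving content quality, context-based chatbots, personalized search, and text summarization.

- 🔗 Vector database options such as OpenSearch Serverless, Redis, and Pinecone let you pick the store that best fits your knowledge base's needs.

- 📚 Open-source generative AI frameworks such as LangChain integrate easily with Knowledge Bases for Amazon Bedrock.

- 💡 After the initial data ingestion, running a sync automatically processes new files in S3 and updates the knowledge base.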
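
The sync step in the last takeaway can also be triggered from code. The sketch below assumes a `boto3` client for the `bedrock-agent` service and uses its `start_ingestion_job` and `get_ingestion_job` operations; the function name, the polling defaults, and the set of terminal status names are illustrative assumptions, not something shown in the talk. The client is passed in so the logic can be exercised without AWS credentials.

```python
import time

# Assumption: these are the statuses treated as final for an ingestion job.
TERMINAL_STATES = {"COMPLETE", "FAILED", "STOPPED"}


def sync_knowledge_base(client, knowledge_base_id, data_source_id,
                        poll_seconds=5.0, max_polls=60):
    """Start an ingestion job (the "Sync" action) and poll until it finishes.

    `client` is expected to behave like boto3.client("bedrock-agent").
    Returns the last status seen, which may be non-terminal if max_polls is hit.
    """
    job = client.start_ingestion_job(
        knowledgeBaseId=knowledge_base_id,
        dataSourceId=data_source_id,
    )["ingestionJob"]

    for _ in range(max_polls):
        if job["status"] in TERMINAL_STATES:
            break
        time.sleep(poll_seconds)
        job = client.get_ingestion_job(
            knowledgeBaseId=knowledge_base_id,
            dataSourceId=data_source_id,
            ingestionJobId=job["ingestionJobId"],
        )["ingestionJob"]
    return job["status"]


# Example call (placeholder IDs, requires AWS credentials):
#   import boto3
#   client = boto3.client("bedrock-agent")
#   print(sync_knowledge_base(client, "YOUR_KB_ID", "YOUR_DATA_SOURCE_ID"))
```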

Q & A

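Why use Retrieval Augmented Generation to improve responses in generative AI applications?

-Retrieval Augmented Generation (RAG) improves response quality by adapting the model to domain-specific language and unique tasks, and by making it aware of the company's external data, such as FAQs and policies.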

What are the common approaches to customizing a foundation model?

-Common approaches include prompt engineering, Retrieval Augmented Generation, fine-tuning the model, and training a model from scratch.

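What is prompt engineering?

-Prompt engineering is the practice of designing and iteratively refining the user input (the prompt) passed to a foundation model in order to steer it toward the desired output.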

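What are the basic steps of Retrieval Augmented Generation (RAG)?

-The basic steps are to retrieve text from a corpus of documents and pass it to a foundation model, which then generates a response grounded in the company's data.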
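
The retrieve-then-augment steps can be illustrated with a toy example in plain Python. This is a minimal sketch: word-overlap scoring stands in for the vector search a real system would use, the corpus and prompt wording are invented for illustration, and the final call to a foundation model is left out.

```python
import re


def tokenize(text):
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question, corpus, top_k=2):
    """Rank documents by how many words they share with the question.

    Word overlap is a stand-in for embedding-based semantic search.
    """
    q = tokenize(question)
    scored = [(len(q & tokenize(doc)), doc) for doc in corpus]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for score, doc in scored[:top_k] if score > 0]


def build_prompt(question, passages):
    """Augment the user's question with the retrieved passages."""
    context = "\n".join(f"- {p}" for p in passages)
    return (
        "Answer using only the context below.\n"
        f"Context:\n{context}\n"
        f"Question: {question}\nAnswer:"
    )


# Hypothetical company documents.
CORPUS = [
    "Refunds are issued within 14 days of a return request.",
    "Our support desk is open Monday to Friday.",
    "Shipping is free for orders over 50 dollars.",
]

question = "How many days do refunds take?"
passages = retrieve(question, CORPUS, top_k=1)
prompt = build_prompt(question, passages)
print(prompt)  # this augmented prompt is what would be sent to the model
```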

What is Knowledge Bases for Amazon Bedrock?

-Knowledge Bases for Amazon Bedrock is a fully managed service that automatically indexes a company's data sources and makes it easy to build Retrieval Augmented Generation (RAG) applications.

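What is the data ingestion workflow when using Knowledge Bases?

-The data ingestion workflow takes an external data source (for example, documents in S3), splits it into chunks, passes the chunks through an embeddings model (for example, Titan text embeddings), and stores the resulting vectors in the vector database best suited to the workload.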
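
To make the chunking step concrete, here is a minimal sketch of fixed-size chunking with overlap, counted in words. The chunk size and overlap values are arbitrary assumptions; Knowledge Bases performs its own chunking during ingestion, so this only illustrates the idea rather than the service's actual strategy.

```python
def chunk_words(text, chunk_size=200, overlap=20):
    """Split text into chunks of at most `chunk_size` words.

    Consecutive chunks share `overlap` words so that a sentence cut at a
    boundary still appears intact in one of the two neighbouring chunks.
    """
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("need chunk_size > 0 and 0 <= overlap < chunk_size")

    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # the last chunk already reaches the end of the text
    return chunks


sample = " ".join(str(i) for i in range(10))
for chunk in chunk_words(sample, chunk_size=4, overlap=1):
    print(chunk)
```

Each chunk would then be embedded and written to the vector store together with a pointer back to its source document.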

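What role does the Titan text embeddings model play?

-The Titan text embeddings model converts text into numerical representations that preserve the relationships between words and their meaning, which supports semantic search and RAG use cases.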
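
As a rough illustration, the sketch below requests an embedding through the Bedrock runtime `invoke_model` operation and compares two vectors with cosine similarity. It assumes a client that behaves like `boto3.client("bedrock-runtime")` and the model ID `amazon.titan-embed-text-v1`; the function names are made up for this example, and the model ID available in your account and region may differ.

```python
import json
import math


def embed_text(client, text, model_id="amazon.titan-embed-text-v1"):
    """Return the embedding vector for `text`.

    `client` is expected to behave like boto3.client("bedrock-runtime").
    The request and response shapes follow the Titan text embeddings format.
    """
    response = client.invoke_model(
        modelId=model_id,
        body=json.dumps({"inputText": text}),
        contentType="application/json",
        accept="application/json",
    )
    return json.loads(response["body"].read())["embedding"]


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


# Example call (requires AWS credentials and model access):
#   import boto3
#   runtime = boto3.client("bedrock-runtime")
#   query_vec = embed_text(runtime, "How do refunds work?")
#   doc_vec = embed_text(runtime, "Refunds are issued within 14 days.")
#   print(cosine_similarity(query_vec, doc_vec))
```

Texts with similar meaning should produce vectors with a higher cosine similarity, which is what semantic search ranks on.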

What is the retrieve and generate API?

-The retrieve and generate API is an Amazon Bedrock API that retrieves documents relevant to a user's question, feeds them into the model's prompt, and generates the final response.
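
A call to this API might look like the sketch below, which assumes a client that behaves like `boto3.client("bedrock-agent-runtime")` and its `retrieve_and_generate` operation. The helper name `ask_knowledge_base`, the placeholder IDs, and the way source URIs are collected from the citations are illustrative choices, not part of the talk.

```python
def ask_knowledge_base(client, question, knowledge_base_id, model_arn):
    """Ask a question against a knowledge base and return (answer, sources).

    `client` is expected to behave like boto3.client("bedrock-agent-runtime").
    """
    response = client.retrieve_and_generate(
        input={"text": question},
        retrieveAndGenerateConfiguration={
            "type": "KNOWLEDGE_BASE",
            "knowledgeBaseConfiguration": {
                "knowledgeBaseId": knowledge_base_id,
                "modelArn": model_arn,
            },
        },
    )
    answer = response["output"]["text"]

    # Collect the distinct S3 URIs of the documents the answer cites.
    sources = []
    for citation in response.get("citations", []):
        for ref in citation.get("retrievedReferences", []):
            uri = ref.get("location", {}).get("s3Location", {}).get("uri")
            if uri and uri not in sources:
                sources.append(uri)
    return answer, sources


# Example call (placeholder values, requires AWS credentials):
#   import boto3
#   client = boto3.client("bedrock-agent-runtime")
#   answer, sources = ask_knowledge_base(
#       client,
#       "What is our refund policy?",
#       "YOUR_KB_ID",
#       "arn:aws:bedrock:us-east-1::foundation-model/anthropic.claude-v2",
#   )
```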

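What is the benefit of combining Knowledge Bases with Agents for Amazon Bedrock?

-Combining Knowledge Bases with Agents for Amazon Bedrock brings together static document content and information from dynamic databases and APIs, so you can build advanced applications that plan and execute tasks automatically.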

How do you build with Knowledge Bases using LangChain?

-Install the latest versions of Boto3 and LangChain, initialize the Amazon Knowledge Bases retriever through LangChain's wrapper, and use it to generate responses to questions.
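
The sketch below shows one way this could look. It assumes the `AmazonKnowledgeBasesRetriever` class importable from `langchain_community.retrievers`; the import path has moved between LangChain releases, so check the version you have installed. The helper functions and the `generate` callable (any function that sends a prompt to a model and returns text) are hypothetical names introduced for illustration.

```python
def build_retriever(knowledge_base_id, number_of_results=4):
    """Create a LangChain retriever backed by a Bedrock knowledge base."""
    # Imported lazily so the helpers below work without LangChain installed.
    # Assumption: this import path matches your installed LangChain version.
    from langchain_community.retrievers import AmazonKnowledgeBasesRetriever

    return AmazonKnowledgeBasesRetriever(
        knowledge_base_id=knowledge_base_id,
        retrieval_config={
            "vectorSearchConfiguration": {"numberOfResults": number_of_results}
        },
    )


def format_context(documents):
    """Join the text of retrieved LangChain documents into one context block."""
    return "\n\n".join(doc.page_content for doc in documents)


def answer_question(question, retriever, generate):
    """Retrieve passages for the question and pass an augmented prompt on.

    `generate` is a hypothetical callable: prompt string in, answer string out.
    """
    documents = retriever.invoke(question)
    prompt = f"Context:\n{format_context(documents)}\n\nQuestion: {question}"
    return generate(prompt)


# Example call (placeholder ID, requires AWS credentials and LangChain):
#   retriever = build_retriever("YOUR_KB_ID")
#   print(answer_question("What is our refund policy?", retriever, my_llm_call))
```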

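Why are embeddings important when building RAG applications?

-Embeddings preserve the relationships between words and their meaning when text is converted into numerical representations, which makes them essential to the precision and accuracy of semantic search and RAG.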
