Introduction to Large Language Models

Summary

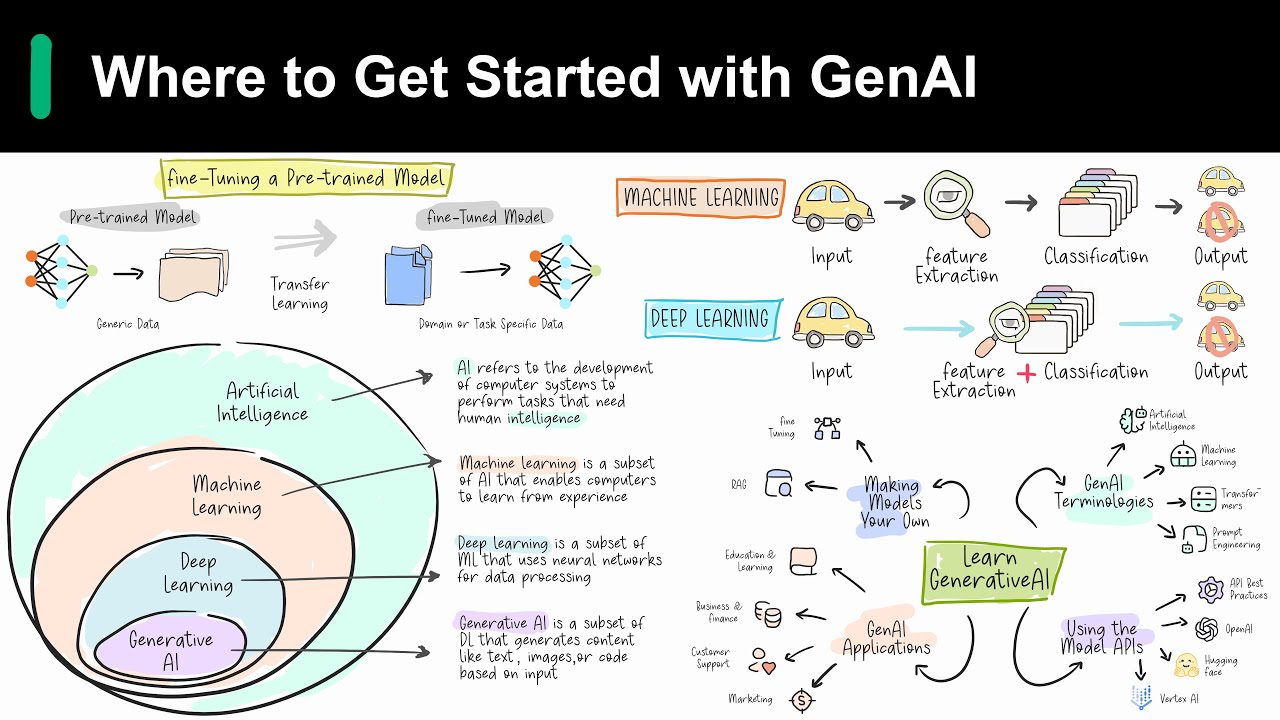

TL;DR: This video introduces large language models (LLMs), a subset of deep learning in which models are pre-trained and then fine-tuned for specific tasks. The presenter, a Google Cloud engineer, explains what LLMs are, their use cases, the process of prompt tuning, and Google's AI development tools. The video covers the benefits of LLMs, including their adaptability, minimal training data requirements, and continuous performance improvement. Example applications such as question answering and sentiment analysis illustrate their practical utility. The video also introduces Google's generative AI development tools, including AI Studio, Vertex AI, and Model Garden, and shows how they make LLMs more capable and accessible.

Takeaways

- 🧠 Large Language Models (LLMs) are a subset of deep learning designed for pre-training on vast datasets and fine-tuning for specific tasks.

- 🐶 The concept of pre-training and fine-tuning in LLMs is likened to training a dog with basic commands and then adding specialized training for specific roles.

- 📚 LLMs are characterized by their large size, referring to both the extensive training data and the high number of parameters in the model.

- 🔧 LLMs are 'general purpose', meaning they are trained to handle common language tasks across various industries before being fine-tuned for specific applications.

- 🔑 The benefits of LLMs include their versatility in handling multiple tasks, the small amount of domain-specific ("field") training data needed to tailor them, and continuous performance improvement with more data and parameters.

- 🌐 Generative AI, which includes LLMs, can produce new content like text, images, audio, and synthetic data, extending beyond traditional programming and neural networks.

- 📈 Google's PaLM, a 540-billion-parameter model, demonstrates state-of-the-art performance on language tasks and the efficiency of the Pathways AI architecture.

- 🤖 The Transformer model, which consists of an encoder and a decoder, is fundamental to how LLMs process input sequences and generate output for a relevant task.

- 🛠️ LLM development contrasts with traditional machine learning, requiring less expertise and training data, focusing instead on prompt design for natural language processing.

- 📊 Prompt design and prompt engineering are both crucial for optimizing LLM performance: prompt design creates a prompt tailored to a specific task, while prompt engineering refines a prompt to improve performance.

- 🔄 There are three types of LLMs: generic, instruction-tuned, and dialogue-tuned, each requiring different prompting strategies to achieve optimal results.

- 🔧 Google Cloud provides tools like Vertex AI, AI Platform, and Generative AI Studio to help developers leverage and customize LLMs for various applications.

Q & A

What are large language models (LLMs)?

-Large language models (LLMs) are a subset of deep learning that refers to large general-purpose language models that can be pre-trained and then fine-tuned for specific purposes.

How do large language models intersect with generative AI?

-LLMs and generative AI intersect as they are both part of deep learning. Generative AI is a type of artificial intelligence that can produce new content including text, images, audio, and synthetic data.

What does 'pre-trained' and 'fine-tuned' mean in the context of large language models?

-Pre-trained means that the model is initially trained for general purposes to solve common language problems. Fine-tuned refers to the process of tailoring the model to solve specific problems in different fields using a smaller dataset.

What are the two meanings indicated by the word 'large' in the context of LLMs?

-The word 'large' indicates both the enormous size of the training dataset and the high parameter count. Parameters are the memories and knowledge that the machine learned during model training.
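
To make "parameter count" concrete, the sketch below counts the weights and biases in a tiny fully connected network. This is an illustration only: LLMs use Transformer architectures rather than plain dense layers, and the function name and layer sizes are hypothetical, not taken from the video.

```python
def count_dense_parameters(layer_sizes):
    """Count the learnable values in a fully connected network.

    layer_sizes lists the number of units per layer, input first.
    """
    total = 0
    for n_in, n_out in zip(layer_sizes, layer_sizes[1:]):
        total += n_in * n_out  # one weight per input-output connection
        total += n_out         # one bias per output unit
    return total


# A toy network with 4 inputs, 8 hidden units, and 2 outputs.
toy_layers = [4, 8, 2]
print(count_dense_parameters(toy_layers))  # (4*8 + 8) + (8*2 + 2) = 58
```

A model such as the 540-billion-parameter one mentioned in this summary has the same kind of learnable values, just many orders of magnitude more of them.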

Why are large language models considered 'general purpose'?

-Large language models are considered 'general purpose' because they are trained to solve common language problems across various industries, making them versatile for different tasks.

What are the benefits of using large language models?

-The benefits include the ability to use a single model for different tasks, the small amount of domain-specific ("field") training data needed to tailor it to a specific problem, and continuous performance improvement as more data and parameters are added.

Can you provide an example of a large language model developed by Google?

-An example is PaLM, a 540-billion-parameter model released by Google in April 2022, which achieves state-of-the-art performance across multiple language tasks.

What is a Transformer model in the context of LLMs?

-A Transformer model is a type of neural network architecture that consists of an encoder and a decoder. The encoder encodes the input sequence and passes it to the decoder, which learns to decode the representations for a relevant task.

What is the difference between prompt design and prompt engineering in natural language processing?

-Prompt design is the process of creating a prompt tailored to a specific task, while prompt engineering involves creating a prompt designed to improve performance, which may include using domain-specific knowledge or providing examples of desired output.
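
The distinction can be sketched with two small prompt builders for a sentiment analysis task. The function names, wording, and example reviews are assumptions made for illustration; no model is called, the code only assembles the prompt text.

```python
def design_prompt(review):
    """Prompt design: a prompt tailored to the task at hand."""
    return (
        "Classify the sentiment of this review as positive or negative.\n"
        f"Review: {review}\n"
        "Sentiment:"
    )


def engineer_prompt(review, examples):
    """Prompt engineering: the same task, plus examples of the desired output."""
    lines = ["Classify the sentiment of each review as positive or negative.", ""]
    for example_review, label in examples:
        lines.append(f"Review: {example_review}")
        lines.append(f"Sentiment: {label}")
        lines.append("")
    lines.append(f"Review: {review}")
    lines.append("Sentiment:")
    return "\n".join(lines)


# Hypothetical labeled examples supplied by the prompt author.
examples = [
    ("The battery lasts all day.", "positive"),
    ("The screen cracked within a week.", "negative"),
]
print(engineer_prompt("Setup was quick and painless.", examples))
```

Both prompts end with `Sentiment:` so that the model's continuation is the label itself; the engineered version simply gives the model more evidence about the expected format.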

How does the development process of LLMs differ from traditional machine learning development?

-Traditional machine learning development requires expertise, training examples, compute time, and hardware. LLM development instead centers on prompt design, which makes it more accessible to non-experts.

What are the three types of large language models mentioned in the script and how do they differ?

-The three types are generic language models, instruction-tuned models, and dialogue-tuned models. Generic models predict the next word based on training data, instruction-tuned models predict responses to given instructions, and dialogue-tuned models are trained for conversational interactions.
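
As a rough sketch of how prompting differs across the three types, the templates below show one plausible prompt shape for each. The template text, dictionary keys, and the `build_prompt` helper are hypothetical; real models may expect different formats.

```python
# One illustrative prompt shape per model type (assumed, not prescribed).
PROMPT_STYLES = {
    # Generic models continue the text they are given.
    "generic": "{text}",
    # Instruction-tuned models respond to an explicit instruction.
    "instruction_tuned": "Summarize the following text in one sentence:\n{text}",
    # Dialogue-tuned models expect a conversational turn.
    "dialogue_tuned": "User: {text}\nAssistant:",
}


def build_prompt(model_type, text):
    """Fill the template for the given model type with the user's text."""
    return PROMPT_STYLES[model_type].format(text=text)


print(build_prompt("generic", "The cat sat on the"))
print(build_prompt("instruction_tuned", "LLMs are pre-trained, then fine-tuned."))
print(build_prompt("dialogue_tuned", "What is a large language model?"))
```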

What is Chain of Thought reasoning and why is it important for models?

-Chain of Thought reasoning is the observation that models are better at getting the right answer when they first output text that explains the reason for the answer, which helps in improving the accuracy of the model's responses.
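
A minimal sketch of the idea: the second prompt below prepends a worked example whose answer states its reasoning before the result, which nudges the model to do the same. The helper names and the example questions are illustrative assumptions, and no model is called.

```python
# A worked example whose answer explains the reasoning before the result.
WORKED_EXAMPLE = (
    "Q: Roger has 5 tennis balls. He buys 2 more cans of tennis balls. "
    "Each can has 3 tennis balls. How many tennis balls does he have now?\n"
    "A: Roger started with 5 balls. 2 cans of 3 balls each is 6 balls. "
    "5 + 6 = 11. The answer is 11."
)


def direct_prompt(question):
    """Ask for the answer with no reasoning demonstrated."""
    return f"Q: {question}\nA:"


def chain_of_thought_prompt(question, worked_example=WORKED_EXAMPLE):
    """Show a reasoned answer first, then ask the new question."""
    return f"{worked_example}\n\nQ: {question}\nA:"


question = (
    "The cafeteria had 23 apples. They used 20 for lunch and bought 6 more. "
    "How many apples do they have?"
)
print(chain_of_thought_prompt(question))
```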

What are Parameter Efficient Tuning Methods (PETM) and how do they benefit LLMs?

-PETM are methods for tuning a large language model on custom data without altering the base model. Instead, a small number of add-on layers are tuned, which can be swapped in and out at inference time, making the tuning process more efficient.
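
The toy sketch below illustrates the mechanism described here: a base model that stays frozen, plus small add-on layers that are tuned separately and swapped at inference time. The classes, the scalar "weights", and the task names are all hypothetical simplifications; real methods operate on large tensors inside a neural network.

```python
class FrozenBase:
    """Stand-in for a pre-trained model; its weight is never modified."""

    def __init__(self, weight):
        self._weight = weight

    def forward(self, x):
        return self._weight * x


class Adapter:
    """A small add-on layer holding the only parameters that get tuned."""

    def __init__(self, scale=1.0, shift=0.0):
        self.scale = scale
        self.shift = shift

    def forward(self, hidden):
        return self.scale * hidden + self.shift


def predict(base, adapter, x):
    """Run the frozen base, then the chosen adapter (if any)."""
    hidden = base.forward(x)
    return adapter.forward(hidden) if adapter is not None else hidden


base = FrozenBase(weight=2.0)
# One adapter per task; values stand in for separately tuned parameters.
adapters = {
    "task_a": Adapter(scale=1.0, shift=1.0),
    "task_b": Adapter(scale=0.5),
}

print(predict(base, None, 3.0))                # base only: 6.0
print(predict(base, adapters["task_a"], 3.0))  # 1.0 * 6.0 + 1.0 = 7.0
print(predict(base, adapters["task_b"], 3.0))  # 0.5 * 6.0 + 0.0 = 3.0
```

Because the base is shared and untouched, serving a new task only requires storing and loading another small adapter rather than a full copy of the model.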

Can you explain the role of Generative AI Studio in developing LLMs?

-Generative AI Studio allows developers to quickly explore and customize generative AI models for their applications on Google Cloud, providing tools and resources to facilitate the creation and deployment of these models.

What is Vertex AI and how can it assist in building AI applications?

-Vertex AI is a tool that helps developers build generative AI search and conversation applications for customers and employees with little or no coding and no prior machine learning experience.

What are the capabilities of the multimodal AI model Gemini?

-Gemini is a multimodal AI model capable of analyzing images, understanding audio nuances, and interpreting programming code, allowing it to perform complex tasks that were previously impossible for AI.
