FREE Local LLMs on Apple Silicon | FAST!

Summary

TLDRこのビデオスクリプトでは、Apple silicon GPUを使用してMacBookでChad GPTのようなものを実行し、より高速に動作するプロセスについて説明しています。スクリプトでは、オープンソースのLLM(Large Language Model)を使用し、AMAというツールを使ってローカルマシンでモデルをダウンロード・管理する方法を紹介しています。また、オープンWeb UIを使用して、PythonとJavaScriptで構築されたバックエンドとフロントエンドを持つチャットボットを作成し、カスタマイズ可能なUIでモデルを切り替えて対話することができるデモンストレーションを行います。さらに、Dockerを使用したデプロイの簡単さも触れており、開発者がコードの背後にある詳細を理解し、より強力な知識と力を持つことができると結論づけています。

Takeaways

- 🚀 AppleシリコンGPUを使用して、MacBookでChad GPTのようなものを実行できるようになり、設定が簡単になりました。

- 💻 個人のマシン上で独自のGPTを実行することができ、Chad GPTと同様に見栄え良くなっています。

- 📚 ソフトウェア開発者として、コードを確認して裏側の動作を知ることができます。

- 🔍 open web UIを使用して、AMAと組み合わせてセットアップします。

- 📥 GitHubリポジトリをクローンし、ローカルNode環境やPython環境のセットアップが必要です。

- 🐐 AMAは、マシン上で実行され、LLMモデルを自動的にダウンロード管理するエージェントです。

- 📚 オープンソースのモデルをダウンロードしてローカルで実行できます。

- 🔧 複数のモデルを試して、ユースケースに最適なものを見つけることができます。

- ⚙️ GPUで完全に実行され、設定なしで透過的に動作します。

- 🌐 open web UIプロジェクトを使用して、美しいUIを構築し、ユーザーインターフェースからモデルを管理できます。

- 🔗 Dockerを使用して、開発環境を構築し、コンテナで実行することも可能です。

- 📝 コミュニティから共有されたプロンプトを使用したり、独自のプロンプトを保存・インポート・エクスポートすることが可能です。

Q & A

AppleシリコンGPUを使用してChad GPTのようなものをMacBookで実行することの利点は何ですか?

-AppleシリコンGPUを使用することで、すべての処理が高速化され、設定や設定手順を省くことができます。また、ローカルマシン上でGPTのようなものを実行できるため、より良い外観と高速な機能性が得られます。

オープンウェブUIとは何ですか?

-オープンウェブUIは、説明されているプロジェクトで使用される名前で、フロントエンドがJavaScriptで書かれており、バックエンドはPythonで構成されています。また、Docker設定も含まれており、コンテナ化して実行することが可能です。

AMAとは何ですか?なぜダウンロードする必要がありますか?

-AMAは、ローカルマシン上で実行され、LLM(Large Language Model)モデルを自動的にダウンロード・管理するエージェントです。様々なオープンソースのLLMモデルを提供し、ローカルで実行できるように設計されています。

なぜ複数のLLMモデルをダウンロードし、ローカルで実行するのですか?

-異なるLLMモデルはそれぞれ異なる機能とトレーニングを持ち、ユーザーは自分の使用ケースに最適な結果を得るために、様々なモデルを試してみることができます。また、モデルを組み合わせることもできるため、柔軟性が高まります。

オープンウェブUIのバックエンドに必要なPythonのバージョンは何ですか?

-オープンウェブUIのバックエンドには、Python 3.11が必要です。ただし、condaを使用して仮想環境を設定し、必要なライブラリをインストールすることができます。

オープンウェブUIのフロントエンドには何が必要ですか?

-オープンウェブUIのフロントエンドは、Node.jsとnpm(Node Package Manager)が必要です。また、必要なパッケージをpackage.jsonからインストールするために、npm installを使用します。

オープンウェブUIを実行するために必要なDockerの設定ファイルは何ですか?

-オープンウェブUIを実行するためには、Dockerfileとdocker-compose.ymlという2つの主要な設定ファイルが必要です。これらは、プロジェクトのドキュメントセクションで見つけることができます。

オープンウェブUIで使用されるモデルはどのようにして取得・削除・組み合わせることができますか?

-UI上から直接モデルをダウンロードしたり、既存のモデルを削除したり、複数のモデルを組み合わせて使用することができます。これにより、ユーザーは自分のニーズに応じて柔軟に操作することが可能です。

オープンウェブUIの設定で、どのようなカスタマイズが行えますか?

-オープンウェブUIの設定では、テーマの選択、システムプロンプトの設定、高度なパラメータの調整などができる他、独自のプロンプトを保存・インポート・エクスポートすることもできます。

オープンウェブUIのコミュニティ機能とは何ですか?

-オープンウェブUIのコミュニティ機能を通じて、他のユーザーが共有しているプロンプトにアクセスしたり、独自のモデルを追加・共有することができます。これにより、より幅広い機能を利用することが可能です。

Dockerを使用してオープンウェブUIを実行する際の利点は何ですか?

-Dockerを使用することで、プロジェクトの依存関係をコンテナ化し、実行環境を簡単に構築・管理することができます。また、開発環境のセットアップを簡素化し、同じ環境を簡単に再現できる利点があります。

オープンウェブUIで提供される独自のモデル機能とは何ですか?

-オープンウェブUIでは、ユーザーが独自のモデルを追加できる機能が提供されています。これにより、特定のタスクに特化したモデルを実行することができ、より高度な機能を利用することが可能です。

Outlines

このセクションは有料ユーザー限定です。 アクセスするには、アップグレードをお願いします。

今すぐアップグレードMindmap

このセクションは有料ユーザー限定です。 アクセスするには、アップグレードをお願いします。

今すぐアップグレードKeywords

このセクションは有料ユーザー限定です。 アクセスするには、アップグレードをお願いします。

今すぐアップグレードHighlights

このセクションは有料ユーザー限定です。 アクセスするには、アップグレードをお願いします。

今すぐアップグレードTranscripts

このセクションは有料ユーザー限定です。 アクセスするには、アップグレードをお願いします。

今すぐアップグレード関連動画をさらに表示

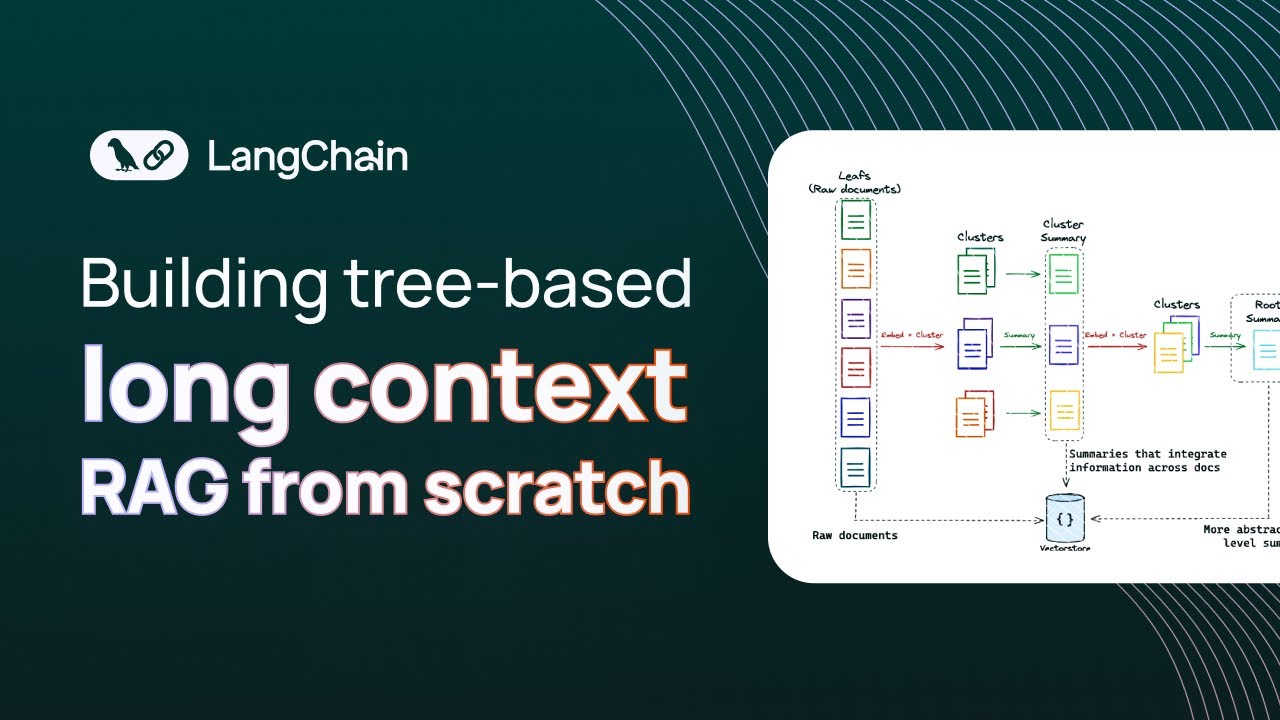

Building long context RAG with RAPTOR from scratch

【バイナリー初心者】初心者が月100万以上稼げる勝率96 8%の最先端型!史上最強手法を実践!!!

Enhance Your Workflow with Superpower ChatGPT Pro: Introducing the Right-Click Menu Feature!

[Lesson 38] Policies and Self Service - Jamf 100 Course

each & underscore_ in Power Query Explained

How to Learn Blender Part 16 | Make a Conveyor Belt with Rigid Bodies and Particle Physics #blender

5.0 / 5 (0 votes)