How to Ingest Data into InfluxDB

Summary

TLDR: In this video, Rick and Barbara from InfluxData discuss the importance of data ingestion in time series databases like InfluxDB. They explore several methods for ingesting data, including direct API integration, client libraries, and the flexible Telegraf agent. They also cover how data from diverse sources, such as heart rate monitors and ferries, can be processed and transformed into a format suitable for InfluxDB. With a focus on scalability, they explain how these solutions handle large volumes of data and keep data flowing even in environments with poor connectivity.

Takeaways

- 😀 InfluxDB is a time-series database that collects data from a variety of devices and systems, including everything from health monitors to large-scale industrial machines like oil rigs and ferries.

- 😀 Data can be ingested directly from devices to InfluxDB or through a gateway that adds processing, filtering, and annotations before sending it to InfluxDB.

- 😀 Devices sending data can be either on the edge (like IoT devices) or in the cloud, with data transmitted from the cloud directly to InfluxDB in some cases.

- 😀 Developers can use a REST API exposed directly in front of InfluxDB for simple data ingestion, but this requires familiarity with the expected data format.

- 😀 To simplify data ingestion, InfluxDB provides client libraries in 12 different programming languages that handle format conversion and other necessary tasks.

- 😀 InfluxData also offers a powerful tool called 'Telegraf,' an agent-based solution that allows flexible and scalable data ingestion from a variety of sources and protocols.

- 😀 Telegraf can run on devices or gateways, transforming incoming data into the appropriate format for InfluxDB before sending it up.

- 😀 Telegraf includes processor plugins and logic to perform data transformation, such as converting MQTT data into the required format for InfluxDB.

- 😀 InfluxDB’s ingestion methods are designed to handle very large-scale data, including support for batching data in cases of poor connectivity or large volumes of data.

- 😀 Both Telegraf and the client libraries have built-in mechanisms to manage delays or interruptions in data transmission, ensuring that data is queued and sent when connectivity is restored.
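The "expected data format" mentioned in the takeaways is InfluxDB's line protocol: a measurement name, optional tags, one or more fields, and an optional timestamp. The sketch below shows roughly the kind of conversion a client library handles for you. The function names and the heart-rate example are illustrative assumptions, not part of any InfluxDB library, and the escaping covers only the common cases.

```python
def _escape(value: str, chars: str) -> str:
    """Backslash-escape every character from `chars` that occurs in `value`."""
    for ch in chars:
        value = value.replace(ch, "\\" + ch)
    return value


def format_field(value) -> str:
    """Render one field value in line protocol syntax."""
    if isinstance(value, bool):  # bool is a subclass of int, so check it first
        return "true" if value else "false"
    if isinstance(value, int):
        return f"{value}i"  # integers carry an 'i' suffix
    if isinstance(value, float):
        return repr(value)
    escaped = str(value).replace("\\", "\\\\").replace('"', '\\"')
    return f'"{escaped}"'  # strings are double-quoted


def to_line_protocol(measurement, tags, fields, timestamp_ns=None) -> str:
    """Build a single line: measurement,tag=value field=value timestamp."""
    if not fields:
        raise ValueError("line protocol requires at least one field")
    head = _escape(measurement, ", ")
    for key in sorted(tags):  # sorted tag keys give a stable output
        head += "," + _escape(key, ",= ") + "=" + _escape(str(tags[key]), ",= ")
    body = ",".join(
        _escape(key, ",= ") + "=" + format_field(value)
        for key, value in fields.items()
    )
    line = head + " " + body
    if timestamp_ns is not None:
        line += " " + str(timestamp_ns)
    return line


# Hypothetical reading from a heart rate monitor.
line = to_line_protocol(
    "heart_rate", {"device": "monitor 1"}, {"bpm": 72}, 1700000000000000000
)
print(line)
```

Writing this by hand is what the raw REST API requires; the client libraries and Telegraf exist so that most developers never need to.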

Q & A

What is the primary focus of the discussion in the video?

-The video focuses on data ingestion in InfluxDB, particularly how data from various devices can be sent to and processed by InfluxDB.

What types of devices are considered data sources for InfluxDB?

-Devices that can serve as data sources for InfluxDB range from small devices like heart rate monitors and blood pressure cuffs to large ones like oil rigs and ferries.

What are the two main approaches for sending data to InfluxDB?

-The two main approaches for sending data to InfluxDB are direct device-to-database communication and using a gateway that processes the data before sending it to InfluxDB.

How does the gateway approach differ from direct device-to-database communication?

-The gateway approach involves a device writing data to a gateway first, where additional processing like annotation or filtering can take place, before the data is sent to InfluxDB. In direct communication, the device sends data straight to the database.
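A minimal sketch of what a gateway step might look like, assuming readings arrive as dictionaries with a `bpm` value. The function name, the `site` annotation, and the plausibility thresholds are hypothetical choices for illustration, not something described in the video.

```python
from typing import Dict, Iterable, Iterator


def gateway_process(
    readings: Iterable[Dict],
    site: str,
    min_bpm: int = 20,
    max_bpm: int = 250,
) -> Iterator[Dict]:
    """Filter out implausible readings and annotate the rest with a site tag."""
    for reading in readings:
        bpm = reading.get("bpm")
        # Filtering: skip readings with a missing or out-of-range value.
        if bpm is None or not (min_bpm <= bpm <= max_bpm):
            continue
        # Annotation: add context that the device itself does not know.
        annotated = dict(reading)
        annotated["site"] = site
        yield annotated


incoming = [
    {"device": "a", "bpm": 72},
    {"device": "b", "bpm": 0},  # sensor glitch, dropped by the filter
    {"device": "c"},            # no value, dropped by the filter
]
forwarded = list(gateway_process(incoming, site="clinic-1"))
print(forwarded)
```

In the direct approach this logic would have to live on each device, or not exist at all.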

What are some of the tools InfluxDB provides to help developers write data from devices or gateways into the database?

-InfluxDB offers a REST API for direct communication with the cloud, client libraries in 12 languages to simplify data writing, and the Telegraf agent for data transformation and management.
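For the REST API option, the sketch below builds (but does not send) a write request using only the Python standard library. It assumes the InfluxDB v2 write endpoint (`/api/v2/write` with `org`, `bucket`, and `precision` query parameters and a `Token` authorization header); the video does not say which version is in use, and the URL, organization, bucket, and token shown are placeholders.

```python
from urllib.parse import urlencode
from urllib.request import Request


def build_write_request(base_url, org, bucket, token, lines, precision="ns"):
    """Build a POST request carrying line protocol to the v2 write endpoint."""
    query = urlencode({"org": org, "bucket": bucket, "precision": precision})
    body = "\n".join(lines).encode("utf-8")  # one line protocol record per line
    return Request(
        url=base_url.rstrip("/") + "/api/v2/write?" + query,
        data=body,
        method="POST",
        headers={
            "Authorization": "Token " + token,
            "Content-Type": "text/plain; charset=utf-8",
        },
    )


# Placeholder values; replace with a real instance URL and credentials.
request = build_write_request(
    "http://localhost:8086",
    org="my-org",
    bucket="my-bucket",
    token="MY_TOKEN",
    lines=["heart_rate,device=monitor1 bpm=72i 1700000000000000000"],
)
print(request.get_method(), request.full_url)
# To actually send it: urllib.request.urlopen(request)
```

The client libraries wrap this same kind of request and add format conversion, batching, and retries on top.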

What is the role of the Telegraf agent in data ingestion?

-Telegraf is an agent-based solution that pipelines metrics from various input formats to multiple outputs, handling data transformations and sending the results to InfluxDB efficiently.

What types of transformations can the Telegraf agent perform on incoming data?

-The Telegraf agent can transform data from different native formats or protocols, such as MQTT, to the format required by InfluxDB, using processor plugins and logic built into the input plugins.
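To make the MQTT case concrete, here is a rough Python sketch of the kind of mapping involved: an MQTT topic and JSON payload become a measurement, tags, and fields. This is not Telegraf's implementation (Telegraf does this through its own plugins and configuration). The topic layout `ferries/<vessel>/<sensor>`, the function name, and the sample values are all assumptions made for illustration.

```python
import json


def mqtt_to_point(topic: str, payload: bytes) -> dict:
    """Map a 'measurement/vessel/sensor' topic and a JSON payload to a point."""
    parts = topic.split("/")
    if len(parts) != 3:
        raise ValueError(f"unexpected topic layout: {topic!r}")
    measurement, vessel, sensor = parts

    data = json.loads(payload)
    # Keep numeric values as fields; other values are ignored in this sketch.
    fields = {
        key: value
        for key, value in data.items()
        if isinstance(value, (int, float)) and not isinstance(value, bool)
    }
    if not fields:
        raise ValueError("payload contains no numeric fields")

    return {
        "measurement": measurement,
        "tags": {"vessel": vessel, "sensor": sensor},
        "fields": fields,
    }


# Hypothetical message from a ferry's engine sensor.
point = mqtt_to_point(
    "ferries/aurora/engine",
    b'{"rpm": 1450, "temp_c": 88.5, "status": "ok"}',
)
print(point)
```

The topic segments become tags because they identify the source, while the numeric payload values become fields because they are the measurements themselves.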

How does InfluxDB handle data ingestion in environments with unreliable connectivity, such as ferries or remote devices?

-InfluxDB's software is designed to handle large volumes of data and manage unreliable connectivity. For instance, the Telegraf agent can batch data for later transmission if connectivity is lost, and client libraries can hold onto data and retry sending it when possible.
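The hold-and-retry behaviour can be sketched as a small bounded buffer. This is an illustration of the idea only, not how Telegraf or the client libraries are implemented; the class name, the `send` callback, and the buffer limit are hypothetical.

```python
from collections import deque


class BufferedWriter:
    """Hold points in memory and send them once the link is available."""

    def __init__(self, send, max_buffered=10_000):
        self._send = send  # callable that takes a list of lines; raises OSError if offline
        # Bounded buffer: when full, the oldest points are dropped first.
        self._buffer = deque(maxlen=max_buffered)

    def write(self, line):
        self._buffer.append(line)

    @property
    def pending(self):
        return len(self._buffer)

    def flush(self):
        """Try to send everything buffered; keep it all if the send fails."""
        if not self._buffer:
            return True
        try:
            self._send(list(self._buffer))
        except OSError:
            return False  # still offline: keep the points for the next attempt
        self._buffer.clear()
        return True


# Simulated link that starts offline and later comes back.
link = {"up": False}
delivered = []


def fake_send(batch):
    if not link["up"]:
        raise ConnectionError("no connectivity")
    delivered.extend(batch)


writer = BufferedWriter(fake_send)
writer.write("ferry_engine rpm=1450i")
writer.write("ferry_engine rpm=1460i")
writer.flush()     # fails, both points stay buffered
link["up"] = True
writer.flush()     # succeeds, buffer is emptied
print(delivered)
```

A bounded buffer is a deliberate trade-off: on a long outage it loses the oldest data rather than exhausting memory on a small device.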

What makes InfluxDB's data ingestion solutions scalable?

-InfluxDB's solutions are designed with scalability in mind, featuring the ability to batch data for efficient transfer, back off in case of delays, and handle large volumes of data from various sources.
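Batching and backing off are simple to express in code. The sketch below splits a stream of points into fixed-size batches and computes a capped exponential back-off schedule; the function names and default values are illustrative assumptions, not settings taken from InfluxDB or Telegraf.

```python
from itertools import islice


def batches(points, size):
    """Yield successive lists of at most `size` points."""
    if size < 1:
        raise ValueError("size must be at least 1")
    iterator = iter(points)
    while True:
        chunk = list(islice(iterator, size))
        if not chunk:
            return
        yield chunk


def backoff_delays(base=1.0, factor=2.0, cap=60.0, attempts=5):
    """Return the wait in seconds before each retry, growing up to `cap`."""
    return [min(cap, base * factor ** attempt) for attempt in range(attempts)]


for batch in batches(range(5), size=2):
    print(batch)

print(backoff_delays(base=1.0, factor=2.0, cap=10.0, attempts=5))
```

Sending fewer, larger requests reduces per-request overhead, and growing the delay between retries avoids hammering a server or link that is already struggling.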

Why is data transformation an important feature of InfluxDB's ingestion process?

-Data transformation is crucial because devices and systems often generate data in various formats and protocols. InfluxDB’s ingestion tools, like Telegraf, help ensure the data is correctly formatted and processed for seamless integration into the database.

Outlines

Cette section est réservée aux utilisateurs payants. Améliorez votre compte pour accéder à cette section.

Améliorer maintenantMindmap

Cette section est réservée aux utilisateurs payants. Améliorez votre compte pour accéder à cette section.

Améliorer maintenantKeywords

Cette section est réservée aux utilisateurs payants. Améliorez votre compte pour accéder à cette section.

Améliorer maintenantHighlights

Cette section est réservée aux utilisateurs payants. Améliorez votre compte pour accéder à cette section.

Améliorer maintenantTranscripts

Cette section est réservée aux utilisateurs payants. Améliorez votre compte pour accéder à cette section.

Améliorer maintenantVoir Plus de Vidéos Connexes

Extending FactoryTalk View SE with Non-Rockwell Data Products - ES&E Automation Expo 2025

Database Design Tips | Choosing the Best Database in a System Design Interview

Google SWE teaches systems design | EP28: Time Series Databases

Choosing a Database for Systems Design: All you need to know in one video

What is Elasticsearch?

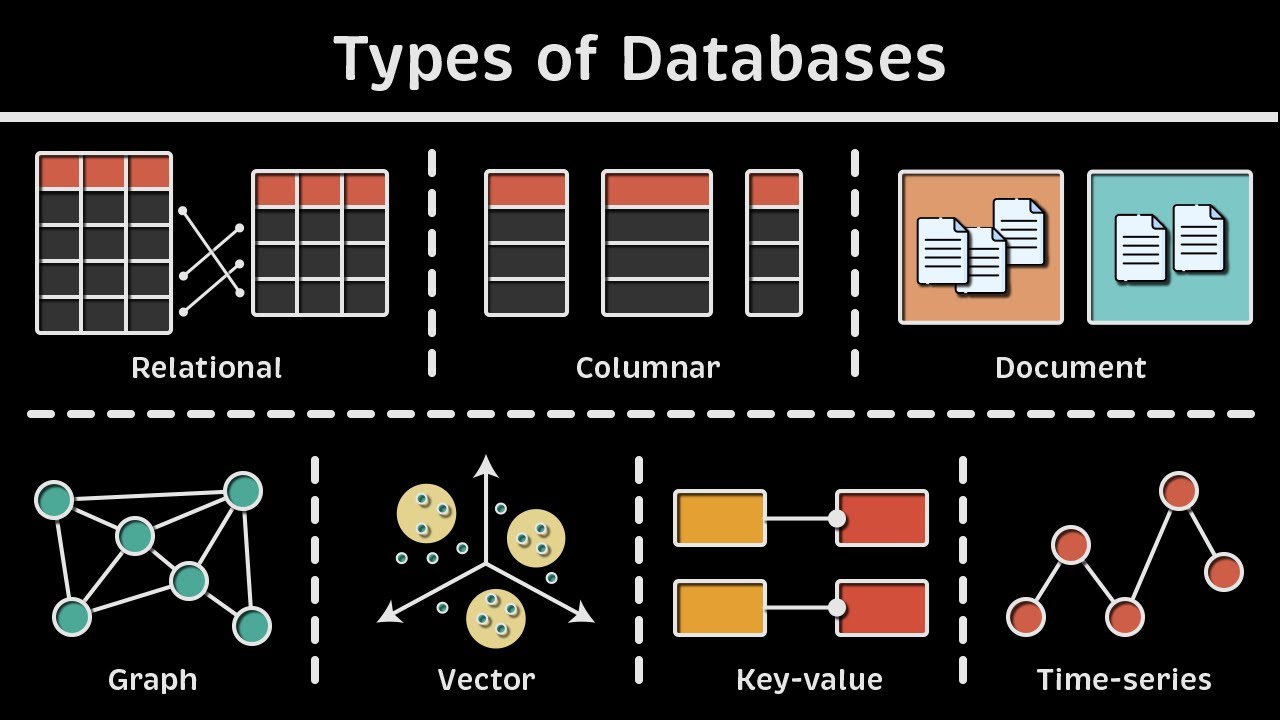

Types of Databases: Relational vs. Columnar vs. Document vs. Graph vs. Vector vs. Key-value & more

5.0 / 5 (0 votes)