Reliability: Technical Error of Measurement (TEM)

Summary

TLDR: This video covers measurement validity and reliability in research. It explains how to make measurements accurate and consistent, using tools such as Cronbach's alpha for internal consistency and the intraclass correlation coefficient (ICC) for inter-rater reliability. It stresses choosing the statistical test according to data type (nominal or numeric) and distribution, with a one-sample t-test for normally distributed data and a non-parametric test otherwise. The tutorial also addresses common errors, such as systematic distortions in data, and emphasizes that reliable instruments are needed to obtain valid results in scientific studies.

Takeaways

- 😀 Validity is the extent to which a tool measures what it is supposed to measure; a highly valid tool gives results that reflect the real-world quantity.

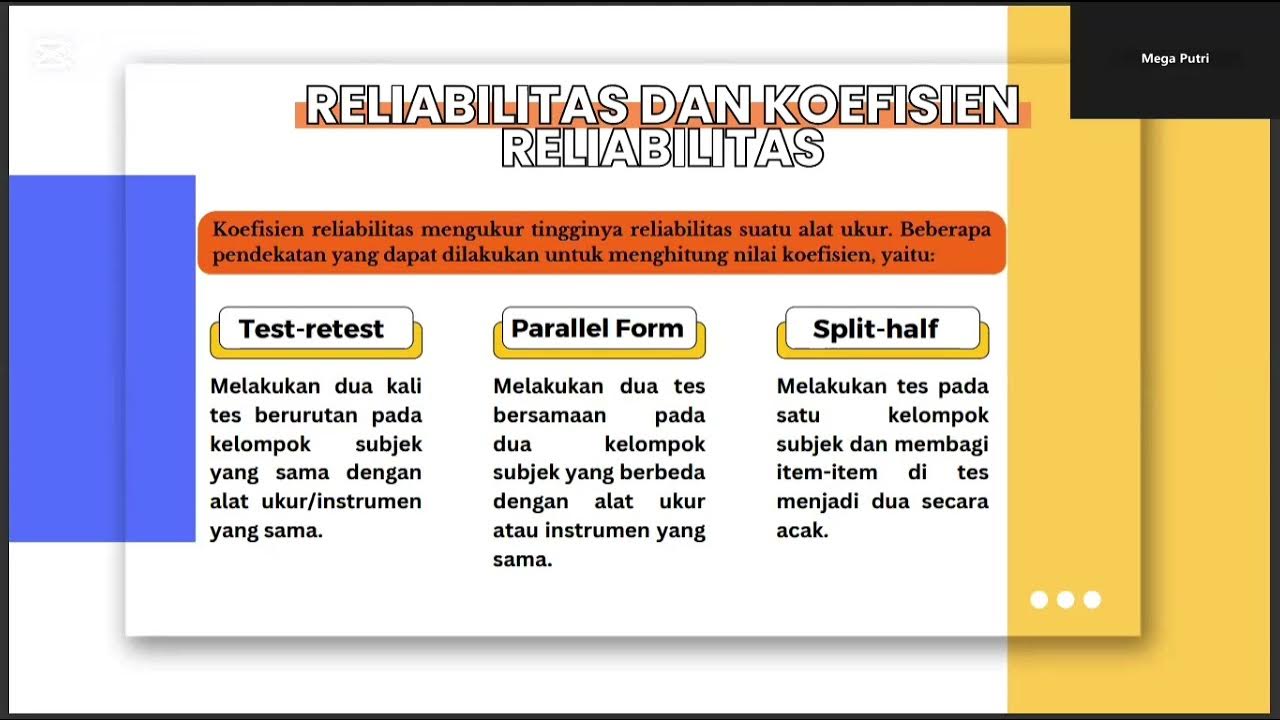

- 😀 Reliability assesses the consistency of measurements over time or between different observers or instruments.

- 😀 Internal consistency for questionnaires can be measured using Cronbach's alpha, while test-retest reliability can be evaluated with the intraclass correlation coefficient (ICC).

- 😀 Categorical data reliability can be measured using the kappa statistic (Cohen's kappa).

- 😀 Numerical data reliability can be assessed using the ICC, Delta calculations, and one-sample t-tests, depending on data distribution.

- 😀 Before choosing a statistical test, it is essential to check data normality to decide between parametric and nonparametric tests.

- 😀 Delta is calculated as the difference between two repeated measurements of the same subject, and Delta values close to zero indicate high reliability.

- 😀 Reliability testing typically requires 5–10% of the sample or a minimum of 25 participants to provide meaningful results.

- 😀 One-sample t-tests are used for parametric analysis of Delta, while nonparametric median tests are used if data are not normally distributed.

- 😀 Consistency across repeated measurements ensures that instruments or examiners produce dependable results, which is critical for research publication or clinical application.

- 😀 Interpreting reliability requires careful consideration of systematic errors and environmental factors that may influence measurements.
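The Delta approach in the takeaways can be sketched as follows. This is a minimal illustration under stated assumptions, not the video's own procedure: the function name `delta_summary` and the height values are hypothetical, and only the Python standard library is used, so the code stops at the t statistic and degrees of freedom rather than a p-value. Five pairs keep the example short; the takeaways suggest at least 25 participants in practice.

```python
import math
import statistics


def delta_summary(first, second):
    """Summarise paired differences (Delta) between two measurement rounds."""
    if len(first) != len(second) or len(first) < 2:
        raise ValueError("need two equally long series with at least 2 pairs")
    # Delta = second measurement minus first measurement, per participant
    deltas = [b - a for a, b in zip(first, second)]
    n = len(deltas)
    mean_delta = statistics.mean(deltas)
    sd_delta = statistics.stdev(deltas)  # sample SD (n - 1 denominator)
    # One-sample t statistic for the null hypothesis "mean Delta = 0"
    if sd_delta > 0:
        t_stat = mean_delta / (sd_delta / math.sqrt(n))
    else:
        t_stat = float("nan")  # undefined when every Delta is identical
    return {"deltas": deltas, "mean": mean_delta, "sd": sd_delta,
            "t": t_stat, "df": n - 1}


# Hypothetical heights (cm) measured twice on the same five participants
round_1 = [150.0, 162.5, 171.0, 158.0, 165.5]
round_2 = [150.5, 162.0, 171.5, 158.0, 166.0]
result = delta_summary(round_1, round_2)
print(result["mean"], result["t"], result["df"])
```

A mean Delta near zero with a small t statistic suggests no systematic shift between the two rounds. For a p-value, or for a nonparametric alternative when Delta is not normally distributed, a statistics package is needed; `scipy.stats.ttest_1samp` and `scipy.stats.wilcoxon` are two options if SciPy is available.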

Q & A

What is the main topic discussed in the transcript?

-The main topic revolves around validity, reliability, and statistical tests in measurements, especially in the context of using questionnaires and comparing different measurement tools.

What is validity in the context of testing and measurement?

-Validity refers to the extent to which a test measures what it is intended to measure. A valid test produces results that accurately reflect the real-world variable being measured.

What is the difference between validity and reliability?

-Validity is about the accuracy of the measurement, while reliability refers to the consistency of the results. A test can be reliable without being valid, but for a test to be useful, it must be both valid and reliable.

How can the reliability of a questionnaire be tested?

-Reliability of a questionnaire can be tested using Cronbach's alpha for internal consistency, or with a test-retest design that checks stability over time, typically summarized with the intraclass correlation coefficient (ICC).

What is Cronbach's alpha, and when is it used?

-Cronbach's alpha is a measure of internal consistency or reliability of a questionnaire, used when the items in the questionnaire are related or assess the same construct.
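As a rough illustration of what Cronbach's alpha computes, the sketch below uses the standard formula alpha = k / (k - 1) × (1 - sum of item variances / variance of total scores), where k is the number of items. The function name `cronbach_alpha` and the scores are hypothetical; the summary itself does not give a formula or data.

```python
import statistics


def cronbach_alpha(rows):
    """rows: one list of k item scores per respondent."""
    k = len(rows[0])
    if k < 2 or len(rows) < 2:
        raise ValueError("need at least 2 items and 2 respondents")
    if any(len(r) != k for r in rows):
        raise ValueError("every respondent must answer all k items")
    # Sample variance of each item across respondents
    item_vars = [statistics.variance([r[i] for r in rows]) for i in range(k)]
    # Sample variance of each respondent's total score
    total_var = statistics.variance([sum(r) for r in rows])
    if total_var == 0:
        raise ValueError("total scores have zero variance")
    return (k / (k - 1)) * (1 - sum(item_vars) / total_var)


# Hypothetical 3-item questionnaire where the items agree perfectly,
# so alpha should come out at (approximately) 1
scores = [
    [1, 1, 1],
    [2, 2, 2],
    [3, 3, 3],
    [4, 4, 4],
]
alpha = cronbach_alpha(scores)
print(round(alpha, 3))
```

Real questionnaire data will give a lower value; the perfectly consistent items here are chosen only so the result is easy to check by hand.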

What is the role of technical error in measurement?

-Technical error refers to variation between repeated measurements of the same subject that comes from the measurement process itself, such as the instrument or the examiner. The larger this error, the less consistent and trustworthy the results.
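The summary names the technical error of measurement (TEM) in its title but does not state a formula. The sketch below assumes the commonly used definition for two measurements per subject, TEM = sqrt(sum of d² / 2n), where d is the difference between the two measurements and n is the number of subjects, plus the relative TEM as a percentage of the overall mean. The function name and the values are hypothetical.

```python
import math


def technical_error_of_measurement(first, second):
    """Return (TEM, relative TEM in %) for two measurements per subject."""
    if len(first) != len(second) or not first:
        raise ValueError("need two equally long, non-empty series")
    n = len(first)
    # Sum of squared differences between the paired measurements
    sum_sq = sum((a - b) ** 2 for a, b in zip(first, second))
    tem = math.sqrt(sum_sq / (2 * n))
    # Relative TEM expresses the error as a percentage of the overall mean
    grand_mean = (sum(first) + sum(second)) / (2 * n)
    relative_tem = 100 * tem / grand_mean
    return tem, relative_tem


# Hypothetical skinfold readings (mm), two measurements on four subjects
measure_1 = [10.0, 12.0, 14.0, 16.0]
measure_2 = [10.0, 14.0, 14.0, 18.0]
tem, relative_tem = technical_error_of_measurement(measure_1, measure_2)
print(tem, round(relative_tem, 2))
```

TEM is in the same unit as the measurement, so a smaller value means the repeated readings agree more closely. Whether a given relative TEM is acceptable depends on the variable and the field's conventions, which the summary does not specify.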

What is a systematic error, and how does it affect reliability?

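-A systematic error occurs when measurements deviate from the true value consistently in the same direction. Because the bias repeats in every measurement, it makes results inaccurate even when they look consistent, and it lowers agreement between instruments or examiners that do not share the same bias.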

How do you compare two measurement tools for reliability?

-To compare two measurement tools for reliability, you can measure the same variable with both tools and check for consistency. Techniques such as correlation or comparing the variance between measurements can help assess reliability.

What is the difference between parametric and non-parametric tests in reliability testing?

-Parametric tests assume that the data follows a certain distribution (usually normal), while non-parametric tests do not. Parametric tests are used when the data is normally distributed, while non-parametric tests are used when the data doesn't meet this assumption.

Why is it important to check the normality of data before performing statistical tests?

-Checking the normality of data is important because many statistical tests assume a normal distribution of data. If the data is not normally distributed, using parametric tests can lead to inaccurate conclusions, and non-parametric tests may be more appropriate.
