Introduction to Generative AI and Explainable AI

Summary

TLDR: Annie Thomas discusses advances in AI, focusing on machine learning and natural language processing. She introduces generative AI, which can create new data such as text or images, and explainable AI, which counters the 'black box' nature of ML by making decision processes transparent. Thomas also touches on large language models such as GPT and on the Transformer architecture, highlighting their applications across tasks. She emphasizes the importance of local language processing and suggests research opportunities in summarization, spelling correction, and sentiment analysis.

Takeaways

- 🌟 Annie Thomas introduces herself as a speaker from India, focusing on advancements in AI, particularly in machine learning and natural language processing.

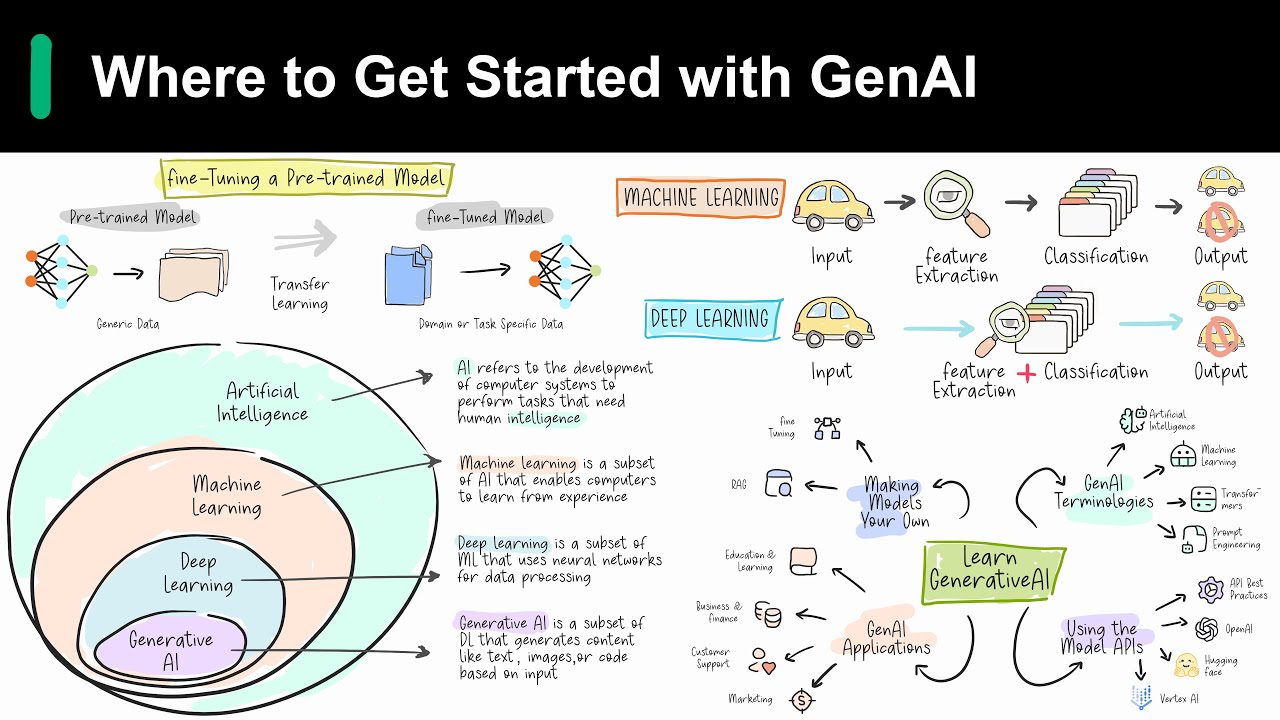

- 📈 She discusses the importance of generative AI, which can create new content like text, images, or audio based on training data, and discriminative models that classify and predict from existing data.

- 💡 Generative AI, also known as Gen AI, operates on unstructured data; a familiar example is the predictive text feature on mobile devices.

- 🧠 Large language models are highlighted as a significant development in AI, capable of understanding context and generating human-like text.

- 🌐 The talk covers the rise of Transformers, a deep learning architecture that has revolutionized various AI applications beyond just language processing.

- 🔍 Explainable AI is introduced as a response to the 'black box' issue in machine learning, aiming to make AI decisions understandable and trustworthy, especially in critical fields like medicine and finance.

- 📊 Annie emphasizes the four principles of explainable AI: meaningful explanations, explanation accuracy, knowledge limits, and helping users decide how much to trust the system.

- 🔎 The script mentions the challenges of balancing interpretability and accuracy in explainable AI and the need for clear abstractions in explanations.

- 📚 Research opportunities in natural language processing are explored, including text summarization, spell checkers, and news article classification, with a focus on local languages.

- 📊 The importance of differentiating between extractive and abstractive summarization is highlighted, where the former extracts parts of the original text and the latter generates new text.

- 📈 The script concludes with the speaker's encouragement for researchers to explore various summarization techniques and semantic analysis to improve AI models.
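
The extractive approach described in the takeaways can be sketched in a few lines. This is a minimal illustration, not the speaker's method: it assumes a simple word-frequency score and an English-oriented tokenizer, and the function name `extractive_summary` is hypothetical. Abstractive summarization would instead need a model that generates new sentences, which is beyond a short sketch.

```python
import re
from collections import Counter


def extractive_summary(text, num_sentences=1):
    """Return the highest-scoring sentences, kept in their original order."""
    # Naive sentence split: break after ., ! or ? followed by whitespace.
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    # Word frequencies over the whole text (lowercased; ASCII letters only,
    # which is an assumption that does not hold for most local languages).
    freq = Counter(re.findall(r"[a-z']+", text.lower()))

    def score(sentence):
        words = re.findall(r"[a-z']+", sentence.lower())
        # Average frequency, so long sentences are not favoured automatically.
        return sum(freq[w] for w in words) / len(words) if words else 0.0

    # Rank sentence indices by score, keep the top ones, restore document order.
    ranked = sorted(range(len(sentences)), key=lambda i: score(sentences[i]), reverse=True)
    chosen = sorted(ranked[:num_sentences])
    return " ".join(sentences[i] for i in chosen)


if __name__ == "__main__":
    print(extractive_summary("Cats sleep. Cats eat fish. Dogs bark.", 2))
```

Every sentence in the output is copied verbatim from the input, which is the defining property of extractive summarization.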

Q & A

Who is Annie Thomas and what is her background?

-Annie Thomas is the speaker of the keynote session. She is from an institute of technology in Durg, in Chhattisgarh State, India.

What is the main topic of Annie Thomas' keynote session?

-The main topic of the keynote session is research prospects in the field of machine learning and natural language processing.

What are the two new aspects of AI in natural language processing mentioned by Annie Thomas?

-The two new aspects of AI in natural language processing mentioned are generative AI and explainable AI.

What is the difference between supervised and unsupervised machine learning models?

-Supervised models are trained on a fixed set of known labels, so their predictions can be checked for correctness. Unsupervised models have no labels; they find structure in the data itself, which can be unstructured.
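
A small sketch may make the contrast concrete. It assumes toy one-dimensional data and hypothetical helper names (`accuracy`, `two_means`); neither comes from the talk. The supervised half can score predictions against known labels, while the unsupervised half only groups unlabeled values.

```python
def accuracy(predict, labeled):
    """Supervised setting: known labels let us check each prediction."""
    return sum(predict(x) == y for x, y in labeled) / len(labeled)


def two_means(values, iterations=10):
    """Unsupervised setting: split unlabeled 1-D values into two groups.

    Assumes the input contains at least two distinct values.
    """
    lo, hi = min(values), max(values)  # initial cluster centres
    for _ in range(iterations):
        # Assign each value to the nearer centre.
        group_lo = [v for v in values if abs(v - lo) <= abs(v - hi)]
        group_hi = [v for v in values if abs(v - lo) > abs(v - hi)]
        # Move each centre to the mean of its group.
        lo = sum(group_lo) / len(group_lo)
        hi = sum(group_hi) / len(group_hi)
    return group_lo, group_hi


if __name__ == "__main__":
    labeled = [(1.0, "small"), (2.0, "small"), (11.0, "large")]
    print(accuracy(lambda x: "small" if x < 5 else "large", labeled))
    print(two_means([1.0, 2.0, 3.0, 10.0, 11.0, 12.0]))
```

Note that `two_means` returns groups without names: with no labels available, there is nothing to check the grouping against.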

What is generative AI and how does it differ from discriminative models?

-Generative AI generates new data based on the training provided and understands the distribution of data. Discriminative models, on the other hand, are used to classify, predict, and cluster and are trained on labeled data.
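
As a rough illustration of this difference, the sketch below fits both kinds of model to the same toy data. The data values, the Gaussian assumption, and all function names are made up for the example. The generative model learns each class's distribution and can therefore sample new values; the discriminative model learns only a decision boundary.

```python
import random
import statistics

# Toy labeled data: (height_cm, label). Values are invented for illustration.
DATA = [(150.0, "child"), (155.0, "child"), (160.0, "child"),
        (175.0, "adult"), (180.0, "adult"), (185.0, "adult")]


def fit_generative(samples):
    """Model each class's distribution as a Gaussian (mean, stdev)."""
    by_label = {}
    for x, y in samples:
        by_label.setdefault(y, []).append(x)
    return {y: (statistics.mean(xs), statistics.stdev(xs)) for y, xs in by_label.items()}


def generate(model, label, rng):
    """Draw a brand-new value from the learned class distribution."""
    mean, stdev = model[label]
    return rng.gauss(mean, stdev)


def fit_discriminative(samples):
    """Learn only a boundary: the midpoint threshold with fewest training errors."""
    xs = sorted(x for x, _ in samples)
    candidates = [(a + b) / 2 for a, b in zip(xs, xs[1:])]

    def errors(threshold):
        return sum((x > threshold) != (y == "adult") for x, y in samples)

    return min(candidates, key=errors)


def classify(threshold, x):
    return "adult" if x > threshold else "child"


if __name__ == "__main__":
    gen = fit_generative(DATA)
    print(generate(gen, "child", random.Random(0)))  # a new, unseen height
    print(classify(fit_discriminative(DATA), 170.0))
```

The threshold classifier can label a new height but has no way to produce one, which is the practical sense in which only the generative model "creates new data".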

Can you provide an example of generative AI mentioned in the script?

-An example of generative AI is the predictive text feature on mobile phones, which suggests the next word in a sentence based on patterns learned from previous inputs.
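
A very small version of this idea can be sketched with bigram counts. This is an assumption-laden toy, not how any particular phone keyboard works: the function names `train_bigrams` and `suggest_next` are hypothetical, and real systems use far larger models.

```python
from collections import Counter, defaultdict


def train_bigrams(corpus):
    """Count, for every word, which words followed it in the training text."""
    follows = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for current, nxt in zip(words, words[1:]):
            follows[current][nxt] += 1
    return follows


def suggest_next(follows, word, k=3):
    """Return up to k most frequent followers of `word`, most frequent first."""
    counts = follows.get(word.lower())
    if not counts:
        return []  # word never seen in training, so no suggestion
    return [w for w, _ in counts.most_common(k)]


if __name__ == "__main__":
    model = train_bigrams(["I am happy", "I am here", "I was there", "I am happy"])
    print(suggest_next(model, "I", k=2))
    print(suggest_next(model, "am", k=1))
```

The suggestions depend entirely on the patterns in the training sentences, which mirrors the point that generative output reflects the training data.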

What are Foundation models in the context of generative AI?

-Foundation models are large language models that work on unstructured data to generate new patterns and can generate new content such as text, images, or audio based on the training provided.

What is the importance of explainable AI in industries?

-Explainable AI counters the 'black box' tendency of machine learning by providing explanations for decisions, which is crucial for building trust in algorithms in domains like medicine, defense, finance, and law.
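
One simple way to picture an explained decision is a linear scoring model, where each feature's contribution can be reported alongside the result. The sketch below is purely illustrative: the loan-scoring scenario, the weights, and the function name `predict_with_explanation` are hypothetical and not taken from the talk.

```python
# Hypothetical weights for a toy loan-scoring model (assumed for illustration).
WEIGHTS = {"income": 0.5, "debt": -0.8, "years_employed": 0.3}
BIAS = -1.0


def predict_with_explanation(features):
    """Return the decision, the raw score, and per-feature contributions."""
    # In a linear model each feature contributes weight * value to the score.
    contributions = {name: WEIGHTS[name] * features[name] for name in WEIGHTS}
    score = BIAS + sum(contributions.values())
    decision = "approve" if score > 0 else "decline"
    # List the most influential features first (largest absolute contribution).
    ranked = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return decision, score, ranked


if __name__ == "__main__":
    decision, score, ranked = predict_with_explanation(
        {"income": 4.0, "debt": 2.0, "years_employed": 1.0}
    )
    print(decision, round(score, 2))
    for name, value in ranked:
        print(f"  {name}: {value:+.2f}")
```

Because the contributions plus the bias add up exactly to the score, the explanation is faithful to what the model computed. More complex models generally need approximate explanation techniques, which is where the interpretability and accuracy tension arises.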

What are the four principles of explainable AI?

-The four principles of explainable AI are providing meaningful explanations, ensuring those explanations are accurate, operating within the system's knowledge limits, and helping users determine how much trust to place in the system.

What are the challenges faced in explainable AI?

-Challenges in explainable AI include the trade-off between interpretability and accuracy, the need for abstractions that make explanations clear, and the difficulty of producing explanations that match human levels of accuracy.

What are some applications of natural language processing mentioned in the script?

-Some applications of natural language processing mentioned are text summarization, spell checkers, news article classification, and sentiment analysis of reviews.
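
To give a flavour of one of these applications, here is a minimal spell-checker sketch based on Levenshtein edit distance. It assumes a small, known vocabulary and picks the closest word; the names `edit_distance` and `correct` are hypothetical, and a practical checker for a local language would also need a proper lexicon and word-frequency information.

```python
def edit_distance(a, b):
    """Levenshtein distance: minimum insertions, deletions, substitutions."""
    prev = list(range(len(b) + 1))  # distances from "" to each prefix of b
    for i, ca in enumerate(a, start=1):
        curr = [i]  # distance from a[:i] to ""
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            curr.append(min(prev[j] + 1,          # delete ca
                            curr[j - 1] + 1,      # insert cb
                            prev[j - 1] + cost))  # substitute (or match)
        prev = curr
    return prev[-1]


def correct(word, vocabulary):
    """Return the vocabulary word closest to `word` (ties broken alphabetically)."""
    return min(vocabulary, key=lambda v: (edit_distance(word, v), v))


if __name__ == "__main__":
    print(correct("sumary", ["summary", "salary", "sentiment"]))
```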
