Has Generative AI Already Peaked? - Computerphile

Summary

TLDR本文探讨了使用生成式人工智能(AI)来理解图像和文本的CLIP嵌入技术。作者质疑了通过增加数据和模型规模就能实现通用智能的观点,指出要达到零样本学习性能,所需的数据量可能是巨大的。文章通过实验发现,对于困难问题,现有模型在数据量不足的情况下表现不佳。此外,数据集中类别的分布不均也影响了模型对稀有类别的识别能力。尽管大型科技公司可能通过增加GPU和使用人类反馈来改进模型,但作者认为,若要处理在互联网文本和搜索中不常见的难题,可能需要寻找新的方法。

Takeaways

- 🧠 讨论了使用生成式AI来生成新句子、新图像等,并理解图像和文本的能力。

- 🔍 通过大量图像和文本配对学习,可以提炼图像内容的语言表示。

- 🚀 有观点认为,随着训练数据和网络规模的增加,AI将发展出跨领域的通用智能。

- 🔬 科学方法强调实验验证而非假设,对于AI性能提升的乐观预测持谨慎态度。

- 📉 近期论文指出,实现通用零样本性能所需的数据量可能非常庞大,难以实现。

- 📈 论文通过实验数据展示了数据量与模型性能之间的关系,通常呈现对数增长而非线性。

- 📊 论文分析了约4000个概念在数据集中的分布,以及它们在下游任务中的表现。

- 🌐 讨论了CLIP嵌入(图像和文本的共享嵌入空间)及其在分类、推荐系统等任务中的应用。

- 🚧 论文指出,对于困难问题,现有数据量不足以有效训练模型,导致性能受限。

- 📚 强调了数据集中类别和概念的不均匀分布问题,常见类别(如猫)过度表示,而特定类别(如某些树种)则表示不足。

- 🔑 暗示了除了收集更多数据之外,可能需要新的数据表示方法或机器学习策略来提升对困难任务的性能。

Q & A

什么是CLIP嵌入(clip embeddings)?

-CLIP嵌入是一种通过大量图像和文本对训练得到的表示方法,能够将图像和文本映射到共享的嵌入空间中,使得描述相同内容的图像和文本在嵌入空间中彼此接近。

为什么有人认为通过增加更多的数据和更大的模型就能实现通用智能(general intelligence)?

-这种观点基于观察到的现象:随着数据和模型规模的增加,AI在图像识别等领域的表现逐渐提升。因此,一些人认为,只要继续增加数据量和模型规模,AI最终能够处理所有类型的任务。

为什么说实验验证比假设更重要?

-在科学领域,实验验证是检验假设正确性的关键。仅仅提出假设而不通过实验来证明它们,无法确保这些假设在实际应用中的有效性。

这篇论文为什么反对仅通过增加数据量和模型规模就能解决所有问题的观点?

-这篇论文通过实验发现,要实现在新任务上的零样本(zero-shot)表现,所需的数据量是极其庞大的,以至于实际上无法实现。这表明仅靠增加数据和模型规模并不能无限提升AI的性能。

什么是下游任务(downstream tasks)?

-下游任务是指在训练好基本模型后,利用这些模型进行的特定应用任务,如分类、推荐系统等。

为什么说在困难问题上应用下游任务需要大量的数据支持?

-因为困难问题往往涉及到更具体的概念,这些概念在数据集中可能非常少见,导致模型无法学习到足够的特征来进行有效识别或分类。

这篇论文是如何测试不同概念在数据集中的分布和模型性能的关系的?

-论文中定义了约4000个不同的概念,并分析了这些概念在数据集中的分布情况,然后测试了在这些概念上的下游任务性能,并将其与对应概念的数据量进行对比。

为什么说数据集中的类别分布不均匀会影响模型性能?

-如果数据集中某些类别(如猫)过度表示,而其他类别(如特定树种)表示不足,模型在常见类别上的性能会很好,但在不常见类别上的性能会较差,因为模型没有足够的数据来学习这些类别的特征。

为什么说仅靠增加数据量可能无法实现AI性能的大幅提升?

-论文中的实验结果表明,随着数据量的增加,模型性能的提升会逐渐趋于平缓,即达到一个平台期,这意味着继续增加数据量可能无法带来预期的性能提升。

这篇论文对于未来AI发展的意义是什么?

-这篇论文提供了对当前AI发展模式的批判性思考,提示我们可能需要寻找新的方法或策略来提升AI的性能,而不仅仅是依赖于数据量的增加。

Outlines

此内容仅限付费用户访问。 请升级后访问。

立即升级Mindmap

此内容仅限付费用户访问。 请升级后访问。

立即升级Keywords

此内容仅限付费用户访问。 请升级后访问。

立即升级Highlights

此内容仅限付费用户访问。 请升级后访问。

立即升级Transcripts

此内容仅限付费用户访问。 请升级后访问。

立即升级浏览更多相关视频

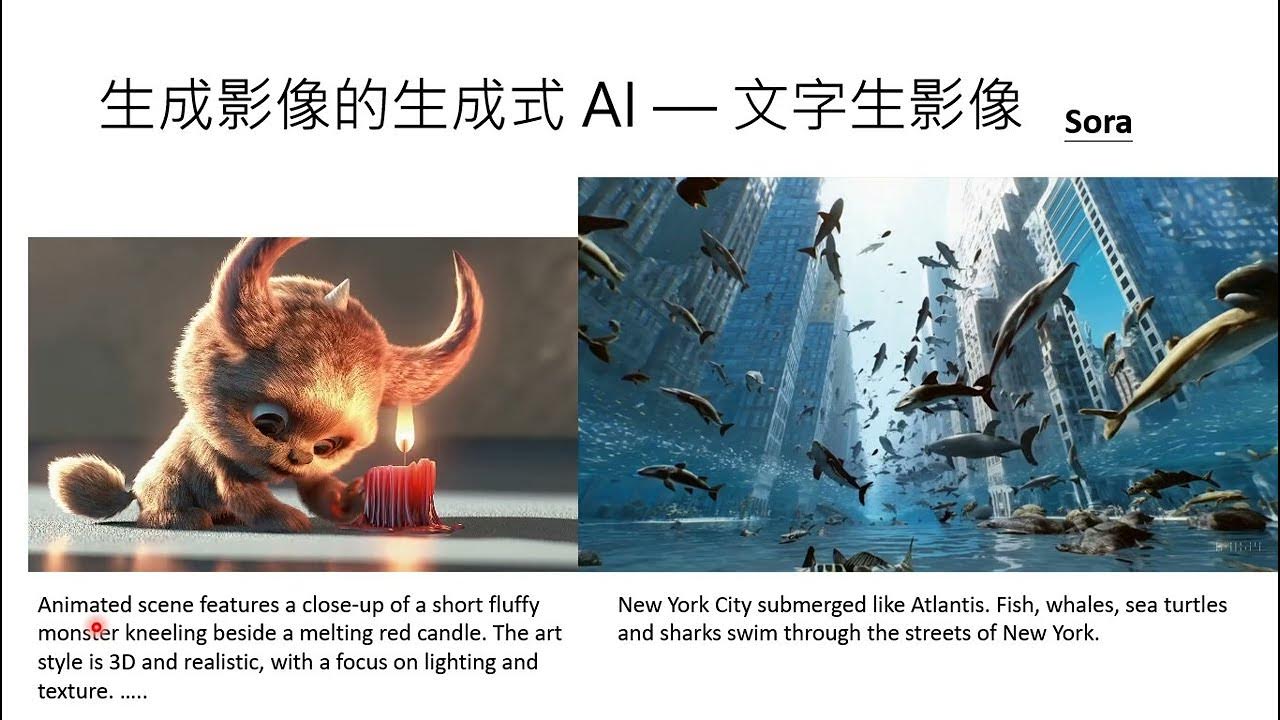

【生成式AI導論 2024】第17講:有關影像的生成式AI (上) — AI 如何產生圖片和影片 (Sora 背後可能用的原理)

The Next Revolution in AI: Multimodal Models

【生成式AI導論 2024】第18講:有關影像的生成式AI (下) — 快速導讀經典影像生成方法 (VAE, Flow, Diffusion, GAN) 以及與生成的影片互動

Playlab Asynch Module 1: Introduction to AI

【生成式AI導論 2024】第2講:今日的生成式人工智慧厲害在哪裡?從「工具」變為「工具人」

GPT-4o 背後可能的語音技術猜測

Stylar AI Tutorial - New AI for Text to Image & Image to Image

5.0 / 5 (0 votes)