The evolution of CAPTCHA

Summary

TLDR: The video script discusses the evolution of CAPTCHAs, starting with their creation by Luis Von Ahn in 2000 to combat spam bots on Yahoo. It explains that CAPTCHAs succeeded at first because computers were poor at optical character recognition, but advances in AI gradually made them easier for bots to solve. reCAPTCHA was introduced to digitize books and, later, to improve services like Google Maps. The script highlights the ongoing battle between bot makers and website security, with Google's reCAPTCHA version three monitoring user behavior to differentiate humans from bots more accurately.

Takeaways

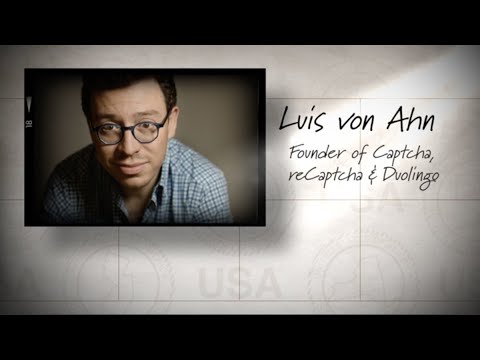

- 🧩 CAPTCHAs were created to distinguish humans from bots, with the first version introduced in 2000 by Luis Von Ahn to combat spam email accounts.

- 🔍 The original CAPTCHA relied on the human ability to read distorted text, which computers at the time struggled with due to poor optical character recognition (OCR) technology.

- ⏱ Luis Von Ahn was concerned about the time users wasted typing CAPTCHAs, which he estimated at 500,000 hours per day.

- 📚 reCAPTCHA was introduced as a way to digitize old books: each challenge shows two words, one a known control word and the other a word from a scan that the system was unsure about.

- 💡 Google acquired reCAPTCHA in 2009 and utilized it for digitizing books and archives, demonstrating its practical application beyond just security measures.

- 🤖 The rise of online services that solve CAPTCHAs for bots and the use of CAPTCHAs on certain websites to deter bots highlighted the ongoing battle between bots and website security.

- 📈 Advances in A.I. led to computers being able to solve CAPTCHAs more accurately than humans, which flipped the original purpose of CAPTCHAs and made them a challenge for humans.

- 🔒 reCAPTCHA version two introduced the 'I'm not a robot' checkbox, collecting additional data on user behavior to improve the accuracy of distinguishing humans from bots.

- 🕵️ reCAPTCHA version three monitors user behavior in the background without the need for interaction, using machine learning to determine if the user is human or a bot.

- 🛑 The evolution of CAPTCHAs has been a continuous arms race, with bot makers adapting to new challenges and developing strategies to bypass security measures.

- 🌐 The internet's evolution ensures that the battle between bot makers and website security will continue, necessitating ongoing innovation in CAPTCHA technology.

Q & A

What problem did Yahoo face in 2000 that led to the creation of CAPTCHA?

-In 2000, Yahoo faced a problem where automatic bots were creating millions of free email accounts and using them to send spam.

Who developed the concept of CAPTCHA and what does it stand for?

-Luis Von Ahn, a Ph.D. student in computer science, developed the concept of CAPTCHA, which stands for Completely Automated Public Turing test to tell Computers and Humans Apart.

Why were CAPTCHAs initially successful in preventing bots?

-CAPTCHAs were initially successful because computers at the time were not proficient in optical character recognition (OCR), while humans could easily read distorted characters.

What was Luis Von Ahn's concern regarding the use of CAPTCHAs?

-Luis Von Ahn was concerned that solving CAPTCHAs was wasting a significant amount of human time and mental effort, as it took an average of 10 seconds per user to type in a CAPTCHA.
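The 10-second figure can be combined with the 500,000 hours per day estimate from the takeaways to see what daily volume the two numbers imply. The snippet below is only a back-of-the-envelope check; the resulting count is derived here and is not stated in the video summary, and the function name is illustrative.

```python
# Figures taken from the summary above.
SECONDS_PER_CAPTCHA = 10
HOURS_SPENT_PER_DAY = 500_000


def implied_captchas_per_day(hours_per_day: int, seconds_each: int) -> int:
    """Convert total hours spent per day into a number of solved CAPTCHAs."""
    total_seconds = hours_per_day * 3600
    return total_seconds // seconds_each


if __name__ == "__main__":
    count = implied_captchas_per_day(HOURS_SPENT_PER_DAY, SECONDS_PER_CAPTCHA)
    print(f"{count:,} CAPTCHAs per day")  # 180,000,000 CAPTCHAs per day
```

In other words, the two estimates are consistent with something on the order of 180 million CAPTCHAs typed every day.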

How does reCAPTCHA improve upon the original CAPTCHA concept?

-reCAPTCHA improved upon CAPTCHA by using the process of solving CAPTCHAs to help digitize old books by having users type in words from scanned documents that the system was unsure about.
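The two-word idea can be sketched roughly as follows. This is a minimal illustration, not reCAPTCHA's actual implementation: the function names, the case-insensitive comparison, and the rule that a reading is accepted after three matching votes are all assumptions made for the example.

```python
from collections import Counter


def control_word_matches(typed: str, known_word: str) -> bool:
    """The control word has a known answer, so it can be checked directly."""
    return typed.strip().lower() == known_word.strip().lower()


def handle_attempt(votes: Counter, known_word: str,
                   typed_control: str, typed_unknown: str) -> bool:
    """Pass the user only if the control word is right.

    When it is, the user's reading of the unknown word is counted as
    one vote toward its transcription.
    """
    if not control_word_matches(typed_control, known_word):
        return False
    votes[typed_unknown.strip().lower()] += 1
    return True


def accepted_transcription(votes: Counter, min_votes: int = 3):
    """Return the leading reading once enough users agree (assumed rule)."""
    if not votes:
        return None
    word, count = votes.most_common(1)[0]
    return word if count >= min_votes else None


if __name__ == "__main__":
    votes = Counter()
    handle_attempt(votes, "morning", "Morning", "upon")
    handle_attempt(votes, "morning", "mourning", "apon")  # fails control word
    handle_attempt(votes, "morning", "morning", "upon")
    handle_attempt(votes, "morning", "morning", "Upon")
    print(accepted_transcription(votes))
```

The point of the design is that a user who gets the control word right is probably human, so their answer for the uncertain word is worth trusting as one data point.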

Why did Google purchase reCAPTCHA in 2009?

-Google purchased reCAPTCHA in 2009 to use its technology for digitizing books for Google Books and the archives of the New York Times, and later to improve Google Maps services.

What is the role of online services that solve CAPTCHAs in the context of bot activities?

-Online services that solve CAPTCHAs allow bots to bypass these tests by forwarding CAPTCHAs to human solvers, thus enabling bots to continue their activities on the internet.

How have advances in AI impacted the effectiveness of CAPTCHAs?

-Advances in AI have made it easier for computers to solve CAPTCHAs, to the point where they can solve them with higher accuracy than humans, thus undermining the purpose of CAPTCHAs to distinguish between humans and bots.

What changes were introduced in reCAPTCHA version two to better identify users?

-reCAPTCHA version two introduced an 'I'm not a robot' checkbox that, when clicked, allowed Google to collect additional data about the user's behavior and context, which was then analyzed by a machine learning model to determine if the user was human or a robot.

How does reCAPTCHA version three differ from its predecessors?

-reCAPTCHA version three does not require users to click a box or solve puzzles. Instead, it continuously monitors user behavior in the background to determine if the user is human or a robot, and can take actions such as blocking, requesting email verification, or holding for human review based on the assessment.
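How a site might act on such an assessment can be sketched as below, assuming the assessment arrives as a score between 0.0 (likely a bot) and 1.0 (likely a human). The threshold values, the function name, and the action labels are hypothetical choices for illustration; each site would pick its own.

```python
def decide_action(score: float,
                  block_below: float = 0.3,
                  review_below: float = 0.5,
                  verify_below: float = 0.7) -> str:
    """Map a human-likelihood score to one of the actions named above.

    All thresholds are illustrative assumptions, not recommended values.
    """
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be between 0.0 and 1.0")
    if score < block_below:
        return "block"
    if score < review_below:
        return "hold_for_review"
    if score < verify_below:
        return "require_email_verification"
    return "allow"


if __name__ == "__main__":
    for s in (0.1, 0.4, 0.6, 0.9):
        print(s, decide_action(s))
```

Graduated responses like this let a site reserve the harshest action for the most suspicious traffic while adding only light friction for borderline cases.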

What is the current challenge for bot makers in relation to reCAPTCHA version three?

-The current challenge for bot makers is to develop AI systems that can mimic human behavior closely enough to fool reCAPTCHA version three's behavior monitoring system, despite its advanced capabilities.
