I Suck At SQL, Now My DB Tells Me How To Fix It

Summary

TLDR: This video shows an enthusiastic developer's reaction to PlanetScale's newly introduced 'Schema Recommendations' feature. The developer highlights how the tool automatically suggests ways to improve database performance, reduce memory and storage consumption, and optimize schema design based on analysis of real production traffic. They explore the feature's capabilities, test it on their own database, and explain how it works. The video conveys the developer's excitement about PlanetScale's approach, which integrates database management into the development workflow and gives non-experts an expert-level database experience.

Takeaways

- ✨ PlanetScale introduced a new feature called 'Schema Recommendations' that automatically suggests schema improvements based on production database traffic to optimize performance, reduce memory/storage, and enhance the schema.

- 🔑 Schema Recommendations can suggest adding indexes for inefficient queries, removing redundant indexes, preventing primary key ID exhaustion, and dropping unused tables.

- 🌐 PlanetScale uses Kafka to process schema changes and trigger background jobs to examine the schema and query performance for potential recommendations.

- 🔍 The recommendations are based on query-level telemetry and analysis of column cardinalities, leveraging tools like Vitess's SQL query parser and MySQL's histogram analysis.

- 🛠️ Recommendations can be applied directly to a database branch for testing and safe migration, following PlanetScale's Git-like branching model for schema changes.

- 📈 An example showcased how adding an index based on a recommendation significantly improved query performance from nearly a second to instantaneous.

- 💰 PlanetScale's pricing model no longer charges based on rows read/written, addressing a previous issue where inefficient queries led to high costs.

- 🌐 The hobby tier of PlanetScale is no longer globally available, prompting the need for alternative free options in certain regions for future tutorials.

- 🤖 While not technically AI, Schema Recommendations acts as a co-pilot for databases, guiding users towards optimized schemas and performance.

- 🎯 PlanetScale aims to provide an expert-level database experience for non-experts through features like Schema Recommendations.

Q & A

What is the new feature introduced by PlanetScale that the video is discussing?

-The new feature introduced by PlanetScale is called 'Schema Recommendations'. It automatically provides recommendations to improve database performance, reduce memory and storage usage, and optimize the schema based on the production database traffic.

How does PlanetScale generate schema recommendations?

-PlanetScale uses a system called the 'Schema Adviser', which analyzes the schema together with recent query performance statistics to generate tailored recommendations. It uses techniques such as query parsing, semantic analysis, and column cardinality extraction to identify inefficient queries and redundant indexes.
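
To illustrate why column cardinality matters, here is a minimal Python sketch that counts distinct values per column in a sample of rows and ranks candidate filter columns from most to least selective (highest cardinality first), a common rule of thumb for choosing which column should lead an index. The function names and sample data are hypothetical. According to the video, PlanetScale draws on MySQL's histogram analysis instead of anything like this code, and real index design also depends on range predicates, sorting, and the overall query mix.

```python
def column_cardinalities(rows: list[dict[str, object]]) -> dict[str, int]:
    """Count the distinct values of each column in a sample of rows."""
    if not rows:
        return {}
    return {col: len({row[col] for row in rows}) for col in rows[0]}


def suggest_index_order(rows: list[dict[str, object]], filter_columns: list[str]) -> list[str]:
    """Rank filter columns from highest to lowest cardinality.

    Heuristic only: a column with more distinct values matches fewer rows
    per value on average, so it narrows a lookup more on its own.
    """
    cardinality = column_cardinalities(rows)
    return sorted(filter_columns, key=lambda col: cardinality[col], reverse=True)


# Hypothetical sample: 100 distinct vendors, only 3 possible statuses.
rows = [
    {"vendor_id": i, "status": ("open", "paid", "void")[i % 3]}
    for i in range(100)
]
print(column_cardinalities(rows))                          # {'vendor_id': 100, 'status': 3}
print(suggest_index_order(rows, ["status", "vendor_id"]))  # ['vendor_id', 'status']
```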

What are the different types of schema recommendations supported by PlanetScale?

-The four types of schema recommendations supported initially are: 1) Adding indexes for inefficient queries, 2) Removing redundant indexes, 3) Preventing primary key ID exhaustion, and 4) Dropping unused tables.

Why are indexes crucial for relational database performance?

-Indexes are crucial for relational database performance because without optimal indexes, the database may need to scan a large number of rows to satisfy queries that only match a few records, leading to performance issues.
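
The difference between scanning and using an index can be made concrete by counting rows examined. In this minimal Python sketch, a sorted list of (key, row position) pairs searched with `bisect` stands in for a real B-tree index; it is a simplified model (tree traversal cost is ignored), and the table and function names are hypothetical.

```python
from bisect import bisect_left, bisect_right


def full_scan(rows, column, value):
    """Find matches without an index: every row must be examined."""
    matches = [row for row in rows if row[column] == value]
    return matches, len(rows)  # rows examined = the whole table


def build_index(rows, column):
    """Build sorted keys plus row positions, a simplified stand-in for a B-tree."""
    pairs = sorted((row[column], pos) for pos, row in enumerate(rows))
    keys = [key for key, _ in pairs]
    positions = [pos for _, pos in pairs]
    return keys, positions


def index_lookup(rows, index, value):
    """Binary-search the index, then examine only the rows it points to."""
    keys, positions = index
    lo, hi = bisect_left(keys, value), bisect_right(keys, value)
    matches = [rows[pos] for pos in positions[lo:hi]]
    return matches, len(matches)  # rows examined = matching rows only


# Hypothetical table: 100,000 orders spread across 1,000 vendors.
orders = [{"id": i, "vendor_id": i % 1000} for i in range(100_000)]

scan_matches, scan_examined = full_scan(orders, "vendor_id", 42)
vendor_index = build_index(orders, "vendor_id")
idx_matches, idx_examined = index_lookup(orders, vendor_index, 42)
print(len(scan_matches), scan_examined)  # 100 100000
print(len(idx_matches), idx_examined)    # 100 100
```

Both paths return the same 100 rows; only the amount of work differs, which is exactly what matters when billing or latency scales with rows read.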

What is the significance of the example discussed in the video where a lack of indexing led to high costs?

-The example illustrates the importance of proper indexing. A missing index on a 'vendor ID' column caused the database to read millions of rows for each query. Under PlanetScale's former rows-read pricing, this cost around $1,000 per day, even though the queries themselves were fast. Adding the appropriate index drastically reduced the costs.
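
The vendor ID situation can be reproduced in miniature by comparing query plans before and after adding the index. This sketch uses Python's built-in `sqlite3` module as a stand-in for MySQL (an assumption made only so it runs anywhere; PlanetScale runs MySQL), with a hypothetical `orders` table. Without the index, SQLite reports a full-table SCAN; with it, a SEARCH using the index. MySQL's `EXPLAIN` shows the same difference as an access type of `ALL` versus `ref`. Exact plan strings vary between SQLite versions.

```python
import sqlite3


def query_plan(conn: sqlite3.Connection, sql: str, params: tuple = ()) -> list[str]:
    """Return the human-readable detail text of SQLite's EXPLAIN QUERY PLAN."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql, params).fetchall()
    return [row[-1] for row in rows]  # detail is the last column of each plan row


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, vendor_id INTEGER, total REAL)")
conn.executemany(
    "INSERT INTO orders (vendor_id, total) VALUES (?, ?)",
    [(i % 500, i * 1.5) for i in range(10_000)],
)

sql = "SELECT * FROM orders WHERE vendor_id = ?"
before = query_plan(conn, sql, (42,))
conn.execute("CREATE INDEX idx_orders_vendor_id ON orders (vendor_id)")
after = query_plan(conn, sql, (42,))

print(before)  # a full-table SCAN of orders
print(after)   # a SEARCH of orders using idx_orders_vendor_id
```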

How does PlanetScale handle redundant indexes?

-PlanetScale scans the schema for redundant indexes every time the schema changes. It suggests removing two types of redundant indexes: 1) exact duplicate indexes, and 2) left-prefix duplicate indexes, where one index's columns match the leftmost columns of another index.
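
Both redundancy rules can be sketched as a comparison of each index's column list against every other index. In this minimal Python version, indexes are a hypothetical mapping from index name to an ordered tuple of columns; an exact duplicate is reported against the alphabetically first copy, and a left-prefix index is reported against the longer index that begins with the same columns. It deliberately ignores uniqueness (a UNIQUE index enforces a constraint and should not be dropped just because a longer index shares its prefix), and it is not PlanetScale's implementation.

```python
def redundant_indexes(indexes: dict[str, tuple[str, ...]]) -> list[tuple[str, str, str]]:
    """Return (redundant_index, covered_by, reason) for each droppable index."""
    findings = []
    names = sorted(indexes)
    for name in names:
        cols = indexes[name]
        for other in names:
            if other == name:
                continue
            other_cols = indexes[other]
            if cols == other_cols:
                # Exact duplicate: keep the alphabetically first copy, flag the others.
                if other < name:
                    findings.append((name, other, "exact duplicate"))
                    break
            elif len(cols) < len(other_cols) and other_cols[: len(cols)] == cols:
                # Left prefix: lookups on `cols` can use the longer index instead.
                findings.append((name, other, "left prefix"))
                break
    return findings


indexes = {
    "idx_created": ("created_at",),
    "idx_created_dup": ("created_at",),
    "idx_vendor": ("vendor_id",),
    "idx_vendor_created": ("vendor_id", "created_at"),
}
for finding in redundant_indexes(indexes):
    print(finding)
# ('idx_created_dup', 'idx_created', 'exact duplicate')
# ('idx_vendor', 'idx_vendor_created', 'left prefix')
```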

What is the purpose of the 'Preventing primary key ID exhaustion' recommendation?

-This recommendation aims to prevent auto-incremented primary keys from exceeding the maximum value allowed by the underlying column type. If a column's values are above 60% of the maximum its type allows, PlanetScale recommends changing the column to a larger type.
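
The 60% rule is a comparison between a column's current maximum ID and the largest value its integer type can hold. This Python sketch hard-codes MySQL's integer type limits and suggests the next larger type once the threshold is crossed. How the current maximum is obtained is left to the caller (in MySQL, for example, from the `AUTO_INCREMENT` column of `information_schema.TABLES`); the function name and return strings are hypothetical.

```python
from typing import Optional

# MySQL integer type limits as (signed max, unsigned max).
MYSQL_INT_MAX = {
    "tinyint": (127, 255),
    "smallint": (32_767, 65_535),
    "mediumint": (8_388_607, 16_777_215),
    "int": (2_147_483_647, 4_294_967_295),
    "bigint": (9_223_372_036_854_775_807, 18_446_744_073_709_551_615),
}
TYPE_ORDER = ["tinyint", "smallint", "mediumint", "int", "bigint"]


def pk_exhaustion_advice(
    col_type: str, unsigned: bool, current_max: int, threshold: float = 0.6
) -> Optional[str]:
    """Suggest a wider type if current_max is above `threshold` of the type's max, else None."""
    limit = MYSQL_INT_MAX[col_type][1 if unsigned else 0]
    if current_max <= threshold * limit:
        return None  # still within the safe range
    position = TYPE_ORDER.index(col_type)
    if position + 1 == len(TYPE_ORDER):
        return "no wider integer type available"
    return TYPE_ORDER[position + 1] + (" unsigned" if unsigned else "")


print(pk_exhaustion_advice("int", False, 1_000_000_000))  # None (about 47% used)
print(pk_exhaustion_advice("int", False, 1_500_000_000))  # bigint (about 70% used)
print(pk_exhaustion_advice("int", True, 3_000_000_000))   # bigint unsigned
```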

How does PlanetScale handle unused tables?

-If a table has not been queried for more than 4 weeks, PlanetScale will recommend dropping that unused table.
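
The four-week rule amounts to comparing each table's most recent query time with the current time. This Python sketch assumes a hypothetical mapping from table name to the timestamp of its last observed query (in PlanetScale's case that information would come from query telemetry, which this code does not model). A table queried exactly four weeks ago is not flagged, because the rule is "more than" four weeks.

```python
from datetime import datetime, timedelta, timezone

UNUSED_AFTER = timedelta(weeks=4)


def unused_tables(last_queried: dict[str, datetime], now: datetime) -> list[str]:
    """Return, sorted by name, tables whose last query was more than four weeks before `now`."""
    return sorted(table for table, ts in last_queried.items() if now - ts > UNUSED_AFTER)


now = datetime(2024, 6, 1, tzinfo=timezone.utc)
last_queried = {
    "orders": now - timedelta(hours=2),
    "legacy_invoices": now - timedelta(days=45),
    "tmp_import": now - timedelta(weeks=4),  # exactly four weeks: not flagged
}
print(unused_tables(last_queried, now))  # ['legacy_invoices']
```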

What is the significance of the 'p50' metric mentioned in the video?

-The 'p50' is the 50th percentile (the median) of a set of query latencies: half of the queries completed faster than this value and half took longer. It is used as a baseline metric for typical query performance.
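
Because p50 is the median, a few very slow queries barely move it, whereas they can pull the mean far upward. This Python sketch uses the nearest-rank definition of a percentile on a hypothetical list of query latencies in milliseconds. Other definitions interpolate between samples, so different tools can report slightly different values.

```python
import math
import statistics


def percentile(samples: list[float], p: float) -> float:
    """Nearest-rank percentile: the smallest sample with at least p% of samples at or below it."""
    if not samples:
        raise ValueError("percentile of an empty sample")
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


latencies_ms = [12, 15, 11, 14, 13, 950, 16, 12, 14, 13]
print(percentile(latencies_ms, 50))   # 13
print(percentile(latencies_ms, 99))   # 950
print(statistics.mean(latencies_ms))  # 107
```

Here a single 950 ms outlier raises the mean to 107 ms, while p50 stays at 13 ms, which is why percentiles describe typical latency better than an average does.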

What is the relationship between PlanetScale and Vitess?

-PlanetScale is the lead maintainer and effective owner of Vitess, a system built to scale MySQL databases more efficiently. PlanetScale maintains a fork of MySQL that works seamlessly with Vitess and provides improved scalability.

Outlines

此内容仅限付费用户访问。 请升级后访问。

立即升级Mindmap

此内容仅限付费用户访问。 请升级后访问。

立即升级Keywords

此内容仅限付费用户访问。 请升级后访问。

立即升级Highlights

此内容仅限付费用户访问。 请升级后访问。

立即升级Transcripts

此内容仅限付费用户访问。 请升级后访问。

立即升级浏览更多相关视频

Claude 3.5 MIGLIORE AI in circolazione [Tutorial Artifacts]

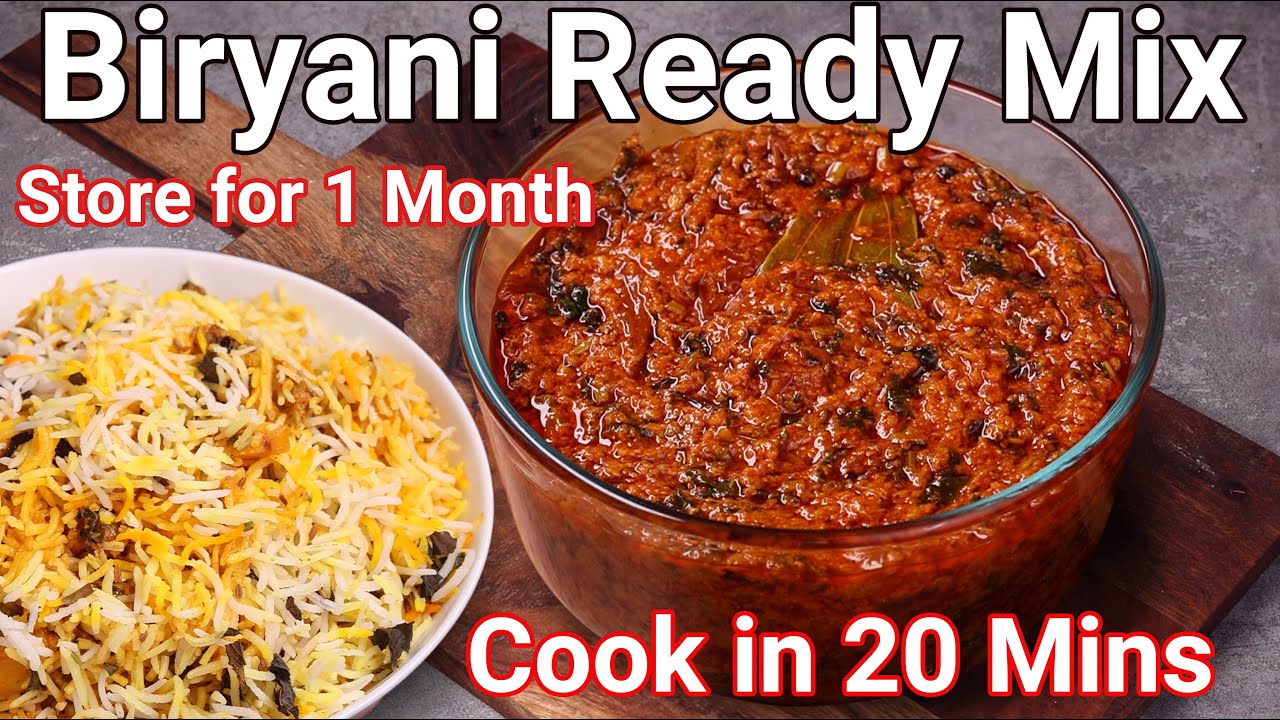

Instant Biriyani Gravy Mix Recipe - Cook Rice Dum Biryani in 20 Mins | Biryani Curry - Store 1 Month

Deferrable Views - New Feature in Angular 17

Ed's Heinz Ad

Getting Started with Amazon Q Developer Customizations

BOB HAIRCUT (graduation) by SANJA KARASMAN

5.0 / 5 (0 votes)