COS 333: Chapter 2, Part 1

Summary

TLDRThis lecture delves into the evolution of high-level programming languages, focusing on historical context and key features. It covers early languages like Plan Calcul and FORTRAN, highlighting FORTRAN's impact on computing and its versions up to FORTRAN 90. The discussion then shifts to Lisp, the first functional programming language, emphasizing its influence on AI and the significance of its dialects, Scheme and Common Lisp, in modern programming.

Takeaways

- 📚 The lecture series will cover the evolution of major high-level programming languages, focusing on their history and main features rather than lower-level details.

- 🧐 Students often find this chapter overwhelming due to the breadth of languages covered, but the focus should be on the purpose, environment, influences, and main features of each language.

- 🔍 The textbook provides a detailed history lesson with a figure illustrating the development timeline and influence of various programming languages.

- 🌐 Plan Calcul, developed by Conrad Zuse, introduced advanced concepts despite never being implemented, showcasing the early theoretical development in programming languages.

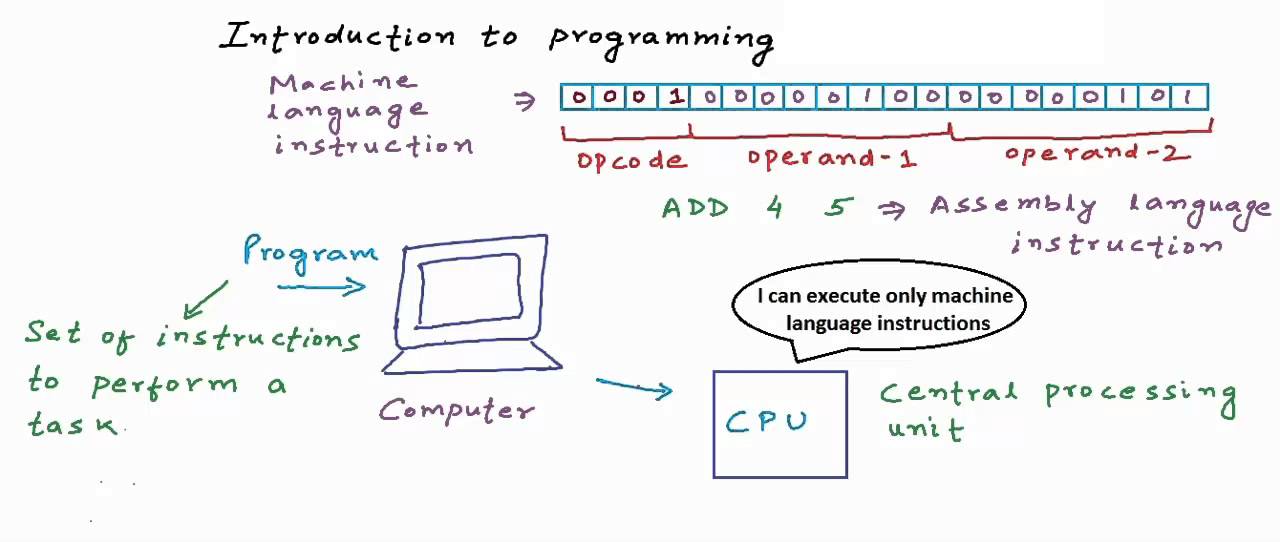

- 🔢 Pseudocode languages served as an intermediary step between machine code and high-level languages, improving readability and writability without full features of high-level languages.

- 🚀 FORTRAN (Formula Translating System) was the first proper high-level programming language, developed for scientific computing and designed to work with the IBM 704 computer.

- 🔄 FORTRAN's development was influenced by the limitations and capabilities of the IBM 704, emphasizing the need for efficient compiled code and good support for array handling and counting loops.

- 🛠 Subsequent versions of FORTRAN introduced features like independent compilation, explicit type declarations, and support for more sophisticated programming constructs like character strings and logical loops.

- 📈 FORTRAN's evolution reflects a shift from highly efficient scientific computing to a more flexible and user-friendly language with features suitable for a wider range of applications.

- 📝 LISP (List Processing) was the first functional programming language, designed to support symbolic computation and list processing, which is integral to artificial intelligence research.

- 🗃️ LISP's simplicity and use of lambda calculus for its syntax highlight its focus on functional programming concepts, distinguishing it from imperative programming paradigms.

Q & A

What is the main focus of Chapter Two in the textbook?

-Chapter Two focuses on the evolution of major high-level programming languages, providing a historical overview and discussing their main features and influences on subsequent languages.

Why might students often feel overwhelmed when studying this chapter?

-Students may feel overwhelmed because the chapter covers a large number of programming languages and includes a lot of lower-level detail, which can be challenging to grasp.

What are the four key aspects to focus on when studying each high-level programming language in the chapter?

-The four key aspects are the purpose of the language, the environment it was developed in, the languages that influenced it, and the main features it introduced that influenced later languages.

What is Plan Calcul and why is it significant?

-Plan Calcul is an early theoretical programming language developed by Conrad Zuse. It is significant because it introduced concepts like advanced data structures and invariants, which were later implemented in more developed high-level programming languages.

What were the limitations of machine code that pseudocode languages sought to address?

-Machine code was difficult to write, understand, and modify due to its low-level nature and the need for absolute addressing. Pseudocode languages aimed to provide a higher-level abstraction while still being close to the hardware for efficiency.

What is the significance of Fortran in the history of programming languages?

-Fortran is significant as it was the first proper high-level programming language, designed to work with the IBM 704 computer, and it introduced the concept of a compilation system for efficient execution.

What are the two main data types in the original Lisp programming language?

-The two main data types in the original Lisp programming language are atoms, representing symbolic concepts, and lists, which are used for list processing.

Why was dynamic storage handling important for Lisp?

-Dynamic storage handling was important for Lisp because it supported the language's core concept of list processing, which required the ability to dynamically grow and shrink data structures.

What is the difference between Scheme and Common Lisp as dialects of Lisp?

-Scheme is a simple, small dialect of Lisp with a focus on clarity and simplicity, often used in educational settings. Common Lisp, on the other hand, is a feature-rich dialect that combines useful features from various Lisp dialects and is sometimes used in larger industrial applications.

How did Fortran 90 change the direction of the Fortran language?

-Fortran 90 introduced significant changes such as dynamic arrays, support for recursion, and parameter type checking, making the language more flexible and less focused solely on high performance.

Outlines

This section is available to paid users only. Please upgrade to access this part.

Upgrade NowMindmap

This section is available to paid users only. Please upgrade to access this part.

Upgrade NowKeywords

This section is available to paid users only. Please upgrade to access this part.

Upgrade NowHighlights

This section is available to paid users only. Please upgrade to access this part.

Upgrade NowTranscripts

This section is available to paid users only. Please upgrade to access this part.

Upgrade Now5.0 / 5 (0 votes)