Introduction to Machine Learning, Lecture-7 (2022 version) (Linear Regression, Normal Equations)

Summary

TLDR: This lecture covers the development of linear regression models and their use for prediction. The speaker explains that 'linear' refers to how the parameters enter the model equation, not to the shape of the fitted curve, which need not be a straight line. The process involves deriving equations and solving them for parameters such as w₀ and w₁, then validating the model on test data. The discussion also covers mean squared error (MSE) as the objective function: how it measures prediction error and why its convexity leads to a unique parameter set for the predictor. The lecture emphasizes the simplicity, elegance, and effectiveness of linear regression on real-world problems such as weather prediction, stock market trends, and housing prices.

Takeaways

- 😀 Linear regression doesn't just fit a straight line but can also model exponential, quadratic, or cubic curves depending on the data's trend.

- 😀 The term 'linear regression' refers to the linearity of the parameters, not the form of the curve being fitted (e.g., linear, quadratic).

- 😀 Linear regression provides a way to predict unknown data points by fitting a model to existing data and using it for forecasting.

- 😀 The goal of testing the model is to check that the error between predicted and actual values is small on data not used for fitting, confirming the model's effectiveness.

- 😀 The method of solving the regression equations involves simple linear algebra (substitution, elimination), which can be done manually for small datasets.

- 😀 The model parameters (w₀, w₁, etc.) are determined by regression analysis, which reduces to solving a system of linear equations in those parameters.

- 😀 The Mean Squared Error (MSE) is used to measure the difference between predicted values and actual ground truth, serving as the error function.

- 😀 The MSE function is quadratic in the parameters, so the error is never negative; the regression parameters are chosen to minimize it.

- 😀 Even though regression is termed 'linear', it allows for fitting more complex curves (polynomial, exponential) to data.

- 😀 The error function is scaled for mathematical convenience: it is divided by m (the number of samples) and multiplied by ½, which simplifies differentiation during optimization without changing where the minimum lies.

- 😀 The objective function used in linear regression (MSE) is convex in nature, ensuring that the optimization algorithm can find a unique, optimal solution for model parameters.
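The error function described in the takeaways can be written out as a short sketch. The notation is an assumption made here (m samples, a single input x, and a straight-line predictor w₀ + w₁x); the lecture's own symbols may differ.

```latex
J(w_0, w_1) = \frac{1}{2m} \sum_{i=1}^{m} \left( w_0 + w_1 x_i - y_i \right)^2

\frac{\partial J}{\partial w_0} = \frac{1}{m} \sum_{i=1}^{m} \left( w_0 + w_1 x_i - y_i \right),
\qquad
\frac{\partial J}{\partial w_1} = \frac{1}{m} \sum_{i=1}^{m} \left( w_0 + w_1 x_i - y_i \right) x_i
```

The factor ½ cancels the 2 produced by differentiating the square, which is why it is included. Setting both derivatives to zero gives two equations that are linear in w₀ and w₁.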

Q & A

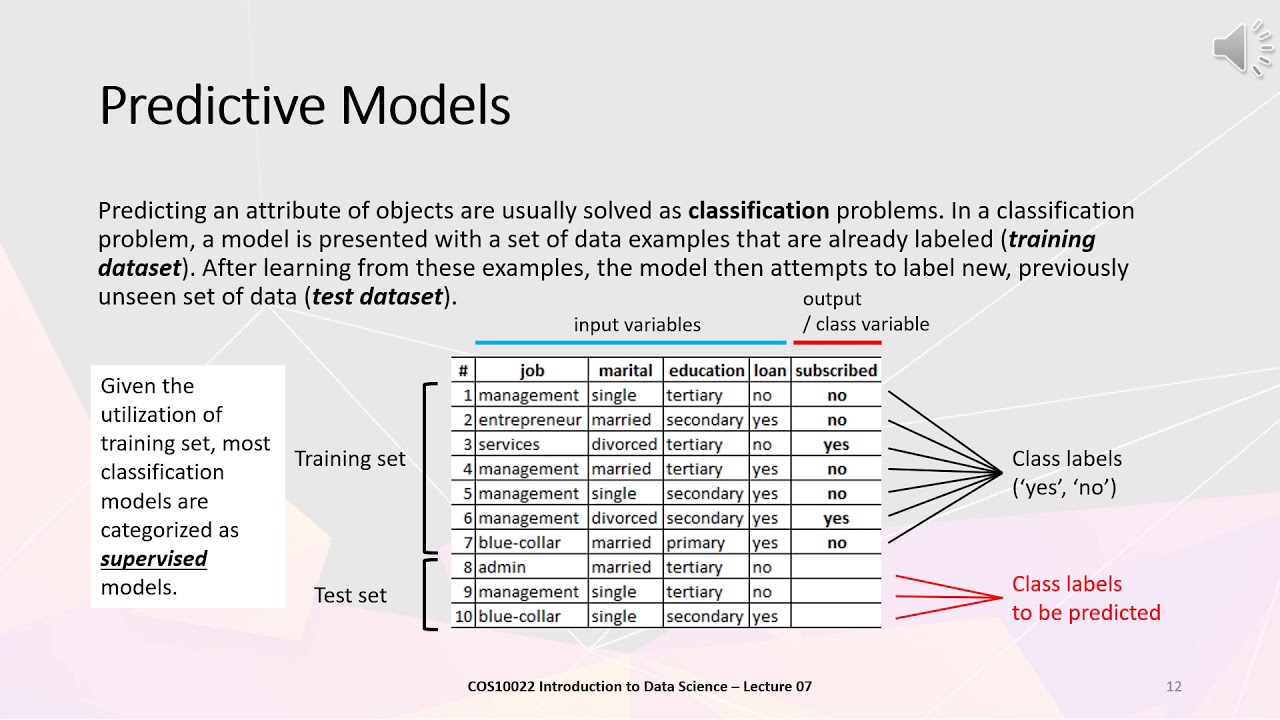

What does linear regression analysis aim to accomplish?

-Linear regression analysis aims to develop a model that predicts a target variable from input features by fitting a relationship that is linear in the model parameters. It calculates the parameter values that minimize the error between the predicted and actual values.

Why is linear regression referred to as 'linear' even when the model involves curves like exponential or quadratic?

-Linear regression is called 'linear' not because it always fits a straight line, but because the model equation is linear in the parameters (such as the weights). For example, y = w₀ + w₁·eˣ describes an exponential curve in x, yet it is still a linear regression model because w₀ and w₁ appear only linearly.
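As a minimal sketch of this point, the snippet below fits an exponential-shaped curve with ordinary least squares. The data, the model form y = w0 + w1·exp(x), and the use of NumPy are assumptions chosen for illustration, not taken from the lecture.

```python
import numpy as np

# Hypothetical data generated from y = 0.5 + 2.0 * exp(x), with no noise.
x = np.linspace(0.0, 2.0, 20)
y = 0.5 + 2.0 * np.exp(x)

# Design matrix: a column of ones (for w0) and a column of exp(x) (for w1).
# The curve is exponential in x, but the model is linear in w0 and w1.
X = np.column_stack([np.ones_like(x), np.exp(x)])

# Least-squares solution for the weights.
w, *_ = np.linalg.lstsq(X, y, rcond=None)
print(w)  # approximately [0.5, 2.0]
```

Because exp(x) is computed before fitting, the solver only ever sees a linear problem in the two weights.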

What is the 'superposition principle' in the context of linear regression?

-The superposition principle in linear regression refers to combining multiple features, each with a corresponding weight, to create a final prediction. For example, when fitting a quadratic curve, each term (like x, x²) is treated as a separate feature, and their weighted sum forms the overall prediction.
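The weighted sum described here can be sketched in a few lines. The function name `predict`, the feature list, and the weights are hypothetical, chosen only to illustrate a quadratic model.

```python
import numpy as np

def predict(x, w):
    """Weighted sum of the features 1, x, and x**2 (a quadratic model)."""
    features = [np.ones_like(x), x, x**2]  # one feature per term
    # Superposition: add up each feature scaled by its own weight.
    return sum(w_j * f_j for w_j, f_j in zip(w, features))

x = np.array([0.0, 1.0, 2.0])
w = [1.0, 2.0, 3.0]  # hypothetical weights w0, w1, w2
print(predict(x, w))  # values 1, 6, 17
```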

How do you solve the linear equations derived from linear regression?

-To solve the linear equations, substitution and elimination methods are commonly used. The system of linear equations is simplified by substituting values, leading to the calculation of the weights (parameters) that minimize the error.
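For a straight-line model with two weights, elimination followed by substitution can be sketched as below. The function name `fit_line` and the sample data are assumptions for illustration; the two equations in the docstring are the standard ones for fitting y = w0 + w1·x by least squares.

```python
def fit_line(xs, ys):
    """Solve the two linear equations for w0 and w1 by elimination.

    m*w0      + sum(x)*w1   = sum(y)
    sum(x)*w0 + sum(x^2)*w1 = sum(x*y)
    """
    m = len(xs)
    sx, sy = sum(xs), sum(ys)
    sxx = sum(x * x for x in xs)
    sxy = sum(x * y for x, y in zip(xs, ys))

    # Eliminate w0: multiply the second equation by m, the first by sum(x),
    # and subtract, leaving a single equation in w1.
    denom = m * sxx - sx * sx
    if denom == 0:
        raise ValueError("all x values are identical; the line is not determined")
    w1 = (m * sxy - sx * sy) / denom
    # Substitute w1 back into the first equation to get w0.
    w0 = (sy - w1 * sx) / m
    return w0, w1

# Hypothetical data lying exactly on y = 1 + 2x.
w0, w1 = fit_line([1, 2, 3, 4], [3, 5, 7, 9])
print(w0, w1)  # 1.0 2.0
```

This manual approach is practical for small datasets; for many features a matrix solver is the usual choice.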

What role does the 'mean squared error' (MSE) function play in linear regression?

-The mean squared error function is used to quantify the error in the model's predictions by comparing predicted values with actual values. The goal of linear regression is to minimize the MSE, which is achieved by optimizing the model's parameters.

How is the error in predictions calculated in linear regression?

-The error is calculated by subtracting the predicted value from the actual value (ground truth) and then squaring the result. This squared difference is summed over all samples and averaged to compute the mean squared error.
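The calculation just described can be sketched directly. The function name and the sample values are hypothetical.

```python
def mean_squared_error(y_true, y_pred):
    """Average of the squared differences between ground truth and predictions."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    squared_errors = [(t - p) ** 2 for t, p in zip(y_true, y_pred)]
    return sum(squared_errors) / len(squared_errors)

# Hypothetical values: errors are 0.5, 0.0, -1.0, so squares are 0.25, 0.0, 1.0.
mse = mean_squared_error([3.0, 5.0, 7.0], [2.5, 5.0, 8.0])
print(mse)  # 1.25 / 3, about 0.4167
```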

What happens when you use a quadratic or cubic curve in regression analysis?

-When using a quadratic or cubic curve, the model includes higher-degree terms like x², x³, etc., allowing it to fit more complex patterns in the data. Despite the complexity, the regression technique remains linear because the parameters are still linear in the equation.
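To illustrate, the sketch below fits a straight line and a cubic to the same data and compares their training errors. The data-generating cubic and the helper name `fit_poly_mse` are assumptions made for this example.

```python
import numpy as np

# Hypothetical noise-free data from a cubic: y = 1 - 2x + 0.5x^3.
x = np.linspace(-2.0, 2.0, 25)
y = 1.0 - 2.0 * x + 0.5 * x**3

def fit_poly_mse(degree):
    """Fit a polynomial of the given degree by least squares; return training MSE."""
    # Columns are x^0, x^1, ..., x^degree; the weights enter linearly.
    X = np.vander(x, degree + 1, increasing=True)
    w, *_ = np.linalg.lstsq(X, y, rcond=None)
    return np.mean((X @ w - y) ** 2)

mse_line = fit_poly_mse(1)
mse_cubic = fit_poly_mse(3)
print(mse_line, mse_cubic)  # the cubic fit's error is essentially zero
```

The same least-squares routine handles both fits; only the set of feature columns changes.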

Why is it important to use a convex error function in linear regression?

-A convex error function has no spurious local minima: any minimum an optimization algorithm reaches is the global one, which makes the optimal parameters easy to find. For MSE in linear regression the minimum is also unique, provided the features are linearly independent. Non-convex error functions may have multiple local minima, so an optimizer can get stuck at a suboptimal solution.
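One way to see the effect of convexity is to run an optimizer from two very different starting points and check that both runs end at the same weights. Gradient descent is used here only as an illustrative optimizer; the summary does not say which one the lecture uses, and the data, learning rate, and step count are assumptions.

```python
import numpy as np

# Hypothetical data lying exactly on y = 1 + 2x.
x = np.array([0.0, 1.0, 2.0, 3.0])
y = 1.0 + 2.0 * x
X = np.column_stack([np.ones_like(x), x])

def gradient_descent(w_start, lr=0.1, steps=5000):
    """Minimize J(w) = (1/2m) * sum((Xw - y)^2) by plain gradient descent."""
    w = np.array(w_start, dtype=float)
    m = len(y)
    for _ in range(steps):
        grad = X.T @ (X @ w - y) / m  # gradient of J with respect to w
        w -= lr * grad
    return w

w_a = gradient_descent([-10.0, 10.0])
w_b = gradient_descent([50.0, -30.0])
print(w_a, w_b)  # both are close to [1, 2]
```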

What does the term 'normal equations' refer to in the context of linear regression?

-Normal equations refer to the set of equations derived during the process of minimizing the error function in linear regression. These equations are linear in the model parameters and can be solved directly to determine the optimal parameter values.
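In matrix form the normal equations are commonly written (XᵀX)w = Xᵀy, where X is the matrix of input features and y the vector of targets. The sketch below solves them with NumPy; the data values are hypothetical, and the matrix notation is an assumption about how the lecture presents them.

```python
import numpy as np

# Hypothetical measurements, roughly following y = 1 + 2x.
x = np.array([0.0, 1.0, 2.0, 3.0, 4.0])
y = np.array([1.1, 2.9, 5.2, 7.1, 8.8])

# Design matrix with a column of ones so that w[0] is the intercept w0.
X = np.column_stack([np.ones_like(x), x])

# Normal equations: (X^T X) w = X^T y. Solve the linear system directly
# rather than forming an explicit inverse.
w = np.linalg.solve(X.T @ X, X.T @ y)
print(w)  # [w0, w1]
```

Solving the system with `np.linalg.solve` avoids computing an explicit matrix inverse, which is generally the more numerically stable choice.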

How do you test the effectiveness of a linear regression model?

-The effectiveness of a linear regression model is tested by evaluating its performance on a separate test dataset that was not used during the training phase. The error on the test data is calculated, and if the error is within an acceptable range, the model is considered effective.
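A minimal sketch of this evaluation procedure follows. The synthetic data, the 80/20 split, and the fixed random seed are all assumptions for illustration; what counts as an acceptable error depends on the application.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical synthetic data: y = 1 + 2x plus Gaussian noise.
x = rng.uniform(0.0, 10.0, size=100)
y = 1.0 + 2.0 * x + rng.normal(0.0, 0.5, size=100)

# Shuffle, then hold out 20% of the samples as a test set.
idx = rng.permutation(len(x))
train, test = idx[:80], idx[80:]

def design(values):
    """Design matrix with a column of ones (intercept) and a column of inputs."""
    return np.column_stack([np.ones_like(values), values])

# Fit on the training samples only.
w, *_ = np.linalg.lstsq(design(x[train]), y[train], rcond=None)

# Evaluate on samples the model has not seen.
test_mse = np.mean((design(x[test]) @ w - y[test]) ** 2)
print(test_mse)
```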
