Recent breakthroughs in AI: A brief overview | Aravind Srinivas and Lex Fridman

Summary

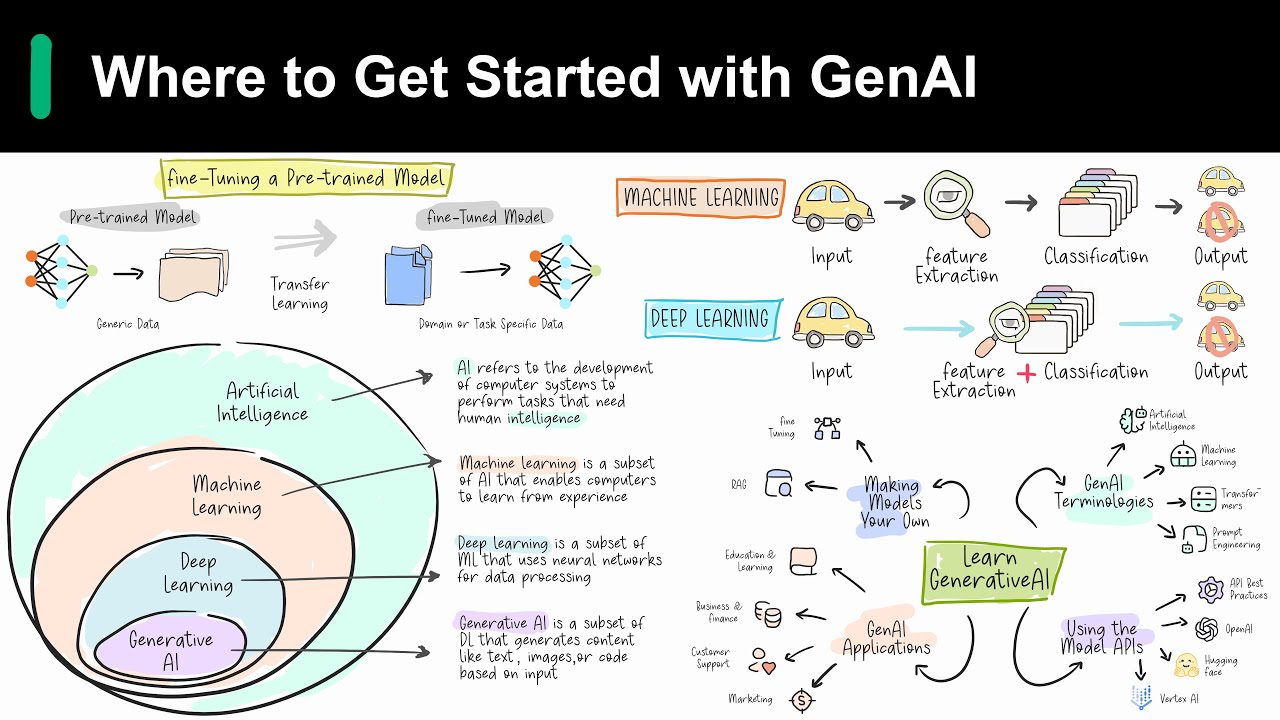

TLDR: The transcript discusses the evolution of AI, focusing on the pivotal role of self-attention and the Transformer model in advancing natural language processing. It highlights how parallel computation and efficient hardware utilization have been crucial. The conversation also touches on the importance of unsupervised pre-training with large datasets and the refinement of models through post-training phases. The discussion suggests future breakthroughs may lie in decoupling reasoning from memorization and in small, specialized models for efficient reasoning.

Takeaways

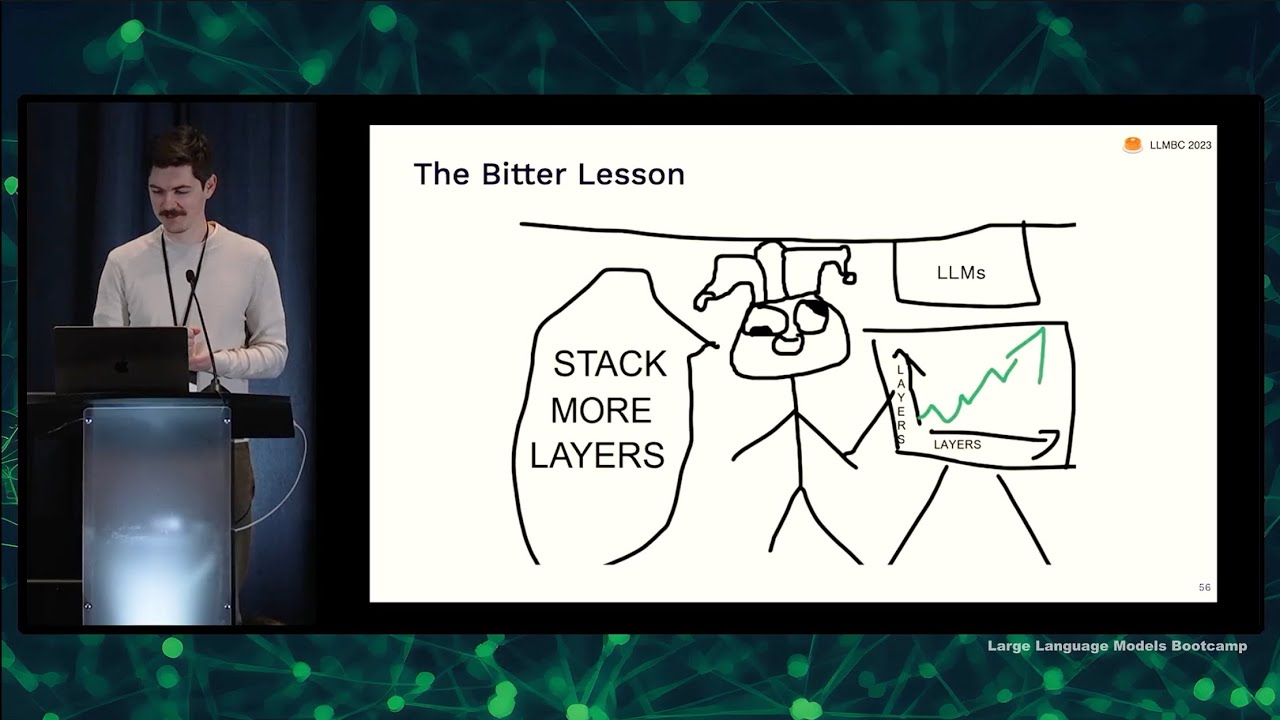

- 📈 The concept of self-attention was pivotal in the development of the Transformer model, leading to significant advancements in AI.

- 🧠 Attention mechanisms allowed for more efficient computation compared to RNNs, enabling models to learn higher-order dependencies.

- 💡 Masking in convolutional models was a key innovation that allowed for parallel training, vastly improving computational efficiency.

- 🚀 Transformers combined the strengths of attention mechanisms and parallel processing, becoming a cornerstone of modern AI architectures.

- 📚 Unsupervised pre-training with large datasets has been fundamental in training language models like GPT, leading to models with impressive natural language understanding.

- 🔍 The importance of data quality and quantity in training AI models cannot be overstated, with larger and higher-quality datasets leading to better model performance.

- 🔄 The iterative process of pre-training and post-training, including reinforcement learning and fine-tuning, is crucial for developing controllable and effective AI systems.

- 🔧 The post-training phase, including data formatting and tool usage, is essential for creating user-friendly AI products and services.

- 💡 Training small language models (SLMs) on specific reasoning-focused datasets is an emerging research direction that could make AI systems far more efficient.

- 🌐 Open-source models provide a valuable foundation for experimentation and innovation in the post-training phase, potentially leading to more specialized and efficient AI systems.

Q & A

What was the significance of the paper 'Soft Attention' in the development of AI models?

- The paper 'Soft Attention' was significant because it introduced attention mechanisms, which were first applied in the paper 'Align and Translate'. Attention proved pivotal for models that handle dependencies in data better, which in turn improved machine translation systems.
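To make the idea of soft attention concrete, here is a minimal sketch in plain Python. It assumes dot-product scoring for simplicity (the original translation work used a small learned scoring network instead), and the names `softmax` and `soft_attention` are illustrative, not taken from the discussion. The point it shows: the output is a weighted average of all values, with weights that sum to 1 and favor the entries most similar to the query.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def soft_attention(query, keys, values):
    """Return (weights, context) for one query.

    Assumption: similarity is a plain dot product between query and key.
    """
    scores = [sum(q * k for q, k in zip(query, key)) for key in keys]
    weights = softmax(scores)
    dim = len(values[0])
    # The context vector is a weighted average of the value vectors.
    context = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]
    return weights, context

weights, context = soft_attention(
    query=[2.0, 0.0],
    keys=[[1.0, 0.0], [0.0, 1.0]],
    values=[[10.0, 0.0], [0.0, 10.0]],
)
print(weights, context)
```

Because every position receives a nonzero weight, the whole operation is differentiable, which is what makes the attention "soft".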

How did the idea of using simple RNN models scale up and influence AI development?

- Scaling up simple RNN models was initially a brute-force approach that required significant computational resources. However, it demonstrated that increasing model size and training data improves performance, which was a precursor to the development of more efficient models like the Transformer.

What was the key innovation in the paper 'Pixel RNNs' that influenced subsequent AI models?

- The key innovation in 'Pixel RNNs' was the realization that an entirely convolutional model could perform autoregressive modeling with masked convolutions. This allowed for parallel training instead of sequential backpropagation through time, significantly improving computational efficiency.
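A one-dimensional sketch of a masked convolution may help here. It is an illustration under stated assumptions, not the code from the paper: the function name `masked_conv1d` and the `include_current` flag are hypothetical, and real models apply the mask to 2D image kernels. The mask guarantees that the output at position `t` never sees inputs from later positions.

```python
def masked_conv1d(x, kernel, include_current=False):
    """Causal 1D convolution over a sequence x.

    Output t is a weighted sum of inputs at positions <= t (or strictly < t
    when include_current is False), so no output can peek at the future.
    """
    offset = 0 if include_current else 1
    y = []
    for t in range(len(x)):  # each t is independent of every other output
        acc = 0.0
        for j, w in enumerate(kernel):
            src = t - offset - j
            if src >= 0:  # positions before the start count as zero padding
                acc += w * x[src]
        y.append(acc)
    return y

print(masked_conv1d([1, 2, 3, 4], kernel=[1, 1]))
```

Note that no output depends on another output, only on the inputs. During training the full input sequence is known, so all positions can be computed at once, whereas an RNN must step through them one by one.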

How did the Transformer model combine the best elements of previous models to create a breakthrough?

- The Transformer model combined the power of attention mechanisms, which could handle higher-order dependencies, with the efficiency of fully convolutional models that allowed for parallel processing. This combination led to a significant leap in performance and efficiency in handling sequential data.
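The combination described above can be sketched as self-attention with a causal mask. This is a simplified illustration, assuming a single head with no learned query, key, or value projections (real Transformers have all of these); the name `causal_self_attention` is hypothetical. Each position attends only to itself and earlier positions, and each row is computed independently of the others.

```python
import math

NEG_INF = float("-inf")

def causal_self_attention(x):
    """x is a list of token vectors; returns one output vector per token.

    Assumption: queries, keys, and values are the raw inputs themselves.
    """
    n, d = len(x), len(x[0])
    outputs = []
    for i in range(n):  # rows are independent, so they can run in parallel
        scores = [
            sum(a * b for a, b in zip(x[i], x[j])) / math.sqrt(d) if j <= i else NEG_INF
            for j in range(n)
        ]
        peak = max(scores)
        exps = [math.exp(s - peak) for s in scores]  # exp(-inf) is 0.0
        total = sum(exps)
        weights = [e / total for e in exps]
        outputs.append(
            [sum(w * x[j][k] for j, w in enumerate(weights)) for k in range(d)]
        )
    return outputs

tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
print(causal_self_attention(tokens))
```

Masked positions receive a weight of exactly zero, so changing a later token leaves every earlier output unchanged. That property is what lets the model train on all positions of a sequence in one pass.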

What was the importance of the insight that led to the development of the Transformer model?

- The insight that led to the Transformer model was recognizing the value of parallel computation during training to efficiently utilize hardware. This was a significant departure from sequential processing in RNNs, allowing for faster training times and better scalability.

How did the concept of unsupervised learning contribute to the evolution of large language models (LLMs)?

- Unsupervised learning allowed for the training of large language models on vast amounts of text data without the need for labeled examples. This approach enabled models to learn natural language and common sense, which was a significant step towards more human-like AI.
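The reason no labels are needed is that the text supplies its own targets: each token is the "label" for the tokens before it. The sketch below illustrates only that training objective, using a toy bigram count model as a stand-in (an assumption made for brevity; GPT-style models use a Transformer, not counts). The helper names and the smoothing constant `alpha` are hypothetical.

```python
import math
from collections import Counter, defaultdict

def train_bigram(tokens):
    """Count how often each token follows each other token in raw text."""
    counts = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        counts[prev][nxt] += 1
    return counts

def next_token_prob(counts, prev, nxt, vocab_size, alpha=1.0):
    """Smoothed estimate of P(nxt | prev); alpha is add-alpha smoothing."""
    total = sum(counts[prev].values())
    return (counts[prev][nxt] + alpha) / (total + alpha * vocab_size)

def average_nll(counts, tokens, vocab_size):
    """Average negative log-likelihood of each next token (lower is better)."""
    losses = [
        -math.log(next_token_prob(counts, prev, nxt, vocab_size))
        for prev, nxt in zip(tokens, tokens[1:])
    ]
    return sum(losses) / len(losses)

corpus = "the cat sat on the mat".split()
model = train_bigram(corpus)
print(average_nll(model, corpus, vocab_size=len(set(corpus))))
```

Larger models trained on more text minimize the same kind of next-token loss; what changes is the capacity of the predictor and the amount of context it can use.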

What was the impact of scaling up the size of language models on their capabilities?

- Scaling up the size of language models, as seen with models like GPT-2 and GPT-3, allowed them to process more complex language tasks and generate more coherent and contextually relevant text. It also enabled them to handle longer dependencies in text.

How did the approach to data and tokenization evolve as language models became more sophisticated?

- As language models became more sophisticated, the focus shifted to the quality and quantity of the data they were trained on. There was an increased emphasis on using larger datasets and ensuring the tokens used were of high quality, which contributed to the models' improved performance.

What is the role of reinforcement learning from human feedback (RLHF) in refining AI models?

- Reinforcement learning from human feedback (RLHF) plays a crucial role in making AI models more controllable and well-behaved. It allows for fine-tuning the models to better align with human values and expectations, which is essential for creating usable and reliable AI products.
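One ingredient commonly used in RLHF pipelines is a reward model trained on human preference pairs. The discussion does not describe this step in detail, so the sketch below is an assumption about a typical setup: a pairwise loss that is small when the response humans preferred receives the higher reward score. The function name `preference_loss` is hypothetical.

```python
import math

def preference_loss(reward_chosen, reward_rejected):
    """Pairwise loss: -log(sigmoid(reward_chosen - reward_rejected)).

    Written as softplus(-diff) in a numerically stable form.
    """
    diff = reward_chosen - reward_rejected
    return max(-diff, 0.0) + math.log1p(math.exp(-abs(diff)))

# The loss shrinks as the preferred response is scored further above the other.
print(preference_loss(2.0, 0.0), preference_loss(0.0, 2.0))
```

A reward model trained this way can then score new outputs, and that score serves as the feedback signal when fine-tuning the language model.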

How does the concept of pre-training and post-training relate to the development of AI models?

- Pre-training involves scaling up models on large amounts of compute to acquire general intelligence and common sense. Post-training, which includes RLHF and supervised fine-tuning, refines these models to perform specific tasks. Both stages are essential for creating AI models that are both generally intelligent and task-specifically effective.

What are the potential benefits of training smaller models on specific data sets that require reasoning?

- Training smaller models on specific reasoning-focused data sets could lead to more efficient and potentially more effective models. It could reduce the computational resources required for training and allow for more rapid iteration and improvement, potentially leading to breakthroughs in AI reasoning capabilities.
