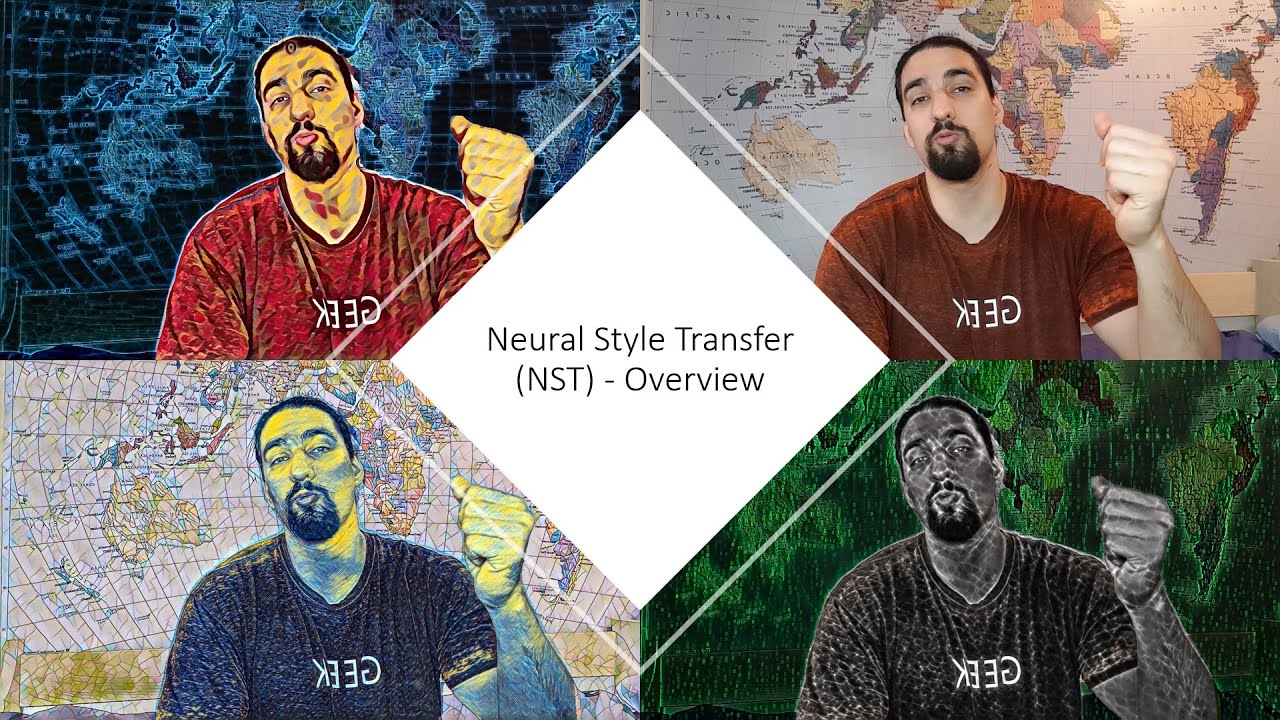

Advanced Theory | Neural Style Transfer #4

Summary

TL;DR: This video presents the advanced theory behind neural style transfer, starting with the 2012 ImageNet classification challenge and the introduction of AlexNet. It then covers ZF-Net and VGG, which refined the AlexNet architecture. An important step was the paper 'Understanding Deep Image Representations by Inverting Them', which presented an early approach to reconstructing images from deep codes. This line of work led to the Deep Dream algorithm and eventually to neural style transfer. The video discusses algorithms that improve speed, quality, and flexibility, and shows how research has extended the idea to video, 3D models, and audio. It also highlights challenges the community still has to solve and closes with a fun fact: an AI-generated artwork was auctioned for almost half a million dollars.

Takeaways

- 🎨 The development of neural style transfer algorithms traces back to 2012, when AlexNet, a convolutional neural network (CNN), was introduced at the ImageNet classification challenge.

- 📈 AlexNet marked a turning point in image classification: it significantly outperformed existing methods and demonstrated the effectiveness of CNNs.

- 🔍 Research after AlexNet focused on improving CNN architectures; ZF-Net and VGG further explored the combinatorial design space that AlexNet had opened up.

- 👀 An important step toward making CNNs interpretable was the paper 'Visualizing and Understanding Convolutional Networks', which visualized the image patterns that trigger particular feature maps.

- 🖼 The 2014 paper 'Understanding Deep Image Representations by Inverting Them' was a groundbreaking contribution to reconstructing input images from deep feature maps and led to the development of the Deep Dream algorithm.

- 🎭 Neural style transfer combines image reconstruction from deep codes with texture synthesis to merge the style of one image and the content of another into a new image.

- 🚀 Follow-up algorithms aim to increase speed, improve quality, and increase flexibility in the number of styles that can be transferred.

- 🌟 Some of the most innovative improvements use instance normalization and conditional instance normalization to increase quality and flexibility.

- 🎭 Control over the style transfer process, including spatial control, color control, and scale control, lets artists and developers steer the output of the network or algorithm.

- 🌐 Neural style transfer has been extended to other media and settings, including 3D models, photorealistic transfer, audio, and storyboards, which shows how versatile and adaptable the technique is.

Q & A

What is the basic concept behind neural style transfer?

- The basic idea of neural style transfer is to preserve the visual structure of a content image while transferring the color and texture characteristics of a style image.
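In the optimization-based formulation, this trade-off is commonly written as a weighted sum of two losses. The notation below is an assumption and does not appear in this summary: $x$ is the generated image, $c$ the content image, $s$ the style image, and $\alpha$, $\beta$ are weights that balance content preservation against style matching.

$$
\mathcal{L}_{\text{total}}(x) = \alpha\,\mathcal{L}_{\text{content}}(x, c) + \beta\,\mathcal{L}_{\text{style}}(x, s)
$$

A larger ratio $\alpha / \beta$ keeps the result closer to the content image; a smaller ratio lets the style dominate.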

Which groundbreaking 2012 work influenced research on neural networks?

- The groundbreaking 2012 work was AlexNet, a convolutional neural network architecture that outperformed all competitors in the ImageNet classification challenge.

What is the name of the method that makes the network see more of whatever it already detects in particular feature maps?

- This method is called Deep Dream. It exploits a pareidolia-like effect: it maximizes the activations of selected feature maps through gradient ascent on the input image, which produces psychedelic images.
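The gradient-ascent idea can be illustrated with a toy example. This is a minimal sketch, not the actual Deep Dream implementation: a random linear layer (`filters`, a hypothetical stand-in for a trained CNN layer) replaces the network, and the "image" is a flat vector, so the gradient can be written by hand.

```python
import numpy as np

def objective(image, filters):
    """Toy Deep Dream objective: half the squared norm of the layer's activations."""
    return 0.5 * np.sum((filters @ image) ** 2)

def dream_step(image, filters, step_size=0.1):
    """One gradient-ascent step on the image (not on the weights)."""
    activations = filters @ image           # toy "feature map": a single linear layer
    grad = filters.T @ activations          # gradient of the objective w.r.t. the image
    grad = grad / (np.abs(grad).mean() + 1e-8)  # normalize so the step size is scale-independent
    return image + step_size * grad         # ascent: move along the gradient

rng = np.random.default_rng(0)
filters = rng.normal(size=(8, 64))  # assumption: random weights instead of a trained layer
image = rng.normal(size=64)         # assumption: flattened toy "image"

before = objective(image, filters)
for _ in range(20):
    image = dream_step(image, filters)
after = objective(image, filters)
print(f"objective before: {before:.2f}, after: {after:.2f}")
```

In a real setting the gradient would come from backpropagation through a trained network, and the weights stay fixed while only the image changes.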

What role do Gram matrices play in style transfer?

- Gram matrices capture the correlations between different feature maps, which is essential for transferring the style of one image to another.
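As a minimal sketch of this idea, the Gram matrix of one layer can be computed by flattening each feature map and taking all pairwise dot products. The function names and the normalization by the number of spatial positions are assumptions; implementations differ in how they scale the result.

```python
import numpy as np

def gram_matrix(features):
    """features: (channels, height, width) -> (channels, channels) Gram matrix."""
    c, h, w = features.shape
    flat = features.reshape(c, h * w)   # one row per feature map
    return flat @ flat.T / (h * w)      # entry (i, j): correlation of maps i and j

def style_loss(generated_features, style_features):
    """Mean squared difference between the two Gram matrices of one layer."""
    diff = gram_matrix(generated_features) - gram_matrix(style_features)
    return np.mean(diff ** 2)

rng = np.random.default_rng(0)
features = rng.normal(size=(3, 4, 5))   # toy activations, not from a real network
print(gram_matrix(features).shape)
```

Because the sum runs over all spatial positions, the Gram matrix discards where a pattern occurs and keeps only which feature maps fire together, which is why it describes texture rather than layout.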

How does Johnson's method differ from the original method by Gatys et al.?

- Johnson's method optimizes the weights of an image transformation network instead of the pixels in image space. It uses the same loss function as Gatys et al. and is faster, but each trained network supports only one style.
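The difference can be stated compactly as two optimization problems over the same loss. The notation is an assumption and not taken from the video: $\mathcal{L}$ is the style transfer loss, $c$ a content image, $s$ the fixed style image, and $f_{\theta}$ a feed-forward transformation network with weights $\theta$.

$$
\begin{aligned}
\text{Gatys et al.:}\quad & x^{*} = \arg\min_{x}\; \mathcal{L}(x, c, s) \\
\text{Johnson et al.:}\quad & \theta^{*} = \arg\min_{\theta}\; \mathbb{E}_{c}\big[\mathcal{L}(f_{\theta}(c), c, s)\big], \qquad x = f_{\theta^{*}}(c)
\end{aligned}
$$

In the first case the optimization runs again for every new image. In the second case it runs once during training, and stylizing a new image is a single forward pass; since $s$ is fixed during training, the resulting network is tied to that one style.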

What is the significance of instance normalization in neural style transfer?

- Instance normalization increases the quality and flexibility of style transfer by computing the normalization statistics (mean and variance) separately for each feature map of each individual image, rather than sharing them across all examples in a mini-batch.
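The only difference from batch normalization is the set of axes over which the statistics are computed. The sketch below is a simplified illustration that omits the learned scale and shift and assumes a `(batch, channels, height, width)` layout.

```python
import numpy as np

def instance_norm(x, eps=1e-5):
    """Normalize each feature map of each sample with its own mean and variance."""
    mean = x.mean(axis=(2, 3), keepdims=True)      # shape (batch, channels, 1, 1)
    var = x.var(axis=(2, 3), keepdims=True)
    return (x - mean) / np.sqrt(var + eps)

def batch_norm(x, eps=1e-5):
    """Training-mode batch norm: one mean and variance per channel, shared by the batch."""
    mean = x.mean(axis=(0, 2, 3), keepdims=True)   # shape (1, channels, 1, 1)
    var = x.var(axis=(0, 2, 3), keepdims=True)
    return (x - mean) / np.sqrt(var + eps)

rng = np.random.default_rng(0)
# Two toy samples whose brightness differs strongly (offsets 0 and 5).
x = rng.normal(size=(2, 3, 4, 4)) + np.array([0.0, 5.0]).reshape(2, 1, 1, 1)

print(instance_norm(x).mean(axis=(2, 3)))  # per-sample means are removed
print(batch_norm(x).mean(axis=(2, 3)))     # per-sample differences remain
```

With instance normalization, the contrast and brightness of an individual image no longer leak into the features, which is one common explanation for why it helps stylization quality.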

How can multiple styles be combined in neural style transfer?

- Multiple styles can be handled by using a separate set of normalization parameters (beta and gamma) for each style. Applying these parameters to the feature maps of the content image produces multiple stylized images in different styles.
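A minimal sketch of conditional instance normalization, assuming one row of `gammas` and `betas` per style: the features are normalized as in instance normalization, and the style is selected purely by which scale and shift are applied. All names and the toy parameter values are illustrative; in practice the parameters are learned.

```python
import numpy as np

def conditional_instance_norm(x, gammas, betas, style_id, eps=1e-5):
    """x: (channels, height, width); gammas, betas: (num_styles, channels)."""
    mean = x.mean(axis=(1, 2), keepdims=True)
    var = x.var(axis=(1, 2), keepdims=True)
    normalized = (x - mean) / np.sqrt(var + eps)
    gamma = gammas[style_id][:, None, None]   # per-channel scale for the chosen style
    beta = betas[style_id][:, None, None]     # per-channel shift for the chosen style
    return gamma * normalized + beta

rng = np.random.default_rng(0)
content_features = rng.normal(size=(2, 8, 8))   # toy feature maps of a content image
gammas = np.array([[1.0, 2.0], [0.5, 3.0]])     # hypothetical values for two styles
betas = np.array([[0.0, 1.0], [-1.0, 2.0]])

style_0 = conditional_instance_norm(content_features, gammas, betas, style_id=0)
style_1 = conditional_instance_norm(content_features, gammas, betas, style_id=1)
```

The same content features yield different outputs per style while all other network weights are shared.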

Which technique is used to achieve spatial control in neural style transfer?

- Spatial control is achieved with segmentation masks that pair specific regions of the content image with style features from the style image. Morphological operators such as erosion are used to smooth the transitions between regions.
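The mask handling can be sketched as follows. This is a simplified illustration under stated assumptions: it erodes a binary mask with a 3x3 structuring element and blends two already stylized images in pixel space, whereas published approaches typically apply such masks to feature maps inside the network. The helper names are hypothetical.

```python
import numpy as np

def erode(mask, iterations=1):
    """Binary erosion with a 3x3 square; pixels outside the image count as background."""
    mask = mask.astype(bool)
    h, w = mask.shape
    for _ in range(iterations):
        padded = np.pad(mask, 1, constant_values=False)
        eroded = np.ones_like(mask)
        for dy in range(3):
            for dx in range(3):
                # A pixel survives only if all nine neighbors are inside the mask.
                eroded &= padded[dy:dy + h, dx:dx + w]
        mask = eroded
    return mask

def blend(stylized_a, stylized_b, mask):
    """Use stylized_a inside the mask and stylized_b everywhere else."""
    m = mask.astype(float)[..., None]   # broadcast over the color channels
    return m * stylized_a + (1.0 - m) * stylized_b

mask = np.zeros((7, 7), dtype=bool)
mask[1:6, 1:6] = True                   # toy 5x5 foreground region
shrunk = erode(mask)                    # region pulled back from its boundary

style_a = np.ones((7, 7, 3))            # placeholders for two stylized images
style_b = np.zeros((7, 7, 3))
result = blend(style_a, style_b, shrunk)
```

Eroding the mask keeps each style away from the region boundary, so features near the edge are less likely to mix the two regions.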

How can color be controlled in neural style transfer?

- Color can be controlled by converting the images to a color space that separates color from intensity information, and then recombining the color channels of the content image with those of the style image as desired.
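One possible recombination is to keep the content image's colors and take only the intensity from the stylized result. The sketch below assumes the YIQ color space (the source does not name one) and uses rounded NTSC coefficients; `keep_content_colors` is a hypothetical helper.

```python
import numpy as np

# Rounded NTSC RGB -> YIQ matrix: Y is luminance, I and Q carry the color.
RGB_TO_YIQ = np.array([
    [0.299, 0.587, 0.114],
    [0.596, -0.274, -0.322],
    [0.211, -0.523, 0.312],
])
YIQ_TO_RGB = np.linalg.inv(RGB_TO_YIQ)

def keep_content_colors(stylized_rgb, content_rgb):
    """Combine the luminance of the stylized image with the color of the content image."""
    stylized_yiq = stylized_rgb @ RGB_TO_YIQ.T
    content_yiq = content_rgb @ RGB_TO_YIQ.T
    combined = content_yiq.copy()
    combined[..., 0] = stylized_yiq[..., 0]   # replace only the luminance channel
    return combined @ YIQ_TO_RGB.T            # may leave [0, 1]; clip before display

rng = np.random.default_rng(0)
content = rng.uniform(size=(4, 4, 3))    # toy RGB images with values in [0, 1]
stylized = rng.uniform(size=(4, 4, 3))
output = keep_content_colors(stylized, content)
```

Swapping the roles of the two inputs would instead impose the other image's colors, so the same helper covers both directions of color control.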

What is the main difference between classic and photorealistic neural style transfer?

- Photorealistic neural style transfer transfers only color information to the content image, whereas classic neural style transfer also transfers the texture and structure characteristics of the style image.
