Explainable AI using SHAP | Explainable AI for deep learning | Explainable AI for machine learning

Summary

TLDR: This video introduces SHAP (Shapley Additive Explanations), a powerful tool for explainable AI that quantifies the contribution of each feature in a machine learning model’s prediction. Grounded in game theory, SHAP uses Shapley values to calculate marginal contributions of features, providing a detailed, feature-level breakdown beyond traditional coefficients. The video explains the underlying theory with simple examples, then demonstrates practical Python implementation using XGBoost and visualizations like force and summary plots. Emphasizing data storytelling, it highlights how clear visual explanations help communicate complex model insights effectively to stakeholders, making AI outputs interpretable and actionable.

Takeaways

- 🤖 SHAP stands for Shapley Additive Explanations, a method for making machine learning predictions interpretable.

- 🎲 SHAP is based on game theory, using Shapley values to fairly distribute 'credit' for predictions among model features.

- 👥 Features in a model are treated like players in a game, and SHAP calculates their marginal contribution to each prediction.

- 📊 SHAP helps break down individual predictions into feature contributions, unlike linear regression coefficients which only explain overall trends.

- 🧮 The marginal contribution of a feature is calculated by comparing predictions with and without that feature in different combinations.

- 💻 In Python, SHAP can be implemented using the `shap` library with models like XGBoost or linear regression.

- 📈 Visualizations such as Force plots and waterfall charts show base values, individual feature contributions, and final predictions.

- 🔴 Red in SHAP plots indicates features increasing the prediction, while 🔵 blue shows features decreasing it.

- 🌐 SHAP values can be summarized across all test data to understand global feature importance and model behavior.

- 🗣️ SHAP enhances data storytelling, enabling clear communication of model predictions to business stakeholders.

- 📚 Understanding SHAP and model explainability is crucial for differentiating yourself in AI and machine learning fields.

- 🎨 Effective use of SHAP plots can create impactful presentations that combine human storytelling with AI insights.

Q & A

What does SHAP stand for and what is its main purpose?

-SHAP stands for Shapley Additive Explanations. Its main purpose is to explain the output of machine learning models by assigning each feature a contribution value for a given prediction, helping to make AI models interpretable.

Who originally developed the concept behind SHAP?

-The concept behind SHAP is based on Shapley values, developed by the mathematician Lloyd Shapley as part of game theory.

What is the key idea of Shapley values in game theory?

-Shapley values in game theory calculate the fair contribution of each player in a cooperative game, based on their marginal contribution to different coalitions of players.
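For reference, the standard game-theoretic definition can be written as follows. The symbols are notation chosen here, not taken from the video: $N$ is the set of $n$ players, $v(S)$ is the payoff of coalition $S$, and $\phi_i$ is the Shapley value of player $i$.

$$
\phi_i(v) = \sum_{S \subseteq N \setminus \{i\}} \frac{|S|!\,(n - |S| - 1)!}{n!}\,\bigl[v(S \cup \{i\}) - v(S)\bigr]
$$

The bracketed term is the marginal contribution of player $i$ to coalition $S$, and the factorial weight averages it over every order in which the players could join.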

How are 'players' and 'coalitions' in game theory related to machine learning in SHAP?

-In SHAP, 'players' represent model features, and 'coalitions' represent different combinations of features. The marginal contribution of each feature to a coalition reflects its impact on the model's prediction.
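The mapping from players to features can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not the `shap` library's algorithm: the `predict` function, the `instance` row, and the `baseline` values are all hypothetical, and "removing" a feature is approximated by swapping in a single baseline value, whereas practical implementations average over background data or use model-specific shortcuts.

```python
from itertools import combinations
from math import factorial

# Hypothetical model with an interaction between features 0 and 2.
def predict(x):
    return 10 + 2 * x[0] + 3 * x[1] + x[0] * x[2]

instance = [3.0, 2.0, 1.0]  # the row being explained (made-up values)
baseline = [1.0, 1.0, 0.0]  # stand-in values used when a feature is "absent"

def coalition_value(coalition):
    """Prediction when only the features in `coalition` keep their real values."""
    x = [instance[i] if i in coalition else baseline[i]
         for i in range(len(instance))]
    return predict(x)

def shapley_values(value, n_players):
    """Exact Shapley values by enumerating every coalition (exponential cost)."""
    phi = [0.0] * n_players
    for i in range(n_players):
        others = [p for p in range(n_players) if p != i]
        for size in range(len(others) + 1):
            # Weight of a coalition of this size in the Shapley average.
            weight = (factorial(size) * factorial(n_players - size - 1)
                      / factorial(n_players))
            for coalition in combinations(others, size):
                s = frozenset(coalition)
                # Marginal contribution of feature i to coalition s.
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi

phi = shapley_values(coalition_value, len(instance))
base_value = coalition_value(frozenset())
print("base value:", base_value)
print("contributions:", [round(p, 6) for p in phi])
print("prediction:", predict(instance))
```

Enumerating every coalition is only feasible for a handful of features, which is why libraries rely on approximations or model-specific algorithms.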

Why can't linear regression coefficients fully explain individual predictions?

-Linear regression coefficients only describe the expected change in the target variable when one feature changes, assuming other features are constant. They don't break down the contribution of each feature for a specific prediction, which SHAP does.
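The difference between a coefficient and a per-prediction contribution can be seen in a small sketch. It assumes a hypothetical linear model and made-up background data, and it uses the closed form that holds for linear models when features are treated as independent: the contribution of feature i is its coefficient times the distance of the feature value from its mean.

```python
from statistics import mean

# Hypothetical linear model: prediction = intercept + sum(w_i * x_i).
weights = [2.0, -1.0]
intercept = 5.0

# Made-up background data (rows are samples, columns are features).
background = [[1.0, 4.0], [3.0, 2.0], [5.0, 0.0]]

def predict(x):
    return intercept + sum(w * v for w, v in zip(weights, x))

def linear_contributions(x):
    """Per-prediction contributions w_i * (x_i - mean_i), assuming independent features."""
    feature_means = [mean(column) for column in zip(*background)]
    base_value = predict(feature_means)  # average prediction of a linear model
    contributions = [w * (v - m) for w, v, m in zip(weights, x, feature_means)]
    return base_value, contributions

# Same coefficients, different rows, different contributions.
base_value, contributions = linear_contributions([5.0, 0.0])
other_base, other_contributions = linear_contributions([1.0, 4.0])
print(base_value, contributions, predict([5.0, 0.0]))
print(other_base, other_contributions, predict([1.0, 4.0]))
```

The coefficients never change, yet the contributions flip sign between the two rows, which is the per-prediction detail that coefficients alone do not show.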

What are the components of a SHAP explanation for a single prediction?

-The components are: the base value (the model's average prediction over the background data, i.e. what it would predict before looking at this row's features), one SHAP value per feature, and the final prediction, which equals the base value plus the sum of all SHAP values.
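The additive structure can be sketched as a text-only waterfall. The feature names and numbers below are invented purely for illustration and are not taken from the video; the helper `explain` is a hypothetical name, not part of any library.

```python
def explain(base_value, shap_values):
    """Walk from the base value to the prediction, one feature at a time."""
    running = base_value
    lines = [f"base value = {base_value:.2f}"]
    for name, phi in shap_values.items():
        running += phi
        direction = "raises" if phi >= 0 else "lowers"
        lines.append(f"{name:>10}: {phi:+.2f} ({direction} prediction to {running:.2f})")
    lines.append(f"prediction = {running:.2f}")
    return lines, running

# Illustrative values only: one positive and two negative contributions.
lines, prediction = explain(20.0, {"rooms": 3.5, "age": -1.25, "distance": -0.75})
print("\n".join(lines))
```

Positive contributions correspond to the red segments of a force or waterfall plot and negative ones to the blue segments.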

What Python libraries are typically used when implementing SHAP?

-The typical Python libraries used are SHAP, pandas, numpy, and a machine learning library such as XGBoost or scikit-learn.

What types of visualizations can SHAP produce to explain predictions?

-SHAP can produce visualizations like Force Plots, Waterfall Charts, Summary Plots, and various other plots that illustrate how each feature increases or decreases a prediction.

How does SHAP handle the explanation of feature contributions at the model level?

-SHAP aggregates feature contributions across all predictions in a dataset to provide a global view of which features are most influential and how they impact predictions overall.
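One common way to aggregate is the mean absolute SHAP value per feature. The sketch below assumes the per-row SHAP values have already been computed; the matrix and feature names are made up for illustration, and `global_importance` is a hypothetical helper.

```python
# Illustrative SHAP values: rows are predictions, columns are features.
feature_names = ["rooms", "age", "distance"]
shap_matrix = [
    [3.5, -1.25, -0.75],
    [-2.0, 0.5, 1.5],
    [1.5, -0.25, -0.25],
]

def global_importance(shap_matrix, feature_names):
    """Rank features by mean absolute SHAP value across all rows."""
    n_rows = len(shap_matrix)
    scores = {
        name: sum(abs(row[j]) for row in shap_matrix) / n_rows
        for j, name in enumerate(feature_names)
    }
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)

ranking = global_importance(shap_matrix, feature_names)
for name, score in ranking:
    print(f"{name:>10}: {score:.3f}")
```

Taking absolute values keeps positive and negative contributions from cancelling out, so a feature that pushes predictions strongly in both directions still ranks as important.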

Why is SHAP valuable for business and AI stakeholders?

-SHAP provides transparency and interpretability in AI models, allowing stakeholders to understand, trust, and act on model predictions. It makes complex models explainable and improves communication of insights.

What is a 'marginal contribution' in the context of SHAP?

-A marginal contribution is the change in the prediction when a feature is added to a coalition (a combination of other features): the prediction with the feature included minus the prediction without it. A feature's SHAP value is the weighted average of these differences over all coalitions.

How does SHAP relate to storytelling and data presentation?

-SHAP values and visualizations help convey complex model predictions in an intuitive way, making it easier to tell a data-driven story to non-technical audiences and highlight feature importance clearly.

Outlines

Этот раздел доступен только подписчикам платных тарифов. Пожалуйста, перейдите на платный тариф для доступа.

Перейти на платный тарифMindmap

Этот раздел доступен только подписчикам платных тарифов. Пожалуйста, перейдите на платный тариф для доступа.

Перейти на платный тарифKeywords

Этот раздел доступен только подписчикам платных тарифов. Пожалуйста, перейдите на платный тариф для доступа.

Перейти на платный тарифHighlights

Этот раздел доступен только подписчикам платных тарифов. Пожалуйста, перейдите на платный тариф для доступа.

Перейти на платный тарифTranscripts

Этот раздел доступен только подписчикам платных тарифов. Пожалуйста, перейдите на платный тариф для доступа.

Перейти на платный тарифПосмотреть больше похожих видео

SHAP values for beginners | What they mean and their applications

4 Significant Limitations of SHAP

Explainable AI explained! | #1 Introduction

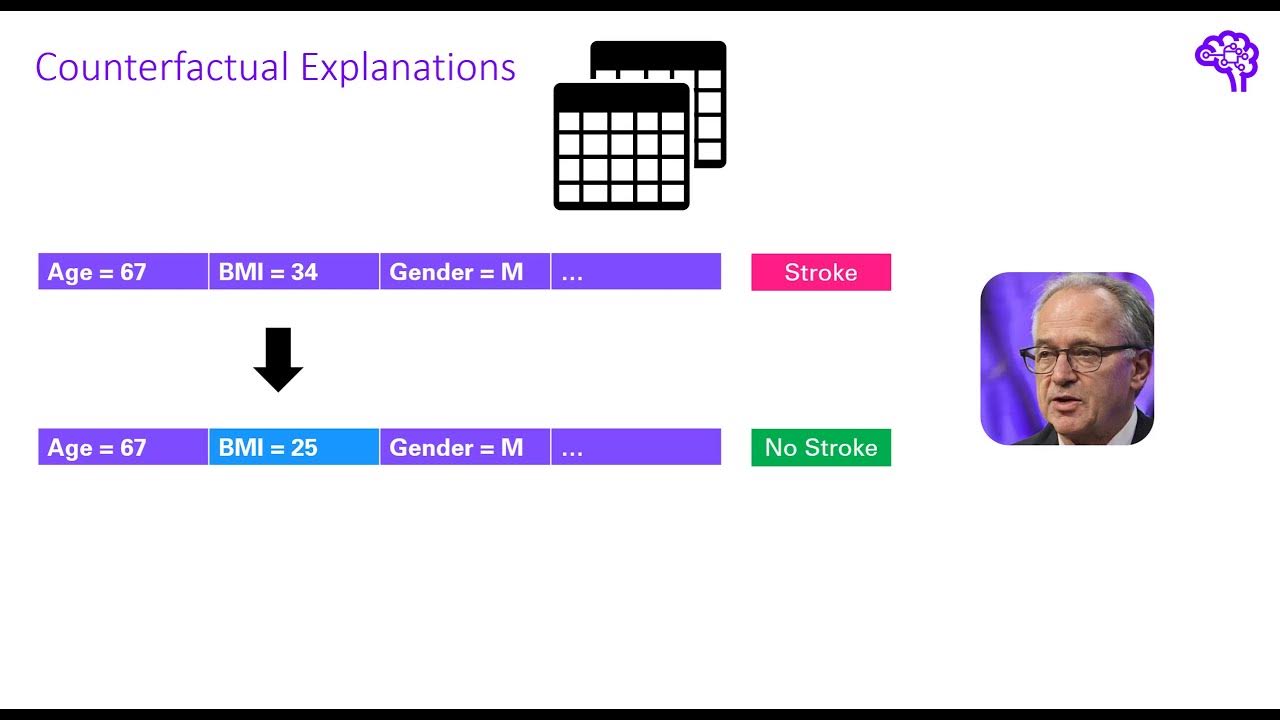

Explainable AI explained! | #5 Counterfactual explanations and adversarial attacks

What is Vertex AI?

Machine Learning vs Deep Learning vs Artificial Intelligence | ML vs DL vs AI | Simplilearn

5.0 / 5 (0 votes)