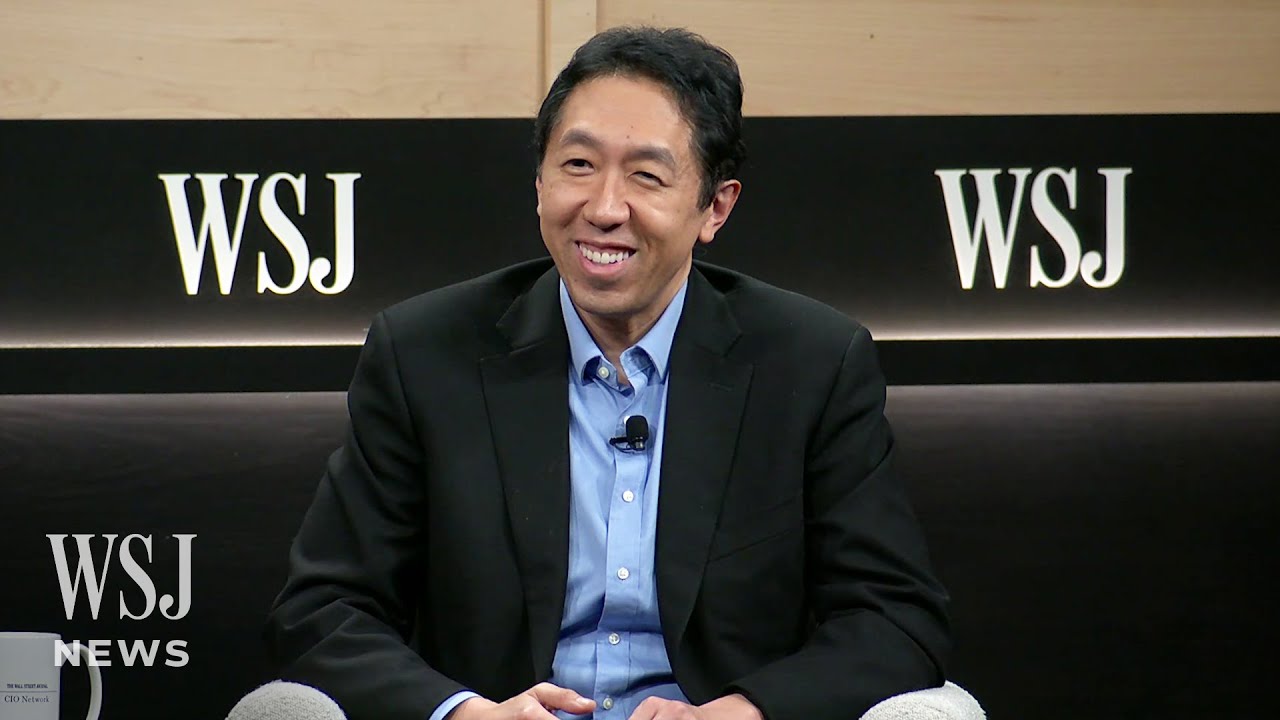

Andrew Ng: Opportunities in AI - 2023

Summary

TLDR: Andrew Ng, a leader in AI, shares his view of the technology landscape. He sees supervised learning and generative AI as the key tools creating opportunities across industries. Although AI adoption is still early outside the tech sector, Ng believes new low-code/no-code tools will enable customization and spread AI's impact. Despite risks such as job displacement, Ng argues that accelerating AI progress is imperative for reducing extinction threats such as climate change.

Takeaways

- Supervised learning and generative AI are currently the most important AI tools.

- Supervised learning is already massively valuable, and generative AI will grow rapidly.

- AI is a general-purpose technology with many diverse use cases.

- Finding and executing on concrete AI use cases is key.

- Low-code/no-code tools will enable wider AI adoption across industries.

- AI opportunities exist at the application layer, where competition is lighter.

- Pairing subject matter experts with AI build partners enables unique opportunities.

- Validate ideas quickly, then recruit specialized leaders early on.

- AI risks include job disruption, and we must take care of the people affected.

- AGI is decades away. Faster AI progress helps address real risks.

Q & A

What are the two most important AI tools that Andrew Ng discussed?

- According to Andrew Ng, the two most important AI tools currently are supervised learning, which is good for labeling things, and generative AI, which can generate text and images.

How does generative AI like ChatGPT work at its core?

- Generative AI like ChatGPT is built on supervised learning: it learns an input-output mapping from the text so far to the next word, then applies that mapping repeatedly to predict one word after another.
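The next-word idea can be made concrete with a toy sketch. It assumes a one-sentence training text and uses simple word counts as the "model"; real systems such as ChatGPT use large neural networks trained on vast amounts of text, so this only illustrates the input-output framing. The function names and the sample sentence are hypothetical and chosen for illustration.

```python
from collections import Counter, defaultdict


def make_training_pairs(text):
    """Turn a sentence into (input, output) pairs: words so far -> next word."""
    words = text.split()
    return [(tuple(words[:i]), words[i]) for i in range(1, len(words))]


def train_next_word_counts(pairs):
    """Toy 'model': count which word follows each preceding word."""
    counts = defaultdict(Counter)
    for context, next_word in pairs:
        counts[context[-1]][next_word] += 1
    return counts


def generate(counts, start, max_words=5):
    """Repeatedly predict the most frequent next word and append it."""
    output = [start]
    for _ in range(max_words):
        followers = counts.get(output[-1])
        if not followers:
            break  # the toy model has never seen anything follow this word
        output.append(followers.most_common(1)[0][0])
    return " ".join(output)


# Illustrative training text (an assumption, not taken from the talk).
pairs = make_training_pairs("generative AI predicts the next word")
counts = train_next_word_counts(pairs)
generated = generate(counts, "generative")
print(pairs[0])
print(generated)
```

Each training pair is an ordinary supervised learning example: the input is the text so far and the label is the word that follows. Generation is just the learned mapping applied in a loop.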

What major trends does Andrew Ng see in AI currently?

- The two major trends are: 1) AI as a general-purpose technology with many use cases still to be realized; 2) easy-to-use low-code/no-code tools for customizing AI, which enable more widespread adoption.

How can incumbent companies take advantage of AI opportunities?

- Incumbent companies can leverage their distribution advantages to efficiently integrate AI into their existing products and services.

What is the process Andrew Ng uses to build AI startups?

- He validates the idea, recruits a CEO early on, builds a prototype in 2-week sprints, gets early customers, and then provides funding for the startup to scale.

Why does Andrew Ng prefer to start with concrete ideas rather than just a general problem area?

- Concrete ideas can be validated or invalidated much more quickly. Many experts have already explored a problem deeply and have concrete ideas to share.

What does Andrew Ng see as one of the biggest risks of AI?

- One of the biggest risks is disruption to jobs, especially higher-wage jobs. As AI creates tremendous value, we must ensure that the people whose jobs are disrupted are still well taken care of.

When does Andrew Ng predict artificial general intelligence (AGI) will arrive?

- Andrew Ng believes AGI that can do anything a human can do is still decades away: 30 to 50 years, or even longer.

Why does Andrew Ng believe AI does not pose an extinction risk to humanity?

- AI develops gradually and can be controlled, as other powerful entities such as nations and corporations are. More intelligence in the world also helps address real extinction risks like climate change.

What opportunities does Andrew Ng see ahead with AI?

- The opportunity lies in finding and executing on the many concrete use cases across industries, since AI is a general-purpose technology with many applications still to be realized.
