VGA - CompTIA A+ 220-1101 – 1.21

Summary

TLDR: This video script delves into the history and technical aspects of Video Graphics Array (VGA), a once-popular analog video standard introduced in 1987. Now considered obsolete, VGA requires signal conversions that degrade quality, making it less preferred in the digital age. The script explains the 15-pin D-Sub connector and its diminishing presence in modern devices, except for some projectors and adapters. It also touches on VGA's association with lower resolutions and the use of basic graphics adapters in Windows when drivers are absent, emphasizing the importance of updating to manufacturer-specific drivers for optimal performance.

Takeaways

- 🔍 VGA, or Video Graphics Array, was released in 1987 and is now considered obsolete, used only for compatibility reasons.

- 📚 It's unlikely that the exam will cover VGA due to its age, but CompTIA still lists it as an objective, so understanding its limitations is important.

- 🔌 VGA uses an analog signal, which was suitable for the CRT monitors of the past but is less efficient with modern digital LCD screens.

- 🔄 The process of converting digital signals to analog and back to digital for display on LCD screens can degrade the signal quality, making VGA less preferred.

- 🔩 The VGA connector is a 15-pin D-Subminiature connector, recognizable by its D-shaped metal casing, and should be used as a last resort.

- 💻 Newer computers and graphics cards no longer include VGA connectors, favoring digital options, but it can still be found on projectors for legacy support.

- 🎥 Projectors often retain VGA connectors so that manufacturers do not lose sales over a missing legacy connector, despite the trend towards digital.

- 🔗 Some adapters, such as USB display adapters, offer a VGA connector alongside HDMI; since HDMI is digital, it is the preferable choice.

- 🖥️ Modern monitors typically do not have VGA connectors, and if they do, they should be avoided unless absolutely necessary.

- 📏 The term 'VGA' is sometimes used to refer to lower graphics resolutions, including SVGA or XGA, which are technically different but often grouped under VGA.

- 🛠️ In older Windows versions, the Standard VGA Graphics Adapter is used when no device driver is present, providing basic graphics support but performing poorly.

- 🚀 Later Windows versions use the 'Microsoft Basic Display Adapter', which offers better performance than the VGA adapter, but still not as good as a manufacturer-specific driver.

Q & A

What does VGA stand for?

-VGA stands for Video Graphics Array.

When was VGA technology released?

-VGA was released in 1987.

Why is VGA considered obsolete in modern technology?

-VGA is considered obsolete because it uses an analog signal rather than a digital one. With modern digital devices the analog signal has to be converted, which is less efficient and can reduce the quality of the display.

Why was VGA once a good choice for computer graphics?

-VGA was once a good choice because it used an analog signal that was compatible with the CRT monitors of the time, requiring only one conversion from digital to analog for display.

What type of signal conversion is required when using VGA with an LCD monitor?

-When using VGA with an LCD monitor, two signal conversions are required: one from digital to analog by the graphics card, and another from analog back to digital by the monitor.
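The double conversion described above can be illustrated with a small simulation. This is only a sketch under stated assumptions, not a model of any real graphics card or monitor: the function names (`dac`, `adc`, `vga_round_trip`), the 8-bit pixel levels, the 0.7 V full-scale voltage, and the Gaussian noise on the cable are all choices made for illustration.

```python
import random

def dac(level, bits=8, full_scale_volts=0.7):
    """Digital-to-analog step (graphics card side): integer level -> voltage."""
    return level / (2**bits - 1) * full_scale_volts

def adc(volts, bits=8, full_scale_volts=0.7):
    """Analog-to-digital step (LCD monitor side): voltage -> nearest integer level."""
    max_level = 2**bits - 1
    level = round(volts / full_scale_volts * max_level)
    return min(max(level, 0), max_level)  # clamp to the valid range

def vga_round_trip(levels, noise_volts=0.0, seed=0):
    """Send pixel levels through DAC -> noisy cable -> ADC (hypothetical model)."""
    rng = random.Random(seed)
    received = []
    for level in levels:
        volts = dac(level) + rng.gauss(0.0, noise_volts)  # noise picked up in transit
        received.append(adc(volts))
    return received

pixels = list(range(0, 256, 5))
clean = vga_round_trip(pixels, noise_volts=0.0)   # ideal cable, no noise
noisy = vga_round_trip(pixels, noise_volts=0.01)  # assumed 10 mV of noise
changed = sum(1 for sent, got in zip(pixels, noisy) if sent != got)
print(f"{changed} of {len(pixels)} pixel values changed after the round trip")
```

In this toy model an ideal cable returns every value unchanged, while even a small amount of analog noise shifts many pixel values. A fully digital link avoids both conversion steps, which is why it is preferred for LCD screens.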

What is the technical name for the 15 pin connector used by VGA?

-The 15 pin connector used by VGA is technically known as a D-Subminiature connector, or D-Sub for short.

Why might you still find VGA connectors on projectors?

-You might still find VGA connectors on projectors because projectors tend to support a wide range of connectors for compatibility, including legacy connectors like VGA, to avoid losing sales due to a lack of connection options.

Why are VGA connectors no longer included with new computers and graphics cards?

-VGA connectors are no longer included with new computers and graphics cards because there are now many digital alternatives available that are more efficient and better suited for modern devices.

What is the difference between the Standard VGA Graphics Adapter and the Microsoft Basic Display Adapter in terms of performance?

-The Microsoft Basic Display Adapter offers better performance than the Standard VGA Graphics Adapter, but neither provides the same level of performance as a device driver from the graphics card manufacturer.

Why should you update the device driver when you see the Standard VGA Graphics Adapter or the Microsoft Basic Display Adapter in use?

-You should update the device driver to the manufacturer's version to ensure optimal performance and compatibility with your specific graphics hardware.
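As a rough sketch of this check, the snippet below flags an adapter name as a generic fallback driver. The two names come from the summary above; the function name `needs_manufacturer_driver` and the idea of passing in the name as a string (for example, copied from Device Manager) are assumptions for illustration, and the example vendor name is hypothetical.

```python
# Generic fallback driver names mentioned in the summary (compared case-insensitively).
GENERIC_DISPLAY_DRIVERS = {
    "standard vga graphics adapter",
    "microsoft basic display adapter",
}

def needs_manufacturer_driver(adapter_name: str) -> bool:
    """Return True if the adapter name is one of the generic Windows fallbacks."""
    return adapter_name.strip().lower() in GENERIC_DISPLAY_DRIVERS

for name in ("Microsoft Basic Display Adapter", "Example Vendor GPU 1000"):
    status = "update the driver" if needs_manufacturer_driver(name) else "looks vendor-specific"
    print(f"{name}: {status}")
```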

What does the term 'VGA' sometimes refer to in terms of graphics resolutions?

-The term 'VGA' is sometimes used to refer to lower graphics resolutions, even though some of these resolutions have specific names like SVGA or XGA.
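For reference, the resolutions most commonly associated with these names can be kept in a small lookup table. The pixel dimensions below are the widely used values for each name rather than figures quoted in the video, and the helper names `name_for` and `pixel_count` are hypothetical.

```python
# Resolutions commonly associated with each name (width, height in pixels).
RESOLUTIONS = {
    "VGA": (640, 480),
    "SVGA": (800, 600),
    "XGA": (1024, 768),
}

def name_for(width, height):
    """Return the name for an exact resolution match, or None if unknown."""
    for name, size in RESOLUTIONS.items():
        if size == (width, height):
            return name
    return None

def pixel_count(name):
    """Total number of pixels for a named resolution."""
    width, height = RESOLUTIONS[name]
    return width * height

for name in RESOLUTIONS:
    width, height = RESOLUTIONS[name]
    print(f"{name}: {width}x{height} ({pixel_count(name)} pixels)")
```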

Outlines

Cette section est réservée aux utilisateurs payants. Améliorez votre compte pour accéder à cette section.

Améliorer maintenantMindmap

Cette section est réservée aux utilisateurs payants. Améliorez votre compte pour accéder à cette section.

Améliorer maintenantKeywords

Cette section est réservée aux utilisateurs payants. Améliorez votre compte pour accéder à cette section.

Améliorer maintenantHighlights

Cette section est réservée aux utilisateurs payants. Améliorez votre compte pour accéder à cette section.

Améliorer maintenantTranscripts

Cette section est réservée aux utilisateurs payants. Améliorez votre compte pour accéder à cette section.

Améliorer maintenantVoir Plus de Vidéos Connexes

Video Cables - CompTIA A+ 220-1101 - 3.1

Kenali Jenis-Jenis VGA Sebelum Kamu Membelinya

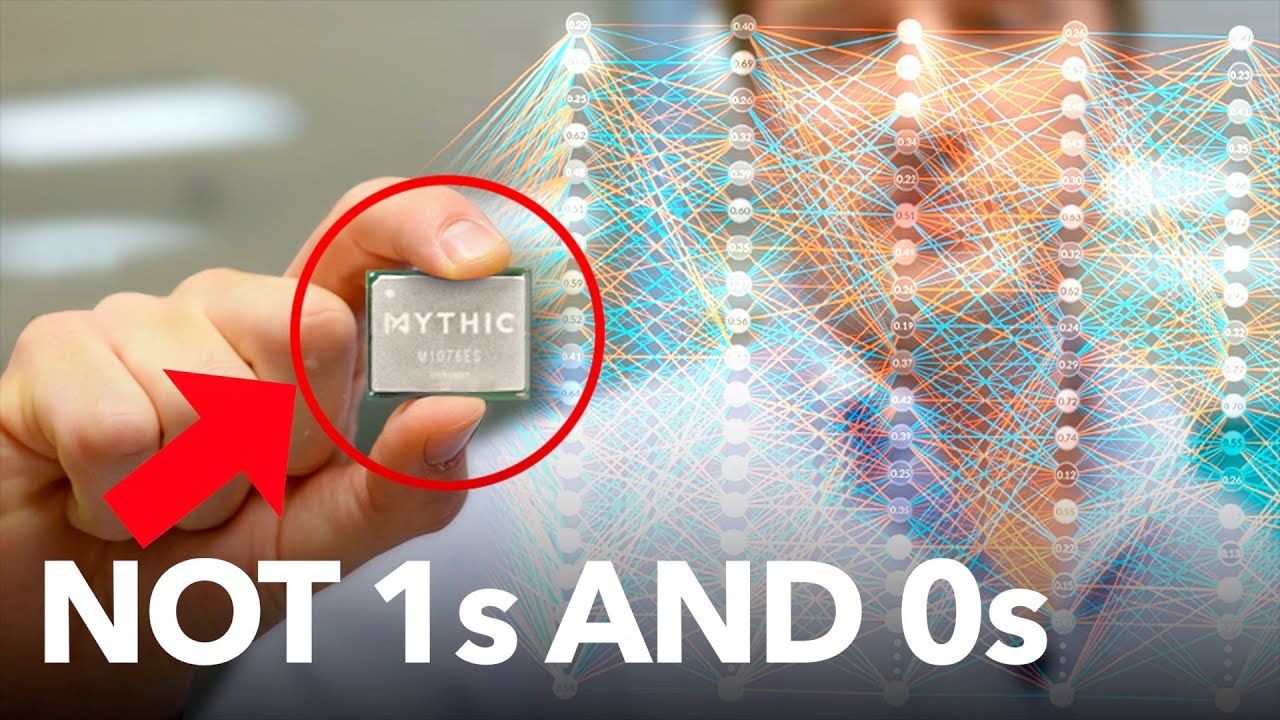

Future Computers Will Be Radically Different (Analog Computing)

The (Old) History of Walt Disney Animation Studios 6/14 - Animation Lookback

Classic Synths for FREE???

A strange collection of analog horror tapes featuring @spangerlookrey

5.0 / 5 (0 votes)