CS 285: Lecture 4, Part 1

Summary

TLDR: This lecture covers the foundations of reinforcement learning (RL), starting with key concepts like policies, states, actions, and rewards. It contrasts imitation learning with RL, emphasizing that RL uses a reward function to optimize actions without expert data. The lecture defines Markov chains, Markov decision processes (MDPs), and partially observed MDPs, giving the mathematical models behind state transitions and actions. It also explains why the expected reward is a smooth, differentiable function of the policy parameters even when the reward itself is discontinuous. The overall focus is on the RL objective: finding a policy that maximizes the expected sum of rewards under the state-action dynamics it induces.

Takeaways

- 😀 The core concept of reinforcement learning is learning a policy that maximizes the expected future rewards.

- 😀 A **policy** in reinforcement learning is a distribution over actions given the state (or observation), which can be parameterized using a vector theta.

- 😀 The **Markov property** states that, given the current state at time t, the state at time t+1 is conditionally independent of the state at time t-1 and of all earlier states.

- 😀 In reinforcement learning, **state** and **observation** are related but differ in that the observation may not fully convey the state due to partial observability.

- 😀 A **reward function** is central to RL, as it assigns real-valued scores to state-action pairs, guiding the agent's behavior towards higher rewards.

- 😀 **Markov Decision Processes (MDPs)** form the foundation of reinforcement learning, adding actions and rewards to the Markov chain framework for decision-making.

- 😀 In MDPs, the **transition function** defines the probability distribution of moving to the next state based on the current state and action.

- 😀 The **Partially Observable Markov Decision Process (POMDP)** extends MDPs by including an observation space, useful when the agent does not have full access to the state.

- 😀 The objective in reinforcement learning is to find a policy that maximizes the **expected sum of rewards** over the trajectory of an agent's actions.

- 😀 A **stationary distribution** exists for a Markov chain that is ergodic and aperiodic: run long enough, the chain settles into a distribution over states that no longer changes from one step to the next.

- 😀 Reinforcement learning uses smooth optimization methods like **gradient descent** to optimize objectives, even when rewards are discontinuous, due to the smooth nature of **expected rewards**.

Q & A

What is the primary distinction between a state and an observation in reinforcement learning?

- The primary difference is that the state satisfies the Markov property, meaning that the state at time t+1 is independent of the state at time t-1, given the current state at time t. In contrast, the observation may not contain all the information needed to infer the state, and it does not need to satisfy the Markov property.

What is the significance of the reward function in reinforcement learning?

- The reward function defines which states and actions are better or worse by assigning a scalar value to each state-action pair. The reward itself scores only the current step; it is the RL objective, the expected sum of rewards over the whole trajectory, that makes the agent weigh future rewards against immediate ones, so it may accept a low reward now in order to reach higher rewards later.

How does a Markov Decision Process (MDP) differ from a Markov Chain?

- A Markov Chain consists of a set of states and a transition function that describes the probability of transitioning between states. An MDP adds an action space and a reward function, which allows for decision-making based on actions taken at each time step, making it more suitable for reinforcement learning.
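
As a minimal sketch of how actions enter the dynamics, assume a small tabular MDP whose transition probabilities are stored as a nested list `T[i][j][k] = p(s_{t+1} = i | s_t = j, a_t = k)`. The two-state, two-action numbers and the helper name `next_state_distribution` are hypothetical, chosen only for illustration.

```python
# Hypothetical 2-state, 2-action MDP; every number here is made up.
# T[i][j][k] = p(s_{t+1} = i | s_t = j, a_t = k)
T = [
    [[0.9, 0.2], [0.5, 0.1]],  # probabilities of landing in state 0
    [[0.1, 0.8], [0.5, 0.9]],  # probabilities of landing in state 1
]


def next_state_distribution(T, mu, xi):
    """Return mu_next[i] = sum over j, k of T[i][j][k] * mu[j] * xi[k].

    mu is the state distribution and xi the action distribution at time t;
    they are assumed independent in this sketch.
    """
    return [
        sum(
            T[i][j][k] * mu[j] * xi[k]
            for j in range(len(mu))
            for k in range(len(xi))
        )
        for i in range(len(T))
    ]


mu_t = [1.0, 0.0]  # start in state 0 with certainty
xi_t = [0.5, 0.5]  # choose either action with equal probability
mu_next = next_state_distribution(T, mu_t, xi_t)
print(mu_next)
```

Compared with a plain Markov chain, the only structural change is the extra action index `k`; a reward function would be stored separately as one scalar per state-action pair.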

What is the role of the transition operator in a Markov Chain?

- The transition operator, denoted as T, describes the probability of transitioning from one state to another. In a Markov Chain with discrete states, it is represented as a matrix where each entry gives the probability of moving from one state to another. This operator is key to modeling the dynamics of the system.
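
The matrix view can be sketched in a few lines, assuming the convention `T[i][j] = p(s_{t+1} = i | s_t = j)` so that applying the operator to a vector of state probabilities gives the next vector of state probabilities. The two-state chain and the name `apply_operator` are hypothetical.

```python
# Hypothetical 2-state Markov chain; the numbers are for illustration only.
# T[i][j] = p(s_{t+1} = i | s_t = j), so each column sums to 1.
T = [
    [0.9, 0.5],
    [0.1, 0.5],
]


def apply_operator(T, mu):
    """One step of the chain: mu_next[i] = sum over j of T[i][j] * mu[j]."""
    return [sum(T[i][j] * mu[j] for j in range(len(mu))) for i in range(len(T))]


mu_0 = [1.0, 0.0]               # start in state 0 with certainty
mu_1 = apply_operator(T, mu_0)  # distribution after one step
mu_2 = apply_operator(T, mu_1)  # distribution after two steps
print(mu_1, mu_2)
```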

What does the term 'policy' refer to in reinforcement learning, and how is it represented?

- A policy in reinforcement learning is a mapping from states (or observations) to actions. It can be represented as a probability distribution over actions conditioned on a given state, often parameterized by a vector theta. In deep reinforcement learning, the policy is frequently represented by a neural network.
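
To make the idea of a parameterized distribution concrete, here is a minimal sketch that stands in a small table of logits for the neural network: `theta[state]` holds one logit per action and a softmax turns them into probabilities. The tabular parameterization and the names `policy` and `sample_action` are assumptions made for illustration, not something the lecture prescribes.

```python
import math
import random


def softmax(logits):
    """Convert a list of real-valued logits into probabilities."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def policy(theta, state):
    """pi_theta(a | s): a distribution over actions given the state."""
    return softmax(theta[state])


def sample_action(theta, state, rng):
    """Draw one action index from pi_theta(. | state)."""
    probs = policy(theta, state)
    return rng.choices(range(len(probs)), weights=probs, k=1)[0]


# Hypothetical parameters: 2 states, 2 actions.
theta = [[0.0, 0.0], [2.0, 0.0]]
rng = random.Random(0)
probs_s0 = policy(theta, 0)  # equal logits give a uniform distribution
probs_s1 = policy(theta, 1)  # the larger logit makes action 0 more likely
action = sample_action(theta, 1, rng)
print(probs_s0, probs_s1, action)
```

In the partially observed case, the same function would be conditioned on the observation instead of the state.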

Why is the Markov property crucial for reinforcement learning?

- The Markov property ensures that future states depend only on the current state and not on the sequence of events that led to it. This property simplifies the modeling of dynamic systems and makes reinforcement learning algorithms more tractable, as it allows for efficient planning and decision-making.

What is the difference between fully observed and partially observed reinforcement learning?

- In fully observed reinforcement learning, the agent has access to the complete state and conditions its policy on it. In partially observed reinforcement learning, the agent only has access to an observation, which is a potentially incomplete or noisy representation of the state, making the problem more challenging.

What does the trajectory distribution represent in reinforcement learning?

- The trajectory distribution is the probability distribution over sequences of states and actions (trajectories) that results from running a policy in the environment. It describes how likely each state-action sequence is, given the policy's parameters together with the initial state distribution and the transition probabilities. The goal in reinforcement learning is to find the policy that maximizes the expected sum of rewards over these trajectories.
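
Written out for a finite horizon, the trajectory distribution and the objective take the following standard form. The symbols are assumptions of this sketch: a trajectory is written as \tau, the horizon as T, the policy as \pi_\theta, and the reward as r.

```latex
p_\theta(\tau) = p_\theta(s_1, a_1, \dots, s_T, a_T)
  = p(s_1) \prod_{t=1}^{T} \pi_\theta(a_t \mid s_t)\, p(s_{t+1} \mid s_t, a_t)

\theta^\star = \arg\max_\theta \;
  \mathbb{E}_{\tau \sim p_\theta(\tau)} \left[ \sum_{t=1}^{T} r(s_t, a_t) \right]
```

The factorization uses the Markov property: each factor depends only on the current state and action, never on earlier history.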

What is the significance of the stationary distribution in reinforcement learning?

- The stationary distribution is a distribution over states that remains unchanged when the transition operator is applied, that is, when the system takes one more step under the MDP dynamics and the policy. It is important for infinite-horizon reinforcement learning because it characterizes the long-term behavior of the agent, allowing the objective to be defined over an indefinite period.
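
A stationary distribution can be found numerically by applying the transition operator repeatedly until the distribution stops changing. The sketch below does this for a hypothetical two-state chain that stands in for the Markov chain induced by a fixed policy; the numbers and function names are illustrative assumptions.

```python
# Hypothetical 2-state chain; T[i][j] = p(s_{t+1} = i | s_t = j).
T = [
    [0.9, 0.5],
    [0.1, 0.5],
]


def apply_operator(T, mu):
    """One step of the chain: mu_next[i] = sum over j of T[i][j] * mu[j]."""
    return [sum(T[i][j] * mu[j] for j in range(len(mu))) for i in range(len(T))]


def stationary_distribution(T, mu, tol=1e-12, max_iters=10_000):
    """Iterate mu <- T mu until the largest change falls below tol."""
    for _ in range(max_iters):
        nxt = apply_operator(T, mu)
        if max(abs(a - b) for a, b in zip(nxt, mu)) < tol:
            return nxt
        mu = nxt
    return mu


mu_star = stationary_distribution(T, [1.0, 0.0])
print(mu_star)  # approximately [5/6, 1/6] for this chain
```

The fixed point satisfies mu = T mu, so it is an eigenvector of the transition operator with eigenvalue 1. Repeated application is used here only because it is the simplest method to write down.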

How does the smoothness of expected rewards help in applying gradient-based optimization methods in reinforcement learning?

- Even if the reward function appears discontinuous (like binary rewards for win/lose), the expected reward, which is a probability-weighted average, is smooth and differentiable with respect to the policy's parameters. This allows the use of smooth optimization methods such as gradient descent to optimize reinforcement learning objectives, despite the apparent discontinuities in the underlying reward function.
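
A one-parameter example shows why the expectation is smooth. Assume a hypothetical binary outcome with reward +1 for winning and -1 for losing, and let `theta` be the probability of losing under the policy. The reward jumps between two values, but its expectation is the linear function 1 - 2 * theta.

```python
def expected_reward(theta, r_lose=-1.0, r_win=1.0):
    """Expected reward when the policy loses with probability theta."""
    return theta * r_lose + (1.0 - theta) * r_win


def finite_difference(f, x, eps=1e-6):
    """Central-difference estimate of the derivative of f at x."""
    return (f(x + eps) - f(x - eps)) / (2.0 * eps)


value = expected_reward(0.3)
slope = finite_difference(expected_reward, 0.3)
print(value, slope)  # roughly 0.4 and -2
```

The derivative is well defined at every `theta`, so a gradient-based optimizer can lower the probability of losing even though no individual outcome ever yields a reward between -1 and +1.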

Outlines

Cette section est réservée aux utilisateurs payants. Améliorez votre compte pour accéder à cette section.

Améliorer maintenantMindmap

Cette section est réservée aux utilisateurs payants. Améliorez votre compte pour accéder à cette section.

Améliorer maintenantKeywords

Cette section est réservée aux utilisateurs payants. Améliorez votre compte pour accéder à cette section.

Améliorer maintenantHighlights

Cette section est réservée aux utilisateurs payants. Améliorez votre compte pour accéder à cette section.

Améliorer maintenantTranscripts

Cette section est réservée aux utilisateurs payants. Améliorez votre compte pour accéder à cette section.

Améliorer maintenantVoir Plus de Vidéos Connexes

Reinforcement Learning - Computerphile

An introduction to Reinforcement Learning

The LLM's RL Revelation We Didn't See Coming

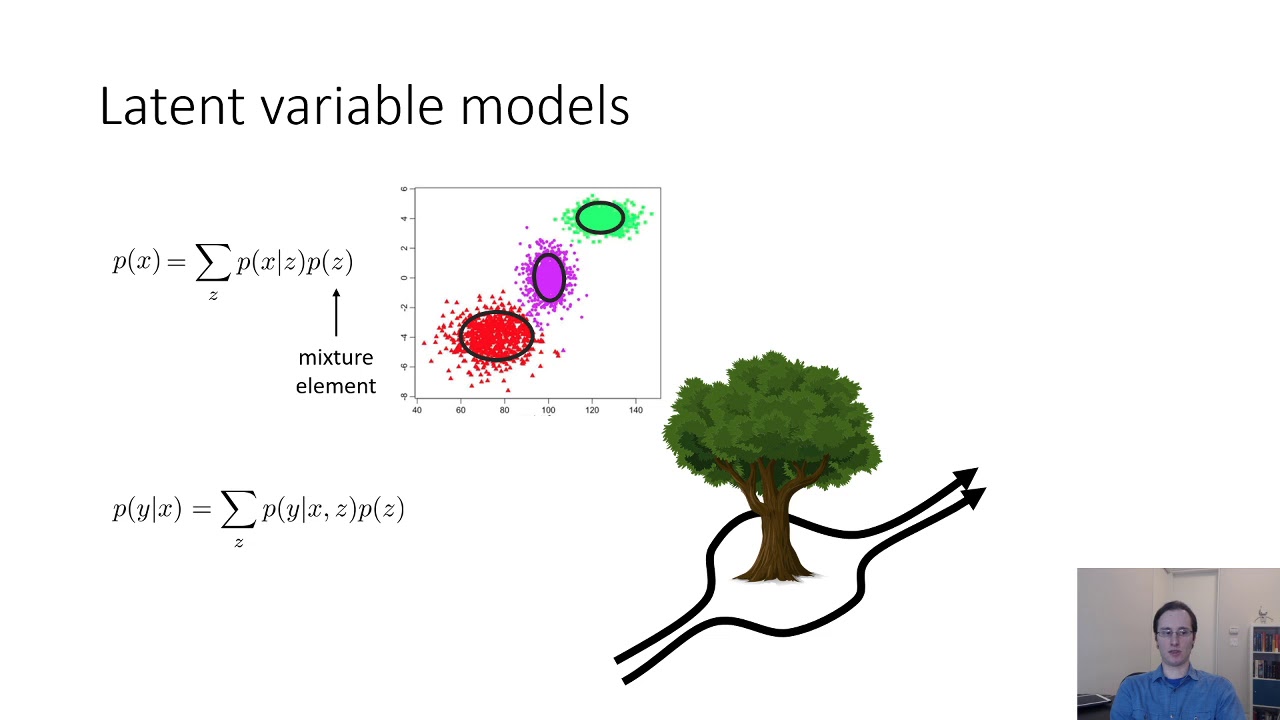

CS 285: Lecture 18, Variational Inference, Part 1

What is Operant Conditioning (Reinforcement Learning)?

o1 - What is Going On? Why o1 is a 3rd Paradigm of Model + 10 Things You Might Not Know

5.0 / 5 (0 votes)