What is Recurrent Neural Network (RNN)? Deep Learning Tutorial 33 (Tensorflow, Keras & Python)

Summary

TLDR: This video tutorial covers the basics of Recurrent Neural Networks (RNNs) and their applications in natural language processing (NLP). It explains why RNNs suit sequence modeling tasks such as language translation, sentiment analysis, and named entity recognition: they process sequential data and retain context from earlier inputs. The tutorial also highlights the limitations of traditional neural networks for these tasks, emphasizing that word order carries meaning in language. Practical examples, including Google autocomplete and translation, illustrate how RNNs are used in real-life applications.

Takeaways

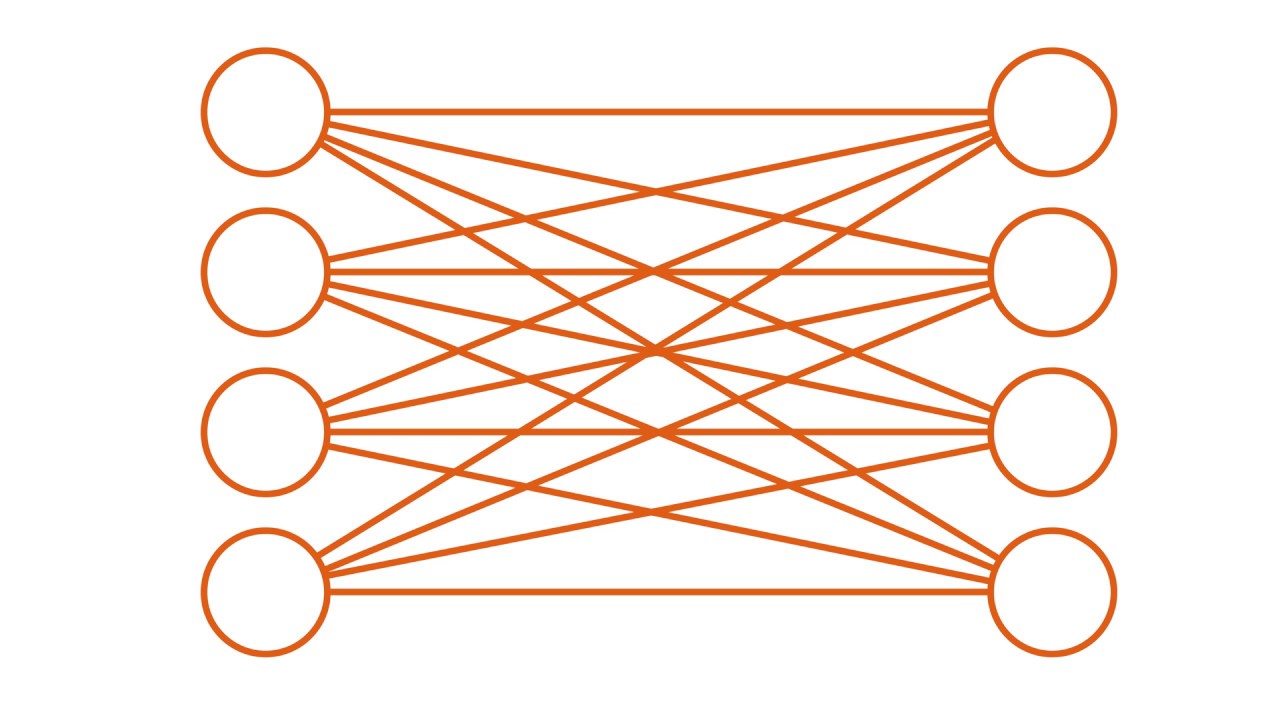

- 🧠 Recurrent Neural Networks (RNNs) are primarily used for natural language processing (NLP) tasks, in contrast to Convolutional Neural Networks (CNNs), which are mainly used for image processing.

- 🔄 RNNs are designed to handle sequence data where the order of elements matters, as opposed to traditional Artificial Neural Networks (ANNs), which do not take sequence order into account.

- 💡 Gmail's auto-complete feature is an example of an RNN application: it predicts and completes sentences from the context of the words typed so far.

- 🌐 Google Translate is another RNN application; translating a sentence from one language to another depends heavily on word order.

- 🔍 Named Entity Recognition (NER) is a use case for RNNs where the network identifies and categorizes entities in a text, such as names of people, companies, and places.

- 📊 Sentiment analysis is a task where RNNs determine the sentiment of a product review, for example by rating it from one to five stars.

- 📈 One of the limitations of using ANNs for sequence modeling is the fixed size of input and output layers, which does not accommodate variable sentence lengths.

- 🔢 ANNs are also computationally inefficient for sequence tasks: one-hot encoding makes every word vector as long as the vocabulary, which leads to a very large input layer with many neurons.

- 🔗 ANNs also lack parameter sharing: sentences that convey the same meaning with words in different positions are handled by different weights, whereas an RNN reuses the same weights at every time step through its recurrent connection.

- 🔄 RNNs maintain a 'memory' of previous inputs through their recurrent structure, allowing them to understand and process language with context, unlike ANNs.

- 🔧 Training an RNN involves passing all training samples through the network multiple times (epochs), adjusting weights based on loss to minimize errors and improve predictions.
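
The input-size and parameter-sharing points above can be made concrete by counting weights. The sketch below assumes a hypothetical 10,000-word vocabulary and 64 hidden units (illustrative numbers, not from the video) and compares one dense hidden layer fed a flattened sequence of one-hot vectors with one simple RNN cell, whose count is input weights plus recurrent weights plus bias.

```python
def dense_params(max_len: int, vocab_size: int, hidden: int) -> int:
    """Weights + biases of one dense hidden layer that reads a whole sentence at once.

    The sentence is a flattened stack of one-hot vectors, so the input size
    grows with the (fixed) maximum sentence length.
    """
    input_size = max_len * vocab_size
    return input_size * hidden + hidden


def rnn_params(vocab_size: int, hidden: int) -> int:
    """Weights + biases of one simple RNN cell that reads one word per time step.

    The same input weights, recurrent weights and bias are reused at every
    step, so the count does not depend on sentence length.
    """
    input_weights = vocab_size * hidden
    recurrent_weights = hidden * hidden
    return input_weights + recurrent_weights + hidden


# Hypothetical sizes, chosen only for illustration.
VOCAB, HIDDEN = 10_000, 64
for max_len in (10, 50):
    print(f"max_len={max_len}: dense={dense_params(max_len, VOCAB, HIDDEN):,} "
          f"rnn={rnn_params(VOCAB, HIDDEN):,}")
```

With these numbers the dense layer needs 6,400,064 parameters at `max_len=10` and 32,000,064 at `max_len=50`, while the RNN cell stays at 644,160 in both cases.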

Q & A

What is the main focus of the video script?

-The video script focuses on explaining Recurrent Neural Networks (RNNs), their applications in natural language processing (NLP), and how they differ from other types of neural networks like CNNs and ANNs.

What are the primary use cases for Recurrent Neural Networks mentioned in the script?

-The primary use cases for RNNs mentioned are auto-completion in Gmail, language translation using Google Translate, Named Entity Recognition (NER), and Sentiment Analysis.

Why are Recurrent Neural Networks particularly suited for natural language processing tasks?

-RNNs are suited for NLP tasks because they can handle sequence data where the order of elements is important, unlike traditional neural networks, which ignore the order of their inputs.

How does the script describe the process of auto-completion in Gmail using RNNs?

-As the user types a sentence, the RNN embedded in Gmail predicts and completes it based on the context provided by the words typed so far.

What are the challenges with using a simple neural network for sequence modeling problems?

-The challenges include the need for a fixed sentence size, high computational cost due to one-hot encoding, and the inability to share parameters across different sentences with the same meaning.
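
The fixed-size problem is usually worked around by padding or truncating every sentence to a chosen maximum length. A minimal sketch, assuming sentences are already lists of integer word IDs and that ID `0` is reserved for padding (both assumptions); this version pads and truncates at the end, while Keras provides a comparable utility, `pad_sequences`, with options for where to pad.

```python
def pad_to_length(token_ids: list[int], max_len: int, pad_id: int = 0) -> list[int]:
    """Truncate or right-pad one sentence to exactly max_len word IDs."""
    clipped = token_ids[:max_len]                          # drop words past the limit
    return clipped + [pad_id] * (max_len - len(clipped))  # fill the rest with padding


batch = [[5, 3, 9], [7, 2, 8, 4, 6, 1]]  # two sentences of different lengths
print([pad_to_length(s, 4) for s in batch])  # [[5, 3, 9, 0], [7, 2, 8, 4]]
```

Padding makes the shapes fit, but it spends computation on filler positions and discards any words beyond the limit.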

How does the script explain the concept of one-hot encoding in the context of neural networks?

-One-hot encoding is explained as a method of converting words into vectors where each word is represented by a vector with a '1' at its corresponding position in the vocabulary and '0's elsewhere.
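
A minimal sketch of this one-hot scheme, building a vocabulary from the NER example sentence. Lower-casing and whitespace splitting are simplifying assumptions; real tokenizers do more.

```python
def build_vocab(sentences: list[str]) -> dict[str, int]:
    """Assign each distinct (lower-cased) word an index in order of first appearance."""
    vocab: dict[str, int] = {}
    for sentence in sentences:
        for word in sentence.lower().split():
            vocab.setdefault(word, len(vocab))
    return vocab


def one_hot(word: str, vocab: dict[str, int]) -> list[int]:
    """Vector of len(vocab) zeros with a single 1 at the word's index."""
    vector = [0] * len(vocab)
    vector[vocab[word.lower()]] = 1
    return vector


vocab = build_vocab(["Dhaval loves baby yoda"])
print(vocab)                   # {'dhaval': 0, 'loves': 1, 'baby': 2, 'yoda': 3}
print(one_hot("baby", vocab))  # [0, 0, 1, 0]
```

Each vector is as long as the vocabulary, so a realistic vocabulary of tens of thousands of words produces very wide, almost entirely zero inputs.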

What is the significance of the sequence in language translation according to the script?

-The sequence is significant because changing the order of words in a sentence can completely alter its meaning, which is a key aspect that RNNs can handle but simple neural networks cannot.
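
One way to see why order matters: a bag-of-words representation, which only counts words, cannot tell apart two sentences that use the same words in a different order. The sentences below are illustrative, not taken from the video.

```python
from collections import Counter

a = "dog bites man".split()
b = "man bites dog".split()

print(Counter(a) == Counter(b))  # True: same word counts, order discarded
print(a == b)                    # False: as sequences they differ
```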

How does the script describe the architecture of an RNN?

-The script describes an RNN as a single hidden layer applied to the words one at a time: at each step it receives the current word together with its own output from the previous step, which gives the network a memory of the sequence so far.
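
The recurrence can be written as `h_t = tanh(W_x·x_t + W_h·h_{t-1} + b)`: the same weights are applied to each word in turn, and the previous hidden state carries the context forward. A minimal NumPy sketch, assuming a tanh activation, one-hot inputs and random (untrained) weights; Keras's `SimpleRNN` layer computes an update of this form, though the names here are illustrative.

```python
import numpy as np


def rnn_forward(inputs: np.ndarray, W_x: np.ndarray, W_h: np.ndarray, b: np.ndarray) -> np.ndarray:
    """Run one simple RNN layer over a sequence, one time step per row of `inputs`.

    The same W_x, W_h and b are reused at every step; the hidden state h carries
    context from earlier words into later ones.
    """
    h = np.zeros(W_h.shape[0])          # no context before the first word
    states = []
    for x_t in inputs:                  # process words one by one
        h = np.tanh(W_x @ x_t + W_h @ h + b)
        states.append(h)
    return np.stack(states)             # shape: (time_steps, hidden_size)


rng = np.random.default_rng(0)
vocab_size, hidden_size = 4, 3
W_x = rng.normal(scale=0.5, size=(hidden_size, vocab_size))
W_h = rng.normal(scale=0.5, size=(hidden_size, hidden_size))
b = np.zeros(hidden_size)

sentence = np.eye(vocab_size)           # four one-hot word vectors, in order
states = rnn_forward(sentence, W_x, W_h, b)
print(states.shape)                     # (4, 3)
```

Feeding the same words in reverse order (`sentence[::-1]`) yields a different final state, which is the "memory of the sequence" in practice.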

What is the purpose of Named Entity Recognition (NER) as explained in the script?

-The purpose of NER is to identify and classify entities in text, such as recognizing 'Dhaval' and 'baby yoda' as person names in the given example.
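
For NER framed as in this example, one common encoding (assumed here) gives each word a binary target, 1 if it is part of a person name and 0 otherwise, so the RNN emits one prediction per word. The hypothetical helper below turns such per-word labels back into entity strings by grouping consecutive 1s.

```python
def extract_entities(tokens: list[str], labels: list[int]) -> list[str]:
    """Join runs of consecutive words labelled 1 into entity strings."""
    entities: list[str] = []
    current: list[str] = []
    for token, label in zip(tokens, labels):
        if label == 1:
            current.append(token)               # extend the entity in progress
        elif current:
            entities.append(" ".join(current))  # a 0 closes the open entity
            current = []
    if current:                                 # entity running to the end of the sentence
        entities.append(" ".join(current))
    return entities


tokens = ["Dhaval", "loves", "baby", "yoda"]
labels = [1, 0, 1, 1]
print(extract_entities(tokens, labels))  # ['Dhaval', 'baby yoda']
```

A plain 0/1 scheme cannot separate two different names that sit next to each other; tagging schemes such as BIO (begin/inside/outside) exist for that reason.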

How does the script explain the training process of an RNN for Named Entity Recognition?

-The script explains the training process as initializing the network weights, processing each word in the training samples, calculating the predicted output, comparing it with the actual output, and adjusting the weights to minimize the loss through backpropagation.
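
These training steps can be sketched end to end in NumPy. This is a minimal sketch under stated assumptions: a hypothetical six-word vocabulary and three made-up sentences, a single tanh hidden layer with a sigmoid output per word, binary cross-entropy loss, and plain gradient descent with backpropagation through time. Keras's `model.fit` automates this kind of loop; writing it out shows each step explicitly.

```python
import numpy as np

rng = np.random.default_rng(42)

# Hypothetical toy data: word IDs for each sentence and a 0/1 "person name" label per word.
VOCAB = ["dhaval", "loves", "baby", "yoda", "eats", "samosa"]
SAMPLES = [
    ([0, 1, 2, 3], [1, 0, 1, 1]),  # dhaval loves baby yoda
    ([2, 3, 4, 5], [1, 1, 0, 0]),  # baby yoda eats samosa
    ([0, 4, 5], [1, 0, 0]),        # dhaval eats samosa
]
V, H = len(VOCAB), 8

# Step 1: initialise the weights with small random values.
params = {
    "W_x": rng.normal(scale=0.5, size=(H, V)),  # input -> hidden
    "W_h": rng.normal(scale=0.1, size=(H, H)),  # hidden -> hidden (the recurrence)
    "b": np.zeros(H),
    "w_y": rng.normal(scale=0.5, size=H),       # hidden -> per-word output
    "c": 0.0,
}


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def train_step(ids, labels, lr=0.1):
    """Forward pass, loss, backpropagation through time, then a weight update."""
    p = params
    xs = np.eye(V)[ids]                  # one-hot vectors, one row per word
    hs = [np.zeros(H)]                   # hs[t] is the state before word t
    preds = []
    # Step 2: process the words one by one, predicting a label for each.
    for x in xs:
        h = np.tanh(p["W_x"] @ x + p["W_h"] @ hs[-1] + p["b"])
        hs.append(h)
        preds.append(sigmoid(p["w_y"] @ h + p["c"]))
    preds = np.array(preds)
    y = np.array(labels, dtype=float)
    # Step 3: compare predictions with the true labels (binary cross-entropy).
    loss = -np.sum(y * np.log(preds) + (1 - y) * np.log(1 - preds))
    # Step 4: backpropagate the error through every time step.
    grads = {k: np.zeros_like(v) for k, v in p.items()}
    dh_next = np.zeros(H)
    for t in reversed(range(len(xs))):
        dz = preds[t] - y[t]             # d loss / d output logit
        grads["w_y"] += dz * hs[t + 1]
        grads["c"] += dz
        da = (dz * p["w_y"] + dh_next) * (1 - hs[t + 1] ** 2)  # back through tanh
        grads["W_x"] += np.outer(da, xs[t])
        grads["W_h"] += np.outer(da, hs[t])
        grads["b"] += da
        dh_next = p["W_h"].T @ da        # pass the error to the previous step
    # Step 5: adjust the weights to reduce the loss.
    for k in p:
        p[k] -= lr * grads[k]
    return loss


losses = []
for epoch in range(200):                 # one epoch = every sample passed through once
    losses.append(sum(train_step(ids, labels) for ids, labels in SAMPLES))
print(f"loss after epoch 1: {losses[0]:.3f}, after epoch 200: {losses[-1]:.3f}")
```

The printed losses should show the epoch total shrinking as the weights are adjusted; the exact values depend on the random seed.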

What are the components of the language translation process using RNNs as described in the script?

-The components are an encoder, which reads the input sentence, and a decoder, which produces the translation in the target language; the encoder must receive every word of the input before the decoder can begin translating.
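
The encoder/decoder split can be sketched as follows, assuming simple tanh RNNs for both halves, integer word IDs, greedy decoding, and a hypothetical end-of-sentence ID that also serves as the decoder's start token. The weights are random, so the output is meaningless; the point is the control flow: `decode` cannot run until `encode` has consumed every source word.

```python
import numpy as np

rng = np.random.default_rng(0)
SRC_VOCAB, TGT_VOCAB, HIDDEN = 5, 6, 4   # hypothetical sizes
EOS = 0                                  # hypothetical end-of-sentence ID (also used as start token)

# Untrained random weights: this shows the data flow, not a working translator.
enc_W_x = rng.normal(size=(HIDDEN, SRC_VOCAB))
enc_W_h = rng.normal(size=(HIDDEN, HIDDEN))
dec_W_x = rng.normal(size=(HIDDEN, TGT_VOCAB))
dec_W_h = rng.normal(size=(HIDDEN, HIDDEN))
dec_W_out = rng.normal(size=(TGT_VOCAB, HIDDEN))


def encode(src_ids: list[int]) -> np.ndarray:
    """Read the whole source sentence; the final hidden state summarises it."""
    h = np.zeros(HIDDEN)
    for i in src_ids:
        h = np.tanh(enc_W_x[:, i] + enc_W_h @ h)  # column i equals W_x @ one_hot(i)
    return h


def decode(context: np.ndarray, max_len: int = 10) -> list[int]:
    """Generate target IDs one at a time, starting from the encoder's summary."""
    h, prev, out = context, EOS, []
    for _ in range(max_len):
        h = np.tanh(dec_W_x[:, prev] + dec_W_h @ h)
        prev = int(np.argmax(dec_W_out @ h))      # greedy choice of the next word
        if prev == EOS:
            break
        out.append(prev)
    return out


context = encode([1, 3, 2, 4])   # decoding can only start after this returns
print(decode(context))
```

A trained model would learn these weights from paired sentences; only the order of operations carries over from this sketch.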
