Part 1: Advanced RAG Pipeline (Chinese and English subtitles)

Summary

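TLDR: This lesson walks through building basic and advanced retrieval-augmented generation (RAG) pipelines with LlamaIndex. It first explains how a basic RAG pipeline works in three stages: ingestion, retrieval, and synthesis. A set of evaluation metrics defined with TruLens is then used to benchmark advanced RAG techniques against the basic pipeline. The lesson also shows how advanced techniques such as sentence-window retrieval and auto-merging retrieval improve performance, again using TruLens for evaluation and benchmarking.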

Takeaways

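- 📚 Introduces how to build basic and advanced retrieval-augmented generation (RAG) pipelines with LlamaIndex.

- 🔍 A RAG pipeline has three components: ingestion, retrieval, and synthesis.

- 📈 Defines a set of metrics with TruLens to benchmark advanced RAG techniques against the basic pipeline.

- 📊 Shows how to create a simple RAG application using LlamaIndex and an OpenAI LLM.

- 📝 Discusses how documents are chunked, embedded, and indexed.

- 🔑 Uses GPT-3.5 Turbo as the LLM and the Hugging Face BGE small model as the embedding model for retrieval.

- 💡 Initializes feedback functions through TruLens to build the RAG triad of evaluations: answer relevance, context relevance, and groundedness.

- 📌 Stresses the importance of automated evaluation (such as TruLens) when evaluating generative AI applications at scale.

- 🌟 Compares the performance of the basic RAG pipeline with advanced retrieval techniques (sentence-window retrieval and auto-merging retrieval).

- 🚀 Shows how to set up and evaluate the two advanced retrieval techniques, sentence-window retrieval and auto-merging retrieval.

- 📈 Provides a combined leaderboard showing how the different retrieval techniques perform on the evaluation metrics and on efficiency.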

Q & A

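What is a RAG (Retrieval-Augmented Generation) pipeline?

-A RAG pipeline combines information retrieval with text generation. It has three stages: ingestion, retrieval, and synthesis. By grounding generation in retrieved text, it can give richer and more accurate answers to user queries.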

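What does the 'ingestion' stage of a RAG pipeline do?

-In the 'ingestion' stage, a set of documents is loaded first. Each document is then split into multiple text chunks, an embedding vector is generated for each chunk, and the embeddings are stored in an index.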

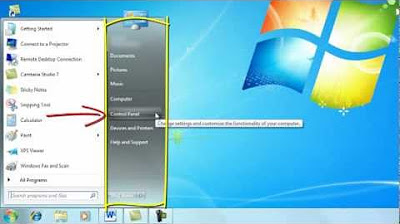
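The ingestion steps (load, chunk, embed, index) can be sketched in plain Python. This is a minimal illustration, not the course's code: the bag-of-words `embed` function is a toy stand-in for a real embedding model, and all function names and the `chunk_size` parameter are hypothetical.

```python
import math
import re
from collections import Counter


def chunk_text(text, chunk_size=8):
    """Split text into chunks of at most `chunk_size` words."""
    words = text.split()
    return [" ".join(words[i:i + chunk_size]) for i in range(0, len(words), chunk_size)]


def embed(text):
    """Toy embedding (assumption): a bag-of-words count vector, not a real model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def build_index(documents, chunk_size=8):
    """Ingestion: chunk every document, embed every chunk, store (embedding, chunk) pairs."""
    index = []
    for doc in documents:
        for chunk in chunk_text(doc, chunk_size):
            index.append((embed(chunk), chunk))
    return index


def retrieve(index, query, top_k=2):
    """Retrieval: rank stored chunks by similarity to the query embedding."""
    q = embed(query)
    ranked = sorted(index, key=lambda item: cosine(q, item[0]), reverse=True)
    return [chunk for _, chunk in ranked[:top_k]]


docs = [
    "Retrieval augmented generation combines search with text generation.",
    "Bananas are yellow and grow in warm climates around the world.",
]
index = build_index(docs)
top = retrieve(index, "How does retrieval augmented generation work?", top_k=1)
```

A real pipeline would swap `embed` for a learned embedding model and the list for a vector store; the control flow stays the same.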

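How do you build a RAG pipeline with LlamaIndex?

-With LlamaIndex you can create a simple LLM application that uses an OpenAI LLM internally. First create a service context object that specifies the LLM and the embedding model, then index the documents with LlamaIndex's VectorStoreIndex.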
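A sketch of that setup is shown below. It assumes the legacy `llama_index` 0.9-style API (where `ServiceContext` exists), an `OPENAI_API_KEY` in the environment, and a local embedding model; the function name, the `temperature` value, and the exact model identifier `BAAI/bge-small-en-v1.5` are assumptions, and newer LlamaIndex releases organize these calls differently.

```python
def build_basic_query_engine(file_path):
    """Build a basic RAG query engine over one file.

    Assumes the legacy llama_index 0.9-style API; imports are deferred so the
    module can be loaded even when the library is not installed.
    """
    from llama_index import ServiceContext, SimpleDirectoryReader, VectorStoreIndex
    from llama_index.llms import OpenAI

    # Load the document(s) from disk.
    documents = SimpleDirectoryReader(input_files=[file_path]).load_data()

    # The LLM used for synthesis (temperature is an illustrative choice).
    llm = OpenAI(model="gpt-3.5-turbo", temperature=0.1)

    # Service context: which LLM and which embedding model to use.
    # The model id below is an assumption for "BGE small".
    service_context = ServiceContext.from_defaults(
        llm=llm,
        embed_model="local:BAAI/bge-small-en-v1.5",
    )

    # Chunk, embed, and index the documents in one call.
    index = VectorStoreIndex.from_documents(documents, service_context=service_context)
    return index.as_query_engine()
```

Usage would look like `engine = build_basic_query_engine("example.pdf")` followed by `print(engine.query("..."))`, where `example.pdf` is a placeholder path.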

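How do you evaluate the performance of a RAG pipeline?

-Initialize feedback functions with TruLens to build the RAG triad, a set of pairwise comparisons between the query, the response, and the retrieved context. This yields three evaluation modules: answer relevance, context relevance, and groundedness.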
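To make the three pairwise comparisons concrete, here is a toy sketch. It does not use the TruLens API: a simple word-overlap ratio stands in for the real feedback functions, and `overlap` and `rag_triad` are hypothetical names.

```python
import re


def tokens(text):
    """Lowercased set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def overlap(source, target):
    """Toy score in [0, 1]: fraction of `target` tokens that also appear in `source`."""
    t = tokens(target)
    return len(t & tokens(source)) / len(t) if t else 0.0


def rag_triad(query, context, response):
    """Score the three edges of the triad with the toy overlap measure."""
    return {
        # Does the response address the query?
        "answer_relevance": overlap(response, query),
        # Is the retrieved context about the query?
        "context_relevance": overlap(context, query),
        # Is the response supported by the retrieved context?
        "groundedness": overlap(context, response),
    }


scores = rag_triad(
    query="What is the capital of France?",
    context="Paris is the capital of France.",
    response="The capital of France is Paris.",
)
```

Word overlap is far too crude for real evaluation; the point is only which pair of texts each metric compares.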

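What is sentence-window retrieval?

-Sentence-window retrieval is an advanced retrieval technique that embeds and retrieves individual sentences. After retrieval, each matched sentence is replaced with a larger window of sentences surrounding it in the original text. This gives the LLM more context for answering the query.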
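The idea can be sketched in a few lines: match on single sentences, then hand back the surrounding window. This is a simplified illustration with a toy word-overlap matcher in place of embeddings; the function names and the `window_size` parameter are hypothetical.

```python
import re


def split_sentences(text):
    """Naive sentence splitter on ., !, ? followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def tokens(text):
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def sentence_window_retrieve(text, query, window_size=1):
    """Return (best matching sentence, that sentence plus its neighbours)."""
    sentences = split_sentences(text)
    if not sentences:
        return "", ""
    q = tokens(query)
    # Matching is done against single sentences only (toy overlap score).
    best = max(range(len(sentences)), key=lambda i: len(q & tokens(sentences[i])))
    # The matched sentence is replaced by a window of neighbours on each side.
    start = max(0, best - window_size)
    end = min(len(sentences), best + window_size + 1)
    return sentences[best], " ".join(sentences[start:end])


text = "The sky was clear. Alice trained a retriever. It used small chunks. Bob went home."
match, window = sentence_window_retrieve(text, "Who trained the retriever?")
```

Here the match is precise (one sentence) while the text passed on for synthesis is wider (three sentences).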

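How does the auto-merging retriever work?

-The auto-merging retriever builds a hierarchy in which larger parent nodes contain smaller child nodes, each of which references its parent. During retrieval, if most of a parent's children are retrieved, those children are replaced by the parent, so retrieved nodes are merged up the hierarchy.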
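The merge rule can be illustrated with a single-level sketch. It is not the LlamaIndex implementation: the node IDs, the `auto_merge` function, and the 0.5 majority threshold are assumptions chosen to match the "most of the children" wording, and a real hierarchy may have more than two levels.

```python
def auto_merge(retrieved_ids, parent_of, children_of, threshold=0.5):
    """Replace retrieved children with their parent when more than
    `threshold` of that parent's children were retrieved (one level only)."""
    retrieved = set(retrieved_ids)
    merged = []
    merged_parents = set()
    for node_id in retrieved_ids:
        parent = parent_of.get(node_id)
        if parent is None:
            # Node has no parent in the hierarchy; keep it as-is.
            merged.append(node_id)
            continue
        siblings = children_of[parent]
        hit_ratio = sum(1 for child in siblings if child in retrieved) / len(siblings)
        if hit_ratio > threshold:
            # Enough siblings were retrieved: emit the parent once instead.
            if parent not in merged_parents:
                merged.append(parent)
                merged_parents.add(parent)
        else:
            merged.append(node_id)
    return merged


children_of = {"P1": ["a", "b", "c"], "P2": ["d", "e", "f", "g"]}
parent_of = {child: parent for parent, kids in children_of.items() for child in kids}

result = auto_merge(["a", "b", "d"], parent_of, children_of)
```

In this example two of P1's three children were retrieved, so they collapse into `P1`, while `d` stays on its own because only one of P2's four children was retrieved.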

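How do you set up and use the auto-merging retriever?

-Using the auto-merging retriever requires building a hierarchical index and getting a query engine from that index. The lesson uses helper functions to build the indexes for the advanced retrievers, and later lessons explain in depth how they work.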

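What role does TruLens play in evaluating a RAG pipeline?

-TruLens is a standard mechanism for evaluating generative AI applications at scale. It lets you evaluate an application against domain-specific needs and as the application changes over time, without relying on expensive human evaluation or fixed benchmarks.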

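How can context relevance be improved in a RAG pipeline?

-More advanced retrieval techniques, such as sentence-window retrieval and auto-merging retrieval, can improve the relevance of the retrieved context. Because they supply more surrounding context, the final synthesized answer becomes more relevant and accurate.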

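What is the 'synthesis' stage of a RAG pipeline?

-In the 'synthesis' stage, the retrieved context chunks are combined with the user query and placed in the LLM's prompt window to generate the final answer.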
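A minimal sketch of the prompt assembly step follows. The prompt wording and the `build_prompt` name are hypothetical, not the template any particular library uses; the resulting string would then be sent to the LLM.

```python
def build_prompt(query, context_chunks):
    """Combine retrieved chunks and the user query into one prompt string."""
    # Number the chunks so the model (and a reader) can tell them apart.
    context = "\n\n".join(
        f"[{i}] {chunk}" for i, chunk in enumerate(context_chunks, start=1)
    )
    return (
        "Answer the question using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {query}\n"
        "Answer:"
    )


prompt = build_prompt(
    "What are the three stages of a RAG pipeline?",
    ["A RAG pipeline has ingestion, retrieval, and synthesis.", "Chunks are embedded and indexed."],
)
```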

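How can total cost be optimized in a RAG pipeline?

-Improving retrieval and synthesis can reduce total cost while keeping relevance high. For example, sentence-window retrieval and auto-merging retrieval can raise groundedness and context relevance, improving performance without increasing total cost.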
