Llama-index for beginners tutorial

Summary

TL;DR: This video introduces LlamaIndex, a framework for building LLM-powered applications with a focus on RAG (Retrieval Augmented Generation). Unlike LangChain, which is more general-purpose, LlamaIndex specializes in supplying external context to LLMs for tasks such as question answering over various sources. The tutorial builds a question-answering system over a text file and argues that LlamaIndex performs better than LangChain for RAG. It walks through installation, building a vector store index, and creating both a query engine and a chat engine, emphasizing how easy LlamaIndex is to use for RAG applications.

Takeaways

- 📚 LlamaIndex is a framework for building applications with LLMs, similar to LangChain.

- 🔍 LlamaIndex's main focus is implementing RAG (Retrieval Augmented Generation), which supplies external context to the LLM.

- 🚀 LlamaIndex is particularly suited to applications such as question answering over sources like CSVs, PDFs, and videos.

- ⏩ According to the video, LlamaIndex retrieves faster than LangChain, which makes it the preferred choice for RAG applications.

- 🛠️ To get started, install LlamaIndex with pip and import the necessary components, such as VectorStoreIndex and SimpleDirectoryReader.

- 🔑 An OpenAI API key is required for authentication and should be set in the OPENAI_API_KEY environment variable.

- 📁 The 'data' folder contains the files loaded as external context; note that 'data' is a folder, not a file.

- 🧭 SimpleDirectoryReader loads the documents from the 'data' folder, and VectorStoreIndex then embeds and indexes them.

- 🔍 A query engine is created from the index object to perform question answering.

- 💬 To handle several questions as a conversation, build a chat engine instead of a query engine.

- 🌟 LlamaIndex is as easy to use as LangChain, which makes it worth trying for RAG implementations.
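The takeaways above describe a short pipeline. A minimal sketch of it follows, assuming LlamaIndex 0.10 or later, where VectorStoreIndex and SimpleDirectoryReader are imported from llama_index.core (older releases, which the video may use, import them from llama_index instead). The function name build_engines and the choice of chat_mode="condense_question" are illustrative assumptions, not the video's exact code.

```python
import os


def build_engines(data_dir: str = "data"):
    """Index the files in `data_dir` and return (query_engine, chat_engine).

    Hypothetical helper. Assumes `pip install llama-index` (0.10+) and a valid
    OPENAI_API_KEY, since LlamaIndex uses OpenAI models by default.
    """
    # Fail early with a clear message instead of a later authentication error.
    if not os.environ.get("OPENAI_API_KEY"):
        raise RuntimeError("Set the OPENAI_API_KEY environment variable first.")

    # Imported here so this file can be imported even without llama-index installed.
    from llama_index.core import SimpleDirectoryReader, VectorStoreIndex

    # `data_dir` must be a folder; the files inside it are loaded as documents.
    documents = SimpleDirectoryReader(data_dir).load_data()
    # Chunk and embed the documents into the default in-memory vector store.
    index = VectorStoreIndex.from_documents(documents)
    # Query engine: answers one question at a time.
    query_engine = index.as_query_engine()
    # Chat engine: keeps the conversation history across turns.
    chat_engine = index.as_chat_engine(chat_mode="condense_question")
    return query_engine, chat_engine
```

With the key set and files in data/, `query_engine.query("What is this text about?")` answers a single question, while successive `chat_engine.chat(...)` calls carry on a conversation; printing either response shows the answer text.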

Q & A

What is the primary difference between LlamaIndex and LangChain?

-LlamaIndex focuses primarily on implementing RAG (Retrieval Augmented Generation), which supplies external context to the LLM; this specializes it for tasks such as question answering over sources like CSVs, PDFs, and videos. LangChain, by contrast, is more general-purpose and covers a broader range of use cases.

Why might LlamaIndex be considered superior to LangChain in certain scenarios?

-According to the video, LlamaIndex is preferable to LangChain for RAG because its retrieval is faster, which makes it the first choice for applications that rely on external resources and context.

What is the first step in setting up a question-answering system with LlamaIndex?

-The first step is to install LlamaIndex with pip (the package is named llama-index) and then import the necessary components, such as VectorStoreIndex and SimpleDirectoryReader.

What does RAG stand for, and how does it work?

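-RAG stands for Retrieval Augmented Generation. It first retrieves information relevant to the question from an external source, then adds that information to the prompt so the LLM generates an answer grounded in it rather than relying only on what it learned during training.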
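To make the two steps concrete, here is a toy sketch in plain Python. It is not how LlamaIndex works internally: word overlap stands in for embedding similarity, no LLM is called, and the function names are hypothetical. It only shows retrieval picking the relevant text and augmentation placing it in the prompt.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase word set; a deliberately crude stand-in for real embeddings."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, documents: list[str]) -> str:
    """Retrieval step: return the document sharing the most words with the question."""
    q = tokenize(question)
    return max(documents, key=lambda doc: len(q & tokenize(doc)))


def augment(question: str, context: str) -> str:
    """Augmentation step: place the retrieved context in front of the question."""
    return (
        "Answer the question using only the context below.\n"
        f"Context: {context}\n"
        f"Question: {question}"
    )


docs = [
    "LlamaIndex loads files from a data folder.",
    "Paris is the capital of France.",
]
question = "What is the capital of France?"
prompt = augment(question, retrieve(question, docs))
# Generation step (not shown): `prompt` would be sent to the LLM.
print(prompt)
```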

How does LlamaIndex handle multiple files in a single folder?

-LlamaIndex treats the specified folder as a data source: SimpleDirectoryReader loads every file in it as external context, so multiple files can be indexed and used for retrieval.
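A toy sketch of treating a folder as a data source, using only the standard library. Unlike SimpleDirectoryReader, which also has readers for formats such as PDF, it just reads each file as UTF-8 text; the function name load_folder is hypothetical.

```python
import tempfile
from pathlib import Path


def load_folder(folder: str) -> dict[str, str]:
    """Read every regular file directly inside `folder` into {file name: text}."""
    path = Path(folder)
    # Mirrors the tutorial's warning: the data source must be a folder, not a file.
    if not path.is_dir():
        raise NotADirectoryError(f"{folder!r} is not a folder")
    return {
        f.name: f.read_text(encoding="utf-8")
        for f in sorted(path.iterdir())
        if f.is_file()
    }


# Usage: two files in one temporary folder become two loaded documents.
with tempfile.TemporaryDirectory() as tmp:
    Path(tmp, "notes.txt").write_text("LlamaIndex supports RAG.", encoding="utf-8")
    Path(tmp, "faq.txt").write_text("RAG adds external context.", encoding="utf-8")
    loaded = load_folder(tmp)

print(sorted(loaded))  # ['faq.txt', 'notes.txt']
```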

What is the purpose of setting an OpenAI API key in an environment variable?

-The key authenticates LlamaIndex's calls to OpenAI, whose models it uses by default both to embed the documents and to generate answers. Storing it in an environment variable also keeps it out of the source code.
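A small sketch of reading the key from the environment and failing early when it is missing. OPENAI_API_KEY is the variable name the OpenAI client conventionally reads; the helper get_openai_key is hypothetical.

```python
import os
from collections.abc import Mapping


def get_openai_key(env: Mapping[str, str] = os.environ) -> str:
    """Return the OpenAI API key, raising a clear error if it is missing or blank."""
    key = env.get("OPENAI_API_KEY", "").strip()
    if not key:
        raise RuntimeError("OPENAI_API_KEY is not set; export it before building the index.")
    return key
```

In a shell, run `export OPENAI_API_KEY=<your key>` before starting Python. Accepting any mapping, rather than reading `os.environ` directly, lets the check be tested with a plain dict such as `{"OPENAI_API_KEY": "sk-test"}`.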

How does the vector database work in LlamaIndex?

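-LlamaIndex splits the documents into chunks and stores their embeddings in a vector store (an in-memory store by default). At query time, the question is embedded as well, and the chunks whose embeddings are most similar to it are retrieved as context for the answer.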
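Storing embeddings and retrieving by similarity can be sketched with a toy in-memory store. Here a bag-of-words count vector stands in for a neural embedding and cosine similarity ranks the stored chunks; the class and function names are hypothetical, and this is not LlamaIndex's vector store API.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy 'embedding': a bag-of-words count vector (real systems use a neural model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class ToyVectorStore:
    """Stores (vector, text) pairs and returns the texts most similar to a query."""

    def __init__(self) -> None:
        self.items: list[tuple[Counter, str]] = []

    def add(self, text: str) -> None:
        self.items.append((embed(text), text))

    def search(self, query: str, top_k: int = 1) -> list[str]:
        q = embed(query)
        ranked = sorted(self.items, key=lambda item: cosine(q, item[0]), reverse=True)
        return [text for _, text in ranked[:top_k]]


store = ToyVectorStore()
for chunk in ["Cats are small pets.", "Rust is a systems language.", "Dogs are loyal pets."]:
    store.add(chunk)
print(store.search("Which language is good for systems programming?"))
```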

What is the role of the query engine in LlamaIndex?

-The query engine takes a question, retrieves the most relevant chunks from the index, and passes them together with the question to the LLM, which synthesizes an answer.
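A toy sketch of a query engine's retrieve-then-synthesize loop. Word overlap stands in for vector similarity, and the `llm` argument is any function from prompt to text (an echo stub in the usage below); ToyQueryEngine and its prompt format are assumptions, not LlamaIndex's implementation.

```python
import re
from typing import Callable


def words(text: str) -> set[str]:
    """Lowercase word set used as a crude relevance signal."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


class ToyQueryEngine:
    """Answers one question at a time: retrieve the top-k chunks, then call the LLM."""

    def __init__(self, chunks: list[str], llm: Callable[[str], str], top_k: int = 2) -> None:
        self.chunks = chunks
        self.llm = llm  # placeholder for a real model call: prompt -> answer
        self.top_k = top_k

    def query(self, question: str) -> str:
        q = words(question)
        # Retrieve: rank chunks by word overlap (a stand-in for embedding similarity).
        ranked = sorted(self.chunks, key=lambda c: len(q & words(c)), reverse=True)
        context = "\n".join(ranked[: self.top_k])
        # Synthesize: hand the question plus retrieved context to the LLM.
        prompt = f"Context:\n{context}\n\nQuestion: {question}\nAnswer:"
        return self.llm(prompt)


engine = ToyQueryEngine(
    ["The data folder holds text files.", "Bananas are yellow.", "Chat engines keep history."],
    llm=lambda prompt: prompt,  # echo stub so the assembled prompt can be inspected
    top_k=1,
)
answer = engine.query("What does the data folder hold?")
print(answer)
```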

Can LlamaIndex be used to build a chat engine for multiple questions?

-Yes. Instead of a query engine, which answers each question independently, you can build a chat engine that handles a sequence of questions as a conversation.
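What separates a chat engine from a query engine is that it keeps state between turns. A toy sketch of that state follows, with a stub in place of the LLM; ToyChatEngine and counting_llm are hypothetical, and only the `chat()` method name mirrors LlamaIndex's chat engines.

```python
from typing import Callable

Message = tuple[str, str]  # (role, text)


class ToyChatEngine:
    """Keeps a message history so each turn is answered with the whole conversation."""

    def __init__(self, llm: Callable[[list[Message]], str]) -> None:
        self.llm = llm  # placeholder: receives all messages so far, returns a reply
        self.history: list[Message] = []

    def chat(self, message: str) -> str:
        self.history.append(("user", message))
        reply = self.llm(self.history)
        self.history.append(("assistant", reply))
        return reply

    def reset(self) -> None:
        """Forget the conversation and start fresh."""
        self.history.clear()


def counting_llm(messages: list[Message]) -> str:
    """Stub LLM that reports how many user turns it has seen so far."""
    turns = sum(1 for role, _ in messages if role == "user")
    return f"reply to turn {turns}"


chat = ToyChatEngine(counting_llm)
print(chat.chat("What is LlamaIndex?"))  # reply to turn 1
print(chat.chat("How does it differ from LangChain?"))  # reply to turn 2
```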

How does LlamaIndex respond to follow-up questions in a chat engine?

-The chat engine answers follow-up questions using both the context from previous turns of the conversation and the indexed documents, so its answers stay relevant and continuous.
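One way a chat engine handles follow-ups is to condense them first. LlamaIndex's chat_mode="condense_question", for example, uses the chat history to rewrite the latest message into a standalone question and then queries the index with it. The sketch below builds only that rewrite prompt; its wording and the function name build_condense_prompt are assumptions, not LlamaIndex's actual template, and the LLM call that would answer it is omitted.

```python
Message = tuple[str, str]  # (role, text)


def build_condense_prompt(history: list[Message], follow_up: str) -> str:
    """Build a prompt asking an LLM to rewrite a follow-up as a standalone question.

    The rewritten question, not the raw follow-up, is what would be sent to
    retrieval, so pronouns such as "it" get resolved from earlier turns.
    """
    transcript = "\n".join(f"{role}: {text}" for role, text in history)
    return (
        "Given the conversation below, rewrite the follow-up message as a "
        "standalone question that makes sense without the conversation.\n\n"
        f"{transcript}\n\nFollow-up: {follow_up}\nStandalone question:"
    )


history = [
    ("user", "What is RAG?"),
    ("assistant", "Retrieval Augmented Generation: retrieve context, then generate."),
]
condense_prompt = build_condense_prompt(history, "Why is it useful?")
print(condense_prompt)
```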

What is the advantage of using LlamaIndex for implementing RAG?

-LlamaIndex is designed to be very easy to use, much like LangChain, while focusing on fast retrieval and on integrating external context, which the video presents as its main advantage for RAG.

Outlines

Cette section est réservée aux utilisateurs payants. Améliorez votre compte pour accéder à cette section.

Améliorer maintenantMindmap

Cette section est réservée aux utilisateurs payants. Améliorez votre compte pour accéder à cette section.

Améliorer maintenantKeywords

Cette section est réservée aux utilisateurs payants. Améliorez votre compte pour accéder à cette section.

Améliorer maintenantHighlights

Cette section est réservée aux utilisateurs payants. Améliorez votre compte pour accéder à cette section.

Améliorer maintenantTranscripts

Cette section est réservée aux utilisateurs payants. Améliorez votre compte pour accéder à cette section.

Améliorer maintenantVoir Plus de Vidéos Connexes

Announcing LlamaIndex Gen AI Playlist- Llamaindex Vs Langchain Framework

The Vertical AI Showdown: Prompt engineering vs Rag vs Fine-tuning

Turn ANY Website into LLM Knowledge in SECONDS

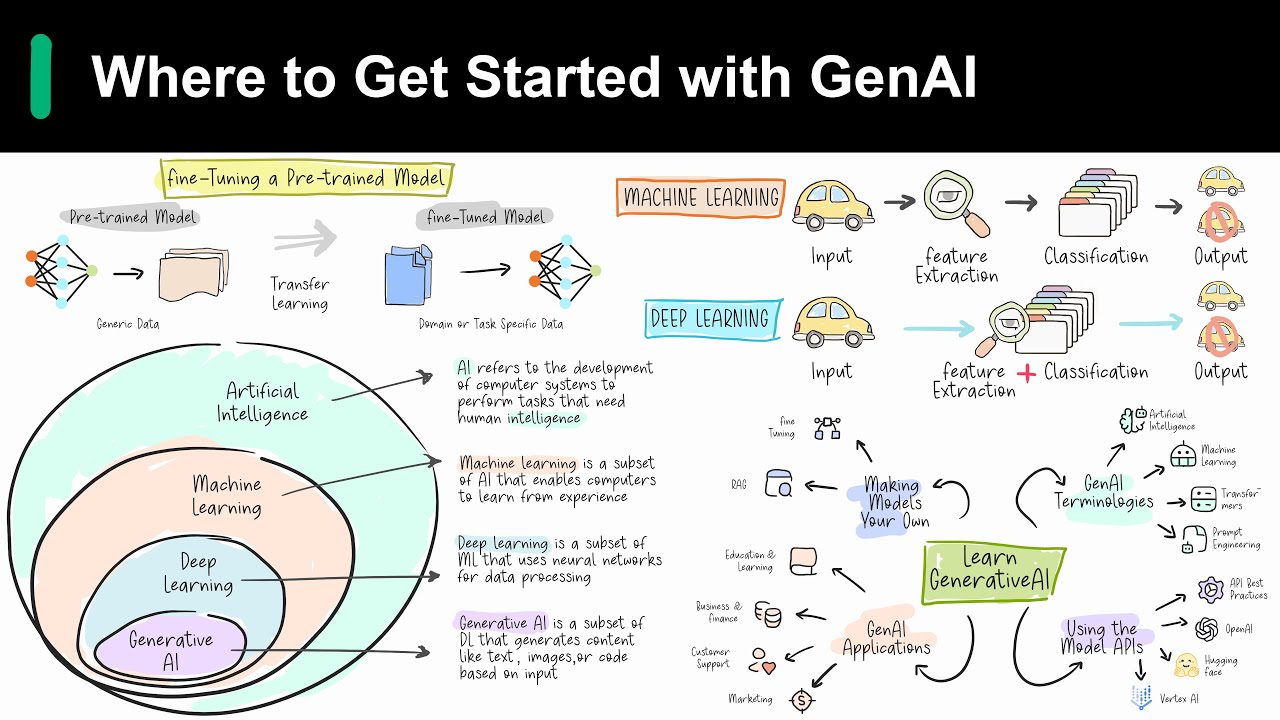

Introduction to Generative AI

4 Levels of LLM Customization With Dataiku

Building Production-Ready RAG Applications: Jerry Liu

5.0 / 5 (0 votes)