Orchestra and dlt: Part 5 - Backfilling

Summary

TLDR: This video demonstrates how to implement backfilling in a data pipeline, so that historical data can be reloaded for a specific date range. The script, run on Orchestra's platform, takes dynamic start and end dates and applies the backfill only to the resources for which those dates are supplied. The tutorial walks through modifying an incremental script, pushing it to GitHub, and setting up a new pipeline. The pipeline runs successfully, and the loaded data is limited to the given date range. The video also hints at exploring Orchestra's Meta Engine for parallel task execution in future sections.

Takeaways

- 📊 Backfilling refers to reloading historical data from a specific time period, such as loading data from 2011 to 2012 even if the dataset spans 2010 to 2020.

- 🛠️ Backfill scripts are similar to incremental scripts but require start and end dates instead of a single incremental date.

- ✏️ Parameters in the backfill script include start date and end date, which are passed as arguments to the resource functions.

- 🔄 Backfilling is applied only to resources where start and end dates are explicitly provided.

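- ⚙️ The resource is looked up by name (the summary calls this `get_attribute`, most likely Python's built-in `getattr`) and `apply_hints` is called on it to implement the backfill, switching the cursor field from `updated_at` to `created_at` to define the data range.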

- 📈 The `row_order` parameter should be set to ascending to ensure proper ordering of historical data.

- 💾 After script modifications, the backfill script is saved and pushed to the GitHub repository for version control.

- 🚀 Pipelines in Orchestra need to be managed carefully, deleting existing ones if necessary due to the limit on concurrent pipelines.

- 📅 In Orchestra, a new pipeline is created using the backfill script, and environment variables are set for start and end dates to load specific data ranges.

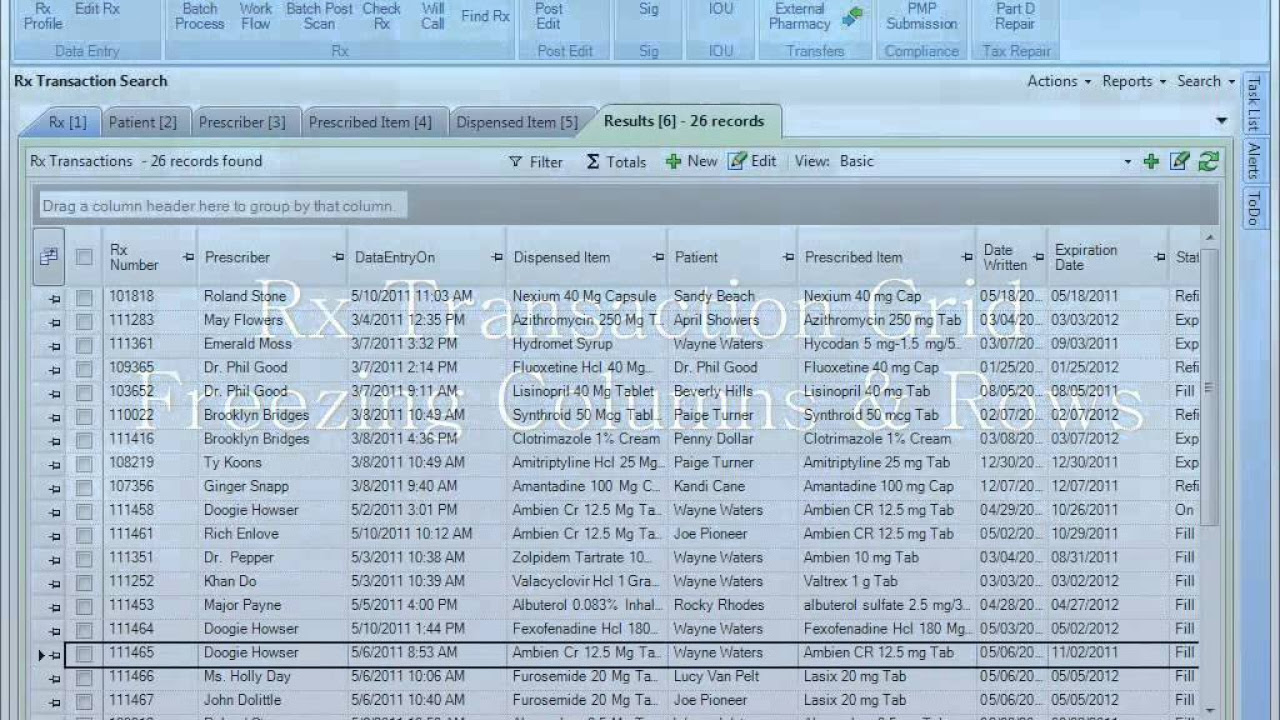

- ✅ Successful execution is verified by querying the resource dataset to confirm that data is loaded correctly between the specified start and end dates.

- 🔍 Ordering the results by `created_at` ascending and descending helps validate that no data exists beyond the intended date range.

- ⚡ The next step in the workflow is using Orchestra's meta engine to dynamically create and run tasks in parallel, enhancing automation and efficiency.
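
The script-side takeaways above can be sketched roughly as follows. This is a minimal illustration, not the script from the video: the helper name `backfill_hint_args` is hypothetical, and it only builds the keyword arguments one might pass to dlt's `dlt.sources.incremental` (`cursor_path`, `initial_value`, `end_value`, `row_order`). The dlt call itself is left as a comment so the block runs without dlt installed.

```python
from datetime import datetime, timezone
from typing import Any, Dict, Optional


def backfill_hint_args(
    start_date: Optional[str],
    end_date: Optional[str],
    cursor_path: str = "created_at",
) -> Optional[Dict[str, Any]]:
    """Return incremental-hint arguments for a backfill, or None to skip it."""
    # Backfill only when both dates are explicitly provided.
    if not start_date or not end_date:
        return None
    # Assumption: inputs are ISO dates and are treated as UTC.
    start = datetime.fromisoformat(start_date).replace(tzinfo=timezone.utc)
    end = datetime.fromisoformat(end_date).replace(tzinfo=timezone.utc)
    if start >= end:
        raise ValueError("start_date must be earlier than end_date")
    return {
        "cursor_path": cursor_path,  # created_at rather than updated_at
        "initial_value": start,
        "end_value": end,
        "row_order": "asc",  # process rows in chronological order
    }


args = backfill_hint_args("2011-01-01", "2012-01-01")
# With dlt installed, these arguments might be applied roughly like this
# (assumed usage; `source` and "issues" are placeholders):
#   getattr(source, "issues").apply_hints(
#       incremental=dlt.sources.incremental(**args)
#   )
```

Returning `None` when either date is missing keeps the "backfill only when both dates are provided" rule in one place, so the caller can simply skip `apply_hints` for that resource.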

Q & A

What is backfilling in data processing?

-Backfilling is the process of reloading historical data for a specific time period by defining a start date and an end date.

How does backfilling differ from incremental loading?

-Incremental loading processes new data based on a single incremental date, while backfilling retrieves data within a defined range using both a start date and an end date.
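
The difference can be illustrated with a small filter over in-memory rows. This is a sketch with made-up sample data and hypothetical function names. Treating the end date as exclusive is an assumption here, not something the video states.

```python
from datetime import date

# Made-up sample rows for illustration only.
rows = [
    {"id": 1, "created_at": date(2010, 6, 1)},
    {"id": 2, "created_at": date(2011, 3, 15)},
    {"id": 3, "created_at": date(2011, 11, 30)},
    {"id": 4, "created_at": date(2013, 1, 5)},
]


def incremental_filter(rows, since):
    """Keep everything at or after a single incremental date."""
    return [r for r in rows if r["created_at"] >= since]


def backfill_filter(rows, start, end):
    """Keep only rows inside [start, end); the exclusive end is an assumption."""
    return [r for r in rows if start <= r["created_at"] < end]


incremental_ids = [r["id"] for r in incremental_filter(rows, date(2011, 1, 1))]
backfill_ids = [
    r["id"] for r in backfill_filter(rows, date(2011, 1, 1), date(2012, 1, 1))
]
```

The incremental filter has no upper bound, so the 2013 row is kept. The backfill filter drops it because it falls outside the requested window.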

What inputs are required to perform backfilling?

-Backfilling requires two inputs: a start date and an end date to define the time range for historical data retrieval.

How is the backfill script created based on an incremental script?

-The backfill script is created by copying the incremental script and modifying it to replace the incremental date parameter with start date and end date parameters.

When is backfilling applied to a resource?

-Backfilling is applied only when both the start date and end date are explicitly provided and are not null.

Which field is used in the script to filter backfilled data?

-The 'created_at' field is used to filter and retrieve data within the specified date range.

What function is used to apply backfilling logic in the script?

-The backfilling logic is applied using methods like 'get_attribute' and 'apply_hints' to define the date range and filtering conditions.

Why is row order specified in the backfill implementation?

-Row order is set to ascending to ensure that data is processed in chronological order from the start date to the end date.

What steps are required after writing the backfill script?

-After writing the script, it should be saved, pushed to a GitHub repository, and then used to create a pipeline in Orchestra.

Why might existing pipelines need to be deleted before creating a new backfill pipeline?

-Because Orchestra limits the number of active pipelines (e.g., to three), older pipelines may need to be deleted to make space for new ones.

How are environment variables used in the backfill pipeline?

-Environment variables are used to pass the start date and end date values dynamically when configuring the pipeline.
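
On the script side, reading those values might look like the sketch below. The variable names `BACKFILL_START_DATE` and `BACKFILL_END_DATE` and the year are hypothetical, chosen only for illustration. In a real run the variables would be set in the pipeline configuration rather than inside the script.

```python
import os
from datetime import date
from typing import Optional


def read_date_env(name: str) -> Optional[date]:
    """Return the ISO date stored in env var `name`, or None if unset or empty."""
    raw = os.environ.get(name, "").strip()
    return date.fromisoformat(raw) if raw else None


# Set here only so the example is self-contained (hypothetical names and year).
os.environ["BACKFILL_START_DATE"] = "2024-10-01"
os.environ["BACKFILL_END_DATE"] = "2024-10-30"

start = read_date_env("BACKFILL_START_DATE")
end = read_date_env("BACKFILL_END_DATE")
```

Returning `None` for a missing or empty variable lets the script fall back to its normal behavior when no backfill window is configured.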

How can you verify that backfilling worked correctly?

-You can verify it by querying the dataset and checking that the records fall within the specified date range when sorted by the 'created_at' field.

What result indicates successful backfilling in the example?

-The data retrieved starts from October 1st and does not exceed October 30th, confirming that the backfill was applied correctly.
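
The same check can be expressed in code. The sketch below uses made-up `created_at` values and a hypothetical helper name. Taking the minimum and maximum corresponds to reading the first row when ordering ascending and descending. The year is illustrative only.

```python
from datetime import date


def check_backfill_range(created_dates, start, end):
    """Return (earliest, latest); raise if any date falls outside [start, end]."""
    if not created_dates:
        raise ValueError("no rows were loaded")
    earliest, latest = min(created_dates), max(created_dates)
    if earliest < start or latest > end:
        raise AssertionError(
            f"rows outside backfill window: {earliest} .. {latest}"
        )
    return earliest, latest


# Made-up values standing in for the created_at column of the loaded table.
loaded = [date(2024, 10, 1), date(2024, 10, 14), date(2024, 10, 29)]
result = check_backfill_range(loaded, date(2024, 10, 1), date(2024, 10, 30))
```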

What happens if start date and end date are not provided for a resource?

-If these dates are not provided, the pipeline dynamically creates separate pipelines for those resources without applying backfilling.
