#10 Machine Learning Specialization [Course 1, Week 1, Lesson 3]

Summary

TLDR: This video explains the fundamentals of supervised learning, focusing on how a learning algorithm uses a training set to produce a model that predicts outcomes. The training set consists of input features and output targets, from which the model learns. The model, represented as a function f, takes an input feature x and outputs a prediction y-hat (ŷ), which is an estimate of the target y. The video then covers linear regression, a basic form of supervised learning in which f is a straight line, and discusses the importance of the cost function in machine learning. It concludes by encouraging viewers to explore an optional lab for hands-on experience with linear regression in Python.

Takeaways

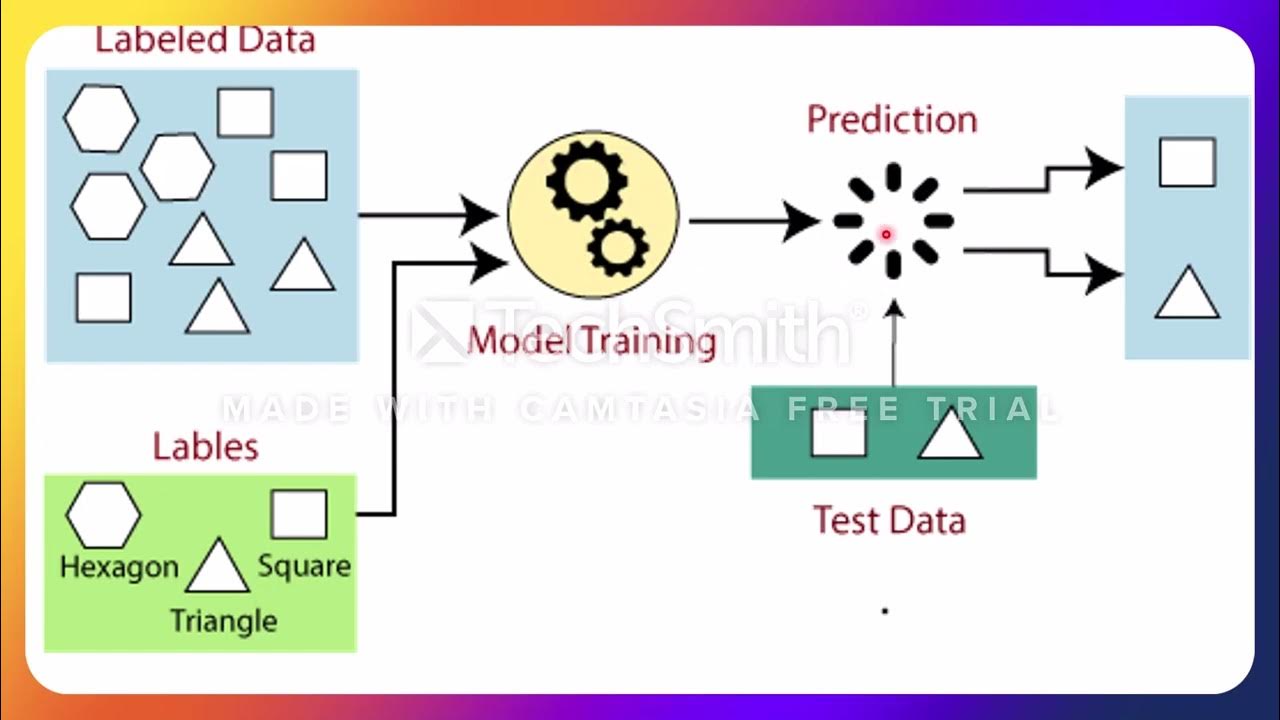

- 📊 Supervised learning algorithms use a training set to produce a function (f) that predicts output targets from input features.

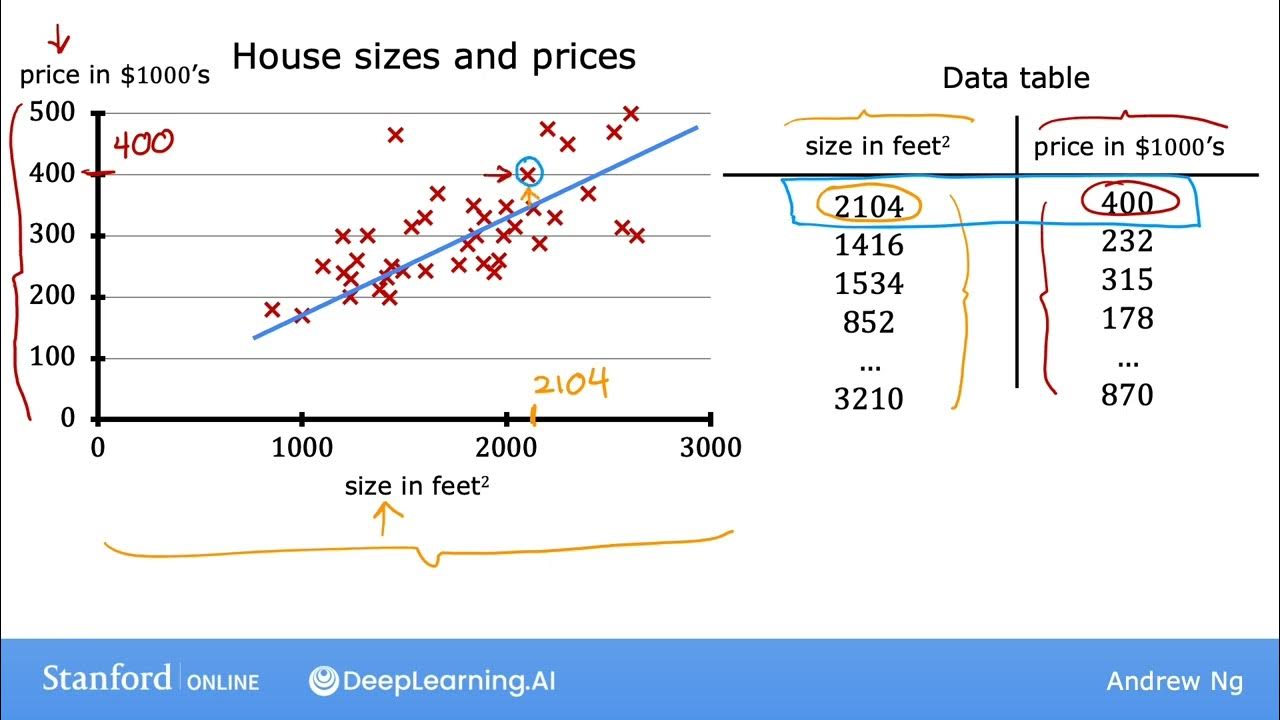

- 🏠 The training set in supervised learning includes both input features (e.g., size of a house) and output targets (e.g., price of a house).

- 🔢 The model's prediction (y-hat) is an estimate of the actual target value (y) and may differ from it; for a new input, the true value is not known when the prediction is made.

- 📉 The function f, represented here as a straight line, is used to make predictions based on the input feature x.

- 📈 The choice of a linear function (f_w,b(x) = wx + b) simplifies the model and serves as a foundation for more complex models.

- 📚 Linear regression is the name for the model when the function f is a straight line; with a single input variable it is called univariate linear regression.

- 🔑 The parameters w and b in the function f determine the slope and intercept of the line, respectively.

- 📝 The script suggests an optional lab for practicing defining a straight line function in Python and fitting it to training data.

- 🤖 The cost function is a critical component in machine learning, used to evaluate and improve the model's performance.

- 🔍 The next step after understanding the basics of linear regression is to learn how to construct a cost function for model optimization.
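The straight-line model described in the takeaways can be sketched in a few lines of Python. This is a minimal illustration and not the code from the optional lab: the function name `predict`, the two training examples, and the parameter values w = 200 and b = 100 are assumptions made up for the example.

```python
# Hypothetical training set: house sizes (1000 sq ft) and prices (1000s of dollars).
x_train = [1.0, 2.0]
y_train = [300.0, 500.0]


def predict(x, w, b):
    """Straight-line model f_w,b(x) = w * x + b; returns the prediction y-hat."""
    return w * x + b


# Illustrative parameter values: w is the slope, b is the intercept.
w, b = 200.0, 100.0
y_hat = [predict(x, w, b) for x in x_train]
print(y_hat)  # [300.0, 500.0]
```

With these particular values the line passes through both hypothetical training points, so each prediction equals its target.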

Q & A

What is the primary goal of a supervised learning algorithm?

-The primary goal of a supervised learning algorithm is to learn a function, denoted as 'f', that can take new input data (features) and predict an output (target) based on the training data provided.

What are the two components of a training set in supervised learning?

-The two components of a training set in supervised learning are the input features (such as the size of a house) and the output targets (such as the price of the house).

What does the output target represent in the context of supervised learning?

-The output target represents the correct answer or the actual true value that the model will learn from during training.

How is the function 'f' in supervised learning typically represented?

-In the context of the script, the function 'f' is represented as a straight line equation, which can be mathematically expressed as f(x) = wx + b, where 'w' and 'b' are parameters that need to be learned.

What is the difference between 'y' and 'y-hat' in the script?

-'y' represents the actual true value of the target in the training set, while 'y-hat' (notated as ŷ) is the estimated or predicted value of 'y' made by the model.
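To make the distinction concrete, here is a small sketch that prints y next to y-hat for each training example. The data and the deliberately imperfect parameters w = 150 and b = 100 are hypothetical values chosen only for illustration.

```python
# Hypothetical training examples: (x, y) = (size in 1000 sq ft, price in 1000s of dollars).
training_set = [(1.0, 300.0), (2.0, 500.0)]

# Illustrative, deliberately imperfect parameters for f(x) = w * x + b.
w, b = 150.0, 100.0

errors = []
for x, y in training_set:
    y_hat = w * x + b          # the model's estimate of y
    errors.append(y_hat - y)   # how far the estimate is from the true target
    print(f"x={x}: y={y}, y_hat={y_hat}, error={y_hat - y}")
```

Here y comes from the training set, while y-hat comes from the model, so the two agree only if the line happens to pass through that example.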

Why might a linear function be chosen as the initial model in supervised learning?

-A linear function is chosen as the initial model because it is relatively simple and easy to work with, which serves as a good foundation for understanding more complex models, including non-linear ones.

What is the term for a linear model with a single input variable?

-A linear model with a single input variable is called 'univariate linear regression', where 'univariate' indicates that there is only one variable involved.

What is the purpose of the cost function in the context of supervised learning?

-The cost function in supervised learning is used to measure the performance of the model by quantifying the difference between the model's predictions and the actual values in the training set.
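One common way to quantify that difference is the squared error cost, sketched below. This summary does not say which cost function the course uses, so the formula (the average of squared errors, divided by 2), the function name `compute_cost`, and the sample data are assumptions for illustration.

```python
def compute_cost(xs, ys, w, b):
    """Squared error cost: sum of (y_hat - y)^2 over all examples, divided by 2m."""
    m = len(xs)
    total = 0.0
    for x, y in zip(xs, ys):
        y_hat = w * x + b            # prediction of the straight-line model
        total += (y_hat - y) ** 2    # squared difference from the true target
    return total / (2 * m)


# Hypothetical training set: sizes (1000 sq ft) and prices (1000s of dollars).
xs = [1.0, 2.0]
ys = [300.0, 500.0]

print(compute_cost(xs, ys, w=200.0, b=100.0))  # 0.0 (line fits both points)
print(compute_cost(xs, ys, w=150.0, b=100.0))  # 3125.0 (worse fit, higher cost)
```

A lower cost means the predictions sit closer to the targets in the training set.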

Why is the cost function considered an important concept in machine learning?

-The cost function is considered an important concept in machine learning because it is widely used to evaluate and improve a model's predictions, and it is fundamental to training many advanced AI models.

What is the next step after defining the model function in supervised learning?

-After defining the model function, the next step is to construct a cost function and use it to optimize the parameters of the model to minimize the cost and improve the model's predictions.
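As a rough sketch of what "optimizing the parameters to minimize the cost" can look like, the example below uses gradient descent on a squared error cost. Neither the optimization method nor the cost formula is named in this summary, so both are assumptions, as are the function name `fit_line`, the learning rate, the iteration count, and the data.

```python
def fit_line(xs, ys, alpha=0.1, iterations=10_000):
    """Fit f(x) = w * x + b by gradient descent on the squared error cost."""
    m = len(xs)
    w, b = 0.0, 0.0  # arbitrary starting point
    for _ in range(iterations):
        # Prediction errors (y_hat - y) under the current parameters.
        errors = [w * x + b - y for x, y in zip(xs, ys)]
        # Partial derivatives of the cost (1 / 2m) * sum(error^2).
        dj_dw = sum(e * x for e, x in zip(errors, xs)) / m
        dj_db = sum(errors) / m
        # Step both parameters downhill, scaled by the learning rate alpha.
        w -= alpha * dj_dw
        b -= alpha * dj_db
    return w, b


# Hypothetical training set: sizes (1000 sq ft) and prices (1000s of dollars).
w, b = fit_line([1.0, 2.0], [300.0, 500.0])
print(w, b)
```

For this two-point data set the fitted values should land close to w = 200 and b = 100, the line that passes through both points.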
