Insertion Sort and Asymptotic Analysis

Summary

TLDRThis lecture covers the fundamentals of sorting algorithms, focusing on Insertion Sort and Merge Sort. It explains the concepts of worst, average, and best-case scenarios, and provides an in-depth look at the pseudocode for Insertion Sort, its time complexity, and the asymptotic analysis behind it. Additionally, the lecture introduces Merge Sort, a more efficient sorting algorithm with a time complexity of O(n log n). Emphasis is placed on understanding runtime performance, recurrence relations, and Big-O notation, helping students gain a clear understanding of sorting algorithm efficiency and analysis techniques.

Takeaways

- 😀 Sorting involves rearranging an array in ascending order, where the output is a sorted version of the input array.

- 😀 Insertion Sort is a simple algorithm where elements are picked and inserted into their correct position one by one.

- 😀 Insertion Sort’s pseudocode involves iterating through the array, comparing and shifting elements, and inserting the key element into its correct position.

- 😀 An example of Insertion Sort starts with the second element as the key and shifts larger elements to the right until the correct position for the key is found.

- 😀 The worst-case runtime for Insertion Sort occurs when the array is reverse sorted, with a time complexity of O(n^2).

- 😀 The best-case runtime for Insertion Sort is when the input is already sorted, leading to O(n) time complexity.

- 😀 The average-case runtime for Insertion Sort is also O(n^2), similar to the worst-case scenario.

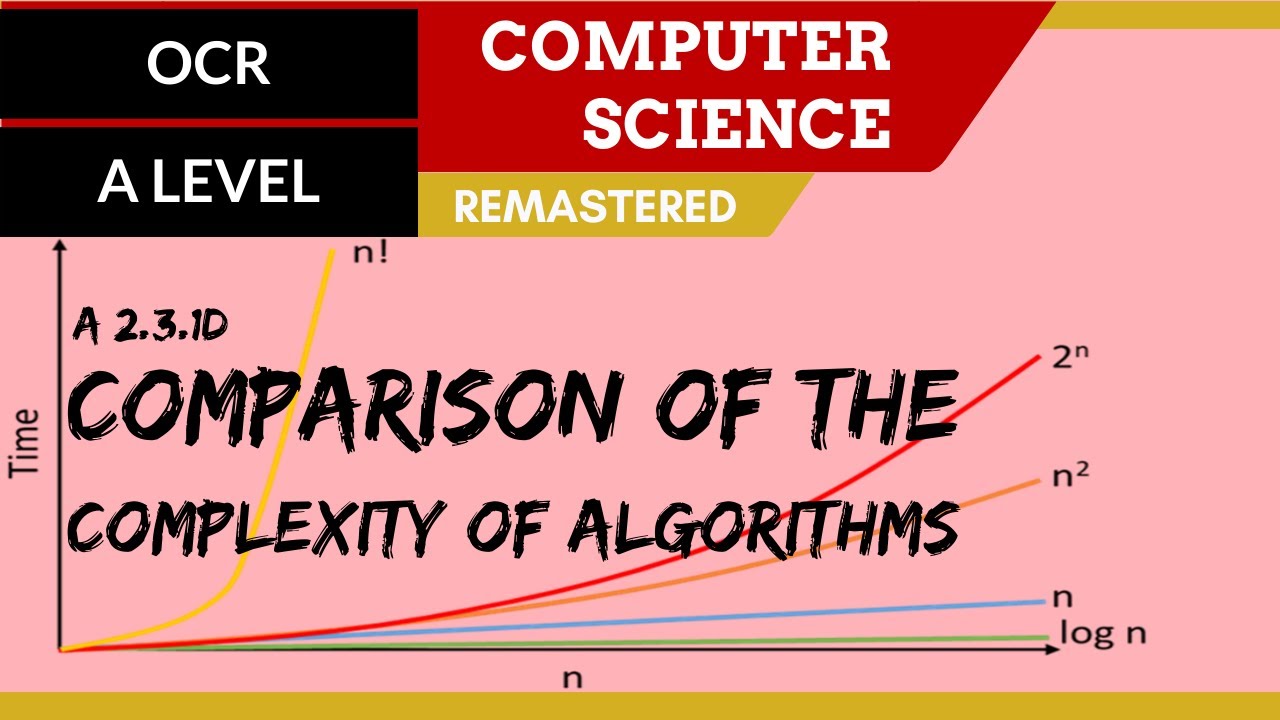

- 😀 Asymptotic analysis like Big O notation is used to represent an algorithm's runtime while ignoring constants and lower-order terms.

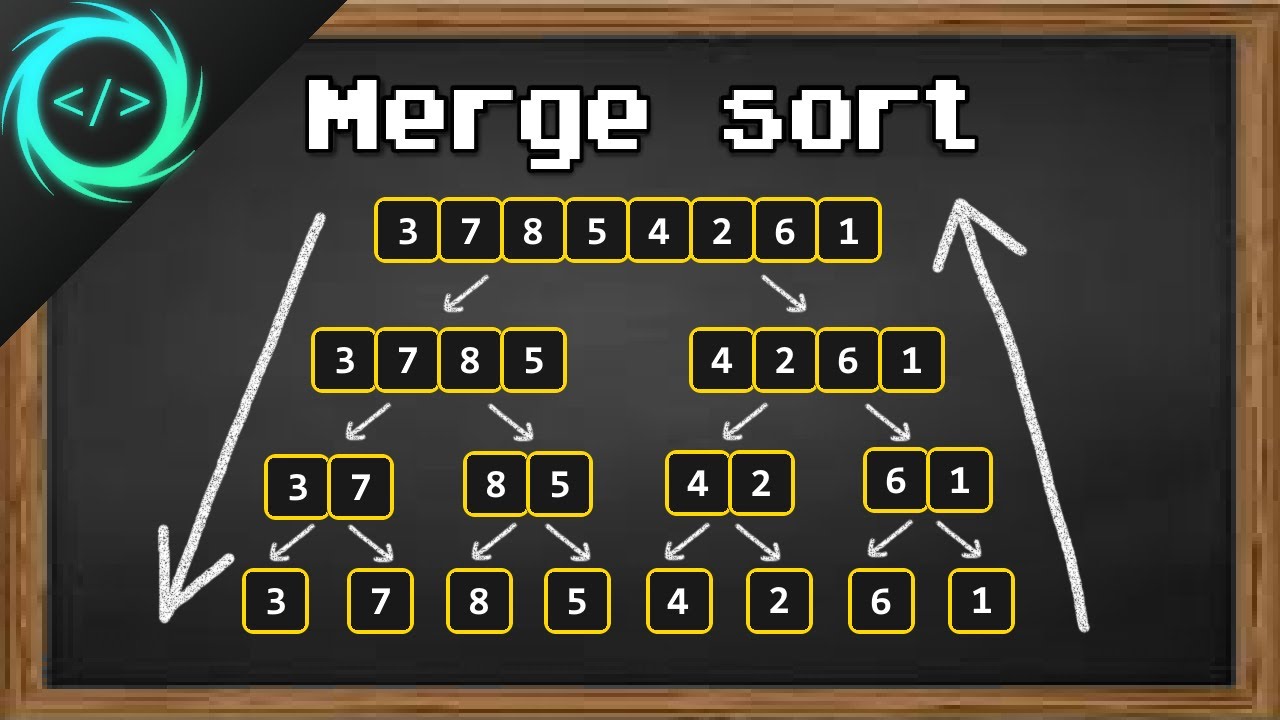

- 😀 Merge Sort is a divide-and-conquer algorithm that divides an array into smaller subarrays, recursively sorts them, and then merges them.

- 😀 The time complexity of Merge Sort is O(n log n), making it more efficient than Insertion Sort for larger datasets.

- 😀 The recurrence relation for Merge Sort is T(n) = 2T(n/2) + O(n), which is solved using recursion trees to determine the overall time complexity.

Q & A

What is the main goal of the sorting problem?

-The main goal of the sorting problem is to rearrange an array of elements in a specific order, usually in ascending order, such that the elements are ordered from smallest to largest.

How does the Insertion Sort algorithm work?

-Insertion Sort works by iterating through an array, selecting a key element, and placing it in its correct position by shifting the larger elements to the right. This process repeats for each element in the array until the array is sorted.

What is the worst-case time complexity for Insertion Sort?

-The worst-case time complexity for Insertion Sort occurs when the input array is reverse sorted. In this case, every element has to be shifted to the front, resulting in a time complexity of O(n²).

How is the best case for Insertion Sort determined?

-The best case for Insertion Sort occurs when the input array is already sorted. In this case, only one comparison is needed for each element, resulting in a time complexity of O(n).

What is the time complexity of Insertion Sort in the average case?

-In the average case, the time complexity of Insertion Sort is O(n²), assuming random distribution of the elements.

What is Merge Sort and how does it differ from Insertion Sort?

-Merge Sort is a divide-and-conquer algorithm that recursively divides an array into two halves, sorts each half, and then merges the sorted halves together. Unlike Insertion Sort, Merge Sort has a time complexity of O(n log n), which is more efficient for large datasets.

What is the time complexity of Merge Sort?

-The time complexity of Merge Sort is O(n log n), where 'n' is the size of the input array. This makes it more efficient than algorithms like Insertion Sort, which have O(n²) time complexity in the worst case.

What is the concept of asymptotic notation?

-Asymptotic notation is a mathematical concept used to describe the growth rate of an algorithm's time or space complexity as the size of the input increases. It helps to analyze how an algorithm performs as the input size approaches infinity, ignoring constant factors and lower-order terms.

What is the significance of Big O notation in algorithm analysis?

-Big O notation is used to describe the upper bound of an algorithm's time complexity, providing an estimate of its worst-case behavior. It helps in understanding the scalability of an algorithm and comparing the efficiency of different algorithms.

How does the recursive tree method help solve recurrence relations in algorithms like Merge Sort?

-The recursive tree method helps solve recurrence relations by visualizing the recursive calls as a tree. Each level of the tree represents a sub-problem, and the total time complexity is determined by summing the time taken at all levels. This approach helps derive the time complexity of divide-and-conquer algorithms like Merge Sort.

Outlines

هذا القسم متوفر فقط للمشتركين. يرجى الترقية للوصول إلى هذه الميزة.

قم بالترقية الآنMindmap

هذا القسم متوفر فقط للمشتركين. يرجى الترقية للوصول إلى هذه الميزة.

قم بالترقية الآنKeywords

هذا القسم متوفر فقط للمشتركين. يرجى الترقية للوصول إلى هذه الميزة.

قم بالترقية الآنHighlights

هذا القسم متوفر فقط للمشتركين. يرجى الترقية للوصول إلى هذه الميزة.

قم بالترقية الآنTranscripts

هذا القسم متوفر فقط للمشتركين. يرجى الترقية للوصول إلى هذه الميزة.

قم بالترقية الآنتصفح المزيد من مقاطع الفيديو ذات الصلة

161. OCR A Level (H446) SLR26 - 2.3 Comparison of the complexity of algorithms

Sorting - Part 1 | Selection Sort, Bubble Sort, Insertion Sort | Strivers A2Z DSA Course

TIK-Pencarian dan Pengurutan

Learn Merge Sort in 13 minutes 🔪

Kurikulum Merdeka : Informatika (SMA Kelas X) || Sorting

3 Types of Algorithms Every Programmer Needs to Know

5.0 / 5 (0 votes)