Introduction to generative AI scaling on AWS | Amazon Web Services

Summary

TLDRLeonardo Moro discusses the transformative impact of generative AI and large language models (LLMs) on industry trends and challenges. He highlights the efficiency of Retrieval-Augmented Generation (RAG) for content creation and the scaling challenges it presents. Moro introduces Amazon Bedrock and Pinecone as solutions for deploying LLM applications and managing vector data, respectively. He invites viewers to explore these technologies further through an upcoming hands-on lab on AWS.

Takeaways

- 🌟 Generative AI and large language models (LLMs) are revolutionizing the industry by changing how we perceive the world and interact with technology.

- 🚀 Many organizations are actively building prototypes and pilots to optimize internal operations and provide new capabilities to external users, leveraging the power of retrieval-augmented generation (RAG).

- 🔍 RAG is an efficient and fast method to enrich the context and knowledge that an LLM has access to, simplifying the process compared to alternatives like fine-tuning or training.

- 💡 The features built around RAG are being well-received by users, who are finding them effective and valuable in their applications.

- 🛠️ Builders face the challenge of scaling their prototypes to meet the demands of a full production environment, requiring acceleration in development and reduction in operational complexity.

- 📈 RAG relies on vector data and vector search, necessitating the storage of numerical representations of data for similarity searches to enhance the LLM's responses.

- 🔄 As the data set grows, the system must maintain user-interactive response times to keep up with user expectations and service levels.

- 🔑 Understanding how vector search retrieves data for the LLM is critical for optimizing responses and driving value from the content provided to the user.

- 🛑 Continuous development and updates are necessary to address feature requests, bug reports, and other user feedback, requiring a safe and quick deployment process.

- 🌐 Amazon Bedrock and Pinecone are two technologies that can significantly ease the deployment and operational challenges associated with LLM-based applications and vector storage/search.

- 🔗 By integrating Pinecone with data from Amazon S3, developers can keep vector representations of their data up to date, ensuring meaningful responses from the LLM.

- 📚 An upcoming hands-on lab will provide an opportunity to build and experiment with these technologies in AWS, offering a practical guide for those interested in implementing such solutions.

Q & A

Who is the speaker in the provided transcript?

-The speaker is Leonardo Moro, who builds cold stuff in AWS using products in the AWS Marketplace.

What is the main topic of discussion in the video script?

-The main topic is industry trends, challenges, and solutions related to generative AI, large language models, and Retrieval-Augmented Generation (RAG).

What does RAG stand for in the context of the script?

-RAG stands for Retrieval-Augmented Generation, which is a method to enrich the context and knowledge that a large language model has access to.

Why are organizations building prototypes and pilots with RAG?

-Organizations are building prototypes and pilots with RAG to optimize their internal operations and provide revolutionary new features and capabilities to external users.

What challenges do builders face when scaling RAG-based services to full production?

-Builders face challenges such as managing vector data and search, ensuring user-interactive response times, and supporting the ongoing development of more features and addressing bug reports.

Why is it important to keep user response times interactive-friendly?

-It is important because users are accustomed to a certain level of service, and maintaining interactive response times ensures a good user experience.

What role does vector data play in RAG?

-Vector data plays a crucial role in RAG as it stores numerical representations of data used for similarity searches, which helps in generating responses for the large language model.

What are the two technologies mentioned in the script that can help solve the challenges faced by builders?

-The two technologies mentioned are Amazon Bedrock and Pinecone, which help in deploying LLM-based applications with production readiness and managing vector storage and search, respectively.

How can Amazon Bedrock help with the deployment of LLM-based applications?

-Amazon Bedrock eliminates a significant amount of effort required to get LLM-based applications deployed and running with production readiness.

What does Pinecone offer for vector storage and search?

-Pinecone offers efficient vector storage and search capabilities, making it easier to observe, monitor, and keep the vector representations of data up to date.

How can viewers get access to Pinecone?

-Viewers can access Pinecone through the AWS Marketplace by clicking on the link provided in the article where they found the video.

What additional resource is being planned for those interested in building with AWS?

-A Hands-On Lab is being planned, where participants will get to build with the speaker in AWS, offering a practical experience of the discussed concepts.

Outlines

هذا القسم متوفر فقط للمشتركين. يرجى الترقية للوصول إلى هذه الميزة.

قم بالترقية الآنMindmap

هذا القسم متوفر فقط للمشتركين. يرجى الترقية للوصول إلى هذه الميزة.

قم بالترقية الآنKeywords

هذا القسم متوفر فقط للمشتركين. يرجى الترقية للوصول إلى هذه الميزة.

قم بالترقية الآنHighlights

هذا القسم متوفر فقط للمشتركين. يرجى الترقية للوصول إلى هذه الميزة.

قم بالترقية الآنTranscripts

هذا القسم متوفر فقط للمشتركين. يرجى الترقية للوصول إلى هذه الميزة.

قم بالترقية الآنتصفح المزيد من مقاطع الفيديو ذات الصلة

Introduction to large language models

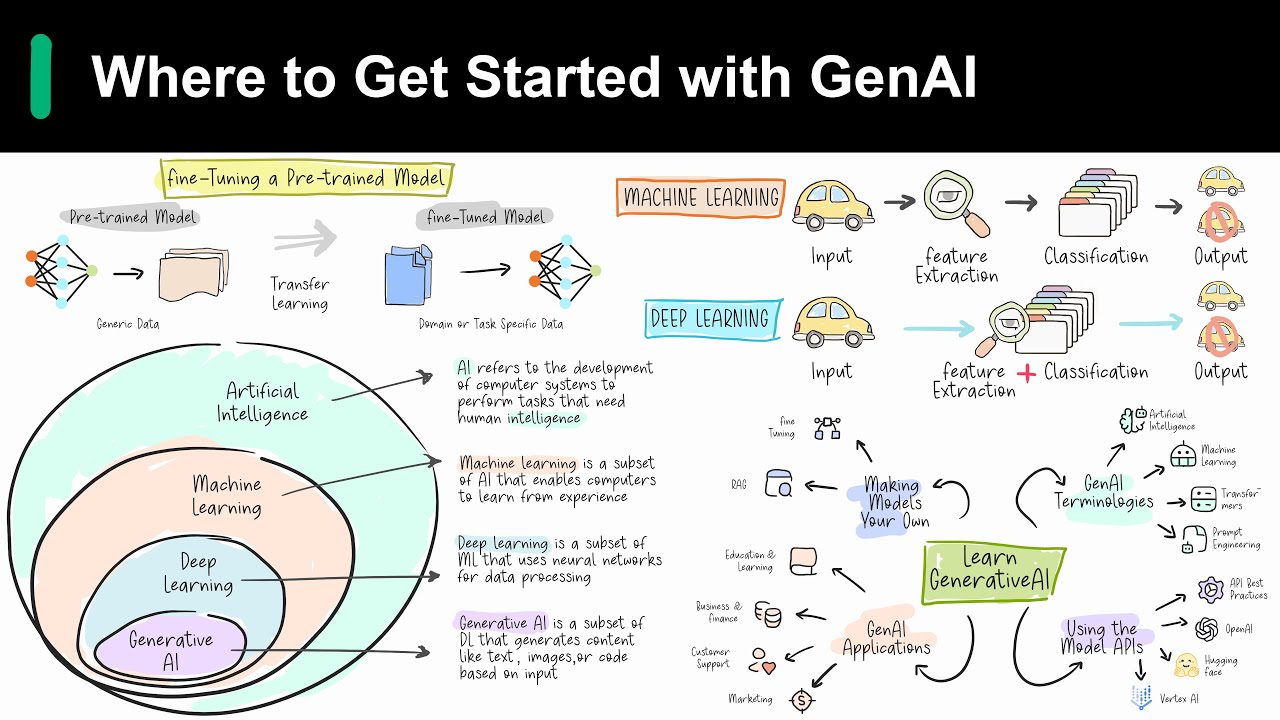

Introduction to Generative AI

Simplifying Generative AI : Explaining Tokens, Parameters, Context Windows and more.

Machine Learning vs. Deep Learning vs. Foundation Models

Practical AI for Instructors and Students Part 1: Introduction to AI for Teachers and Students

How does ChatGPT work? Explained by Deep-Fake Ryan Gosling.

5.0 / 5 (0 votes)