What is a Load Balancer?

Summary

TLDR

In this IBM Cloud video, Bradley Knapp explores the concept of a network load balancer, a key component for managing traffic on high-demand websites. He illustrates how load balancers distribute user requests across multiple servers so that no single server is overloaded, which improves scalability and reliability. The video discusses three load balancing methods: Round Robin for simple, sequential traffic distribution; smart load balancing, which is aware of each server's current load; and random selection, which sits between the two in simplicity and control. The discussion highlights the role of load balancers in cloud-native architectures and their impact on performance and cost.

Takeaways

- 🌐 A network load balancer is essential for distributing incoming traffic across multiple servers to ensure high availability and reliability of a website or application.

- 🚀 It's crucial for scaling a website to handle millions of users by directing traffic efficiently to multiple application servers, preventing any single server from becoming a bottleneck.

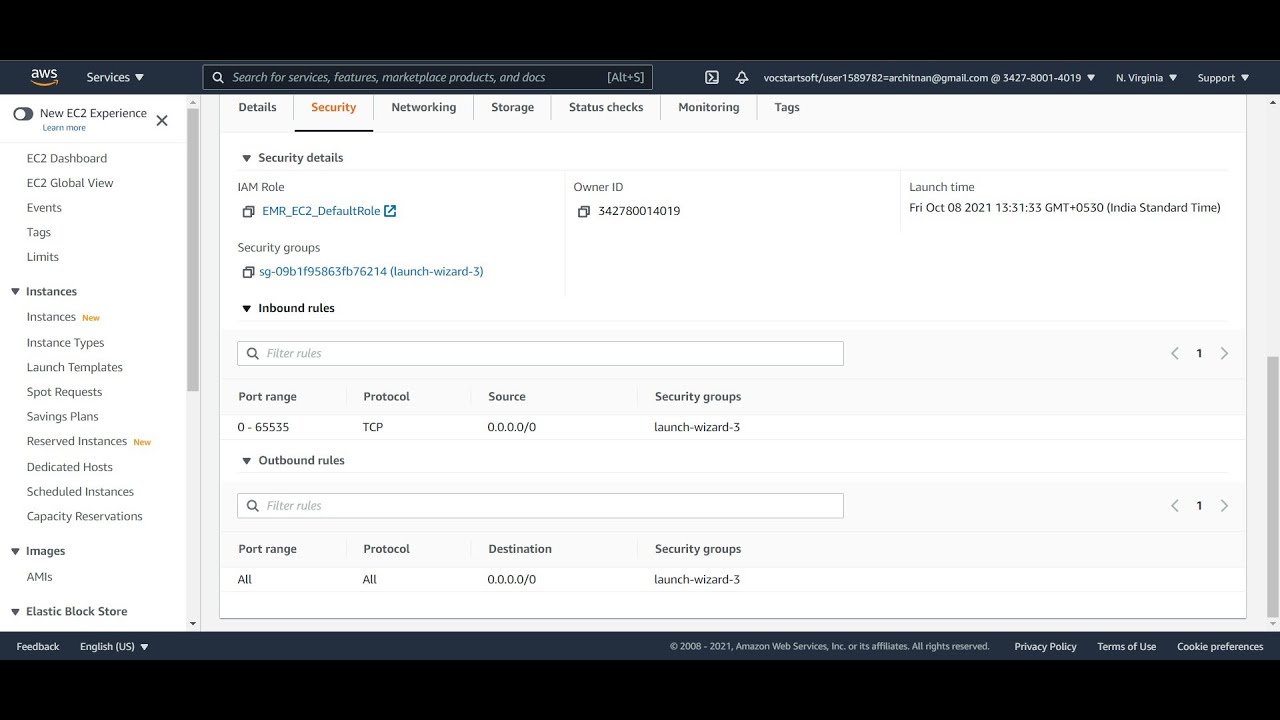

- 💡 Load balancers can be hardware devices or software-defined, and they intercept incoming traffic to distribute it among servers based on various algorithms.

- 🔄 The Round Robin algorithm is a basic load balancing method where traffic is distributed sequentially across servers, but it may not be ideal for long user sessions.

- 🤖 Smart load balancing involves load balancers working in cooperation with application servers, which report their load status to the balancer for more dynamic and efficient traffic distribution.

- 📈 Load balancers can help in auto-scaling by turning off underutilized servers or spinning up new ones based on real-time load data, thus optimizing resource usage and cost.

- 🛡️ Load balancers are a key component of cloud-native architectures, ensuring that traffic is evenly distributed among application servers, which in turn communicate with a common database.

- 🔑 There are other load balancing algorithms beyond Round Robin, such as random selection, that can improve distribution without the added complexity of smart load balancing.

- 💼 IBM Cloud offers expertise and support in designing and architecting load balancing solutions tailored to specific customer needs and traffic patterns.

- 🔧 For more detailed advice or specific scenarios, reaching out to IBM or exploring additional resources can provide in-depth guidance on implementing effective load balancing strategies.

Q & A

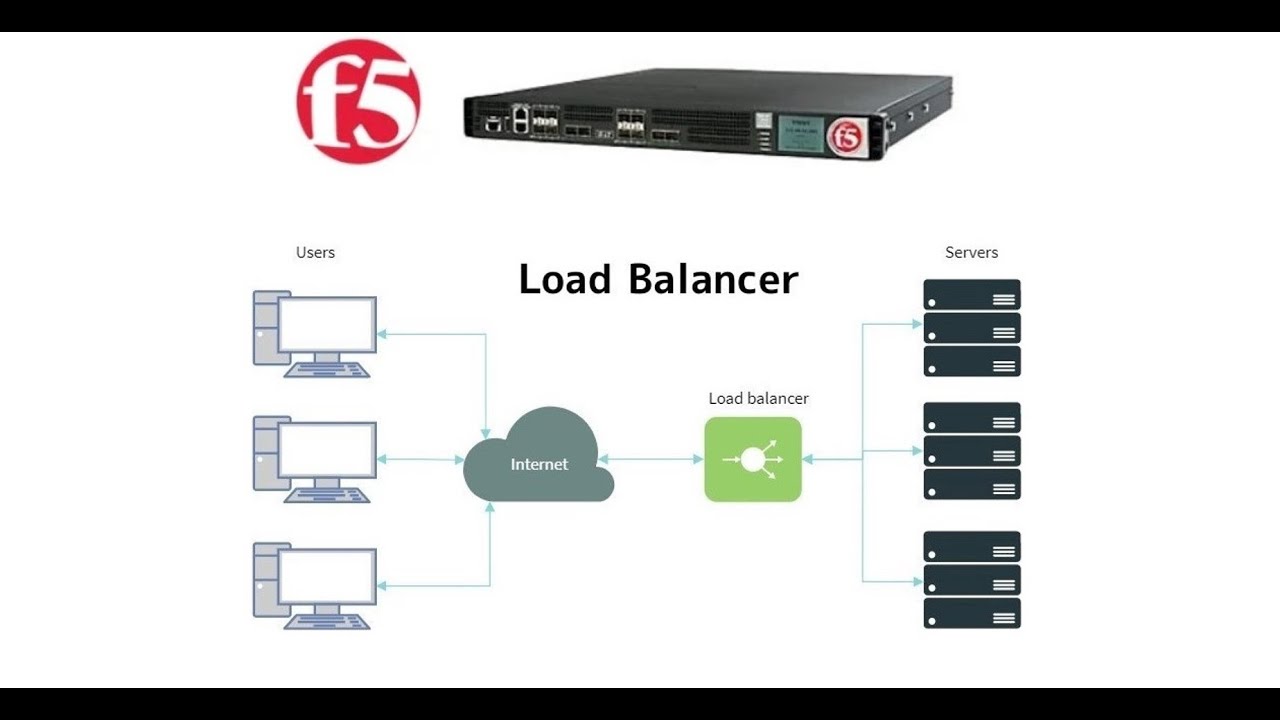

What is a network load balancer?

-A network load balancer is a hardware appliance or a software-defined service that intercepts incoming traffic from the Internet and distributes it across multiple servers. Because no single server becomes overwhelmed, the application stays responsive and highly available.

Why is it important to scale application servers?

-Scaling application servers is important to handle increasing loads, such as serving millions of users simultaneously. It allows the infrastructure to grow horizontally by adding more servers rather than just scaling vertically by making existing servers more powerful, which has physical and cost limitations.
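To make horizontal scaling concrete, here is a minimal capacity estimate in Python. The per-server capacity and the 20% headroom are hypothetical planning numbers, not figures from the video; the sketch only shows that adding identical servers raises total capacity in a predictable way, which is what a load balancer lets you take advantage of.

```python
def servers_needed(concurrent_users: int, users_per_server: int, headroom_pct: int = 20) -> int:
    """Estimate how many identical app servers are needed to carry a load.

    users_per_server and headroom_pct are hypothetical planning inputs.
    headroom_pct reserves part of each server's capacity for traffic spikes.
    """
    if users_per_server <= 0 or not 0 <= headroom_pct < 100:
        raise ValueError("invalid capacity parameters")
    # Work in integer hundredths to avoid floating-point rounding.
    usable_x100 = users_per_server * (100 - headroom_pct)
    # Ceiling division: a partially used server still has to exist.
    return (concurrent_users * 100 + usable_x100 - 1) // usable_x100


# Hypothetical: 1,000,000 concurrent users, 10,000 users per server, 20% headroom.
print(servers_needed(1_000_000, 10_000))  # 125
```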

How does a load balancer help in managing traffic for a website with high user traffic?

-A load balancer distributes incoming traffic across multiple application servers so that no single server is overwhelmed. When the servers report their load back to it, it can also drive scaling: adding application servers as load rises and turning them off as load falls, which maintains performance and reduces cost.
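A minimal sketch of the scale-up/scale-down decision, assuming each server reports a load fraction between 0.0 and 1.0. The function name and the thresholds (`low`, `high`, `min_servers`) are hypothetical, not values from the video.

```python
def scaling_decision(loads: list[float], low: float = 0.3, high: float = 0.8,
                     min_servers: int = 1) -> str:
    """Decide whether to add or remove an application server.

    loads: latest load fraction (0.0-1.0) reported by each running server.
    low/high/min_servers are hypothetical thresholds.
    """
    if not loads:
        return "scale_up"  # nothing is running, so start a server
    average = sum(loads) / len(loads)
    if average > high:
        return "scale_up"  # spin up a new server
    if average < low and len(loads) > min_servers:
        return "scale_down"  # turn off an underutilized server
    return "hold"


print(scaling_decision([0.9, 0.85]))  # scale_up
print(scaling_decision([0.1, 0.2]))   # scale_down
print(scaling_decision([0.1]))        # hold (already at min_servers)
```

Keeping a gap between `low` and `high` means a server that was just added or removed does not immediately push the average across the opposite threshold, which reduces rapid flip-flopping.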

What is the Round Robin algorithm used by load balancers?

-Round Robin is a simple load balancing algorithm that distributes traffic sequentially across servers. It sends the first request to the first server, the second request to the second server, and so on, cycling back to the first server after the last one.
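The rotation described above can be sketched in a few lines of Python. This is an illustrative sketch, not IBM's implementation; the class name and server names are hypothetical.

```python
from itertools import cycle


class RoundRobinBalancer:
    """Hand each new request to the next server in a fixed rotation."""

    def __init__(self, servers):
        if not servers:
            raise ValueError("need at least one server")
        # cycle() repeats the list forever: app1, app2, app3, app1, ...
        self._rotation = cycle(list(servers))

    def pick(self):
        return next(self._rotation)


lb = RoundRobinBalancer(["app1", "app2", "app3"])
print([lb.pick() for _ in range(5)])  # ['app1', 'app2', 'app3', 'app1', 'app2']
```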

What are the limitations of using Round Robin for load balancing?

-Round Robin may not be suitable for scenarios with long user sessions because it can lead to an imbalance in server loads. It does not consider the current load or capacity of the servers, which can result in some servers being overutilized while others are underutilized.
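The following sketch simulates that failure mode under a contrived, hypothetical workload: one new session arrives per time step, and every other session is long-lived. With two servers, strict rotation sends every long session to the same server, even though both received the same number of requests.

```python
from itertools import cycle


def active_sessions_after(durations, servers, now):
    """Assign sessions round-robin and count how many are still open at time `now`.

    Session i starts at time i and lasts durations[i] time units (hypothetical model).
    """
    rotation = cycle(servers)
    active = {s: 0 for s in servers}
    for start, duration in enumerate(durations):
        server = next(rotation)
        if start + duration > now:  # session has not finished yet
            active[server] += 1
    return active


# Alternating long (100) and short (1) sessions, ten sessions in total.
durations = [100, 1] * 5
print(active_sessions_after(durations, ["app1", "app2"], now=10))
# {'app1': 5, 'app2': 0}
```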

What is smart load balancing and how does it differ from Round Robin?

-Smart load balancing, also known as load-aware load balancing, involves the load balancer working in cooperation with the application servers. It takes into account the current load on each server and makes decisions based on this information, unlike Round Robin, which simply cycles through servers without considering their current load.
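A minimal load-aware sketch, assuming each application server periodically pushes a single load number to the balancer. The `report` method stands in for whatever reporting channel a real product uses; it and the load metric are hypothetical. Unlike Round Robin, `pick` consults the latest reports before choosing.

```python
class LoadAwareBalancer:
    """Route each request to the server that most recently reported the lowest load."""

    def __init__(self, servers):
        if not servers:
            raise ValueError("need at least one server")
        # Start every server at zero load until it reports in.
        self._load = {s: 0.0 for s in servers}

    def report(self, server, load):
        """Called (hypothetically) by an app server to publish its current load."""
        if server not in self._load:
            raise KeyError(server)
        self._load[server] = load

    def pick(self):
        # Lowest reported load wins; ties go to the server listed first.
        return min(self._load, key=self._load.get)


lb = LoadAwareBalancer(["app1", "app2", "app3"])
lb.report("app1", 0.9)
lb.report("app2", 0.2)
lb.report("app3", 0.5)
print(lb.pick())  # app2
```

The extra moving part, servers reporting their load, is exactly the added setup the next answer cites as a reason some teams avoid this approach.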

Why might a company choose not to use smart load balancing?

-A company might choose not to use smart load balancing due to its higher complexity in setup and configuration, as well as the potentially higher cost of the required load balancer software or hardware.

What is the random selection algorithm used in load balancing?

-The random selection algorithm in load balancing involves the load balancer using a randomizing function to decide which server should handle each incoming connection, providing a balance that is less predictable than Round Robin but simpler than smart load balancing.
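A minimal sketch of random selection using Python's standard `random` module. The class name is hypothetical; the optional `seed` only makes runs reproducible for testing.

```python
import random


class RandomBalancer:
    """Send each incoming connection to a uniformly random server."""

    def __init__(self, servers, seed=None):
        if not servers:
            raise ValueError("need at least one server")
        self._servers = list(servers)
        # A private Random instance avoids disturbing global random state.
        self._rng = random.Random(seed)

    def pick(self):
        return self._rng.choice(self._servers)


lb = RandomBalancer(["app1", "app2", "app3"], seed=0)
print([lb.pick() for _ in range(5)])
```

Like Round Robin, this needs no feedback from the servers; over many connections each server receives roughly an equal share, but without a fixed order.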

How does a load balancer contribute to cloud-native architectures?

-In cloud-native architectures, load balancers are key components that assign traffic to different application servers, ensuring scalability and high availability. Behind the load balancer, the application servers share a common database tier, which keeps data consistent no matter which server handles a request and helps prevent issues like split-brain scenarios.

What are some other algorithms that can be used for load balancing besides Round Robin and random selection?

-Other common algorithms include Least Connections, which sends each new connection to the server with the fewest active connections, and IP Hash, which maps each client's source IP address to a server so that the same client consistently reaches the same server (session persistence).
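Both algorithms can be sketched briefly. These are illustrative sketches with hypothetical names, not any product's implementation. The IP hash uses `hashlib` rather than Python's built-in `hash()`, because `hash()` on strings is randomized per process and would map the same client to different servers after a restart.

```python
import hashlib


class LeastConnectionsBalancer:
    """Send each new connection to the server with the fewest open connections."""

    def __init__(self, servers):
        if not servers:
            raise ValueError("need at least one server")
        self._open = {s: 0 for s in servers}

    def acquire(self):
        server = min(self._open, key=self._open.get)  # ties go to the first server
        self._open[server] += 1
        return server

    def release(self, server):
        self._open[server] -= 1  # call when the connection closes


def ip_hash_pick(client_ip: str, servers: list[str]) -> str:
    """Map a client IP to a server deterministically (simple modulo hashing)."""
    digest = hashlib.sha256(client_ip.encode()).digest()
    return servers[int.from_bytes(digest[:8], "big") % len(servers)]


lc = LeastConnectionsBalancer(["app1", "app2"])
first, second = lc.acquire(), lc.acquire()  # app1, then app2
lc.release(first)
print(lc.acquire())  # app1 again, since it now has fewer open connections
print(ip_hash_pick("203.0.113.7", ["app1", "app2", "app3"]))
```

With plain modulo hashing, adding or removing a server remaps most clients; consistent hashing is a common alternative when the server pool changes often.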

How can IBM assist in designing a load balancing solution?

-IBM can assist by providing expertise and resources to help design and architect a load balancing solution tailored to specific needs. This can include consultation on choosing the right type of load balancer, setting up smart load balancing, and integrating with existing infrastructure.
