The World's First Microprocessor: F-14 Central Air Data Computer

Summary

TLDR: The MP944 microprocessor was developed in 1970 for the F-14 fighter jet and was far more advanced than the Intel 4004, which is often considered the first microprocessor. The MP944 used parallel processing and pipelining to perform complex calculations for the jet's flight control system. Though largely forgotten today, analysis of the MP944's innovative architecture suggests home computing could have progressed very differently if knowledge of it had been made public earlier.

Takeaways

- 😲 The MP944 microprocessor was developed in 1970, before the Intel 4004, and was much more advanced

- 🤓 The MP944 consisted of 6 ICs - CPU, ROM, RAM, parallel multiplier, parallel divider, and data steering unit

- 🛫 It was used in the Central Air Data Computer for the F-14 Tomcat fighter jet

- 💪🏼 The MP944 could perform arithmetic on 20-bit numbers at high speed using parallel processing

- 🤔 There is debate over whether the MP944 or Intel 4004 was the first true microprocessor

- 👷‍♂️ The entire 6000+ bit MP944 program was hand-coded in binary in just 3 months

- 🔬 The MP944 chips were tested using a software simulator and a hardware simulator

- 😎 The MP944 automated control of the F-14's variable wing geometry during flight

- ❌ The MP944 project was kept secret by the US military for over 25 years

- 🪦 Iran maintains the last F-14s still in service, but lacks MP944 chips due to US destruction of stock

Q & A

What was the MP944 microprocessor used for?

-The MP944 was used as the central processing unit for the Central Air Data Computer (CADC) on the US Navy's F-14 Tomcat fighter jet. It provided flight control, instrumentation, and weapons systems functionality.

How did the MP944 compare to other microprocessors of the era?

-The MP944 was far more advanced than other microprocessors of the early 1970s. It had a 20-bit architecture, parallel processing capabilities, and innovative design features that weren't seen in consumer chips until the 1980s.

Why was the MP944 kept classified for over 25 years?

-The MP944 was part of the classified avionics system of the F-14 Tomcat. Revealing its capabilities could have compromised national security. It was only declassified in 1998, while the F-14 was still flying with the US Navy (the type was retired in 2006).

What made programming the MP944 challenging?

-The MP944 had no compiler, so its roughly 3000 instructions of code had to be written in binary machine code. The code also had to be verified in simulation before being burned onto physical ROM chips, because changes were difficult once the ROMs existed.

How did the MP944 achieve high performance?

-It combined parallel processing units, pipelining, dedicated hardware for key operations such as multiplication and division, and innovations such as adding numbers as they were transferred between chips.
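
The idea of adding numbers while they are in transit can be illustrated with a bit-serial adder. The sketch below is an illustration only, not the MP944's actual logic: it assumes words travel one bit per clock tick, least significant bit first, in 20-bit two's complement, and every name in it (`WORD_BITS`, `serial_add`, `add_words`) is hypothetical.

```python
WORD_BITS = 20  # word width taken from the summary; the bit ordering is an assumption


def to_bits_lsb_first(value, width=WORD_BITS):
    """Return the two's-complement bits of value, least significant bit first."""
    value &= (1 << width) - 1
    return [(value >> i) & 1 for i in range(width)]


def from_bits_lsb_first(bits):
    """Interpret an LSB-first bit list as a signed two's-complement integer."""
    width = len(bits)
    raw = sum(bit << i for i, bit in enumerate(bits))
    return raw - (1 << width) if bits[-1] else raw


def serial_add(a_bits, b_bits):
    """Add two bit streams one bit per tick, keeping a single carry between ticks."""
    carry = 0
    out = []
    for a, b in zip(a_bits, b_bits):
        total = a + b + carry
        out.append(total & 1)   # sum bit leaves the adder on this tick
        carry = total >> 1      # carry is held for the next tick
    return out                  # final carry is dropped: fixed-width wraparound


def add_words(x, y):
    """Convenience wrapper: serialize both operands, add them, deserialize."""
    return from_bits_lsb_first(
        serial_add(to_bits_lsb_first(x), to_bits_lsb_first(y))
    )
```

Because each sum bit depends only on the current pair of input bits and one stored carry, the result can stream out while the operands are still streaming in, so the addition takes no time beyond the transfer itself. For example, `add_words(1234, 4321)` returns `5555`, and `add_words(-5, 3)` returns `-2`.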

What was innovative about the MP944's architecture?

-It had separate chips for critical tasks like I/O, steering logic, arithmetic, and control flow. This modular architecture with dedicated hardware units enabled high performance.

How were the MP944 chips tested?

-The team wrote test programs designed to exercise every transistor so that failures could be detected. They also built a physical simulator from discrete components to functionally validate the chips.

Why did the F-14 Tomcat need such an advanced flight computer?

-The F-14 was designed to be an agile, maneuverable fighter for air combat and ground attack. The MP944 automated complex tasks like sweeping the variable-geometry wings during high-G maneuvers.

What happened to the F-14 Tomcats still flying?

-Some F-14s remain active in the Iranian Air Force, having been sold to Iran before the 1979 revolution. After retiring the type in 2006, the US destroyed its remaining F-14 fleet to prevent spare parts from reaching Iran.

Could the MP944 have changed the course of computing history?

-Possibly, if its design had been made public earlier. The MP944's advanced architecture could have influenced microprocessors for personal computers in the 1970s and 80s.

Outlines

此内容仅限付费用户访问。 请升级后访问。

立即升级Mindmap

此内容仅限付费用户访问。 请升级后访问。

立即升级Keywords

此内容仅限付费用户访问。 请升级后访问。

立即升级Highlights

此内容仅限付费用户访问。 请升级后访问。

立即升级Transcripts

此内容仅限付费用户访问。 请升级后访问。

立即升级浏览更多相关视频

Who Wins!! F-14 Tomcat Vs F-15 Eagle: The Best Jet Fighters in the World | Military Summary

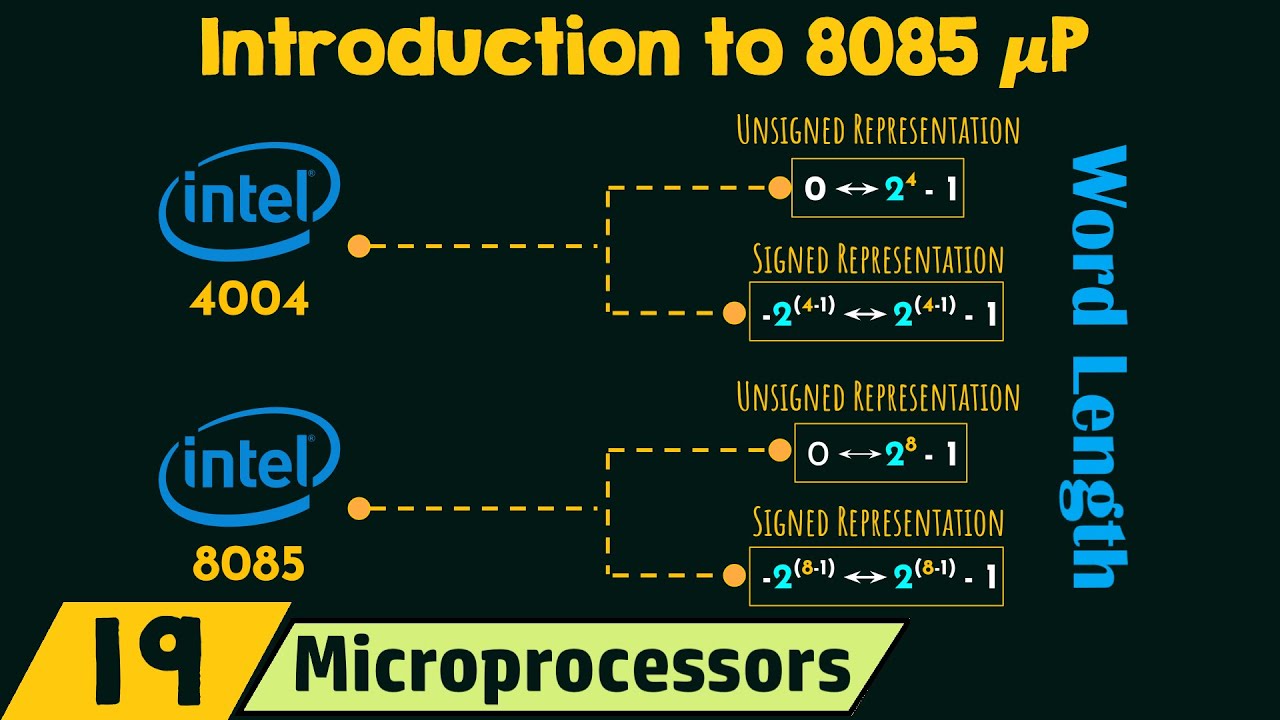

Introduction to 8085 Microprocessor (μP)

Here's Why the F-14 Tomcat Is Such a Badass Plane

1 4 Evolution Du Microprocesseur

F35 Fighter Jet EXPOSED Can India Really Afford It? | Indian Defence Update

Why the F-14 Tomcat Is Such a Badass Plane

5.0 / 5 (0 votes)