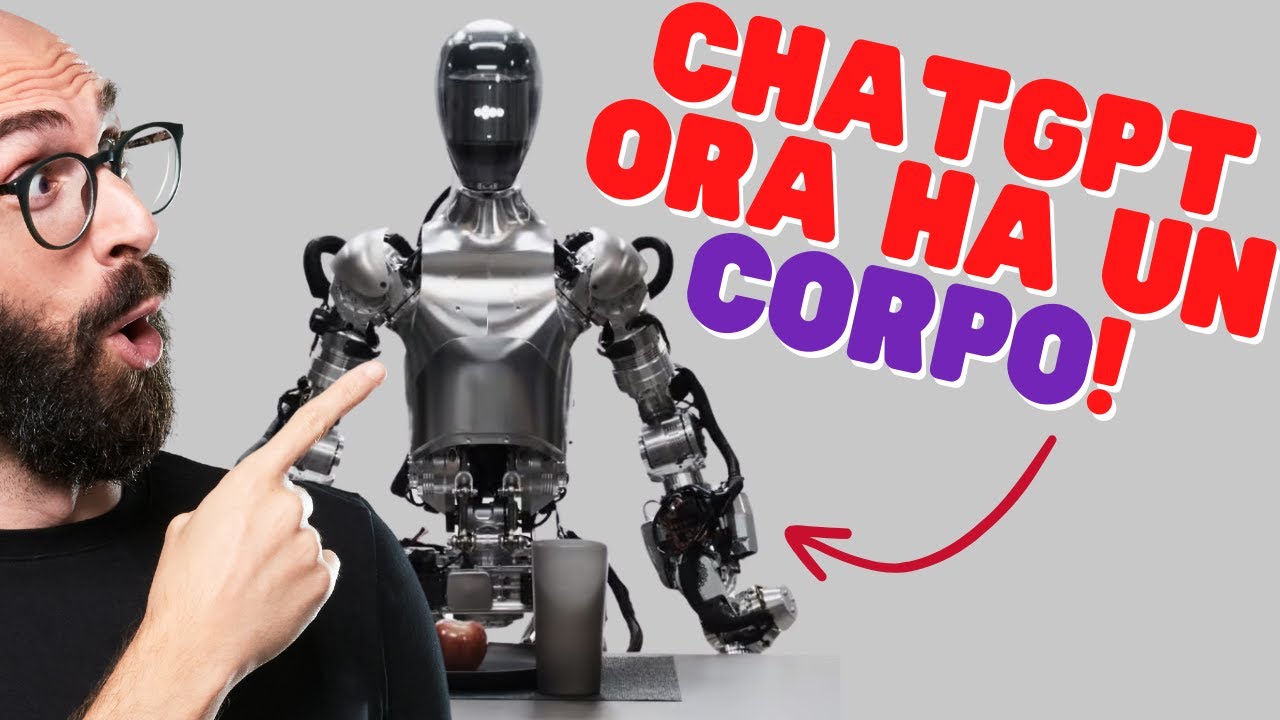

FIGURE 01 AI Robot Update w/ OpenAI + Microsoft Shocks Tech World (THEMIS HUMANOID DEMO)

AI News

2 Feb 2024 08:01

Browse more related videos

The Race For AI Robots Just Got Real (OpenAI, NVIDIA and more)

OpenAI's Newest AI Humanoid Robot - Figure 02 - Just Stunned the Robotics World!

OpenAI's 'AGI Robot' Develops SHOCKING NEW ABILITIES | Sam Altman Gives Figure 01 Get a Brain

Questo robot vede, sente e parla grazie a ChatGPT [Figure 01]

The Rise of AI Robots (This is the Future)

ChatGPT-Powered "AGI Robot" STUNS The Entire Industry
