SELF-DISCOVER: Large Language Models Self-Compose Reasoning Structures

Summary

TLDR: Large language models have shown promise in reasoning tasks, but most prompting methods assume a single reasoning process that works for every task. The self-discover approach instead aims to uncover the unique reasoning structure for each task. It works in two stages: first, it selects, adapts and implements reasoning modules into a structured plan for the task; second, it uses this plan to solve problems. Experiments show self-discover enhances reasoning capabilities efficiently, especially on tasks requiring world knowledge. Analyses also demonstrate the importance of each step in the process, and the flexibility of applying self-discovered structures across models.

Takeaways

- 😀 LLMs have made progress in generating text and solving problems, but more reasoning capabilities are needed

- 🧠 Researchers use prompting methods inspired by human reasoning to enhance LLM reasoning

- 🚀 Self-discover aims to uncover the unique reasoning structure for each task

- 🔍 Self-discover has 3 key steps: select, adapt and implement reasoning modules

- 📈 Self-discover outperforms other methods on challenging reasoning tasks

- 💡 Self-discover is efficient, requiring fewer inference calls

- 🌟 Self-discover is effective for tasks requiring world knowledge

- ⚙️ All 3 self-discover steps contribute to enhanced LLM performance

- 🔀 Self-discover blends prompting methods into a versatile approach

- 🤖 Self-discover allows flexibility in how models approach reasoning tasks
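
The efficiency takeaway can be made concrete with a rough call count. The sketch below assumes that the three discovery steps run once per task and that each problem then costs one further call. It compares this with a hypothetical baseline that samples several answers per problem. The function names and the numbers are illustrative only and are not results from the video.

```python
def self_discover_calls(num_problems: int, discovery_calls: int = 3) -> int:
    """Calls needed when the plan is discovered once and reused per problem."""
    # Assumption: select, adapt and implement are one call each, once per task.
    return discovery_calls + num_problems


def sampling_baseline_calls(num_problems: int, samples_per_problem: int) -> int:
    """Calls needed by a hypothetical baseline that samples several answers per problem."""
    return num_problems * samples_per_problem


if __name__ == "__main__":
    # Illustrative numbers only: 100 problems, 10 samples each for the baseline.
    print(self_discover_calls(100))          # 103
    print(sampling_baseline_calls(100, 10))  # 1000
```

Under these assumptions the discovery cost is fixed, so it matters less as the number of problems in a task grows.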

Q & A

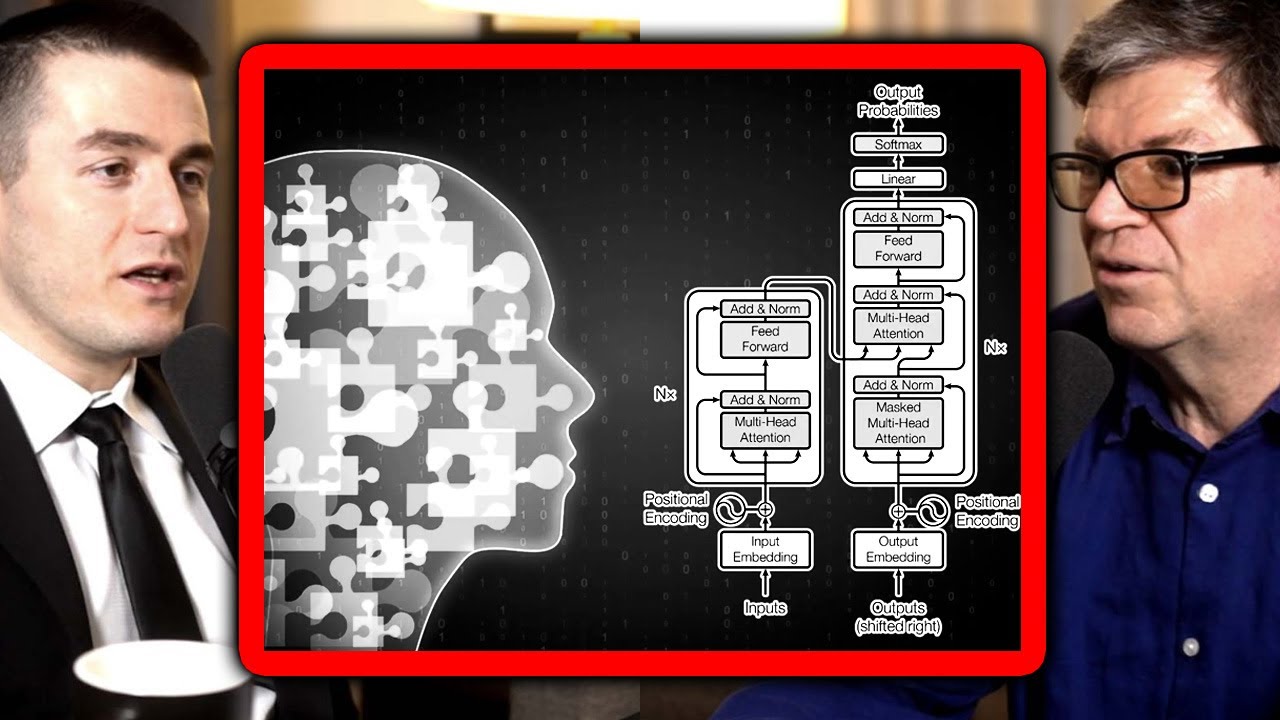

What are large language models (LLMs) and what have they recently shown capabilities for?

-Large language models are neural networks trained on massive amounts of text data. Recently they have shown the ability to generate coherent text and follow instructions, thanks to the Transformer architecture.

What is the goal mentioned in developing new methods for enhancing LLMs' reasoning capabilities?

-The goal is to push LLMs even further in their ability to reason and solve complex problems more effectively.

What are some examples of prompting methods inspired by human reasoning that have been tried with LLMs?

-Methods inspired by how humans tackle problems step-by-step, break big problems into smaller pieces, or step back to understand the full nature of a task better.

What limitation is shared by these human reasoning-inspired prompting methods?

-They assume one universal reasoning process will work for all tasks, which isn't always the case. Each task has its own unique puzzle to solve.

How does the self-discover approach aim to address this limitation?

-Self-discover tries to uncover the unique reasoning structure within each task, like how humans would come up with a game plan for a new problem using basic strategies.

What are the two main stages of the self-discover process?

-First it figures out the task's unique reasoning structure by guiding the LLM through steps to generate a plan. Then the LLM uses this plan to solve actual problems.
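
The two stages described above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' implementation: the `LLM` type stands for any hypothetical function that maps a prompt to a completion, and the module texts and prompt wording are assumptions made for the example.

```python
from typing import Callable, Sequence

# Hypothetical interface: any function mapping a prompt string to a completion string.
LLM = Callable[[str], str]

# Illustrative reasoning modules; the wording is assumed for this sketch.
SEED_MODULES = (
    "Think step by step.",
    "Break the problem into smaller sub-problems.",
    "Step back and restate what the task is really asking.",
)


def discover_structure(llm: LLM, task_examples: Sequence[str],
                       modules: Sequence[str] = SEED_MODULES) -> str:
    """Stage 1: build a task-level reasoning plan in three calls."""
    examples = "\n".join(task_examples)
    module_list = "\n".join(f"- {m}" for m in modules)

    # SELECT: pick the modules that look useful for this task.
    selected = llm(
        "Select the reasoning modules that help solve these examples.\n"
        f"Modules:\n{module_list}\nExamples:\n{examples}"
    )
    # ADAPT: rephrase the selected modules so they are specific to the task.
    adapted = llm(
        "Adapt these modules to the task.\n"
        f"Selected modules:\n{selected}\nExamples:\n{examples}"
    )
    # IMPLEMENT: turn the adapted modules into a structured, step-by-step plan.
    return llm(
        "Turn these adapted modules into a structured reasoning plan.\n"
        f"Adapted modules:\n{adapted}\nExamples:\n{examples}"
    )


def solve(llm: LLM, structure: str, problem: str) -> str:
    """Stage 2: solve one problem by following the discovered plan."""
    return llm(
        "Follow this reasoning plan to solve the problem.\n"
        f"Plan:\n{structure}\nProblem:\n{problem}"
    )
```

Because `solve` takes the plan as a plain string, a plan produced with one model could in principle be passed to a different model in stage 2.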

What are some of the advantages offered by the self-discover approach over other methods?

-It combines different reasoning strategies, is computationally efficient, and provides insights into the task in an understandable way.

On what types of challenging reasoning tasks did self-discover perform particularly well?

-It excelled on tasks requiring world knowledge and did pretty well on algorithmic challenges too.

Where did the self-discover approach stumble in the analysis?

-Mainly on computation errors in math problems.

What do the results suggest about the potential future applicability of self-discover?

-Its ability to transfer reasoning structures between models shows promise for using structured reasoning to tackle challenging problems with LLMs going forward.
