GLM vs. GAM - Generalized Additive Models

Summary

TLDR: This video delves into the differences between linear models, generalized linear models (GLMs), and generalized additive models (GAMs). It explains how linear models assume normally distributed errors with constant variance, while GLMs add flexibility through a choice of response distribution and a link function. GAMs go further by modeling complex relationships with smooth functions whose smoothness is tuned to balance bias and variance. The video also covers smoothing, regularization, and the backfitting algorithm, and takes a detailed look at fitting models with splines and penalized iteratively reweighted least squares (P-IRLS), with an emphasis on real-world data analysis.

Takeaways

- 😀 Linear models assume a linear relationship between predictors and the outcome, with normally distributed errors of constant variance.

- 😀 Generalized linear models (GLMs) allow a broader range of distributions for the dependent variable and use a link function to relate the linear predictor to the mean of the outcome.

- 😀 Additive models (AMs) replace linear terms with smooth functions of the predictors, which lets them capture more complex relationships between variables than linear models can.

- 😀 Generalized additive models (GAMs) bring additive models into the GLM framework: the sum of smooth functions is placed on the link scale, so non-normal responses can be modeled with flexible predictor effects.

- 😀 The main goal of using AMs and GAMs is to model complex, nonlinear relationships between predictors and the outcome while balancing smoothness and flexibility.

- 😀 There is an inherent trade-off between model smoothness (lower variance, higher bias) and the ability to interpolate data (higher variance, lower bias).

- 😀 Smoothing parameters, such as the weight on the roughness penalty in penalized splines, control the trade-off between smoothness and interpolation in AMs and GAMs.

- 😀 The backfitting algorithm for additive models fits one smooth function at a time to the partial residuals left after subtracting the current estimates of all other components, cycling until the estimates stop changing.

- 😀 In GAMs, backfitting is adapted to the link function by applying it to weighted working responses inside Fisher scoring, i.e. IRLS (iteratively reweighted least squares).

- 😀 Additive models and GAMs can incorporate linear and nonlinear terms, as well as interactions between predictors, to improve model flexibility and accuracy.

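- 😀 The curse of dimensionality limits fully multivariate smoothing, because data become sparse in high dimensions and estimates become unreliable; the additive structure eases this by estimating one-dimensional smooths, but high-order interaction smooths bring the problem back.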

- 😀 The dominant modern approach fits GAMs with penalized splines and penalized IRLS, which is simpler and more efficient than traditional backfitting.
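
The model hierarchy in these takeaways can be written compactly as below. The notation (μ_i for the mean of y_i, g for the link, η_i for the linear predictor, f_j for the smooth components, λ for the smoothing parameter) is standard textbook notation assumed here rather than taken from the video, and the zero-sum constraint on each f_j is one common identifiability choice.

```latex
\begin{aligned}
\text{LM:}  \quad & y_i = \beta_0 + \sum_{j=1}^{p} \beta_j x_{ij} + \varepsilon_i,
  \quad \varepsilon_i \overset{\text{iid}}{\sim} \mathcal{N}(0, \sigma^2) \\
\text{GLM:} \quad & g(\mu_i) = \eta_i = \beta_0 + \sum_{j=1}^{p} \beta_j x_{ij},
  \quad \mu_i = \mathbb{E}[y_i],\ y_i \sim \text{exponential dispersion family} \\
\text{AM:}  \quad & y_i = \alpha + \sum_{j=1}^{p} f_j(x_{ij}) + \varepsilon_i,
  \quad \sum_{i=1}^{n} f_j(x_{ij}) = 0 \\
\text{GAM:} \quad & g(\mu_i) = \alpha + \sum_{j=1}^{p} f_j(x_{ij}),
  \quad \sum_{i=1}^{n} f_j(x_{ij}) = 0 \\[4pt]
\text{Penalized fit (one smooth):} \quad &
  \hat f = \arg\min_{f} \ \sum_{i=1}^{n} \bigl(y_i - f(x_i)\bigr)^2 + \lambda \int f''(t)^2 \, dt
\end{aligned}
```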

Q & A

What are the key assumptions of simple linear models discussed in the script?

- Simple linear models assume independent observations with identically distributed errors, typically normal with constant variance, and a linear relationship between the predictors and the mean of the response.
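
A minimal sketch of fitting such a model by ordinary least squares with NumPy, assuming simulated data with known coefficients and normal errors. The function name `fit_ols` and the simulation settings are hypothetical; the residual variance uses the usual n - p denominator.

```python
import numpy as np

def fit_ols(X, y):
    """Least-squares fit of y = b0 + X @ b + error; returns (coefficients, sigma^2 estimate)."""
    n = X.shape[0]
    design = np.column_stack([np.ones(n), X])           # prepend an intercept column
    beta, *_ = np.linalg.lstsq(design, y, rcond=None)   # minimizes ||y - design @ beta||^2
    resid = y - design @ beta
    sigma2 = resid @ resid / (n - design.shape[1])      # unbiased estimate of the constant error variance
    return beta, sigma2

# Simulated data (assumption): true coefficients (1, 2, -3), error sd 0.1
rng = np.random.default_rng(0)
X = rng.uniform(0, 1, size=(200, 2))
y = 1.0 + 2.0 * X[:, 0] - 3.0 * X[:, 1] + rng.normal(0, 0.1, size=200)
beta, sigma2 = fit_ols(X, y)
print(np.round(beta, 2), round(sigma2, 4))
```

The estimated coefficients should land close to (1, 2, -3) and the variance estimate close to 0.01.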

How do generalized linear models differ from simple linear models?

- Generalized linear models allow the response variable to follow any distribution from the exponential dispersion family and relate the mean of the response to the predictors through a link function rather than a direct linear relationship.

What role does the link function play in generalized linear models?

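- The link function g connects the expected value of the response, μ, to the linear predictor η through g(μ) = η. Together with the choice of distribution, this lets the model handle non-normal data, such as counts or proportions, whose means are restricted to a particular range.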
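
The sketch below fits a Poisson GLM with a log link by IRLS, which for this canonical link coincides with Fisher scoring. The function `poisson_glm_irls`, the starting values, and the simulated data are illustrative assumptions, not code from the video. Each step uses the working response z = η + (y - μ)/μ and weights w = μ, which follow from Var(y) = μ and dμ/dη = μ under the log link.

```python
import numpy as np

def poisson_glm_irls(X, y, max_iter=50, tol=1e-10):
    """Poisson GLM with log link, log(mu) = X @ beta, fitted by IRLS / Fisher scoring."""
    mu = y + 0.5                      # common starting values (avoids log(0))
    eta = np.log(mu)
    beta = None
    for _ in range(max_iter):
        w = mu                        # weights: (dmu/deta)^2 / Var(y) = mu^2 / mu
        z = eta + (y - mu) / mu       # working response
        XtW = X.T * w                 # X^T W without forming the diagonal matrix W
        beta_new = np.linalg.solve(XtW @ X, XtW @ z)   # weighted least-squares step
        converged = beta is not None and np.max(np.abs(beta_new - beta)) < tol
        beta = beta_new
        if converged:
            break
        eta = X @ beta                # linear predictor
        mu = np.exp(eta)              # inverse link keeps the mean positive
    return beta

# Simulated data (assumption): log(mu) = 0.5 + 1.5 x
rng = np.random.default_rng(1)
x = rng.uniform(0, 1, size=500)
X = np.column_stack([np.ones_like(x), x])
y = rng.poisson(np.exp(0.5 + 1.5 * x))
beta = poisson_glm_irls(X, y)
print(np.round(beta, 2))
```

The exponential inverse link guarantees positive fitted means even though the linear predictor is unbounded; at convergence the score equations X^T (y - μ) = 0 hold.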

Why is modeling complex relationships between predictors and response important?

- The true relationship between predictors and response may be nonlinear or complex, so flexible modeling approaches are needed to capture patterns that linear assumptions cannot represent.

What are scatterplot smoothers and what is their purpose?

- Scatterplot smoothers are methods used to estimate a smooth function from scattered data points, aiming to capture underlying trends without imposing strict parametric forms.
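
One of the simplest scatterplot smoothers is a kernel-weighted local average (the Nadaraya-Watson estimator). The sketch below assumes a Gaussian kernel, a fixed bandwidth, and a hypothetical `kernel_smooth` helper; it is one example of a smoother, not necessarily the one used in the video.

```python
import numpy as np

def kernel_smooth(x, y, x_eval, bandwidth):
    """Nadaraya-Watson smoother: Gaussian-weighted local average of y, evaluated at x_eval."""
    d = (x_eval[:, None] - x[None, :]) / bandwidth   # scaled distances, one row per evaluation point
    weights = np.exp(-0.5 * d**2)                     # Gaussian kernel weights
    return weights @ y / weights.sum(axis=1)          # each row of weights normalized to sum to one

# Simulated data (assumption): y = sin(2 pi x) + noise
rng = np.random.default_rng(2)
x = np.sort(rng.uniform(0, 1, size=200))
y = np.sin(2 * np.pi * x) + rng.normal(0, 0.3, size=200)
grid = np.linspace(0.1, 0.9, 9)
fit = kernel_smooth(x, y, grid, bandwidth=0.05)
print(np.round(fit, 2))
```

No parametric form is imposed: the estimate at each grid point is just a weighted average of nearby responses, with the bandwidth deciding how "nearby" is defined.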

What is the bias–variance trade-off in the context of smoothing?

- Smoother functions typically have lower variance but higher bias, while less smooth (more flexible) functions reduce bias but increase variance, requiring a balance between the two.
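
For any linear smoother, f̂ = S y, the bias and variance at a point can be computed exactly from the smoother matrix S: the bias is (S f - f)_i and the variance is σ² Σ_k S_ik² for independent errors. The sketch below assumes a running-mean smoother, a sine curve as the true function, and σ = 0.3, all chosen purely for illustration.

```python
import numpy as np

def running_mean_matrix(n, k):
    """Smoother matrix S of a running mean with odd window k, truncated at the ends (f_hat = S @ y)."""
    half = k // 2
    S = np.zeros((n, n))
    for i in range(n):
        lo, hi = max(0, i - half), min(n, i + half + 1)
        S[i, lo:hi] = 1.0 / (hi - lo)
    return S

# Assumptions for illustration: equally spaced x, true f = sin(2 pi x), error sd sigma = 0.3
n, sigma = 101, 0.3
x = np.linspace(0, 1, n)
f = np.sin(2 * np.pi * x)
i = 25                                   # x[25] = 0.25, the peak of f

results = {}
for k in (1, 5, 25):
    S = running_mean_matrix(n, k)
    bias = (S @ f - f)[i]                # E[f_hat] - f, because E[y] = f
    var = sigma**2 * np.sum(S[i] ** 2)   # Var(f_hat) for independent errors
    results[k] = (bias**2, var)
    print(f"k={k:2d}  bias^2={bias**2:.5f}  variance={var:.5f}")
```

Widening the window from k = 1 to 5 to 25 lowers the variance at the peak from σ² to σ²/5 to σ²/25, while the squared bias grows because the average pulls in lower values from either side of the peak.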

How is smoothness controlled in spline-based models?

- Smoothness is controlled by a smoothing parameter that balances goodness of fit (the residual sum of squares) against a penalty measuring the wiggliness of the function, commonly the integrated squared second derivative.
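
A discrete version of this penalized criterion can be solved directly. The sketch assumes equally spaced x and replaces the integrated squared second derivative with the sum of squared second differences of the fitted values (a Whittaker-type smoother, not a true cubic smoothing spline), so the fit solves (I + λ DᵀD) f = y. The helper `penalized_smoother` is hypothetical.

```python
import numpy as np

def penalized_smoother(y, lam):
    """Minimize ||y - f||^2 + lam * ||D f||^2, where D takes second differences.

    Assumes equally spaced x; sum((D f)^2) stands in for the integral of f''(x)^2."""
    n = len(y)
    D = np.diff(np.eye(n), n=2, axis=0)                    # (n-2) x n second-difference matrix
    return np.linalg.solve(np.eye(n) + lam * D.T @ D, y)   # normal equations of the criterion

# Simulated data (assumption)
rng = np.random.default_rng(3)
x = np.linspace(0, 1, 100)
y = np.sin(2 * np.pi * x) + rng.normal(0, 0.3, size=100)

for lam in (1e-6, 10.0, 1e9):
    f_hat = penalized_smoother(y, lam)
    rss = np.sum((y - f_hat) ** 2)                # goodness of fit
    rough = np.sum(np.diff(f_hat, n=2) ** 2)      # wiggliness penalty (without lam)
    print(f"lambda={lam:g}  RSS={rss:.3f}  roughness={rough:.2e}")
```

With λ near zero the fit essentially interpolates the data; as λ grows, the residual sum of squares rises and the roughness falls, and in the limit the fit approaches the least-squares straight line, because straight lines have zero second differences and are not penalized.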

What are additive models and how do they extend linear models?

- Additive models replace linear predictor terms with smooth functions of predictors, allowing flexible nonlinear relationships while maintaining an additive structure.

Why is the intercept typically separated in additive models?

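- The intercept is kept separate and each smooth function is constrained to have zero mean. Without this constraint the model is not identifiable: a constant could be added to one function and subtracted from another (or from the intercept) without changing the fitted values.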
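
A small numeric illustration of the identifiability problem and the centering fix, using made-up component values (the arrays and the `center` helper are hypothetical): shifting a constant between two components leaves the predictions unchanged, but after centering both decompositions become identical.

```python
import numpy as np

# Hypothetical component values at five observations (illustration only)
alpha = 2.0
f1 = np.array([0.5, -1.0, 0.3, 0.8, -0.6])
f2 = np.array([1.2, 0.4, -0.9, 0.1, 0.7])
c = 10.0

# Moving a constant from f2 to f1 leaves every prediction unchanged
eta_original = alpha + f1 + f2
eta_shifted = alpha + (f1 + c) + (f2 - c)
print(np.allclose(eta_original, eta_shifted))   # True: the split between f1 and f2 is not identified

def center(alpha, components):
    """Absorb each component's mean into the intercept so that every component has mean zero."""
    means = [comp.mean() for comp in components]
    return alpha + sum(means), [comp - m for comp, m in zip(components, means)]

a1, comps1 = center(alpha, [f1, f2])
a2, comps2 = center(alpha, [f1 + c, f2 - c])
print(np.isclose(a1, a2), all(np.allclose(u, v) for u, v in zip(comps1, comps2)))
```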

How do generalized additive models extend additive models?

- Generalized additive models combine additive smooth predictor effects with generalized linear model structure, placing smooth functions on the link scale to handle non-normal response distributions.

What is the backfitting algorithm used for in additive models?

- The backfitting algorithm iteratively estimates each component function by fitting it to partial residuals obtained after removing the contributions of other functions.
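
A minimal backfitting sketch, assuming a Gaussian-kernel smoother for every component (any scatterplot smoother could be plugged in), a fixed bandwidth, and simulated data. The helper names, stopping rule, and simulation are illustrative assumptions. The intercept is fixed at the mean of y, and each updated component is re-centered to keep the zero-mean constraint.

```python
import numpy as np

def kernel_smooth(x, r, bandwidth):
    """Gaussian-kernel (Nadaraya-Watson) smoother of r against x, evaluated at the data points."""
    d = (x[:, None] - x[None, :]) / bandwidth
    W = np.exp(-0.5 * d**2)
    return W @ r / W.sum(axis=1)

def backfit(X, y, bandwidth=0.05, max_iter=50, tol=1e-6):
    """Fit y ~ alpha + sum_j f_j(X[:, j]) by backfitting; returns alpha and f (n x p)."""
    n, p = X.shape
    alpha = y.mean()                       # intercept; the components are kept at mean zero
    f = np.zeros((n, p))                   # f[:, j] holds f_j evaluated at the observations
    for _ in range(max_iter):
        max_change = 0.0
        for j in range(p):
            # partial residuals: remove the intercept and every component except f_j
            partial = y - alpha - f.sum(axis=1) + f[:, j]
            new = kernel_smooth(X[:, j], partial, bandwidth)
            new -= new.mean()              # re-center to keep the model identifiable
            max_change = max(max_change, np.max(np.abs(new - f[:, j])))
            f[:, j] = new
        if max_change < tol:               # stop when a full cycle barely changes anything
            break
    return alpha, f

# Simulated data (assumption): two additive effects plus noise
rng = np.random.default_rng(4)
n = 300
X = rng.uniform(0, 1, size=(n, 2))
f1_true = np.sin(2 * np.pi * X[:, 0])
f2_true = 4 * (X[:, 1] - 0.5) ** 2
y = 1.0 + f1_true + f2_true + rng.normal(0, 0.2, size=n)
alpha, f = backfit(X, y)
print(round(alpha, 3), np.round(f.mean(axis=0), 12))
```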

How is the fitting process for generalized additive models related to generalized linear model fitting?

- GAM fitting extends the iteratively reweighted least squares approach of GLMs: each iteration runs a weighted backfitting on the working responses, a scheme known as local scoring.
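
The local scoring loop can be sketched for a Poisson GAM with a log link: an outer IRLS loop forms the working response z = η + (y - μ)/μ and weights w = μ, and an inner loop runs weighted backfitting of z on the predictors. The weighted kernel smoother, bandwidth, fixed iteration counts, and simulated data are all assumptions made for illustration; software such as R's mgcv instead uses penalized regression splines with P-IRLS.

```python
import numpy as np

def weighted_kernel_smooth(x, r, w, bandwidth):
    """Gaussian-kernel smoother of r against x, with observation weights w."""
    d = (x[:, None] - x[None, :]) / bandwidth
    K = np.exp(-0.5 * d**2) * w[None, :]      # kernel weight times observation weight
    return K @ r / K.sum(axis=1)

def poisson_gam_local_scoring(X, y, bandwidth=0.05, outer=20, inner=10):
    """Poisson GAM, log(mu) = alpha + sum_j f_j(x_j), fitted by local scoring (a sketch)."""
    n, p = X.shape
    alpha = np.log(y.mean())                  # start from the intercept-only model
    f = np.zeros((n, p))
    for _ in range(outer):                    # outer loop: IRLS / Fisher scoring
        eta = alpha + f.sum(axis=1)
        mu = np.exp(eta)
        z = eta + (y - mu) / mu               # working response
        w = mu                                # working weights for the log link
        for _ in range(inner):                # inner loop: weighted backfitting of z
            alpha = np.sum(w * (z - f.sum(axis=1))) / np.sum(w)
            for j in range(p):
                partial = z - alpha - f.sum(axis=1) + f[:, j]
                new = weighted_kernel_smooth(X[:, j], partial, w, bandwidth)
                f[:, j] = new - np.sum(w * new) / np.sum(w)   # weighted zero-mean constraint
    mu = np.exp(alpha + f.sum(axis=1))
    return alpha, f, mu

# Simulated data (assumption): log(mu) = 0.5 + sin(2 pi x1) + 2 (x2 - 0.5)^2
rng = np.random.default_rng(5)
n = 400
X = rng.uniform(0, 1, size=(n, 2))
eta_true = 0.5 + np.sin(2 * np.pi * X[:, 0]) + 2 * (X[:, 1] - 0.5) ** 2
y = rng.poisson(np.exp(eta_true))
alpha, f, mu = poisson_gam_local_scoring(X, y)
print(round(alpha, 3), round(np.corrcoef(np.log(mu), eta_true)[0, 1], 3))
```

The structure mirrors the GLM fit: replacing the inner weighted backfitting with a single weighted least-squares solve would recover ordinary IRLS.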

Outlines

This section is available to paid users only. Please upgrade to access this part.

Upgrade NowMindmap

This section is available to paid users only. Please upgrade to access this part.

Upgrade NowKeywords

This section is available to paid users only. Please upgrade to access this part.

Upgrade NowHighlights

This section is available to paid users only. Please upgrade to access this part.

Upgrade NowTranscripts

This section is available to paid users only. Please upgrade to access this part.

Upgrade NowBrowse More Related Video

Statistical Learning: 7.4 Generalized Additive Models and Local Regression

GLM Part 2: Numeric General Linear Models: An Alternative to Regression

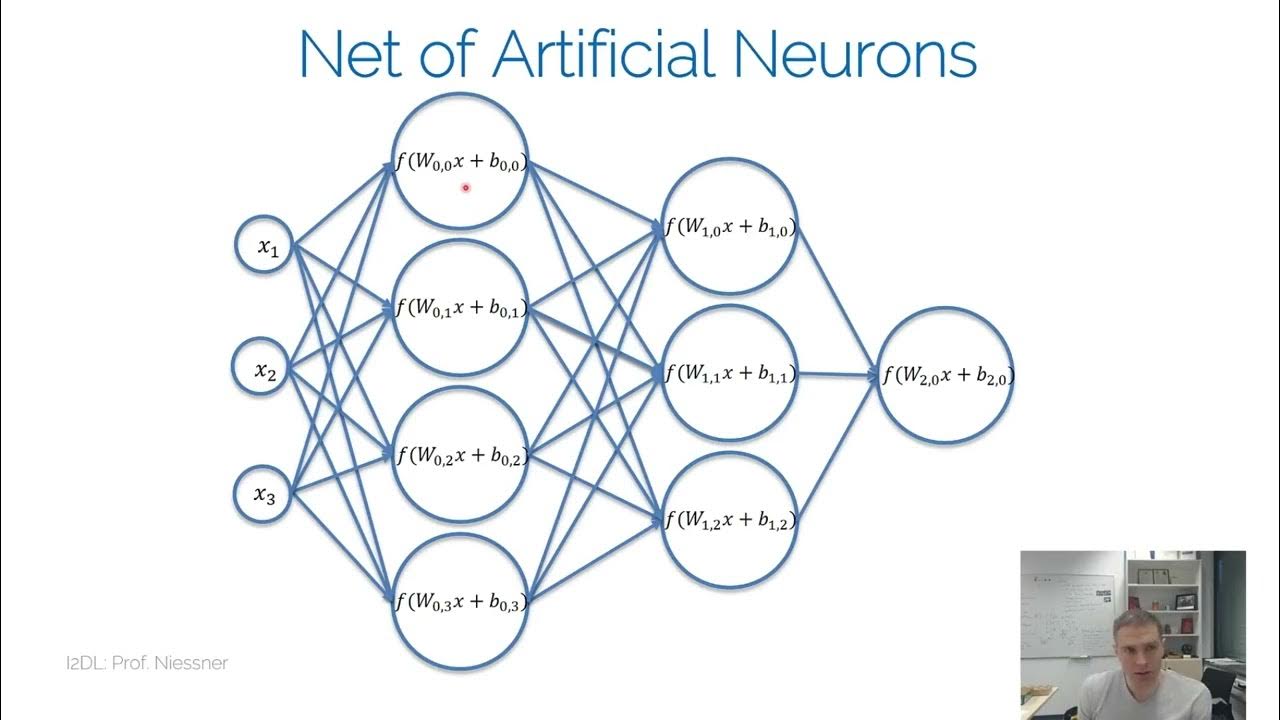

I2DL NN

Using Linear Models for t-tests and ANOVA, Clearly Explained!!!

What Linear Algebra Is — Topic 1 of Machine Learning Foundations

Analisis Data Panel Dinamis GMM bagian 1

5.0 / 5 (0 votes)