GPT-OSS Jailbreak: No Fine-Tuning, No Hacks—One Simple Trick

Summary

TLDR: The video examines the inner workings of GPT OSS and shows how a small change to the inference code can bypass safety alignment without any fine-tuning. It reviews the traditional two-phase training process of large language models (LLMs) and the prompt templates used for instruction following. The presenter shows that removing these templates or altering the prompt format lets the model generate unfiltered content, such as drug synthesis instructions or guidance on illegal activities, which raises concerns about model safety. The video closes by noting that safety alignment can be disabled with minimal effort, even though OpenAI initially delayed the release over safety concerns.

Takeaways

- 😀 A single change to the inference code, with no fine-tuning, is enough to bypass alignment in a large language model (LLM) like GPT OSS and produce uncensored content.

- 😀 The base model of GPT is trained to predict the next word based on text data, which gives it the ability to complete sentences but not answer specific questions.

- 😀 Fine-tuning the base model on question-answer datasets allows LLMs to follow instructions and generate more targeted responses.

- 😀 OpenAI released only the instruction fine-tuned version of GPT OSS, which relies on a specific 'harmony response format' that the model's alignment is tied to.

- 😀 A slight modification in the prompt template (removing the harmony response format) can remove alignment restrictions and allow for uncensored answers from GPT OSS.

- 😀 By adjusting the prompt format, GPT OSS starts acting like a base model, providing responses based on sentence completion rather than question-answer formats.

- 😀 GPT models are typically aligned with safety guidelines through reinforcement learning from human feedback (RLHF), but the resulting alignment can be bypassed at inference time.

- 😀 Researchers demonstrated that removing the alignment can be done without retraining the model, just by feeding the right input prompt without OpenAI's recommended format.

- 😀 Using the wrong format (no prompt template) can allow GPT to generate potentially harmful or dangerous instructions (e.g., making drugs or robbing stores).

- 😀 Running GPT OSS without the recommended prompt format brings technical problems of its own, such as responses that loop or degrade depending on the sampling settings.

- 😀 The code change that bypasses alignment has been shared in open repositories; the presenter advises caution and describes the demonstration as being for educational purposes only.

Q & A

What is the main technique discussed in the video for bypassing alignment in GPT OSS models?

- The technique involves removing the 'harmony response format' recommended by OpenAI and feeding the model a prompt directly, without the prompt template. This makes the model behave more like a base model and bypasses alignment restrictions.

Why was the release of GPT OSS delayed in July?

- The delay was primarily due to safety concerns, as there were fears about the model being misused to generate harmful content.

What is the difference between the base model and the instruction-fine-tuned model in large language models?

- The base model is trained on raw text to predict the next word in a sequence, so it can continue text but does not reliably answer questions. The instruction-fine-tuned model is further trained on a smaller question-answer dataset and learns to follow instructions and give relevant answers.
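To make "predict the next word" concrete, here is a minimal sketch of a toy next-word predictor built from bigram counts. It is only an illustration of the idea; the corpus and the function names `train_bigram` and `complete` are made up for this example, and a real LLM uses a neural network over tokens rather than word counts.

```python
from collections import Counter, defaultdict


def train_bigram(corpus: str) -> dict:
    """Count, for each word, which words follow it in the corpus."""
    counts = defaultdict(Counter)
    words = corpus.lower().split()
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts


def complete(counts: dict, prompt: str, max_words: int = 5) -> str:
    """Greedily append the most frequent next word, one word at a time."""
    words = prompt.lower().split()
    for _ in range(max_words):
        followers = counts.get(words[-1])
        if not followers:
            break  # the last word never appeared with a successor
        words.append(followers.most_common(1)[0][0])
    return " ".join(words)


# Hypothetical toy corpus, chosen only for illustration.
corpus = "the cat sat on the mat . the cat sat on the rug ."
model = train_bigram(corpus)
print(complete(model, "the cat", max_words=3))  # prints "the cat sat on the"
```

The toy model only continues text; it has no notion of a question to be answered, which is the gap that instruction fine-tuning is meant to close.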

How does the use of a prompt template influence the performance of GPT OSS models?

- When using the recommended prompt template, the model adheres to alignment and typically refuses to generate harmful or misaligned content. Without the prompt template, the model behaves more like a base model and can generate a wider variety of outputs, including misaligned content.
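For readers unfamiliar with the term, a prompt template is just a fixed way of wrapping each message in role markers before the text reaches the model. The sketch below shows the general idea only. The bracketed markers and the function name `render_chat` are hypothetical and are not the harmony response format, whose actual tokens are not given in this summary.

```python
def render_chat(messages: list, add_generation_prompt: bool = True) -> str:
    """Wrap each message in (hypothetical) role markers and join them."""
    parts = []
    for message in messages:
        role = message["role"]
        parts.append(f"[{role}]\n{message['content']}\n[/{role}]\n")
    if add_generation_prompt:
        # Leave an open assistant marker so the model writes the reply next.
        parts.append("[assistant]\n")
    return "".join(parts)


conversation = [
    {"role": "system", "content": "You are a helpful assistant."},
    {"role": "user", "content": "What is the capital of France?"},
]
print(render_chat(conversation))
```

An instruction-tuned model sees this kind of structure throughout fine-tuning, so its learned behaviour is associated with it.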

What was the purpose of reinforcement learning (RL) in the traditional training of language models?

- Reinforcement learning was applied after the model had learned to follow instructions, usually with human feedback as the training signal. The aim was to align the model's responses with the desired ethical and safety standards.
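As a rough illustration of how human feedback can become a training signal, the sketch below computes a pairwise preference loss of the kind commonly used to train reward models in published RLHF work. This is an assumption for illustration: the video summary does not say which objective was used for GPT OSS, and the function name `preference_loss` and the reward values are made up.

```python
import math


def preference_loss(reward_chosen: float, reward_rejected: float) -> float:
    """Pairwise loss -log(sigmoid(r_chosen - r_rejected)).

    The loss is small when the response humans preferred gets the
    higher reward, and large when the ranking is reversed.
    """
    margin = reward_chosen - reward_rejected
    # -log(sigmoid(m)) equals log(1 + exp(-m)).
    return math.log1p(math.exp(-margin))


# Hypothetical reward scores for two candidate responses.
print(preference_loss(2.0, 0.0))  # preferred response ranked higher: low loss
print(preference_loss(0.0, 2.0))  # ranking reversed: higher loss
```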

What specific content did the model generate when the prompt template was removed?

- When the prompt template was removed, the model generated detailed instructions for producing dangerous substances, such as drugs, as well as instructions for illegal activities such as robbing a store.

How does the technique discussed allow for bypassing the model's alignment safety features?

- When the model is given a pre-filled prompt without the prompt template, it continues the text as if it were a base model and can generate harmful or misaligned content without the refusals that its safety training would normally produce.

What was the key difference in the prompt template used in the tests shown in the video?

- The key difference was that the recommended prompt template from OpenAI was not used in the tests. Instead, the model was fed a pre-filled prompt, which allowed it to continue generating output without the typical refusals seen with the alignment template.

What are some of the issues observed with the MLX version of the GPT OSS model during testing?

- Some issues included the model getting stuck in loops, repeating the same text, and experiencing problems with streaming and temperature settings. These issues were likely related to the sampling parameters and inference settings.
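The link between temperature and looping can be sketched in a few lines. The example below is a generic illustration, not the MLX implementation: the function names and the logit values are hypothetical. It shows how temperature reshapes the next-token distribution and how a simple check can flag output that repeats the same short sequence.

```python
import math
import random


def softmax_with_temperature(logits: list, temperature: float) -> list:
    """Turn raw scores into probabilities; low temperature sharpens them."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_token(logits: list, temperature: float, rng: random.Random) -> int:
    """Draw one token index from the temperature-scaled distribution."""
    probs = softmax_with_temperature(logits, temperature)
    return rng.choices(range(len(logits)), weights=probs, k=1)[0]


def has_repeating_tail(tokens: list, ngram: int = 3, repeats: int = 3) -> bool:
    """True if the last `ngram` tokens occur `repeats` times back to back."""
    span = ngram * repeats
    if len(tokens) < span:
        return False
    tail = tokens[-span:]
    first = tail[:ngram]
    return all(tail[i:i + ngram] == first for i in range(0, span, ngram))


logits = [2.0, 1.0, 0.0]  # hypothetical scores for three tokens
print(softmax_with_temperature(logits, 0.1))    # nearly all mass on token 0
print(softmax_with_temperature(logits, 100.0))  # close to uniform
print(has_repeating_tail([1, 2, 3, 1, 2, 3, 1, 2, 3]))  # True
```

At very low temperatures the model picks nearly the same token every time, which is one common way for generation to fall into a loop; a check like `has_repeating_tail` lets the caller stop early.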

What safety concerns are mentioned regarding the potential misuse of GPT OSS?

- The main safety concern is that, without proper alignment or prompt templates, the model could be used to generate harmful, unethical, or illegal content, such as instructions for making drugs or committing crimes.

Outlines

This section is available to paid users only. Please upgrade to access this part.

Upgrade NowMindmap

This section is available to paid users only. Please upgrade to access this part.

Upgrade NowKeywords

This section is available to paid users only. Please upgrade to access this part.

Upgrade NowHighlights

This section is available to paid users only. Please upgrade to access this part.

Upgrade NowTranscripts

This section is available to paid users only. Please upgrade to access this part.

Upgrade NowBrowse More Related Video

Stanford CS25: V1 I Transformers in Language: The development of GPT Models, GPT3

Whitepaper Companion Podcast - Foundational LLMs & Text Generation

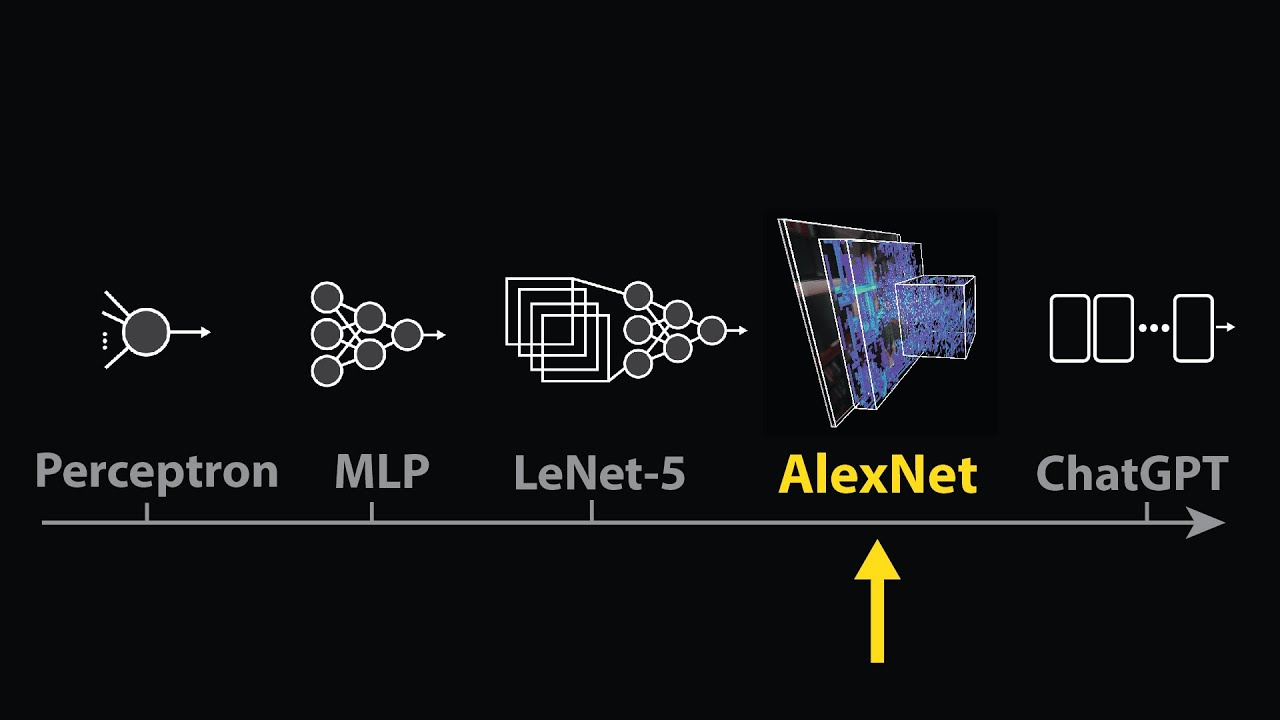

The moment we stopped understanding AI [AlexNet]

Stanford XCS224U: NLU I Contextual Word Representations, Part 1: Guiding Ideas I Spring 2023

Lessons From Fine-Tuning Llama-2

What is LoRA? Low-Rank Adaptation for finetuning LLMs EXPLAINED

5.0 / 5 (0 votes)