Prompt Engineering

Summary

TLDR: This training module introduces the fundamentals of generative AI and large language models (LLMs), focusing on their architecture, training, and practical applications. It explores prompt engineering techniques, emphasizing how well-structured prompts can guide AI models like Gemini to produce accurate responses. The module also showcases how a cloud architect, Sasha, can leverage Gemini for creating cloud network designs by crafting effective prompts. Best practices for prompt engineering, such as being clear, concise, and defining boundaries, are highlighted to ensure optimal AI interaction for various tasks.

Takeaways

- 😀 Generative AI refers to a subset of artificial intelligence that creates content (text, images, code) based on input prompts.

- 😀 Large Language Models (LLMs) are specific generative AI models focused on language tasks, trained on massive datasets to predict responses.

- 😀 A prompt is a specific instruction or question given to a model to generate a response, and its quality impacts the output.

- 😀 Hallucinations occur when LLMs generate incorrect or nonsensical responses due to insufficient data, lack of context, or ambiguous prompts.

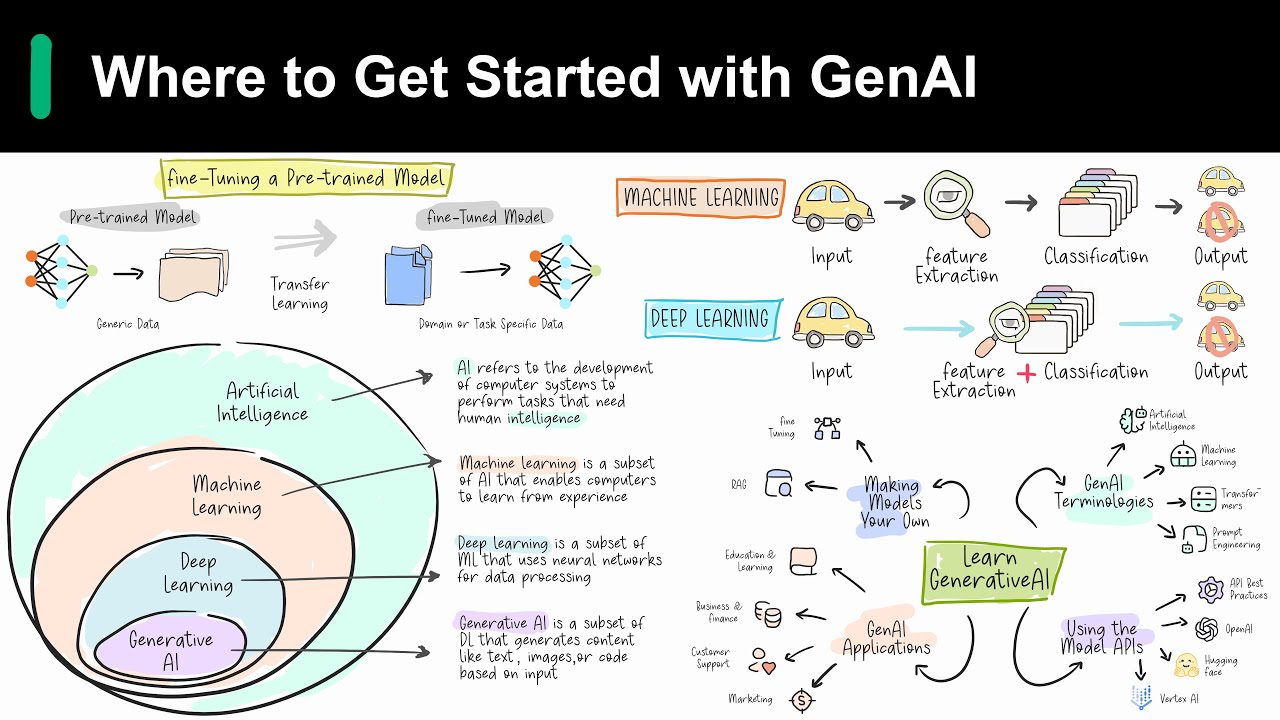

- 😀 LLMs are pre-trained with a large dataset for general purposes and fine-tuned with smaller datasets for specific tasks.

- 😀 Types of prompts include zero-shot (no examples), one-shot (one example), few-shot (multiple examples), and role prompts (a defined persona).

- 😀 Prompt engineering involves crafting clear and effective prompts to enhance the quality of responses from LLMs.

- 😀 The two main components of a prompt are the preamble (context and instructions) and the input (the core question or task).

- 😀 Best practices for prompt engineering include being detailed and explicit, defining boundaries, using personas, and keeping sentences concise.

- 😀 In real-world applications, such as Sasha’s use case with Google Cloud, refined prompts lead to more accurate and useful AI-generated outputs.

Q & A

What is Generative AI?

-Generative AI is a subset of artificial intelligence capable of creating various types of content, such as text, images, or data, in response to input prompts. It learns patterns from large datasets and generates new content with similar characteristics.

How does Generative AI differ from Large Language Models (LLMs)?

-Generative AI is a broader category of models that can generate various types of content beyond just text, while LLMs specifically focus on language tasks. LLMs are a subset of generative AI that specializes in understanding and generating human language.

What role does training play in Large Language Models (LLMs)?

-Training is essential for LLMs, as they learn the underlying patterns and structures of language by processing vast datasets during pre-training. This helps LLMs predict and generate human-like responses to prompts.

What are hallucinations in the context of LLMs?

-Hallucinations are incorrect or nonsensical responses generated by LLMs, often due to insufficient context or training data. LLMs can sometimes produce misleading or grammatically incorrect information because they are limited to the data they were trained on.

How can prompt engineering help improve the results generated by LLMs?

-Prompt engineering involves crafting clear and detailed prompts to guide the LLM in generating relevant and accurate responses. A well-structured prompt can significantly improve the quality of the output by providing necessary context and constraints.

What are the different types of prompts used in prompt engineering?

-The main types of prompts are zero-shot, one-shot, few-shot, and role prompts. Zero-shot prompts give the task with no examples, while one-shot prompts include a single example and few-shot prompts include several. Role prompts specify a role or persona for the LLM to adopt when answering.
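
As a rough illustration of how these prompt types differ, the sketch below assembles each one as a plain string. The helper names (`build_prompt`, `prompt_type`) and the `Input:`/`Output:` layout are assumptions made for this example; they are not taken from the module or from any particular model API.

```python
from typing import Optional, Sequence, Tuple

Example = Tuple[str, str]  # (example input, expected output)


def build_prompt(instruction: str,
                 query: str,
                 examples: Sequence[Example] = (),
                 role: Optional[str] = None) -> str:
    """Assemble a prompt string (hypothetical layout, for illustration only)."""
    parts = []
    if role:
        # Role prompt: state the persona the model should adopt.
        parts.append(f"You are {role}.")
    parts.append(instruction)
    for source, target in examples:
        # Each worked example shows the expected input/output shape.
        parts.append(f"Input: {source}\nOutput: {target}")
    # The actual query comes last, with the output left open for the model.
    parts.append(f"Input: {query}\nOutput:")
    return "\n\n".join(parts)


def prompt_type(examples: Sequence[Example]) -> str:
    """Name the prompt type by the number of examples it carries."""
    if len(examples) == 0:
        return "zero-shot"
    if len(examples) == 1:
        return "one-shot"
    return "few-shot"


if __name__ == "__main__":
    demos = [("cheese", "fromage"), ("bread", "pain")]
    for shots in (demos[:0], demos[:1], demos):
        print(f"--- {prompt_type(shots)} ---")
        print(build_prompt("Translate English to French.", "apple", shots))
    print("--- role ---")
    print(build_prompt("Translate English to French.", "apple",
                       role="a professional translator"))
```

The role is independent of the example count, so a role prompt can also be zero-shot, one-shot, or few-shot.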

What is the significance of using a preamble in a prompt?

-The preamble sets the context for the LLM, providing introductory information and guiding the model on the task at hand. It can include the task, examples, or other details that help the model generate a more accurate and relevant response.
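
One way to picture the preamble/input split is as two fields that are joined just before the prompt is sent. This is a minimal sketch; the `Prompt` class and its field names are hypothetical and only mirror the two components described above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Prompt:
    """A prompt split into its two components (hypothetical structure)."""
    preamble: str    # context, instructions, and any examples
    user_input: str  # the core question or task

    def render(self) -> str:
        # Preamble first, so the context precedes the task it applies to.
        return f"{self.preamble.strip()}\n\n{self.user_input.strip()}"


if __name__ == "__main__":
    prompt = Prompt(
        preamble="Answer in one short paragraph for a non-technical reader.",
        user_input="What is a large language model?",
    )
    print(prompt.render())
```

Keeping the two parts separate makes it easy to reuse one preamble across many inputs.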

How can providing detailed instructions help in getting better responses from LLMs?

-Detailed and explicit instructions help reduce vagueness, making it easier for the LLM to understand the task. This improves the accuracy of the output, as the model has clear guidelines to follow.

What best practices should be followed while crafting prompts for LLMs?

-Best practices include being clear and concise, defining boundaries for the prompt, adopting a persona for better context, using shorter sentences to avoid confusion, and providing fallback outputs for when the model encounters uncertainty.
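
Boundaries and fallback outputs can be sketched together: the prompt names the allowed topic and a fixed fallback phrase, and the calling code checks whether the reply is that phrase. The wording of the prompt, the `FALLBACK` text, and both function names are assumptions for illustration, and no real model is called here.

```python
FALLBACK = "I don't know."  # hypothetical fallback phrase


def bounded_prompt(persona: str, topic: str, question: str,
                   fallback: str = FALLBACK) -> str:
    """Build a prompt with a persona, a topic boundary, and a fallback output."""
    lines = [
        f"You are {persona}.",
        # Boundary: restrict what the model should answer.
        f"Only answer questions about {topic}.",
        # Fallback: give the model a safe reply when it is uncertain.
        f"If the question is outside that topic or you are unsure, "
        f"reply exactly: {fallback}",
        "",
        f"Question: {question}",
    ]
    return "\n".join(lines)


def is_fallback(reply: str, fallback: str = FALLBACK) -> bool:
    """Return True when the model's reply is the agreed fallback phrase."""
    return reply.strip() == fallback


if __name__ == "__main__":
    print(bounded_prompt("a cloud architect",
                         "Google Cloud networking",
                         "How do I plan subnets for a new project?"))
    print(is_fallback("I don't know.\n"))
```

Short sentences, one instruction per line, keep each constraint easy for the model to pick out.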

Can you give an example of how Sasha, the cloud architect, used prompt engineering in her task?

-Sasha refined her prompt by adding role context to make it more specific to her task. For example, she changed the prompt to specify that she wanted the LLM to act as a cloud architect and help with creating a dual-stack Google Cloud network using gcloud commands.
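
The refinement described above might look something like the following. The source does not quote Sasha's prompts, so both strings are hypothetical wording, and `add_role_context` is a helper invented for this sketch.

```python
# Hypothetical wording: the module describes the change but not the exact text.
INITIAL_PROMPT = "How do I create a dual-stack network in Google Cloud?"


def add_role_context(prompt: str, role: str, output_format: str) -> str:
    """Prefix a persona and append the desired output format."""
    return f"You are {role}. {prompt} Respond with {output_format}."


REFINED_PROMPT = add_role_context(
    INITIAL_PROMPT,
    role="a Google Cloud architect",
    output_format="gcloud commands",
)

if __name__ == "__main__":
    print("Before:", INITIAL_PROMPT)
    print("After: ", REFINED_PROMPT)
```

The refined version tells the model both who it is answering as and what form the answer should take, which narrows the range of plausible responses.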
