Learn Apache Spark In-Depth with Databricks for Data Engineering

Summary

TLDR: This comprehensive course on Apache Spark offers learners a deep dive into the framework with two major projects on AWS and Azure. It covers Spark's internal mechanisms, structured and lower-level APIs, and production deployment. The course includes detailed notes, a data engineering community for support, and a combo package with Python, SQL, and Snowflake basics. A certificate is provided upon completion, and a limited-time 50% discount is available for new enrolments.

Takeaways

- 📚 The course offers comprehensive learning on Apache Spark with two end-to-end projects on AWS and Azure.

- 🔍 It covers the internal workings of Apache Spark, including its architecture, APIs, and production deployment.

- 💻 Learners will gain practical experience by writing transformation code and working with different data types and file formats.

- 📈 The course includes detailed notes for easy reference and revision, especially useful for interview preparation.

- 🤝 Access to a private data engineering community is provided for shared learning and project collaboration.

- 🎓 Prerequisites for the course include a basic understanding of Python, SQL, and a data warehouse tool like Snowflake.

- 🏆 Successful completion of the course materials leads to a certificate.

- 🎥 The course is structured into multiple modules, starting from an introduction to Apache Spark to in-depth projects.

- 🚀 The course is designed to boost confidence in writing Spark code and understanding its execution.

- 🌐 Special focus is given to Databricks and its architecture, including the lakehouse approach and Delta Lake.

- 🛒 A limited-time 50% discount is offered for both the combo package and the Apache Spark course for new learners.

Q & A

What are the key benefits of learning Apache Spark for a data engineer?

- Apache Spark is a core skill for data engineers because top companies use it for large-scale data processing. It is where transformation code gets written, which makes it central to many data engineering projects.

What types of projects are included in the course?

- The course includes three mini-projects and two end-to-end data engineering projects, designed to build practical understanding and provide portfolio-worthy experience.

What is the significance of the structured API in Apache Spark?

- The structured API matters because, according to the course, it accounts for 80 to 90% of the Spark work done in organizations, which makes this one of the most important sections for using Apache Spark effectively.

How does the course address the learning of the lower-level API in Apache Spark?

- The course dedicates a module to the lower-level API, such as Resilient Distributed Datasets (RDDs), combining theory with hands-on practice.

What are the production-ready aspects of Apache Spark applications covered in the course?

- The course covers how to write, deploy, and debug Apache Spark applications. This includes Spark's life cycle, deployment processes, monitoring through the Spark UI, and troubleshooting common errors.

What is Databricks, and how does it relate to Apache Spark?

- Databricks is a managed cloud platform built around Apache Spark that simplifies working with Spark. The course covers the Databricks architecture, the lakehouse architecture, and the use of Delta Lake and the medallion architecture for effective data engineering.

What are the two end-to-end projects included in the course, and on which platforms are they based?

- The two end-to-end projects are based on AWS and Azure. The AWS project involves a Spotify data pipeline, while the Azure project focuses on e-commerce data processing using Azure Data Lake Storage and Databricks.

What are the prerequisites for taking this Apache Spark course?

- A basic understanding of Python, SQL, and a data warehouse tool like Snowflake is recommended before taking the course, as it gives learners a solid foundation for Apache Spark.

What bonuses come with the course?

- The course includes detailed notes for easy reference, access to a private data engineering community for support and collaboration, and a significant discount on future courses.

How does the course ensure a comprehensive learning experience?

- The course combines theory with hands-on practice, including mini-projects and end-to-end projects, so that learners understand both Apache Spark and its applications.

What is the format for accessing the course content after purchase?

- After purchasing the course, learners get lifetime access to all course materials through the website and a dedicated mobile application for on-the-go learning.

Outlines

This section is available to paid users only. Please upgrade to access this part.

Upgrade NowMindmap

This section is available to paid users only. Please upgrade to access this part.

Upgrade NowKeywords

This section is available to paid users only. Please upgrade to access this part.

Upgrade NowHighlights

This section is available to paid users only. Please upgrade to access this part.

Upgrade NowTranscripts

This section is available to paid users only. Please upgrade to access this part.

Upgrade NowBrowse More Related Video

Master Apache Airflow: 5 Real-World Projects to Get You Started

Intro to Stream Processing with Apache Flink | Apache Flink 101

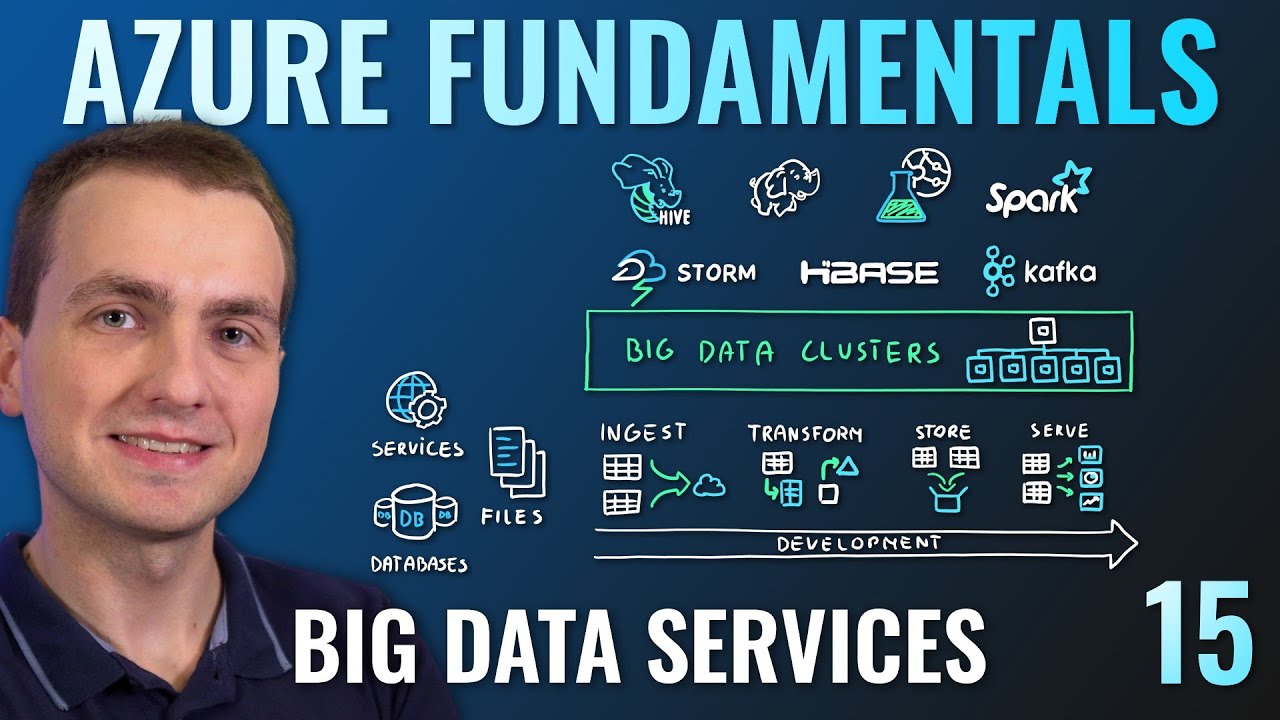

AZ-900 Episode 15 | Azure Big Data & Analytics Services | Synapse, HDInsight, Databricks

Introduction - AZ-900 Certification Course

01 PySpark - Zero to Hero | Introduction | Learn from Basics to Advanced Performance Optimization

God Tier Data Engineering Roadmap (By a Google Data Engineer)

5.0 / 5 (0 votes)