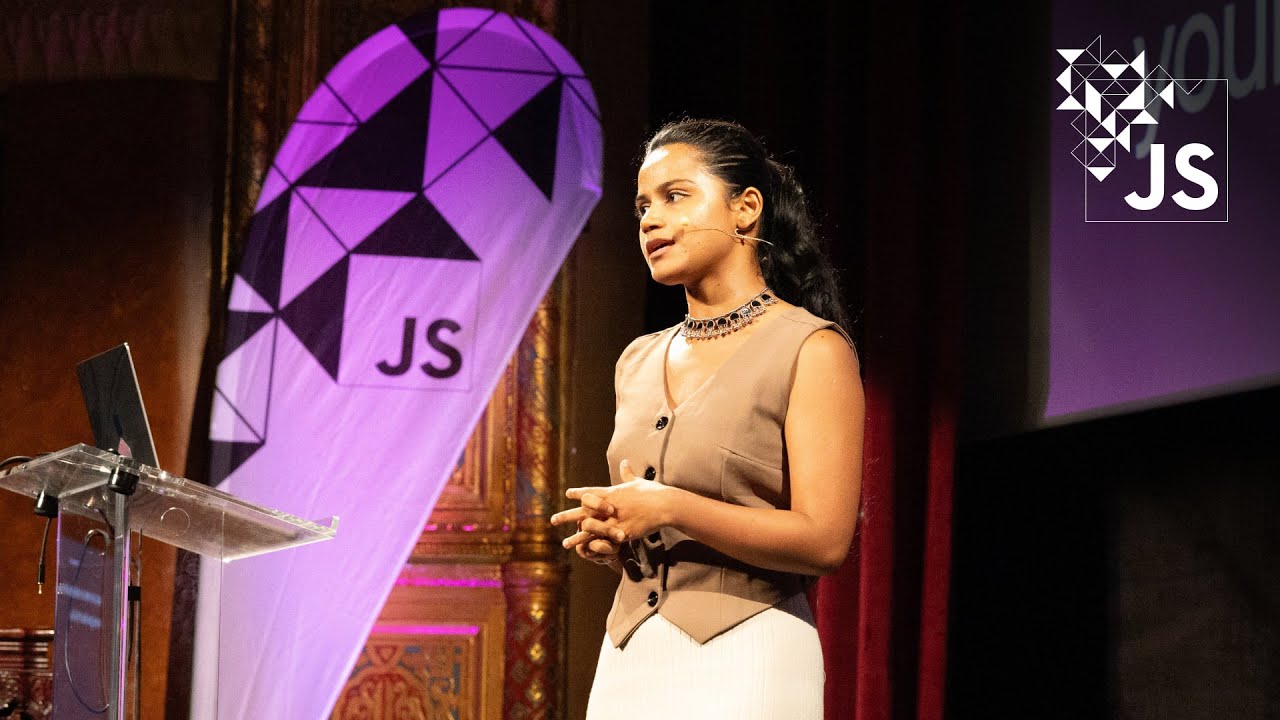

The Hidden Life of Embeddings: Linus Lee

Summary

TL;DR: In this talk at the inaugural AI engineer conference, Linus Lee from Notion shares their journey with AI, focusing on the power of embeddings and latent spaces in language models. Drawing on a background of experimenting with traditional NLP and language models, Lee explains how embeddings offer more direct control over steering language models than prompting alone, closer to navigating with precision than to guesswork. Through practical examples, including manipulating text and image embeddings, Lee demonstrates the potential for building expressive interfaces and for interpreting and controlling models more intuitively. The talk highlights recent research, introduces open-source models for experimentation, and envisions a future where interfaces allow deeper interaction with, and understanding of, AI's underlying mechanisms.

Takeaways

- 😃 Linus Lee from Notion discussed the power and potential of embeddings in AI at the inaugural AI engineer conference.

- 👨‍💻 Before joining Notion, Linus Lee worked independently with language models and traditional NLP techniques like TF-IDF and BM25 to build new reading and writing interfaces.

- 🚀 Notion AI was launched around November 2022, introducing features like AI autofill and translation, with more innovations on the way.

- 👥 Notion is actively seeking AI engineers, product engineers, and machine learning engineers to expand their team.

- 🚨 Linus Lee highlighted the challenge of steering language models effectively, likening it to controlling a car with a pool noodle from the back seat.

- 🔍 Latent spaces within embedding models offer a more direct layer of control by allowing inspection and manipulation of model internals, beyond just using prompts.

- 💬 By exploring embeddings and latent spaces, Linus Lee demonstrates how to build more expressive interfaces and gain a deeper understanding of what models 'see' in their inputs.

- 📈 Linus Lee showed practical examples of manipulating text and image embeddings to alter content length and sentiment, and even to blend concepts in creative ways.

- 🔧 The talk introduced custom models based on the T5 architecture, fine-tuned and adapted for tasks like embedding recovery and model interoperability.

- 📦 Linus Lee announced that these models are available on Hugging Face, encouraging others to experiment with them and share findings.
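The takeaway about blending concepts can be illustrated with a minimal sketch, assuming that "blending" means linear interpolation between two embedding vectors (the summary does not say which method the talk used). The vectors below are toy values, and `lerp` and `interpolation_path` are hypothetical helper names. Turning a blended vector back into text or an image requires a decoder model, which is not shown here.

```python
def lerp(a, b, t):
    """Linearly interpolate between vectors a and b; t=0 gives a, t=1 gives b."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    if not 0.0 <= t <= 1.0:
        raise ValueError("t must be between 0 and 1")
    return [(1 - t) * x + t * y for x, y in zip(a, b)]


def interpolation_path(a, b, steps):
    """Return `steps` evenly spaced blends from a to b, endpoints included."""
    if steps < 2:
        raise ValueError("steps must be at least 2")
    return [lerp(a, b, i / (steps - 1)) for i in range(steps)]


# Toy 2-dimensional "embeddings" standing in for two concepts (assumed values).
concept_a = [0.0, 0.0]
concept_b = [2.0, 4.0]

halfway = lerp(concept_a, concept_b, 0.5)
path = interpolation_path(concept_a, concept_b, 5)
```

Each vector in `path` would be passed to a decoder to produce one intermediate output, so the sequence moves gradually from the first concept to the second.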

Q & A

What is the main focus of the talk given by the speaker at the inaugural AI engineer conference?

- The main focus of the talk is on embeddings and latent spaces of models, particularly how they can be used to control and interpret AI models more directly.

What is the speaker's background and their work prior to the conference?

- The speaker, Linus Lee, has worked on AI at Notion for the last year or so. Before that, Lee did a lot of independent work experimenting with language models, traditional NLP techniques like TF-IDF and BM25, and embedding models to build interfaces for reading and writing.

What are some of the new features launched in Notion AI since its public beta in November 2022?

- Since the public beta in November 2022, Notion AI has steadily launched new features, including AI autofill inside databases and translation.

Why does the speaker compare prompting language models to steering a car with a pool noodle?

- The speaker uses this analogy to illustrate the indirect and somewhat imprecise control one has over language models when using just prompts, highlighting the challenges in steering the model's output directly.

What are latent spaces, and why are they important according to the speaker?

- Latent spaces are the high-dimensional vector spaces inside models, such as the embedding spaces of embedding models and the activation spaces of other models. They are important because they potentially encode salient features of the input data, offering a more direct layer of control over, and understanding of, the model's operations.

How does the speaker propose to manipulate embeddings to achieve more direct control over model outputs?

- The speaker suggests that by understanding and intervening in the latent spaces of models, specifically by manipulating embeddings, one can achieve more direct control over the model's outputs and build more expressive interfaces.

Can you describe an example given by the speaker of how embeddings can be manipulated?

- The speaker describes manipulating embeddings to vary text length while maintaining the topic: identify a direction in the embedding space that represents shorter text, move the embedding in that direction, and then decode it back to text.
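The steps in this answer can be sketched as follows. This is a minimal illustration under stated assumptions, not the speaker's implementation: it assumes the "shorter text" direction is estimated as the difference between the mean embedding of short texts and the mean embedding of long texts, and it uses toy 3-dimensional vectors in place of real model embeddings. The names `mean_vector`, `feature_direction`, and `steer` are hypothetical. The final decoding step needs a model that maps embeddings back to text and is left out.

```python
from statistics import fmean


def mean_vector(vectors):
    """Element-wise mean of a list of equal-length vectors."""
    return [fmean(column) for column in zip(*vectors)]


def feature_direction(with_feature, without_feature):
    """Difference of means: points from the 'without' group toward the 'with' group."""
    mu_with = mean_vector(with_feature)
    mu_without = mean_vector(without_feature)
    return [a - b for a, b in zip(mu_with, mu_without)]


def steer(embedding, direction, strength):
    """Move an embedding along a direction by the given strength."""
    return [e + strength * d for e, d in zip(embedding, direction)]


# Toy embeddings (assumed values): the first coordinate loosely tracks length.
short_texts = [[0.0, 1.0, 0.0], [0.5, 1.0, 0.0]]
long_texts = [[2.0, 1.0, 0.0], [2.5, 1.0, 0.0]]

shorter = feature_direction(short_texts, long_texts)

original = [1.0, 0.5, 0.25]
steered = steer(original, shorter, strength=0.5)
# `steered` would then be handed to a decoder model to produce the shorter text.
```

Only the coordinate that differs between the two groups changes, which mirrors the idea of shortening the text while leaving the topic untouched. In a real embedding space the direction is rarely this clean, so the strength usually needs tuning by hand.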

What innovative visual representation did the speaker showcase?

- The speaker showed a visual sequence of their own image transforming from beginning to end, moving from a photographic version of themselves to a cartoon version.

What is the significance of the research work mentioned by the speaker, such as the Othello world model paper?

- The research work, including the Othello world model paper, is significant because it advances the understanding and manipulation of latent spaces within models, offering new ways to interpret and control AI models' behaviors.

How does the speaker view the future of human interfaces to knowledge in the context of AI?

- The speaker is optimistic about the future, believing that by digging deeper into what models are actually looking at and building interfaces around them, more human-centric interfaces to knowledge are possible, enhancing our understanding of and interaction with AI.
